以下是今天所学的知识点与代码测试:

Spark-Streaming

DStream实操

案例一:WordCount案例

需求:使用 netcat 工具向 9999 端口不断的发送数据,通过 SparkStreaming 读取端口数据并统计不同单词出现的次数

实验步骤:

- 添加依赖

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming_2.12</artifactId>

<version>3.0.0</version>

</dependency>

- 编写代码

val sparkConf = new SparkConf().setMaster("local[*]").setAppName("streaming")

val ssc = new StreamingContext(sparkConf,Seconds(3))

val lineStreams = ssc.socketTextStream("node01",9999)

val wordStreams = lineStreams.flatMap(_.split(" "))

val wordAndOneStreams = wordStreams.map((_,1))

val wordAndCountStreams = wordAndOneStreams.reduceByKey(_+_)

wordAndCountStreams.print()

ssc.start()

ssc.awaitTermination()

- 启动netcat发送数据

nc -lk 9999

Spark-Streaming核心编程(一)

DStream 创建

创建DStream的三种方式:RDD队列、自定义数据源、kafka数据源

RDD队列

可以通过使用 ssc.queueStream(queueOfRDDs)来创建 DStream,每一个推送到这个队列中的 RDD,都会作为一个DStream 处理。

案例:

需求:循环创建几个 RDD,将 RDD 放入队列。通过 SparkStream 创建 Dstream,计算 WordCount

代码:

val sparkConf = new SparkConf().setMaster("local[*]").setAppName("RDDStream")

val ssc = new StreamingContext(sparkConf, Seconds(4))

val rddQueue = new mutable.Queue[RDD[Int]]()

val inputStream = ssc.queueStream(rddQueue,oneAtATime = false)

val mappedStream = inputStream.map((_,1))

val reducedStream = mappedStream.reduceByKey(_ + _)

reducedStream.print()

ssc.start()

for (i <- 1 to 5) {

rddQueue += ssc.sparkContext.makeRDD(1 to 300, 10)

Thread.sleep(2000)

}

ssc.awaitTermination()

结果展示:

自定义数据源

自定义数据源需要继承 Receiver,并实现 onStart、onStop 方法来自定义数据源采集。

案例:自定义数据源,实现监控某个端口号,获取该端口号内容。

- 自定义数据源

class CustomerReceiver(host:String,port:Int) extends Receiver[String](StorageLevel.MEMORY_ONLY) {

override def onStart(): Unit = {

new Thread("Socket Receiver"){

override def run(): Unit ={

receive()

}

}.start()

}

def receive(): Unit ={

var socket:Socket = new Socket(host,port)

var input :String = null

var reader = new BufferedReader(new InputStreamReader(socket.getInputStream,StandardCharsets.UTF_8))

input = reader.readLine()

while(!isStopped() && input != null){

store(input)

input = reader.readLine()

}

reader.close()

socket.close()

restart("restart")

}

override def onStop(): Unit = {}

}

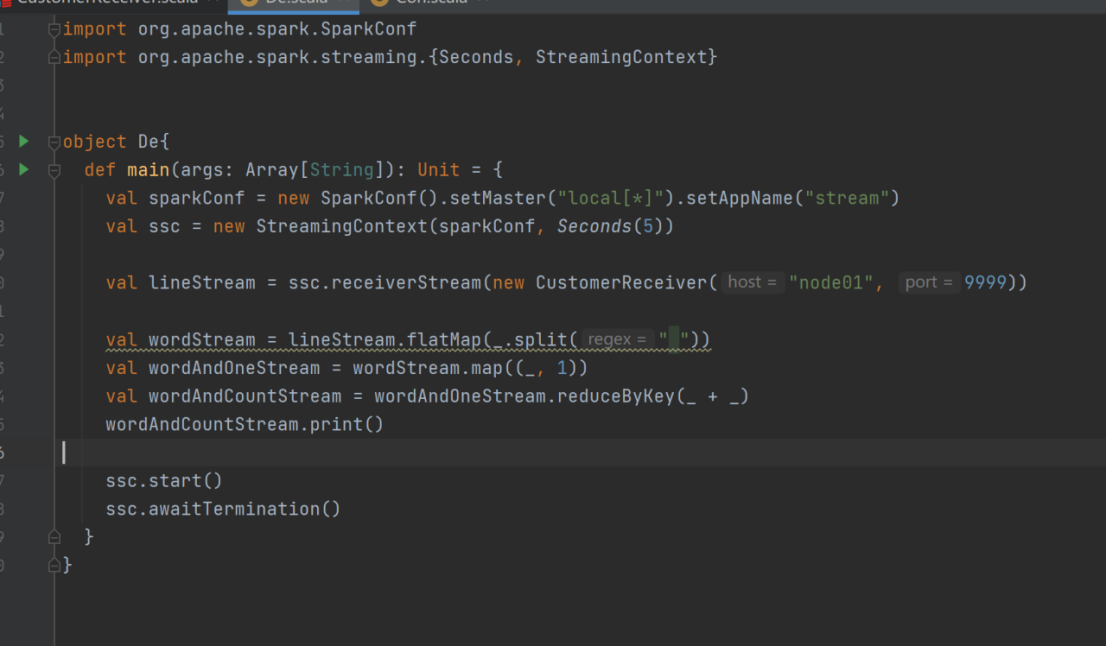

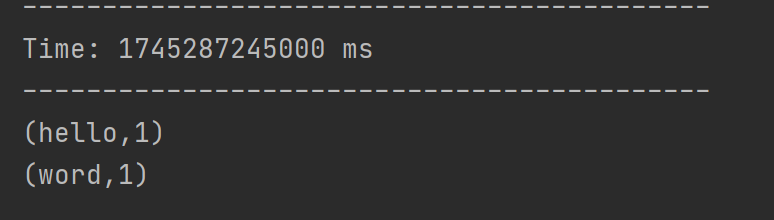

- 使用自定义的数据源采集数据

val sparkConf = new SparkConf().setMaster("local[*]").setAppName("stream")

val ssc = new StreamingContext(sparkConf,Seconds(5))

val lineStream = ssc.receiverStream(new CustomerReceiver("node01",9999))

val wordStream = lineStream.flatMap(_.split(" "))

val wordAndOneStream = wordStream.map((_,1))

val wordAndCountStream = wordAndOneStream.reduceByKey(_+_)

wordAndCountStream.print()

ssc.start()

ssc.awaitTermination()

424

424

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?