本文将在yolov5s结构中插入通道注意力机制SENet为例。分别从以下两个方面添加注意力机制:

1.不改变网络深度的改进(直接替换某些层)

2.改变原网络深度(增加层数)

目录

在添加注意力之前,需要在models/common.py中添加SENet代码。代码如下:

class SE_Block(nn.Module):

def __init__(self, c1, c2):

super().__init__()

self.avg_pool = nn.AdaptiveAvgPool2d(1) # 平均池化

self.fc = nn.Sequential(

nn.Linear(c1, c2 // 16, bias=False),

nn.ReLU(inplace=True),

nn.Linear(c2 // 16, c2, bias=False),

nn.Sigmoid()

)

def forward(self, x):

# 添加注意力模块

b, c, _, _ = x.size() # 分别获取batch_size,channel

y = self.avg_pool(x).view(b, c) # y的shape为【batch_size, channels】

y = self.fc(y).view(b, c, 1, 1) # shape为【batch_size, channels, 1, 1】

out = x * y.expand_as(x) # shape 为【batch, channels,feature_w, feature_h】

return out

1.不改变网络深度的改进

网络的修改需要修改两个位置:yaml文件、models/yolo.py

1.1yaml修改

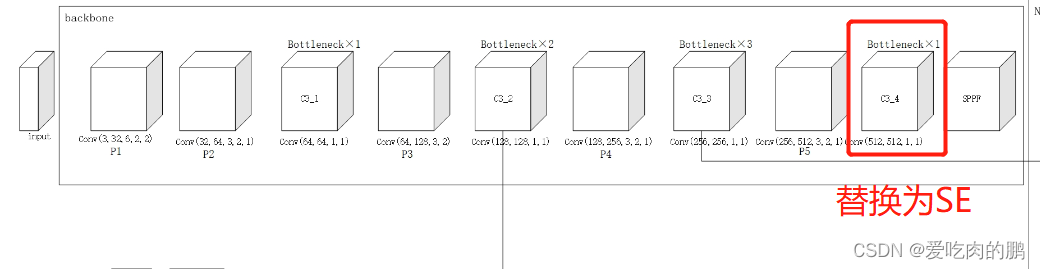

首先是打开models/yolov5s.yaml文件,我们在backbone中的SPPF之前增加SENet。增添位置如下,是将backbone中第4个C3模块替换为SE_Block,如下图。

【需要注意的是通道数要匹配,SENet并不改变通道数,由于原C3的输出通道数为1024*0.5=512,所以我们这里的写的是1024,这里的1024是传入到上面我们定义的Class SE_Block(nn.Moudel)中的c2参数,c1参数是由上一层的输出通道数控制的】。

backbone:

# [from, number, module, args]

[[-1, 1, Conv, [64, 6, 2, 2]], # 0-P1/2 conv1(3,32,k=6,s=2,p=2)

[-1, 1, Conv, [128, 3, 2]], # 1-P2/4 conv2(32,64,k=3,s=2,p=1)

[-1, 3, C3, [128]], # C3_1 有Bottleneck

[-1, 1, Conv, [256, 3, 2]], # 3-P3/8 conv3(64,128,k=3,s=2,p=1)

[-1, 6, C3, [256]], # C3_2 Bottleneck重复两次

[-1, 1, Conv, [512, 3, 2]], # 5-P4/16 conv4(128,256,k=3,s=2,p=1)

[-1, 9, C3, [512]], # C3_3 Bottleneck重复三次 输出256通道

[-1, 1, Conv, [1024, 3, 2]], # 7-P5/32 Conv5(256,512,k=3,s=2,p=1)

#[-1, 3, C3, [1024]], # C3_4 Bottleneck重复1次 输出512通道

[-1, 1, SE_Block, [1024]], # 增加通道注意力机制 输出为512通道

[-1, 1, SPPF, [1024, 5]], # 9 每个都是K为5的池化

]

1.2yolo.py修改

主要是在下面的代码中,在列表中添加SE_Block,这样可以获得我们要传入的参数。这样就改完了。

if m in [Conv, GhostConv, Bottleneck, GhostBottleneck, SPP, SPPF, DWConv, MixConv2d, Focus, CrossConv,

BottleneckCSP, C3, C3TR, C3SPP, C3Ghost, SE_Block]:

改完以后可以随便训练几轮试试,或者打印一下模型,看看改的对不对。可以看到在我自己的数据集上训练是可以正常进行的,

Epoch gpu_mem box obj cls labels img_size

1/299 1.4G 0.05173 0.03137 0 5 640: 100%|████████████████████████████████████████████████████████████████████████| 180/180 [04:09<00:00, 1.39s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100%|██████████████████████████████████████████████████████████| 10/10 [00:05<00:00, 1.94it/s]

all 80 133 0.416 0.444 0.393 0.118

Epoch gpu_mem box obj cls labels img_size

2/299 1.4G 0.04407 0.02762 0 4 640: 100%|████████████████████████████████████████████████████████████████████████| 180/180 [04:09<00:00, 1.39s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100%|██████████████████████████████████████████████████████████| 10/10 [00:05<00:00, 1.95it/s]

all 80 133 0.655 0.571 0.581 0.21

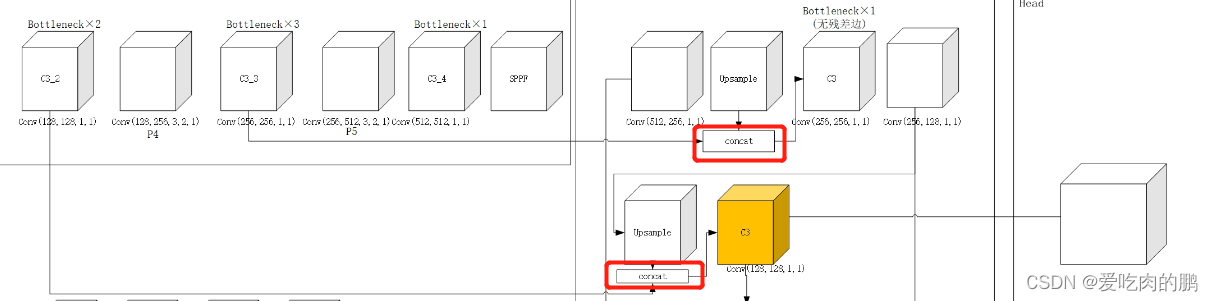

2.改变原网络深度

比如我要在第一个C3后面加一个SE。yaml的修改如下。接下来稍微麻烦一点了【需要你了解v5的每层结构】,由于我们在backbone中加入了一层,也就是相当于后面的网络与之前相比都往后移动了一层,那么在后面的Concat部分中融合的特征层的索引也会收到影响,因此我们需要的是修改Concat层的from参数。

会改变原来网络中的这些地方:

backbone:

# [from, number, module, args]

[[-1, 1, Conv, [64, 6, 2, 2]], # 0-P1/2 conv1(3,32,k=6,s=2,p=2)

[-1, 1, Conv, [128, 3, 2]], # 1-P2/4 conv2(32,64,k=3,s=2,p=1)

[-1, 3, C3, [128]], # C3_1 有Bottleneck

[-1, 1, SE_Block, [128]], # 增加通道注意力机制 输出为512通道

[-1, 1, Conv, [256, 3, 2]], # 3-P3/8 conv3(64,128,k=3,s=2,p=1)

[-1, 6, C3, [256]], # C3_2 Bottleneck重复两次

[-1, 1, Conv, [512, 3, 2]], # 5-P4/16 conv4(128,256,k=3,s=2,p=1)

[-1, 9, C3, [512]], # C3_3 Bottleneck重复三次 输出256通道

[-1, 1, Conv, [1024, 3, 2]], # 7-P5/32 Conv5(256,512,k=3,s=2,p=1)

[-1, 3, C3, [1024]], # C3_4 Bottleneck重复1次 输出512通道

[-1, 1, SPPF, [1024, 5]], # 9 每个都是K为5的池化

]

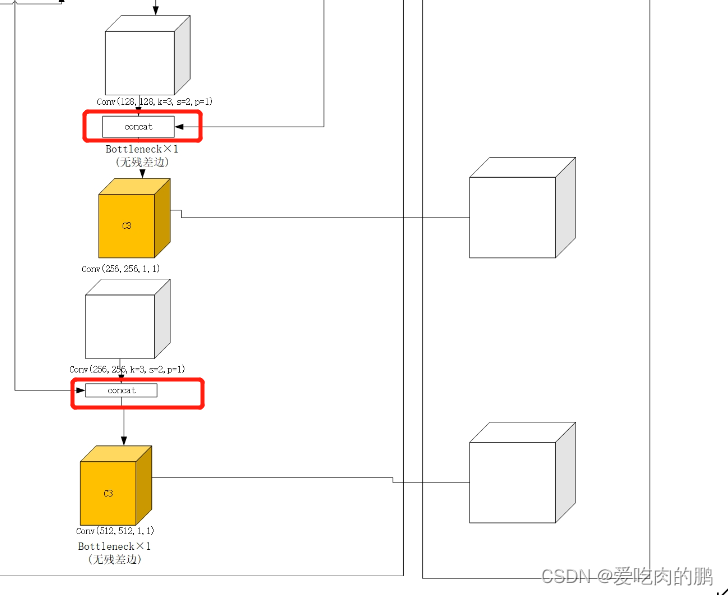

可以看到实际就是每个Concat也后面移动一层,因此yaml修改为一下。最终的Detect的from也需要修改。

head:

[[-1, 1, Conv, [512, 1, 1]], # conv1(512,256,1,1)

[-1, 1, nn.Upsample, [None, 2, 'nearest']],

[[-1, 7], 1, Concat, [1]], # cat backbone P4 将C3_3与SPPF出来后的上采样拼接 拼接后的通道为512

[-1, 3, C3, [512, False]], # 13 conv(256,256,k=1,s=1) 没有残差边

[-1, 1, Conv, [256, 1, 1]], # conv2(256,128,1,1)

[-1, 1, nn.Upsample, [None, 2, 'nearest']],

[[-1, 5], 1, Concat, [1]], # cat backbone P3 与C3_2拼接,输出256通道

[-1, 3, C3, [256, False]], # 17 (P3/8-small) conv3(128,128,1,1)

[-1, 1, Conv, [256, 3, 2]],# conv4(128,128,3,2,1)

[[-1, 15], 1, Concat, [1]], # cat head P4 拼接后256通道

[-1, 3, C3, [512, False]], # 20 (P4/16-medium) conv5(256,256,1,1)

[-1, 1, Conv, [512, 3, 2]],# conv6(256,256,3,2,1)

[[-1, 11], 1, Concat, [1]], # cat head P5 拼接后是512

[-1, 3, C3, [1024, False]], # 23 (P5/32-large)

[[18, 21, 24], 1, Detect, [nc, anchors]], # Detect(P3, P4, P5)

]

同理,也需要在models/yolo.py中修改如下,增加SE_Block:

if m in [Conv, GhostConv, Bottleneck, GhostBottleneck, SPP, SPPF, DWConv, MixConv2d, Focus, CrossConv,

BottleneckCSP, C3, C3TR, C3SPP, C3Ghost, SE_Block]:

也可以训练一个epoch看看,或者打印一下网络。这样就改完啦。

Starting training for 300 epochs...

Epoch gpu_mem box obj cls labels img_size

0/299 0.419G 0.09617 0.03019 0 13 640: 12%|████████▉ | 22/180 [00:15<01:05, 2.40it/s]

Model(

(model): Sequential(

(0): Conv(

(conv): Conv2d(3, 32, kernel_size=(6, 6), stride=(2, 2), padding=(2, 2), bias=False)

(bn): BatchNorm2d(32, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(1): Conv(

(conv): Conv2d(32, 64, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn): BatchNorm2d(64, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(2): C3(

(cv1): Conv(

(conv): Conv2d(64, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(64, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv3): Conv(

(conv): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(64, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(m): Sequential(

(0): Bottleneck(

(cv1): Conv(

(conv): Conv2d(32, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(32, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

)

)

)

(3): SE_Block(

(avg_pool): AdaptiveAvgPool2d(output_size=1)

(fc): Sequential(

(0): Linear(in_features=64, out_features=4, bias=False)

(1): ReLU(inplace=True)

(2): Linear(in_features=4, out_features=64, bias=False)

(3): Sigmoid()

)

)

(4): Conv(

(conv): Conv2d(64, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn): BatchNorm2d(128, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(5): C3(

(cv1): Conv(

(conv): Conv2d(128, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(64, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(128, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(64, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv3): Conv(

(conv): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(128, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(m): Sequential(

(0): Bottleneck(

(cv1): Conv(

(conv): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(64, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(64, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

)

(1): Bottleneck(

(cv1): Conv(

(conv): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(64, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(64, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

)

)

)

(6): Conv(

(conv): Conv2d(128, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn): BatchNorm2d(256, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(7): C3(

(cv1): Conv(

(conv): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(128, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(128, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv3): Conv(

(conv): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(256, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(m): Sequential(

(0): Bottleneck(

(cv1): Conv(

(conv): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(128, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(128, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

)

(1): Bottleneck(

(cv1): Conv(

(conv): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(128, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(128, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

)

(2): Bottleneck(

(cv1): Conv(

(conv): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(128, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(128, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

)

)

)

(8): Conv(

(conv): Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn): BatchNorm2d(512, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(9): C3(

(cv1): Conv(

(conv): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(256, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(256, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv3): Conv(

(conv): Conv2d(512, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(512, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(m): Sequential(

(0): Bottleneck(

(cv1): Conv(

(conv): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(256, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(256, eps=0.001, momentum=0.03, affine=True, track_running_stats=True)

(act): SiLU()

)

)

)

)

(10): SPPF(

(cv1): Conv(

(conv): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

本人从事网路安全工作12年,曾在2个大厂工作过,安全服务、售后服务、售前、攻防比赛、安全讲师、销售经理等职位都做过,对这个行业了解比较全面。

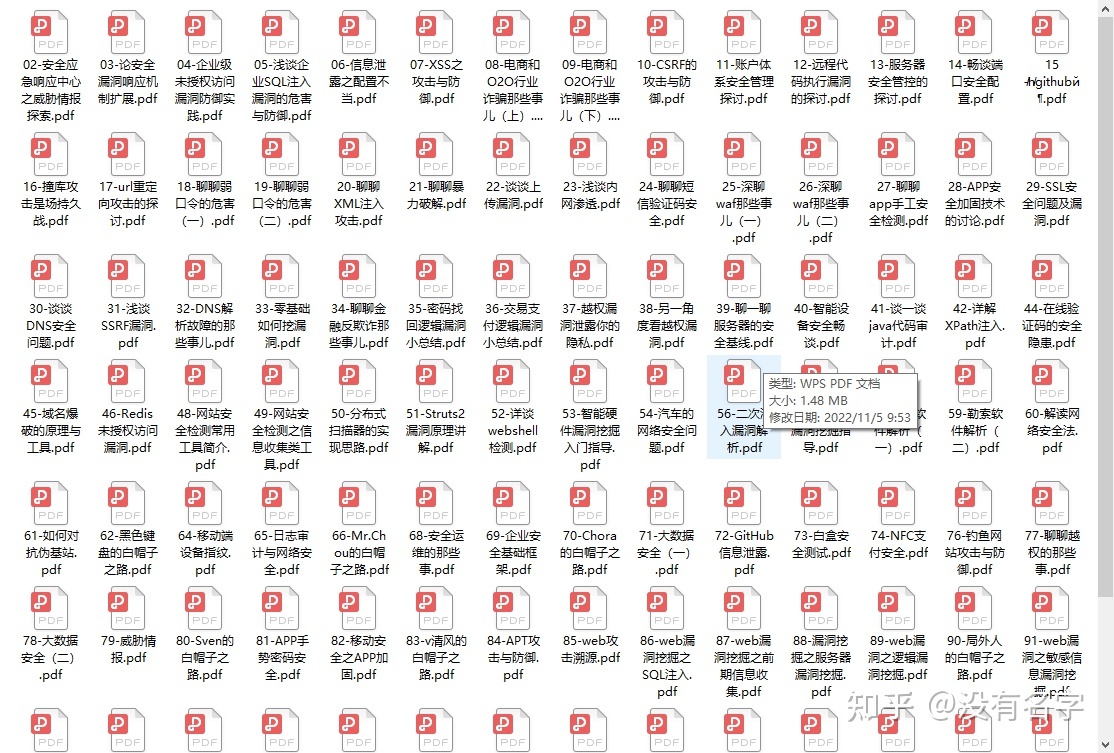

最近遍览了各种网络安全类的文章,内容参差不齐,其中不伐有大佬倾力教学,也有各种不良机构浑水摸鱼,在收到几条私信,发现大家对一套完整的系统的网络安全从学习路线到学习资料,甚至是工具有着不小的需求。

最后,我将这部分内容融会贯通成了一套282G的网络安全资料包,所有类目条理清晰,知识点层层递进,需要的小伙伴可以点击下方小卡片领取哦!下面就开始进入正题,如何从一个萌新一步一步进入网络安全行业。

### 学习路线图

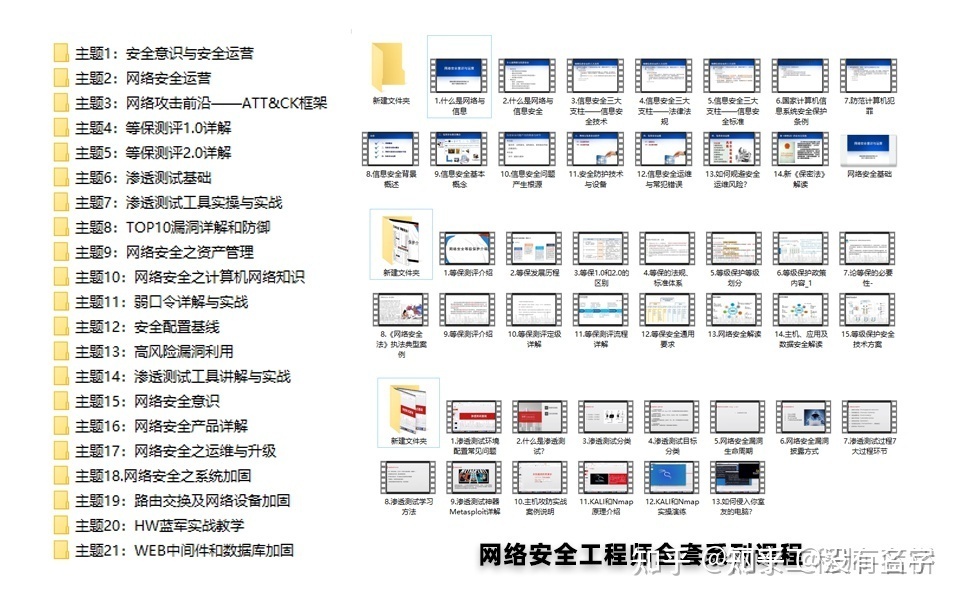

其中最为瞩目也是最为基础的就是网络安全学习路线图,这里我给大家分享一份打磨了3个月,已经更新到4.0版本的网络安全学习路线图。

相比起繁琐的文字,还是生动的视频教程更加适合零基础的同学们学习,这里也是整理了一份与上述学习路线一一对应的网络安全视频教程。

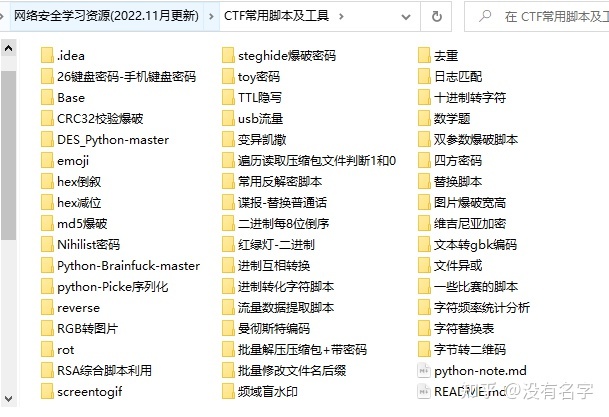

#### 网络安全工具箱

当然,当你入门之后,仅仅是视频教程已经不能满足你的需求了,你肯定需要学习各种工具的使用以及大量的实战项目,这里也分享一份**我自己整理的网络安全入门工具以及使用教程和实战。**

#### 项目实战

最后就是项目实战,这里带来的是**SRC资料&HW资料**,毕竟实战是检验真理的唯一标准嘛~

#### 面试题

归根结底,我们的最终目的都是为了就业,所以这份结合了多位朋友的亲身经验打磨的面试题合集你绝对不能错过!

4765

4765

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?