    save("hdfs:///hudi/hudi_mor_tbl_shell")

To verify, run a regular query.

### Insert overwrite

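    import org.apache.spark.sql._
    import org.apache.spark.sql.types._
    // Assumed to be in scope already from the earlier steps of this
    // spark-shell session; repeated here so the snippet is self-contained.
    import org.apache.spark.sql.SaveMode._
    import org.apache.hudi.DataSourceWriteOptions._
    import org.apache.hudi.config.HoodieWriteConfig._

    // Schema of the example table
    val fields = Array(
      StructField("id", IntegerType, true),
      StructField("name", StringType, true),
      StructField("price", DoubleType, true),
      StructField("ts", LongType, true)
    )
    val simpleSchema = StructType(fields)

    // The single record that will replace the existing table contents
    val data = Seq(Row(99, "a99", 20.0, 900L))
    val df = spark.createDataFrame(spark.sparkContext.parallelize(data), simpleSchema)

    df.write.format("hudi").
      option(OPERATION.key(), "insert_overwrite").
      option(PRECOMBINE_FIELD.key(), "ts").
      option(RECORDKEY_FIELD.key(), "id").
      option(TBL_NAME.key(), "hudi_mor_tbl_shell").
      option(TABLE_TYPE_OPT_KEY, "MERGE_ON_READ").
      mode(Append).
      save("hdfs:///hudi/hudi_mor_tbl_shell")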

To verify, run a regular query. Only the newly inserted record remains.

### Delete data

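    import org.apache.spark.sql._
    import org.apache.spark.sql.types._
    // Assumed to be in scope already from the earlier steps of this
    // spark-shell session; repeated here so the snippet is self-contained.
    import org.apache.spark.sql.SaveMode._
    import org.apache.hudi.DataSourceWriteOptions._
    import org.apache.hudi.config.HoodieWriteConfig._

    val fields = Array(
      StructField("id", IntegerType, true),
      StructField("name", StringType, true),
      StructField("price", DoubleType, true),
      StructField("ts", LongType, true)
    )
    val simpleSchema = StructType(fields)

    // The record to delete; it is matched by its record key (id = 2)
    val data = Seq(Row(2, "a2", 400.0, 2222L))
    val df = spark.createDataFrame(spark.sparkContext.parallelize(data), simpleSchema)

    // OPERATION.key() and friends are the non-deprecated equivalents of the
    // *_OPT_KEY constants; TBL_NAME.key() replaces the deprecated TABLE_NAME
    df.write.format("hudi").
      option(OPERATION.key(), "delete").
      option(PRECOMBINE_FIELD.key(), "ts").
      option(RECORDKEY_FIELD.key(), "id").
      option(TBL_NAME.key(), "hudi_mor_tbl_shell").
      mode(Append).
      save("hdfs:///hudi/hudi_mor_tbl_shell")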

To verify, run a regular query.

### Using Spark SQL

To start spark-sql with Hudi support:

    ./spark-sql \
      --master yarn \
      --conf 'spark.serializer=org.apache.spark.serializer.KryoSerializer' \
      --conf 'spark.sql.extensions=org.apache.spark.sql.hudi.HoodieSparkSessionExtension' \
      --conf 'spark.sql.catalog.spark_catalog=org.apache.spark.sql.hudi.catalog.HoodieCatalog'

If you are using Hudi 0.11.x, run the following instead:

    ./spark-sql \
      --master yarn \
      --conf 'spark.serializer=org.apache.spark.serializer.KryoSerializer' \
      --conf 'spark.sql.extensions=org.apache.spark.sql.hudi.HoodieSparkSessionExtension'
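As a rough sketch of the version rule above, the following hypothetical helper (`hudi_spark_sql_confs` is not part of Hudi or Spark) prints the settings to pass via `--conf` for a given Hudi version string, leaving out the catalog setting for 0.11.x as described. It only assembles text and does not start Spark.

```shell
# Hypothetical helper: print the Spark settings for a given Hudi version,
# one per line. The version rule follows the text above and has not been
# checked against other Hudi releases.
hudi_spark_sql_confs() {
  version="$1"
  echo "spark.serializer=org.apache.spark.serializer.KryoSerializer"
  echo "spark.sql.extensions=org.apache.spark.sql.hudi.HoodieSparkSessionExtension"
  case "$version" in
    0.11.*)
      # 0.11.x: started without the HoodieCatalog setting
      ;;
    *)
      echo "spark.sql.catalog.spark_catalog=org.apache.spark.sql.hudi.catalog.HoodieCatalog"
      ;;
  esac
}

# Assemble (but do not run) the command line for a 0.11.x installation.
cmd="./spark-sql --master yarn"
for conf in $(hudi_spark_sql_confs 0.11.1); do
  cmd="$cmd --conf '$conf'"
done
echo "$cmd"
```

Printing the command first makes it easy to check the flags before pasting them into a real session.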

Create the table:

    create table hudi_mor_tbl (
      id int,
      name string,
      price double,
      ts bigint
    ) using hudi
    tblproperties (
      type = 'mor',
      primaryKey = 'id',
      preCombineField = 'ts'
    )
    location 'hdfs:///hudi/hudi_mor_tbl';

Verify:

    show tables;

### Insert data

Using SQL:

    insert into hudi_mor_tbl select 1, 'a1', 20, 1000;

Verify:

    select * from hudi_mor_tbl;

### Regular query

Using SQL:

    select * from hudi_mor_tbl;

### Update data

Using SQL:

    update hudi_mor_tbl set price = price * 2, ts = 1111 where id = 1;

Verify:

    select * from hudi_mor_tbl;

### Insert overwrite

Using SQL:

    insert overwrite hudi_mor_tbl select 99, 'a99', 20.0, 900;

Verify:

    select * from hudi_mor_tbl;

Only the newly inserted record remains.

### Delete data

Using SQL:

    delete from hudi_mor_tbl where id % 2 = 1;

Verify:

    select * from hudi_mor_tbl;

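### Kerberos and permission configuration

For example, to allow the hudi user to operate on Hudi tables, with jobs submitted to the default queue and the table stored at hdfs:///hudi/t1, you can configure Ranger as follows:

1. Create a user named hudi in Ranger.

2. Grant the hudi user read and write permissions on the following directories: /hudi/t1, /tmp, /user/hudi.

3. Grant the hudi user permission on the YARN default queue.

If Kerberos is enabled, the following additional steps are required:

1. Create the principal hudi@PAULTECH.COM in Kerberos and generate the corresponding keytab file.

2. After running kinit, make sure the hudi user has the permissions needed to carry out the operations above.

With these settings, the hudi user should be able to operate on the specified table path with the necessary HDFS and YARN permissions, so that applications run smoothly.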
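The Kerberos steps above might look roughly like the following on a cluster that uses MIT Kerberos. The keytab path is a made-up placeholder, `/hudi/t1` is the table path from the example, and `run` is a small hypothetical wrapper that only prints each command unless `RUN=1` is set, so the sketch can be read or executed without a KDC.

```shell
PRINCIPAL="hudi@PAULTECH.COM"
KEYTAB="/path/to/hudi.keytab"   # placeholder, adjust to your environment
TABLE_PATH="/hudi/t1"

# Print the command by default; execute it only when RUN=1.
run() {
  if [ "${RUN:-0}" = "1" ]; then
    "$@"
  else
    echo "+ $*"
  fi
}

run kinit -kt "$KEYTAB" "$PRINCIPAL"   # obtain a ticket using the keytab
run klist                               # show the ticket that was obtained
run hdfs dfs -ls "$TABLE_PATH"          # confirm access to the table path
```

If the last command fails with a permission error after a successful kinit, the Ranger policies from the first list are the likely place to look.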

### FAQ

**1. spark-sql or spark-shell fails at startup with NoClassDefFoundError: org/apache/hadoop/shaded/javax/ws/rs/core/NoContentException**

Error log:

    Exception in thread "main" java.lang.NoClassDefFoundError: org/apache/hadoop/shaded/javax/ws/rs/core/NoContentException
        at org.apache.hadoop.yarn.util.timeline.TimelineUtils.<clinit>(TimelineUtils.java:60)
        at org.apache.hadoop.yarn.client.api.impl.YarnClientImpl.serviceInit(YarnClientImpl.java:200)
        at org.apache.hadoop.service.AbstractService.init(AbstractService.java:164)
        at org.apache.spark.deploy.yarn.Client.submitApplication(Client.scala:191)
        at org.apache.spark.scheduler.cluster.YarnClientSchedulerBackend.start(YarnClientSchedulerBackend.scala:62)
        at org.apache.spark.scheduler.TaskSchedulerImpl.start(TaskSchedulerImpl.scala:222)
        at org.apache.spark.SparkContext.<init>(SparkContext.scala:585)
        at org.apache.spark.SparkContext$.getOrCreate(SparkContext.scala:2704)
        at org.apache.spark.sql.SparkSession$Builder.$anonfun$getOrCreate$2(SparkSession.scala:953)
        at scala.Option.getOrElse(Option.scala:189)
        at org.apache.spark.sql.SparkSession$Builder.getOrCreate(SparkSession.scala:947)
        at org.apache.spark.sql.hive.thriftserver.SparkSQLEnv$.init(SparkSQLEnv.scala:54)
        at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.<init>(SparkSQLCLIDriver.scala:327)
        at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver$.main(SparkSQLCLIDriver.scala:159)
        at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.main(SparkSQLCLIDriver.scala)
        at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
        at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
        at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
        at java.lang.reflect.Method.invoke(Method.java:498)
        at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)
        at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(…)
        at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:…)
        …
        at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1046)
        at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1055)
        at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)
    Caused by: java.lang.ClassNotFoundException: org.apache.hadoop.shaded.javax.ws.rs.core.NoContentException
        at java.net.URLClassLoader.findClass(URLClassLoader.java:381)
        at java.lang.ClassLoader.loadClass(ClassLoader.java:424)
        at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:331)
        at java.lang.ClassLoader.loadClass(ClassLoader.java:357)
        ... 27 more

Cause: the Hadoop and Spark versions do not match.

Solution: disable the YARN timeline service. See the environment configuration section for how to disable it.
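The environment configuration section is not repeated here. One common way to switch the timeline service off for a single session is to pass the YARN property `yarn.timeline-service.enabled` through Spark's `spark.hadoop.` prefix. Whether this matches the method the article refers to is an assumption; the snippet only builds and prints the command line.

```shell
# Spark copies spark.hadoop.* settings into the Hadoop configuration,
# so this turns off the YARN timeline client for this session only.
TIMELINE_OFF="spark.hadoop.yarn.timeline-service.enabled=false"

# Print the resulting command instead of launching Spark.
echo "./spark-sql --master yarn --conf $TIMELINE_OFF"
```

The same key and value could also go into spark-defaults.conf if the setting should apply to every session.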

Reference:

<https://github.com/apache/kyuubi/issues/2904>

**2. Creating a table fails with CreateHoodieTableCommand: Failed to create catalog table in metastore: org.apache.hudi.hadoop.realtime.HoodieParquetRealtimeInputFormat**

The original error message does not reveal the cause, so add logging to the following file:

hudi-spark-datasource/hudi-spark-common/src/main/scala/org/apache/spark/sql/hudi/command/CreateHoodieTableCommand.scala

Change the code around line 85 to:

    case NonFatal(e) => {
      logWarning(s"Failed to create catalog table in metastore: ${e.getMessage}")
      logWarning(s"Failed to create catalog table in metastore: ${e.getClass}")
      logWarning(s"Failed to create catalog table in metastore: ${e.getStackTrace.mkString("Array(", ", ", ")")}")
    }

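Rebuild, replace the jar, and run again. The more detailed error log now points to:

org.apache.hudi.hadoop.realtime.HoodieParquetRealtimeInputFormat. A search shows that this class lives in the hudi-hadoop-mr-bundle package.

**Copy the hudi-hadoop-mr-bundle-0.13.1.jar produced by the Hudi build into lib or auxlib under the Hive installation directory. After restarting the Hive metastore service, the problem goes away.**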

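**3. The spark-sql or spark-shell command is too long because the Hudi conf options have to be added every time. Can it be simplified?**

Yes. You can put the Hudi settings into the spark-defaults.conf configuration file. For example, for Hudi 0.13.1 add the following to spark-defaults.conf: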

    spark.serializer=org.apache.spark.serializer.KryoSerializer
    spark.sql.catalog.spark_catalog=org.apache.spark.sql.hudi.catalog.HoodieCatalog
    spark.sql.extensions=org.apache.spark.sql.hudi.HoodieSparkSessionExtension
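If you prefer to script this change, the following sketch appends the three settings only when they are missing. `CONF_FILE` and `add_conf` are names chosen for this illustration. By default the script writes to a temporary file, so point `CONF_FILE` at your real spark-defaults.conf (usually under `$SPARK_HOME/conf`) once you are happy with the result.

```shell
# Target file; defaults to a scratch file so the sketch is safe to run.
CONF_FILE="${CONF_FILE:-$(mktemp)}"

# Append "key=value" unless a line starting with that key already exists.
add_conf() {
  key="$1"
  value="$2"
  if ! grep -q "^${key}[[:space:]=]" "$CONF_FILE" 2>/dev/null; then
    echo "${key}=${value}" >> "$CONF_FILE"
  fi
}

add_conf spark.serializer org.apache.spark.serializer.KryoSerializer
add_conf spark.sql.catalog.spark_catalog org.apache.spark.sql.hudi.catalog.HoodieCatalog
add_conf spark.sql.extensions org.apache.spark.sql.hudi.HoodieSparkSessionExtension

# Show the resulting file contents
cat "$CONF_FILE"
```

Because existing keys are skipped, running the script a second time leaves the file unchanged.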

After this change, starting spark-shell only requires:

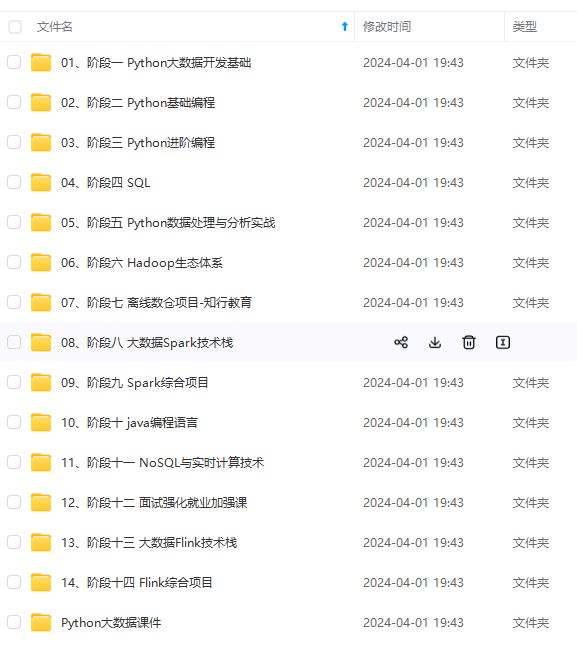

网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。

一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

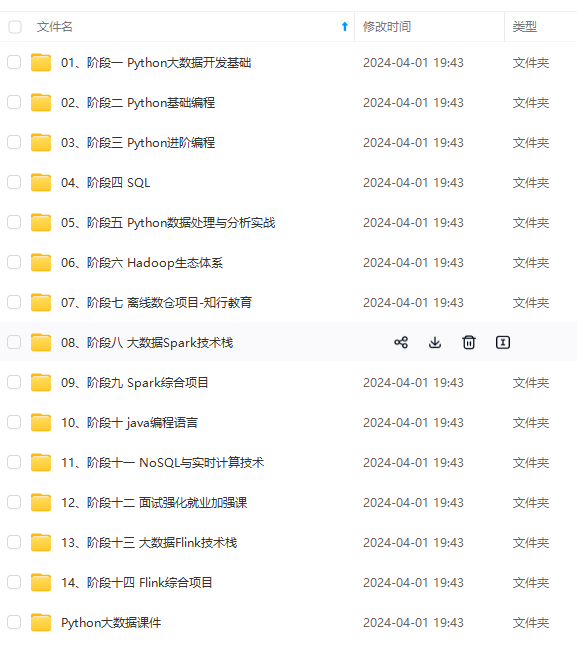

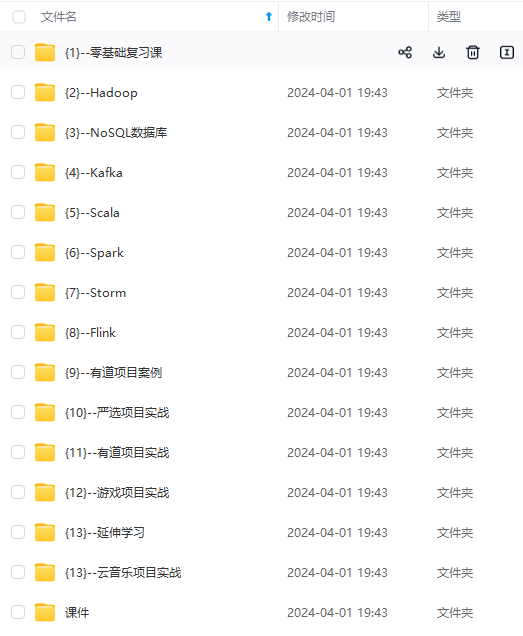

修改之后在启动spark-shell只需要执行:

[外链图片转存中…(img-CJqvucF7-1715818354962)]

[外链图片转存中…(img-xryWij9M-1715818354962)]

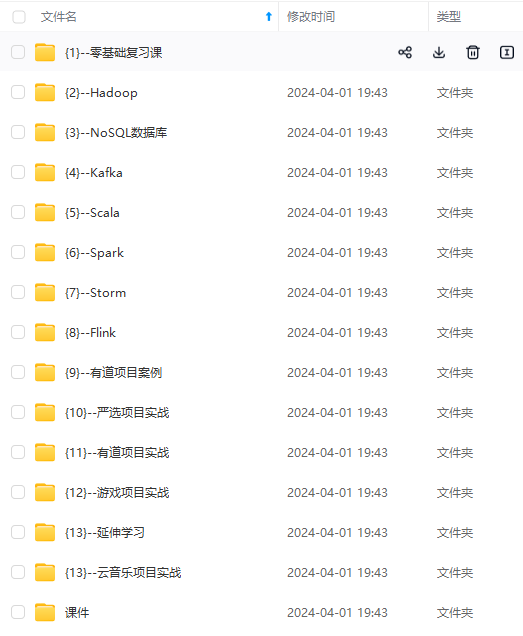

网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。

一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

2538

2538

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?