Feature Extraction and Matching

(1) Extraction and matching with OpenCV

#include <algorithm>

#include <cstdio>

#include <iostream>

#include <vector>

#include <opencv2/core/core.hpp>

#include <opencv2/features2d/features2d.hpp>

#include <opencv2/highgui/highgui.hpp>

#include "opencv2/imgcodecs/legacy/constants_c.h"

using namespace std;

using namespace cv;

int main(int argc, char **argv) {

//-- Read the images

Mat img_1 = imread("../1.png");

Mat img_2 = imread("../2.png");

//-- Initialization

std::vector<KeyPoint> keypoints_1, keypoints_2;

Mat descriptors_1, descriptors_2;

Ptr<FeatureDetector> detector = ORB::create();

Ptr<DescriptorExtractor> descriptor = ORB::create();

// Ptr<FeatureDetector> detector = FeatureDetector::create(detector_name);

// Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create(descriptor_name);

Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");

//-- Step 1: detect the Oriented FAST corner positions

detector->detect(img_1, keypoints_1);

detector->detect(img_2, keypoints_2);

//-- Step 2: compute the BRIEF descriptors at the corner positions

descriptor->compute(img_1, keypoints_1, descriptors_1);

descriptor->compute(img_2, keypoints_2, descriptors_2);

Mat outimg1;

drawKeypoints(img_1, keypoints_1, outimg1, Scalar::all(-1), DrawMatchesFlags::DEFAULT);

imshow("ORB keypoints", outimg1);

//-- Step 3: match the BRIEF descriptors of the two images using the Hamming distance

vector<DMatch> matches;

//BFMatcher matcher ( NORM_HAMMING );

matcher->match(descriptors_1, descriptors_2, matches);

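//-- Step 4: filter the matched pairs

double min_dist = 10000, max_dist = 0;

// Find the minimum and maximum distance over all matches, i.e. the distances of the most similar and the least similar pairs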

for (int i = 0; i < descriptors_1.rows; i++) {

double dist = matches[i].distance;

if (dist < min_dist) min_dist = dist;

if (dist > max_dist) max_dist = dist;

}

// The same computation written with standard algorithms, just for fun

min_dist = min_element(matches.begin(), matches.end(),

[](const DMatch &m1, const DMatch &m2) { return m1.distance < m2.distance; })->distance;

max_dist = max_element(matches.begin(), matches.end(),

[](const DMatch &m1, const DMatch &m2) { return m1.distance < m2.distance; })->distance;

printf("-- Max dist : %f \n", max_dist);

printf("-- Min dist : %f \n", min_dist);

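// A match is considered wrong when its descriptor distance exceeds twice the minimum distance. The minimum can be very small, so an empirical value of 30 is used as a lower bound.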

std::vector<DMatch> good_matches;

for (int i = 0; i < descriptors_1.rows; i++) {

if (matches[i].distance <= max(2 * min_dist, 30.0)) {

good_matches.push_back(matches[i]);

}

}

//-- Step 5: draw the matching results

Mat img_match;

Mat img_goodmatch;

drawMatches(img_1, keypoints_1, img_2, keypoints_2, matches, img_match);

drawMatches(img_1, keypoints_1, img_2, keypoints_2, good_matches, img_goodmatch);

imshow("All matches", img_match);

imshow("Filtered matches", img_goodmatch);

waitKey(0);

return 0;

}

0. Initialization

std::vector<KeyPoint> keypoints_1, keypoints_2; //a

Mat descriptors_1, descriptors_2; //b

Ptr<FeatureDetector> detector = ORB::create(); //c

Ptr<DescriptorExtractor> descriptor = ORB::create();

Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");

a. OpenCV provides the KeyPoint data type to represent a feature point:

class KeyPoint

{

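Point2f pt; // coordinates of the feature point

float size; // diameter of the feature point's neighborhood

float angle; // orientation of the feature point in degrees, in [0, 360); a negative value means it is not used

float response; // response strength; indicates how robust the point is as a feature and can be used to sort feature points in later processing

int octave; // pyramid layer (octave) in which the feature point was found

int class_id; // id used for clustering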

}

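b. cv::Mat is the main type OpenCV uses to store large arrays; here it holds the descriptors.

c. Ptr: a smart pointer; this line defines a pointer named detector.

FeatureDetector: feature detector

Ptr<FeatureDetector> detector = FeatureDetector::create("ORB");

The line above is the OpenCV 2 way of writing it (which I find easier to understand, haha). From the documentation:

OpenCV 2.4.3 provides 10 feature detection methods: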

- “FAST” – FastFeatureDetector

- “STAR” – StarFeatureDetector

- “SIFT” – SIFT (nonfree module)

- “SURF” – SURF (nonfree module)

- “ORB” – ORB

- “MSER” – MSER

- “GFTT” – GoodFeaturesToTrackDetector

- “HARRIS” – GoodFeaturesToTrackDetector with Harris detector enabled

- “Dense” – DenseFeatureDetector

- “SimpleBlob” – SimpleBlobDetector

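DescriptorExtractor: descriptor extractor.

FeatureDetector and DescriptorExtractor correspond to keypoints and descriptors respectively.

DescriptorMatcher: descriptor matcher. It matches the descriptors of one image against those of the other, and the argument passed to create() selects the matching method.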

enum MatcherType

{

FLANNBASED = 1,

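BRUTEFORCE = 2, // brute-force matching with the L2 norm (Euclidean distance)

BRUTEFORCE_L1 = 3, // L1 norm (sum of absolute differences)

BRUTEFORCE_HAMMING = 4, // Hamming distance

BRUTEFORCE_HAMMINGLUT = 5, // Hamming distance (lookup-table variant)

BRUTEFORCE_SL2 = 6 // squared L2 distance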

};

The enumerators above are the matcher types that DescriptorMatcher, the feature matcher, can create.
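
To make the matcher types more concrete, here is a minimal stand-alone sketch of the float-valued distances that the L1, L2 and squared-L2 variants are named after. It uses only the C++ standard library; the function names `l1Distance`, `squaredL2Distance` and `l2Distance` are made up for illustration and are not OpenCV API.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// L1 norm: sum of absolute differences (the idea behind BRUTEFORCE_L1).
float l1Distance(const std::vector<float> &a, const std::vector<float> &b) {
    assert(a.size() == b.size());
    float sum = 0.f;
    for (std::size_t i = 0; i < a.size(); ++i) sum += std::fabs(a[i] - b[i]);
    return sum;
}

// Squared L2 norm (the idea behind BRUTEFORCE_SL2): no square root is taken.
float squaredL2Distance(const std::vector<float> &a, const std::vector<float> &b) {
    assert(a.size() == b.size());
    float sum = 0.f;
    for (std::size_t i = 0; i < a.size(); ++i) {
        const float diff = a[i] - b[i];
        sum += diff * diff;
    }
    return sum;
}

// L2 norm, i.e. the Euclidean distance (the idea behind BRUTEFORCE).
float l2Distance(const std::vector<float> &a, const std::vector<float> &b) {
    return std::sqrt(squaredL2Distance(a, b));
}
```

For the vectors (0, 3) and (4, 0) these give 7, 25 and 5 respectively. These norms suit float descriptors; binary descriptors such as ORB's BRIEF are compared with the Hamming distance instead, which is why the program uses "BruteForce-Hamming".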

1. Extracting feature points

//-- Step 1: detect the Oriented FAST corner positions

detector->detect(img_1, keypoints_1);

detector->detect(img_2, keypoints_2);

//-- Step 2: compute the BRIEF descriptors at the corner positions

descriptor->compute(img_1, keypoints_1, descriptors_1);

descriptor->compute(img_2, keypoints_2, descriptors_2);

Mat outimg1;

drawKeypoints(img_1, keypoints_1, outimg1, Scalar::all(-1), DrawMatchesFlags::DEFAULT);

imshow("ORB keypoints", outimg1);

2. Matching feature points

//-- Step 3: match the BRIEF descriptors of the two images using the Hamming distance

vector<DMatch> matches;

//BFMatcher matcher ( NORM_HAMMING );

matcher->match(descriptors_1, descriptors_2, matches);

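//-- Step 4: filter the matched pairs

double min_dist = 10000, max_dist = 0;

// Find the minimum and maximum distance over all matches, i.e. the distances of the most similar and the least similar pairs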

for (int i = 0; i < descriptors_1.rows; i++) {

double dist = matches[i].distance;

if (dist < min_dist) min_dist = dist;

if (dist > max_dist) max_dist = dist;

}

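// A match is considered wrong when its descriptor distance exceeds twice the minimum distance. The minimum can be very small, so an empirical value of 30 is used as a lower bound.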

std::vector<DMatch> good_matches;

for (int i = 0; i < descriptors_1.rows; i++) {

if (matches[i].distance <= max(2 * min_dist, 30.0)) {

good_matches.push_back(matches[i]);

}

}

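Brute-force matching: for each feature point $x_t^m$, measure the descriptor distance to every $x_{t+1}^n$, sort the results, and take the nearest one as the match.

The third argument of match(), matches, is a container from which the matching distances are read. The distance stored for each feature point is the smallest distance found for it.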
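
The filtering rule from step 4 (keep a match when its distance is at most max(2 × min_dist, 30)) can be tried without OpenCV. The sketch below uses a hypothetical `Match` struct that mirrors the three `cv::DMatch` fields used in this post; `filterMatches` and its `min_threshold` parameter are illustrative names, and 30 is the empirical lower bound mentioned in the code comments.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Stand-in for cv::DMatch with only the fields used in this post.
struct Match {
    int queryIdx;
    int trainIdx;
    float distance;
};

// Keep the matches whose distance is at most max(2 * min_dist, min_threshold).
std::vector<Match> filterMatches(const std::vector<Match> &matches,
                                 float min_threshold = 30.f) {
    assert(min_threshold >= 0.f);
    std::vector<Match> good;
    if (matches.empty()) return good;

    // Smallest distance over all matches (the most similar pair).
    const float min_dist =
        std::min_element(matches.begin(), matches.end(),
                         [](const Match &a, const Match &b) {
                             return a.distance < b.distance;
                         })->distance;

    const float threshold = std::max(2.f * min_dist, min_threshold);
    for (const Match &m : matches) {
        if (m.distance <= threshold) good.push_back(m);
    }
    return good;
}
```

With distances 4, 29, 31 and 80 the minimum is 4, so twice the minimum (8) is below the lower bound and the threshold becomes 30: only the first two matches survive. With distances 20, 40 and 41 the threshold is 40 and again two matches remain.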

3. Drawing the matching results

//-- Step 5: draw the matching results

Mat img_match;

Mat img_goodmatch;

drawMatches(img_1, keypoints_1, img_2, keypoints_2, matches, img_match);

drawMatches(img_1, keypoints_1, img_2, keypoints_2, good_matches, img_goodmatch);

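imshow("All matches", img_match);

imshow("Filtered matches", img_goodmatch);

waitKey(0);

This uses drawMatches, the function OpenCV provides for drawing matching results. It is enough to remember how to call it, haha.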

(2) Assignment 2: ORB feature points

2.00 Algorithm analysis

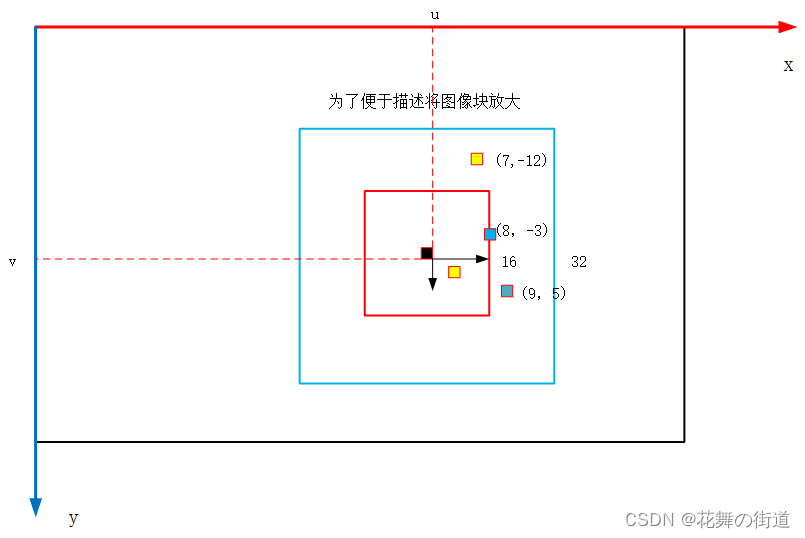

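(1) In the figure above, the black frame is the image, the blue frame is the image patch ORB uses when computing the BRIEF descriptor, the red frame is the patch used when detecting the Oriented FAST keypoint, and the black dot in the middle is the detected keypoint.

(2) The selected patch must stay inside the image, so the boundaries have to be checked. Checking the larger descriptor patch (the blue frame) is sufficient.

(3) The key part is the descriptor computation. The keypoint $(u, v)$ and its rotation angle $\theta$ (obtained with the intensity centroid method) are known.

BRIEF is a binary descriptor whose length can be 128 or 256 bits. Here we use the 256-bit descriptor $d = [d_1, d_2, \cdots, d_{256}]$. How is each $d_i$ obtained? As follows:

Select 256 pairs of points around the keypoint; the program already provides them, as listed below. In each row the first two numbers are one point and the last two are the other, and the first two pairs are drawn in the figure as the blue and yellow points. Each pair is rotated by $\theta$ to give a new pair of points, whose pixel values are then compared: $d_i$ is 0 if the first is greater than the second, and 1 if the first is smaller.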

int ORB_pattern[256 * 4] = {

8, -3, 9, 5 /*mean (0), correlation (0)*/,

4, 2, 7, -12 /*mean (1.12461e-05), correlation (0.0437584)*/,

-11, 9, -8, 2 /*mean (3.37382e-05), correlation (0.0617409)*/,

7, -12, 12, -13 /*mean (5.62303e-05), correlation (0.0636977)*/,

......

}
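
As a rough illustration of how a single descriptor bit is produced, the sketch below rotates the first pattern pair (8, -3), (9, 5) by θ and compares the two sampled intensities. It is a simplified stand-in, not the assignment code: `Point`, `rotate` and `briefBit` are hypothetical names, the image is a plain row-major `std::vector`, θ is given in radians, and samples are rounded to the nearest pixel (an assumption of this sketch).

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <vector>

struct Point {
    float x;
    float y;
};

// Rotate p by theta (radians) about the origin with the usual 2D rotation matrix.
Point rotate(const Point &p, float theta) {
    const float c = std::cos(theta), s = std::sin(theta);
    return {c * p.x - s * p.y, s * p.x + c * p.y};
}

// One descriptor bit for the keypoint at pixel (u, v): true (1) when the
// intensity at the rotated p is smaller than the intensity at the rotated q.
bool briefBit(const std::vector<std::uint8_t> &image, int cols, int rows,
              int u, int v, const Point &p, const Point &q, float theta) {
    const Point pp = rotate(p, theta), qq = rotate(q, theta);
    const int px = u + static_cast<int>(std::lround(pp.x));
    const int py = v + static_cast<int>(std::lround(pp.y));
    const int qx = u + static_cast<int>(std::lround(qq.x));
    const int qy = v + static_cast<int>(std::lround(qq.y));
    // The caller is expected to have done the boundary check already.
    assert(px >= 0 && px < cols && py >= 0 && py < rows);
    assert(qx >= 0 && qx < cols && qy >= 0 && qy < rows);
    return image[py * cols + px] < image[qy * cols + qx];
}
```

For a keypoint at (16, 16) and θ = 0, the pair samples pixels (24, 13) and (25, 21). Rotating (8, -3) by 90 degrees gives approximately (3, 8), which is why rounding rather than truncation is used here.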

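(4) Matching the feature points of the two images

After the steps above we have the feature points of both images, with the keypoints and the descriptors each stored in a vector. For two descriptor sets P and Q, compute the distance from each descriptor in P to the descriptors in Q. The distance is obtained by XOR-ing the two descriptors bit by bit and summing the resulting bits; this is the Hamming distance. DMatch is used to store the match information.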
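
The XOR-and-sum definition of the Hamming distance can be written very compactly with `std::bitset`. The sketch below is only an illustration under that assumption: the assignment code stores descriptors as `DescType`, not as bitsets, and `Descriptor`, `hammingDistance` and `nearestDescriptor` are names invented here.

```cpp
#include <bitset>
#include <cassert>
#include <cstddef>
#include <vector>

using Descriptor = std::bitset<256>;

// XOR leaves a 1 wherever the two descriptors differ; count() sums those bits.
std::size_t hammingDistance(const Descriptor &a, const Descriptor &b) {
    return (a ^ b).count();
}

// Index of the descriptor in `candidates` closest to `query`
// (the first one wins on ties).
std::size_t nearestDescriptor(const Descriptor &query,
                              const std::vector<Descriptor> &candidates) {
    assert(!candidates.empty());
    std::size_t best = 0;
    std::size_t best_dist = hammingDistance(query, candidates[0]);
    for (std::size_t j = 1; j < candidates.size(); ++j) {
        const std::size_t d = hammingDistance(query, candidates[j]);
        if (d < best_dist) {
            best_dist = d;
            best = j;
        }
    }
    return best;
}
```

Calling `nearestDescriptor` once for every descriptor in P against all of Q is the brute-force search described above; a distance threshold can then reject weak nearest neighbours.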

2.01 Main program

int main(int argc, char **argv) {

// 1.load image

cv::Mat first_image = cv::imread(first_file, 0); // load grayscale image

cv::Mat second_image = cv::imread(second_file, 0); // load grayscale image

// plot the image

cv::imshow("first image", first_image);

cv::imshow("second image", second_image);

cv::waitKey(0);

// 2. Extract FAST keypoints; at this point they are not rotation invariant

vector<cv::KeyPoint> keypoints;

cv::FAST(first_image, keypoints, 40);

cout << "keypoints: " << keypoints.size() << endl;

// Adding the intensity centroid angle turns them into Oriented FAST keypoints

computeAngle(first_image, keypoints); // updates keypoints in place

vector<cv::KeyPoint> keypoints2;

cv::FAST(second_image, keypoints2, 40);

cout << "keypoints2: " << keypoints2.size() << endl;

computeAngle(second_image, keypoints2);

// 3. Compute the descriptors

vector<DescType> descriptors;

vector<DescType> descriptors2;

computeORBDesc(first_image, keypoints, descriptors);

computeORBDesc(second_image, keypoints2, descriptors2);

// plot the keypoints

cv::Mat image_show;

cv::drawKeypoints(first_image, keypoints, image_show, cv::Scalar::all(-1),

cv::DrawMatchesFlags::DRAW_RICH_KEYPOINTS);

cv::imshow("features", image_show);

cv::imwrite("feat1.png", image_show);

cv::waitKey(0);

// 4. Brute-force matching

vector<cv::DMatch> matches;

bfMatch(descriptors, descriptors2, matches);

cout << "matches: " << matches.size() << endl;

// plot the matches

cv::drawMatches(first_image, keypoints, second_image, keypoints2, matches, image_show);

cv::imshow("matches", image_show);

cv::imwrite("matches.png", image_show);

cv::waitKey(0);

cout << "done." << endl;

return 0;

}

2.1 ORB extraction

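- Because a 16x16 image patch is taken, the patch of a point near the border (for example u < 8) may fall outside the image, so check whether the point is near the border and skip such points.

- Because of how the moments are defined, when the keypoints are drawn the angle always appears to point toward the brighter part of the image.

- std::atan and std::atan2 return the rotation angle in radians, but OpenCV uses degrees, so convert the result when using the std::atan family of functions.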
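
The last hint is worth a small experiment, because `std::atan(m01 / m10)` and `std::atan2(m01, m10)` do not agree in every quadrant. The sketch below converts the moments to an angle in degrees; `orientationDegrees` is a hypothetical helper, and wrapping the result into [0, 360) is an assumption made here to follow the `cv::KeyPoint::angle` convention.

```cpp
#include <cassert>
#include <cmath>

// Orientation in degrees, in [0, 360), from the first-order moments.
float orientationDegrees(float m01, float m10) {
    // The direction is undefined when both moments are zero.
    assert(m01 != 0.f || m10 != 0.f);
    const float kRadToDeg = 180.f / 3.14159265358979f;
    // atan2 looks at the signs of both arguments, so it returns the correct
    // quadrant and also works when m10 is zero.
    float deg = std::atan2(m01, m10) * kRadToDeg;  // in (-180, 180]
    if (deg < 0.f) deg += 360.f;
    return deg;
}
```

For m01 = 1 and m10 = -1 this yields about 135 degrees, whereas `std::atan(1.f / -1.f)` corresponds to -45 degrees: the single-argument form cannot tell the second quadrant from the fourth.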

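Idea: extract FAST keypoints. Plain FAST keypoints are not rotation invariant, meaning that once the image is rotated they can no longer be matched correctly, so the intensity centroid method is used to add rotation invariance.

0. Read in the images.

1. Define a container of cv::KeyPoint named keypoints and extract keypoints with the cv::FAST function. The first argument is the input image and the second is the keypoints container just defined, which stores the extracted keypoints. At this point they are not rotation invariant.

2. Compute the angle with the intensity centroid algorithm. cv::KeyPoint has a member variable angle, so the computed centroid angle is stored in keypoints, as kp.angle in the program.

The code written according to the intensity centroid method is as follows: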

//compute the angle

void computeAngle(const cv::Mat &image, vector<cv::KeyPoint> &keypoints) {

int half_patch_size = 8;

int half_boundary = 16;

for (auto &kp : keypoints) {

// Check if keypoint is too close to the edges

if (kp.pt.x < half_boundary || kp.pt.y < half_boundary ||

kp.pt.x >= image.cols - half_boundary || kp.pt.y >= image.rows - half_boundary){

continue;

}

// Compute angle from a 16x16 patch

float m01 = 0, m10 = 0;

for (int dx = -half_patch_size; dx < half_patch_size; ++dx) {

for (int dy = -half_patch_size; dy < half_patch_size; ++dy) {

uchar pixel = image.at<uchar>(kp.pt.y + dy, kp.pt.x + dx);

m10 += dx * pixel;

m01 += dy * pixel;

}

}

kp.angle = atan2(m01, m10) * 180.f / M_PI; // atan2 keeps the quadrant and handles m10 == 0

}

}
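
To see that the centroid angle points toward the brighter side, the same moments can be computed on a tiny synthetic patch without OpenCV. This is a sketch under stated assumptions: `centroidAngleDegrees` is a hypothetical helper, the patch is a square `patch[y][x]` array, and offsets are measured from the exact patch centre (the function above uses offsets from -8 to 7 instead).

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Intensity centroid angle in degrees for a square patch indexed as patch[y][x].
float centroidAngleDegrees(const std::vector<std::vector<std::uint8_t>> &patch) {
    const std::size_t n = patch.size();
    assert(n > 0);
    const float center = (static_cast<float>(n) - 1.f) / 2.f;
    float m10 = 0.f, m01 = 0.f;
    for (std::size_t y = 0; y < n; ++y) {
        assert(patch[y].size() == n);
        for (std::size_t x = 0; x < n; ++x) {
            m10 += (static_cast<float>(x) - center) * patch[y][x];  // x moment
            m01 += (static_cast<float>(y) - center) * patch[y][x];  // y moment
        }
    }
    // Radians to degrees, as OpenCV expects.
    return std::atan2(m01, m10) * 180.f / 3.14159265358979f;
}
```

A 4x4 patch whose right half is bright gives an angle of 0 degrees (pointing along +x), and one whose bottom half is bright gives 90 degrees (pointing along +y, which is downward in image coordinates).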

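2.2 ORB description

Complete the computeORBDesc function to implement this computation. Note that the choice of p and q is usually fixed (this is called the ORB pattern); selecting them randomly every time would make the descriptor unstable. The global variable ORB_pattern defines the p, q pairs in the format $u_p, v_p, u_q, v_q$. Compute the ORB descriptor according to the given pattern.

Hints:

- p and q also need a boundary check, otherwise they may fall outside the image. If they do, set this descriptor to empty.

- When calling cos and sin, again pay attention to the conversion between radians and degrees.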
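
Both hints can be combined in one small helper, sketched below with only the standard library. `rotatedSample` and its parameters are hypothetical names: it takes the keypoint position, the angle in degrees as stored in `kp.angle`, and one pattern offset, and reports whether the rotated sample lies inside the image. Rounding to the nearest pixel is an assumption of this sketch.

```cpp
#include <cassert>
#include <cmath>

// Rotates the pattern offset (off_x, off_y) by angle_deg around the keypoint
// (kp_x, kp_y). Returns false when the sample falls outside a cols x rows
// image; otherwise writes the pixel coordinates to out_x / out_y.
bool rotatedSample(float kp_x, float kp_y, float angle_deg, int off_x, int off_y,
                   int cols, int rows, int &out_x, int &out_y) {
    assert(cols > 0 && rows > 0);
    // cos and sin expect radians, while the stored angle is in degrees.
    const float theta = angle_deg * 3.14159265358979f / 180.f;
    const float c = std::cos(theta), s = std::sin(theta);
    const long x = std::lround(kp_x + c * off_x - s * off_y);
    const long y = std::lround(kp_y + s * off_x + c * off_y);
    if (x < 0 || y < 0 || x >= cols || y >= rows) return false;
    out_x = static_cast<int>(x);
    out_y = static_cast<int>(y);
    return true;
}
```

When the function returns false for either point of a pair, the caller would clear the current descriptor and move on to the next keypoint, as the first hint suggests.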

int ORB_pattern[256 * 4] = {

8, -3, 9, 5 /*mean (0), correlation (0)*/,

4, 2, 7, -12 /*mean (1.12461e-05), correlation (0.0437584)*/,

-11, 9, -8, 2 /*mean (3.37382e-05), correlation (0.0617409)*/,

7, -12, 12, -13 /*mean (5.62303e-05), correlation (0.0636977)*/,

......

}

// compute the descriptor

void computeORBDesc(const cv::Mat &image, vector<cv::KeyPoint> &keypoints,

vector<DescType> &desc) {

for (auto &kp : keypoints) {

DescType d(256, false);

for (int i = 0; i < 256; i++) {

int u = int(kp.pt.x), v = int(kp.pt.y);

// Check if keypoint is too close to the edges

if (u < 16 || v < 16 || u >= image.cols - 16 || v >= image.rows - 16) {

d.clear();

break;

}

else {

float angle = kp.angle / 180.f * M_PI;

float cos_theta = cos(angle);

float sin_theta = sin(angle);

cv::Point2f p(ORB_pattern[i*4], ORB_pattern[i * 4 + 1]);

cv::Point2f q(ORB_pattern[i*4 + 2], ORB_pattern[i * 4 + 3]);

// rotate with theta around z

cv::Point2f pp = cv::Point2f(cos_theta * p.x - sin_theta * p.y, sin_theta * p.x + cos_theta * p.y) + kp.pt;

cv::Point2f qq = cv::Point2f(cos_theta * q.x - sin_theta * q.y, sin_theta * q.x + cos_theta * q.y) + kp.pt;

// Check if the rotated pair is out of bounds

if(pp.x<0 || pp.y<0 || pp.x>=image.cols || pp.y>=image.rows ||

qq.x<0 || qq.y<0 || qq.x>=image.cols || qq.y>=image.rows){

d.clear(); // mark only this descriptor as invalid; desc.clear() would erase every descriptor computed so far

break;

}

// Compare the pair

d[i] = image.at<uchar>(pp.y, pp.x) < image.at<uchar>(qq.y, qq.x);

}

}

desc.push_back(d);

}

int bad = 0;

for (auto &d : desc) {

if (d.empty())

bad++;

}

cout << "bad/total: " << bad << "/" << desc.size() << endl;

}

2.3 Brute-force matching

void bfMatch(const vector<DescType> &desc1, const vector<DescType> &desc2,

vector<cv::DMatch> &matches) {

int d_max = 50;

// Find matches between desc1 and desc2

for(int i = 0; i < desc1.size(); i++) {

if (desc1[i].empty()) {

continue;

}

// Find the closest match for desc1 from desc2

float min_dist = 256;

int min_desc_idx = -1;

for (int j = 0; j < desc2.size(); j++) {

if (desc2[j].empty()) {

continue;

}

// Compute hamming distance

int distance = 0;

for (int k = 0; k < 256; ++k) {

//distance += (desc1[i][k] != desc2[j][k]) ? 1: 0;

distance += (desc1[i][k]) ^ (desc2[j][k]);

}

// Update closest match

if (distance < min_dist) {

min_dist = distance;

min_desc_idx = j;

}

}

// Keep the match only if the minimum distance is below the threshold

if (min_dist < d_max) {

cv::DMatch match;

match.distance = min_dist;

match.queryIdx = i;

match.trainIdx = min_desc_idx;

matches.push_back(match);

}

}

}

2.4 Multithreaded ORB

Skipped for now: it involves the newer C++17 standard, which I have not learned yet.
