First, read in a labeled txt file whose contents look like this:

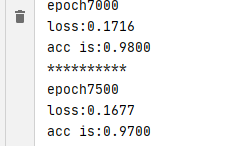
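The script in this post only relies on each line holding three whitespace-separated numbers: two feature values followed by a 0/1 label. As a minimal sketch of that parsing step, the snippet below uses a few made-up sample lines (the values are hypothetical, not taken from TestSet.txt, and `parse_samples` is an illustrative helper name):

```python
# Hypothetical sample lines in the assumed "x1 x2 label" layout.
sample_text = """\
-0.5  4.2  0
 1.3  7.9  1
 0.8  2.1  0
"""


def parse_samples(text):
    """Turn whitespace-separated lines into (x1, x2, label) float tuples."""
    rows = [line.split() for line in text.splitlines() if line.strip()]
    return [(float(r[0]), float(r[1]), float(r[2])) for r in rows]


samples = parse_samples(sample_text)
features = [(s[0], s[1]) for s in samples]  # model inputs
labels = [s[2] for s in samples]            # 0.0 or 1.0 targets
print(features)
print(labels)
```

Skipping blank lines while parsing avoids an index error if the file ends with an empty line.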

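Next, build a logistic regression model for binary classification and fit it to the data. The result is plotted as a figure showing the two classes and the learned decision boundary.

The code is as follows: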

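    import torch
    import numpy as np
    import matplotlib.pyplot as plt
    from torch import nn
    from torch import optim
    import os
    # Deprecated no-op since PyTorch 0.4; tensors can be passed to the model directly.
    from torch.autograd import Variable

    # Works around the "OMP: Error #15" duplicate OpenMP runtime crash on some installs.
    os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

    # Each line holds two feature values and a label (0 or 1).
    # The raw string keeps the backslashes in the Windows path literal.
    with open(r'E:\code\TestSet.txt', 'r') as f:
        data_list = f.readlines()

    # Skip blank lines so a trailing newline does not cause an IndexError.
    data_list = [i.strip().split() for i in data_list if i.strip()]
    data = [(float(i[0]), float(i[1]), float(i[2])) for i in data_list]

    # Split the samples by label so each class can be plotted in its own color.
    x0 = list(filter(lambda x: x[-1] == 0.0, data))
    x1 = list(filter(lambda x: x[-1] == 1.0, data))
    plot_x0_0 = [i[0] for i in x0]
    plot_x0_1 = [i[1] for i in x0]
    plot_x1_0 = [i[0] for i in x1]
    plot_x1_1 = [i[1] for i in x1]

    x_data = [(k[0], k[1]) for k in data]
    y_data = [k[2] for k in data]
    print(x_data)
    print(y_data)

    x_data = torch.tensor(x_data, dtype=torch.float32)
    # unsqueeze(1) gives shape (N, 1), matching the model output expected by BCELoss.
    y_data = torch.tensor(y_data, dtype=torch.float32).unsqueeze(1)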

    class LogisticRegression(nn.Module):
        def __init__(self):
            super(LogisticRegression, self).__init__()
            self.linear = nn.Linear(2, 1)  # two input features, one output
            self.sm = nn.Sigmoid()

        def forward(self, x):
            x = self.linear(x)
            x = self.sm(x)
            return x

    model = LogisticRegression()
    criterion = nn.BCELoss()
    optimizer = optim.SGD(model.parameters(), lr=1e-3, momentum=0.9)
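To make the model and loss defined above more concrete: the linear layer followed by a sigmoid computes p = sigmoid(w0*x0 + w1*x1 + b), and binary cross-entropy with mean reduction (the default for `nn.BCELoss`) averages -[y*log(p) + (1-y)*log(1-p)] over the samples. Here is a minimal pure-Python sketch of both formulas. The weights, bias, and inputs are hypothetical values chosen only for illustration, and the helper names are not part of PyTorch:

```python
import math


def sigmoid(z):
    """Logistic function: maps any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(x, w, b):
    """Probability of class 1 for a two-feature sample x."""
    return sigmoid(w[0] * x[0] + w[1] * x[1] + b)


def bce_loss(probs, targets):
    """Mean binary cross-entropy over all samples."""
    total = 0.0
    for p, y in zip(probs, targets):
        total += -(y * math.log(p) + (1.0 - y) * math.log(1.0 - p))
    return total / len(probs)


# Hypothetical parameters and samples, for illustration only.
w, b = (0.5, -0.25), 0.1
xs = [(1.0, 2.0), (-1.0, 0.5)]
ys = [1.0, 0.0]

probs = [predict_proba(x, w, b) for x in xs]
print(probs, bce_loss(probs, ys))
```

An untrained model that outputs 0.5 for every sample has a loss of ln 2 (about 0.693), which is a useful baseline when reading the printed loss values.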

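    for epoch in range(7500):
        # Forward pass on the full data set (tensors are used directly,
        # no Variable wrapper is needed since PyTorch 0.4).
        out = model(x_data)
        loss = criterion(out, y_data)
        print_loss = loss.item()

        # Threshold the predicted probabilities at 0.5 to get class labels.
        mask = out.ge(0.5).float()
        correct = (mask == y_data).sum()
        acc = correct.item() / x_data.size(0)

        # Backward pass and parameter update.
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        # Report progress every 500 epochs.
        if (epoch + 1) % 500 == 0:
            print('*' * 10)
            print('epoch{}'.format(epoch + 1))
            print('loss:{:.4f}'.format(print_loss))
            print('acc is:{:.4f}'.format(acc))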
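What each iteration of the training loop does can be sketched without autograd. For a sigmoid output with mean binary cross-entropy, the gradient is the average of (p - y)*x for the weights and the average of (p - y) for the bias. The momentum update keeps a running velocity, v = momentum*v + g, and then steps by -lr*v. The toy data, learning rate, and step count below are assumptions for illustration, not the values used on TestSet.txt:

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical, linearly separable toy data: (x0, x1) with a 0/1 label.
xs = [(-2.0, -1.0), (-1.0, -2.0), (1.0, 2.0), (2.0, 1.0)]
ys = [0.0, 0.0, 1.0, 1.0]

w, b = [0.0, 0.0], 0.0      # parameters
v_w, v_b = [0.0, 0.0], 0.0  # momentum buffers
lr, momentum = 0.1, 0.9
n = len(xs)

for step in range(200):
    # Forward pass: predicted probability for every sample.
    probs = [sigmoid(w[0] * x[0] + w[1] * x[1] + b) for x in xs]
    # Gradient of the mean BCE loss with respect to each parameter.
    g_w = [sum((p - y) * x[j] for p, y, x in zip(probs, ys, xs)) / n
           for j in range(2)]
    g_b = sum(p - y for p, y in zip(probs, ys)) / n
    # Momentum update: accumulate velocity, then step against it.
    v_w = [momentum * v + g for v, g in zip(v_w, g_w)]
    v_b = momentum * v_b + g_b
    w = [wj - lr * vj for wj, vj in zip(w, v_w)]
    b = b - lr * v_b

# Accuracy with the same 0.5 threshold as the training script.
probs = [sigmoid(w[0] * x[0] + w[1] * x[1] + b) for x in xs]
preds = [1.0 if p >= 0.5 else 0.0 for p in probs]
accuracy = sum(pr == y for pr, y in zip(preds, ys)) / n
print(w, b, accuracy)
```

In the PyTorch script, `loss.backward()` computes these gradients automatically and `optimizer.step()` applies the update, so none of this has to be written by hand.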

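    # Read the learned parameters back out of the linear layer.
    w0, w1 = model.linear.weight[0]
    w0 = w0.item()
    w1 = w1.item()
    b = model.linear.bias.item()

    # The decision boundary is where w0*x + w1*y + b = 0, i.e. y = (-w0*x - b) / w1.
    plot_x = np.arange(-4, 4, 0.1)
    plot_y = (-w0 * plot_x - b) / w1

    plt.plot(plot_x0_0, plot_x0_1, 'ro', label='x0')
    plt.plot(plot_x1_0, plot_x1_1, 'bo', label='x1')
    plt.plot(plot_x, plot_y, 'g', label='decision boundary')
    plt.legend(loc='best')
    plt.show()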
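The green line drawn by the plotting code is the set of points where the model outputs exactly 0.5. Since sigmoid(z) equals 0.5 only at z = 0, the boundary satisfies w0*x + w1*y + b = 0, which gives y = (-w0*x - b)/w1 whenever w1 is nonzero. Here is a small check of that algebra, using hypothetical parameter values rather than the trained ones (`boundary_y` is an illustrative helper):

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def boundary_y(x, w0, w1, b):
    """y-coordinate of the decision boundary at a given x (requires w1 != 0)."""
    if w1 == 0:
        raise ValueError("w1 is zero: the boundary is a vertical line")
    return (-w0 * x - b) / w1


# Hypothetical parameters, for illustration only.
w0, w1, b = 0.8, -1.5, 0.3

for x in (-4.0, 0.0, 4.0):
    y = boundary_y(x, w0, w1, b)
    p = sigmoid(w0 * x + w1 * y + b)
    print("x={:.1f}  y={:.4f}  p={:.4f}".format(x, y, p))
```

Points on one side of the line get p above 0.5 and are assigned to class 1; points on the other side are assigned to class 0.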

Reference: 《深度学习入门之PyTorch》 ("Introduction to Deep Learning with PyTorch"), by Liao Xingyu (廖星宇).
