Tuning the parameters

This walkthrough uses the CIFAR-10 dataset as its example.

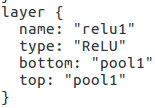

Activation functions

Sigmoid, ReLU, TanH, Absolute Value, Power, BNLL
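As a quick reference for what these six layers compute, here is a minimal pure-Python sketch of the element-wise functions. It is an illustration only, not Caffe's implementation: the function names are made up for this example, ReLU is shown without its optional negative slope, and the Power settings mirror the `power: 2, scale: 1, shift: 0` values used later in this post.

```python
import math


def sigmoid(x):
    # 1 / (1 + e^-x): squashes the input into (0, 1)
    return 1.0 / (1.0 + math.exp(-x))


def relu(x):
    # max(0, x): passes positive inputs, zeroes out negative ones
    return max(0.0, x)


def tanh(x):
    # hyperbolic tangent: squashes the input into (-1, 1)
    return math.tanh(x)


def abs_val(x):
    # |x|
    return abs(x)


def power_layer(x, power=1.0, scale=1.0, shift=0.0):
    # (shift + scale * x) ^ power
    return (shift + scale * x) ** power


def bnll(x):
    # log(1 + e^x), written in a form that avoids overflow for large x
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


# Hypothetical lookup table keyed by the Caffe layer type strings.
ACTIVATIONS = {
    "Sigmoid": sigmoid,
    "ReLU": relu,
    "TanH": tanh,
    "AbsVal": abs_val,
    "Power": lambda x: power_layer(x, power=2, scale=1, shift=0),
    "BNLL": bnll,
}

if __name__ == "__main__":
    for name, fn in ACTIVATIONS.items():
        print(name, [round(fn(v), 4) for v in (-2.0, 0.0, 2.0)])
```

Running the script prints each function evaluated at -2, 0 and 2, which makes the different output ranges easy to compare.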

Let's check which activation function the CIFAR-10 example uses.

cifar10_quick.prototxt

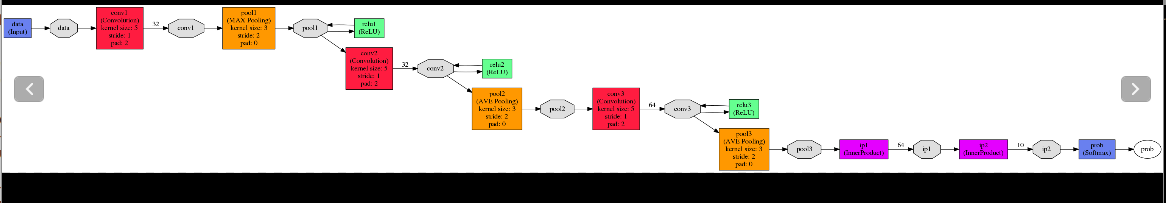

A more intuitive approach is to "draw" the training network.

Go back to the Caffe root directory, then run:

    cd python
    python draw_net.py ../examples/cifar10/cifar10_quick.prototxt cifar10.png

From the drawing we can clearly see that the ReLU activation function is used three times in this network.
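The same count can be cross-checked in text form without drawing the network. The sketch below is a rough illustration under stated assumptions: `SAMPLE_PROTOTXT` is an abbreviated stand-in written for this example rather than the real `cifar10_quick.prototxt`, `count_activation_layers` is a hypothetical helper, and the regex is a simple heuristic, not a real prototxt parser.

```python
import re
from collections import Counter

# The six activation layer type strings discussed in this post.
ACTIVATION_TYPES = {"ReLU", "Sigmoid", "TanH", "AbsVal", "Power", "BNLL"}

# Abbreviated stand-in network (an assumption for this example, not the real file).
SAMPLE_PROTOTXT = '''
layer { name: "conv1" type: "Convolution" bottom: "data" top: "conv1"
        convolution_param { weight_filler { type: "gaussian" } } }
layer { name: "pool1" type: "Pooling" bottom: "conv1" top: "pool1" }
layer { name: "relu1" type: "ReLU" bottom: "pool1" top: "pool1" }
layer { name: "conv2" type: "Convolution" bottom: "pool1" top: "conv2" }
layer { name: "relu2" type: "ReLU" bottom: "conv2" top: "conv2" }
layer { name: "conv3" type: "Convolution" bottom: "conv2" top: "conv3" }
layer { name: "relu3" type: "ReLU" bottom: "conv3" top: "conv3" }
'''

# Matches every `type: "..."` field, including filler types, so results are
# filtered against ACTIVATION_TYPES afterwards.
TYPE_PATTERN = re.compile(r'\btype\s*:\s*"([^"]+)"')


def count_activation_layers(prototxt_text):
    """Count how often each known activation type appears in the text."""
    found = TYPE_PATTERN.findall(prototxt_text)
    return Counter(t for t in found if t in ACTIVATION_TYPES)


if __name__ == "__main__":
    print(count_activation_layers(SAMPLE_PROTOTXT))
```

To try it on a real network definition, read the file contents into a string and pass that string to `count_activation_layers`.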

Now let's try changing the activation function. The six activation layers available in Caffe are defined as follows:

layer {

name: "relu1"

type: "ReLU"

bottom: "pool1"

top: "pool1"

} layer {

name: "encode1neuron"

bottom: "encode1"

top: "encode1neuron"

type: "Sigmoid"

} layer {

name: "layer"

bottom: "in"

top: "out"

type: "TanH"

} layer {

name: "layer"

bottom: "in"

top: "out"

type: "AbsVal"

} layer {

name: "layer"

bottom: "in"

top: "out"

type: "Power"

power_param {

power: 2

scale: 1

shift: 0

}

} layer {

name: "layer"

bottom: "in"

top: "out"

type: “BNLL”

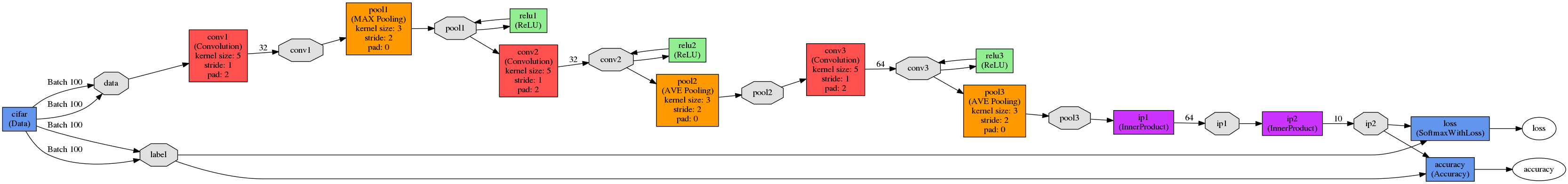

} 我们来看一下cifar10_quick_train_test.prototxt 的网络图

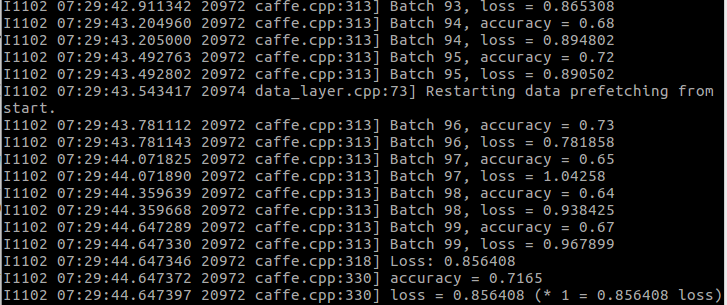

Before the change:

    ./build/tools/caffe test \
      -model examples/cifar10/cifar10_quick_train_test.prototxt \
      -weights examples/cifar10/cifar10_quick_iter_4000.caffemodel

Now let's try changing the activation functions in cifar10_quick_train_test.prototxt (in the cifar10 example directory) to Sigmoid:

    name: "CIFAR10_quick"
    layer {
      name: "cifar"
      type: "Data"
      top: "data"
      top: "label"
      include {
        phase: TRAIN
      }
      transform_param {
        mean_file: "examples/cifar10/mean.binaryproto"
      }
      data_param {
        source: "examples/cifar10/cifar10_train_lmdb"
        batch_size: 100
        backend: LMDB
      }
    }
    layer {
      name: "cifar"
      type: "Data"
      top: "data"
      top: "label"
      include {
        phase: TEST
      }
      transform_param {
        mean_file: "examples/cifar10/mean.binaryproto"
      }
      data_param {
        source: "examples/cifar10/cifar10_test_lmdb"
        batch_size:
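The ReLU-to-Sigmoid substitution described above can also be scripted rather than edited by hand. What follows is a minimal sketch under stated assumptions: `swap_relu_for_sigmoid` is a hypothetical helper, `SAMPLE_PROTOTXT` is a short fragment modeled on the `relu1` layer shown earlier rather than the full file, and the regex is a plain text substitution, not a prototxt parser.

```python
import re

# Short stand-in fragment (an assumption for this example, not the full network).
SAMPLE_PROTOTXT = '''
layer {
  name: "relu1"
  type: "ReLU"
  bottom: "pool1"
  top: "pool1"
}
layer {
  name: "conv2"
  type: "Convolution"
  bottom: "pool1"
  top: "conv2"
}
layer {
  name: "relu2"
  type: "ReLU"
  bottom: "conv2"
  top: "conv2"
}
'''

# Captures the `type:` prefix so that only the quoted value is rewritten.
RELU_TYPE = re.compile(r'(\btype\s*:\s*)"ReLU"')


def swap_relu_for_sigmoid(prototxt_text):
    """Return (new_text, number_of_layers_changed)."""
    return RELU_TYPE.subn(r'\1"Sigmoid"', prototxt_text)


if __name__ == "__main__":
    new_text, changed = swap_relu_for_sigmoid(SAMPLE_PROTOTXT)
    print("layers changed:", changed)
    print(new_text)
```

Only the `type` field is rewritten, so layer names such as `relu1` stay as they are; the name is just a label. Writing the result to a copy of the prototxt keeps the original ReLU network available for comparison.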

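Using the Caffe framework as the example, this post walks through tuning model parameters on the CIFAR-10 dataset: the choice of activation function (ReLU, Sigmoid, BNLL and others), learning-rate (lr) policies, the use of dropout with its pros and cons, and the role of batch normalization in model optimization. The experiments underline how much suitable parameters matter for model performance.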
