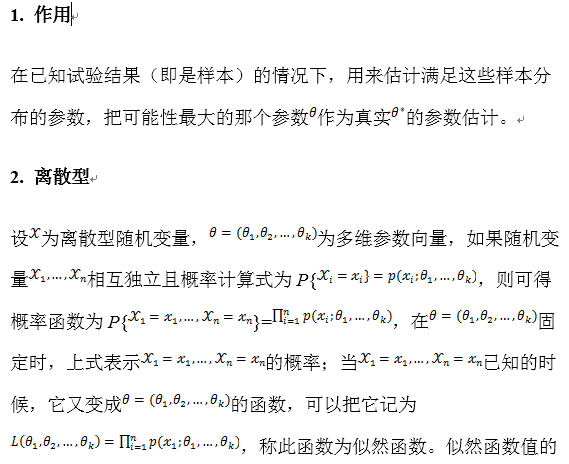

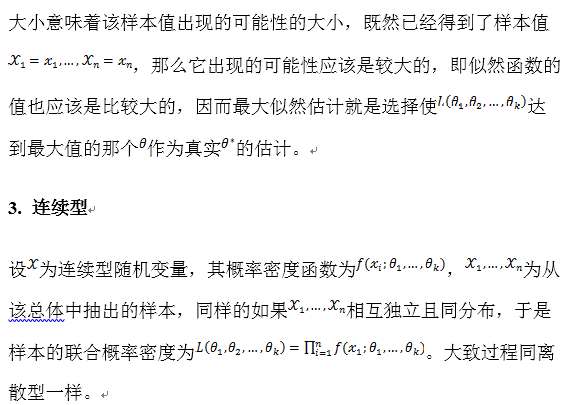

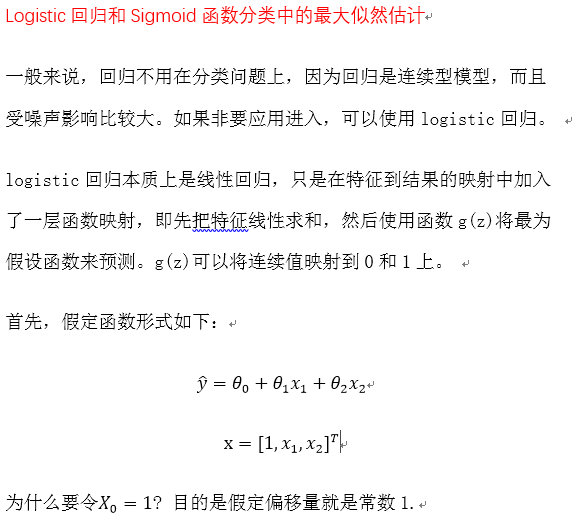

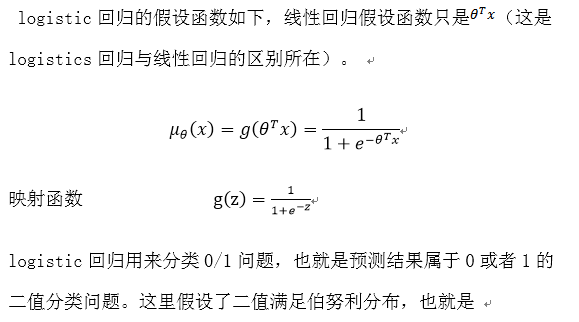

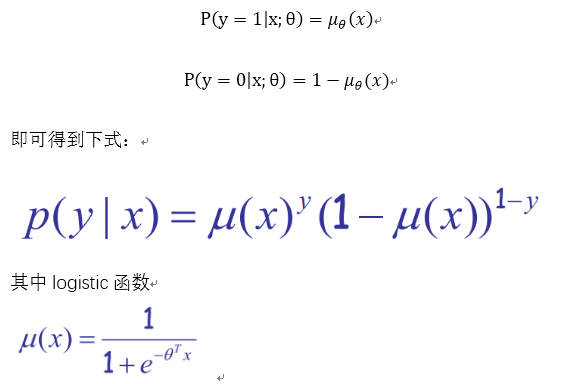

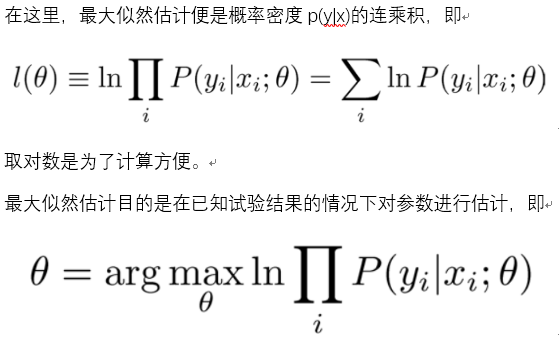

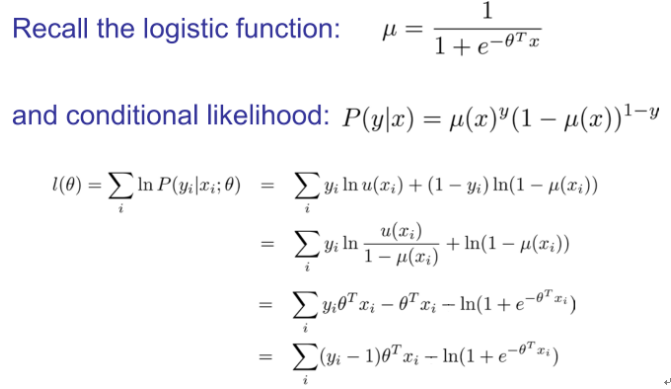

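Before turning to logistic regression, it helps to spell out what maximum likelihood estimation is; see MadTurtle's post 《最大似然估计学习总结》 (a study summary of maximum likelihood estimation).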
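As a small illustration of the idea (a sketch, not taken from the referenced post), maximum likelihood estimation picks the parameter value under which the observed data are most probable. The example below assumes a made-up sample of ten coin flips and searches a grid of candidate probabilities; the function names `bernoulli_log_likelihood` and `mle_by_grid_search` are hypothetical and chosen only for this example.

```python
import math


def bernoulli_log_likelihood(p, samples):
    """Log-likelihood of independent 0/1 samples when P(sample == 1) = p."""
    return sum(math.log(p) if x == 1 else math.log(1.0 - p) for x in samples)


def mle_by_grid_search(samples, num_points=999):
    """Return the grid value of p in (0, 1) with the highest log-likelihood."""
    # Candidate values 1/(num_points+1), 2/(num_points+1), ..., strictly inside (0, 1)
    grid = [(i + 1) / (num_points + 1) for i in range(num_points)]
    return max(grid, key=lambda p: bernoulli_log_likelihood(p, samples))


# Made-up data for illustration: 7 ones out of 10 flips
samples = [1, 0, 1, 1, 0, 1, 1, 1, 0, 1]
p_hat = mle_by_grid_search(samples)
sample_mean = sum(samples) / len(samples)
print(p_hat, sample_mean)
```

For this Bernoulli model the maximizer coincides with the sample mean, so the grid search is only there to make the "maximize the likelihood" step visible. Logistic regression applies the same principle, but the probability of each label depends on the input through the sigmoid function, so the maximum has to be found iteratively.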

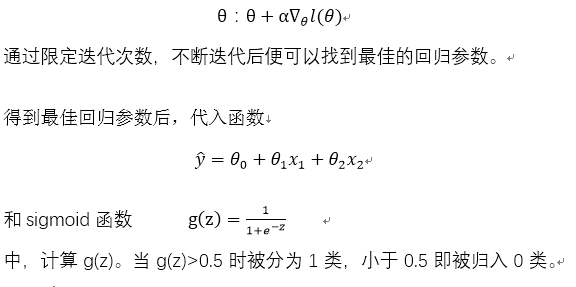
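For reference, here is a sketch of how the maximum likelihood view leads to a gradient ascent update. The notation is an assumption made here and may differ from the figures: $w$ is the weight vector, $X$ is the $m \times n$ data matrix with rows $x^{(i)}$, $y$ is the vector of 0/1 labels, and $\alpha$ is the step size.

$$
h_w(x) = \sigma(w^\top x) = \frac{1}{1 + e^{-w^\top x}}
$$

$$
\ell(w) = \sum_{i=1}^{m} \left[ y^{(i)} \log h_w(x^{(i)}) + \left(1 - y^{(i)}\right) \log\left(1 - h_w(x^{(i)})\right) \right]
$$

$$
\nabla_w \ell(w) = \sum_{i=1}^{m} \left( y^{(i)} - h_w(x^{(i)}) \right) x^{(i)} = X^\top (y - h)
$$

$$
w \leftarrow w + \alpha \, X^\top (y - h)
$$

Here $h$ is the vector of predictions $h_w(x^{(i)})$. Because the log-likelihood $\ell(w)$ is being maximized, the update moves along the gradient (ascent) rather than against it.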

The code for the gradient ascent algorithm is as follows:

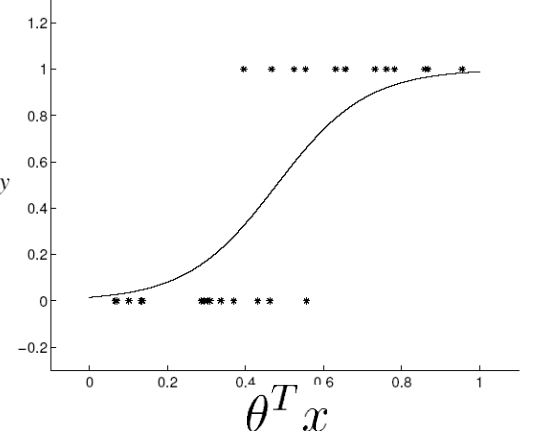

    from numpy import exp, mat, ones, shape

    def sigmoid(inX):
        # logistic function, applied element-wise
        return 1.0 / (1 + exp(-inX))

    def gradAscent(dataMatIn, classLabels):
        dataMatrix = mat(dataMatIn)              # convert to NumPy matrix
        labelMat = mat(classLabels).transpose()  # convert to NumPy column matrix
        m, n = shape(dataMatrix)
        alpha = 0.001                            # step size
        maxCycles = 500                          # number of iterations
        weights = ones((n, 1))
        for k in range(maxCycles):               # heavy on matrix operations
            h = sigmoid(dataMatrix * weights)    # matrix mult
            error = labelMat - h                 # vector subtraction
            weights = weights + alpha * dataMatrix.transpose() * error  # matrix mult
        return weights

References:

2010 Dragon Star Program (龙星计划) course slides

Machine Learning in Action (《机器学习实战》)
