Digit Recognition with a Convolutional Neural Network

1. Import the required libraries

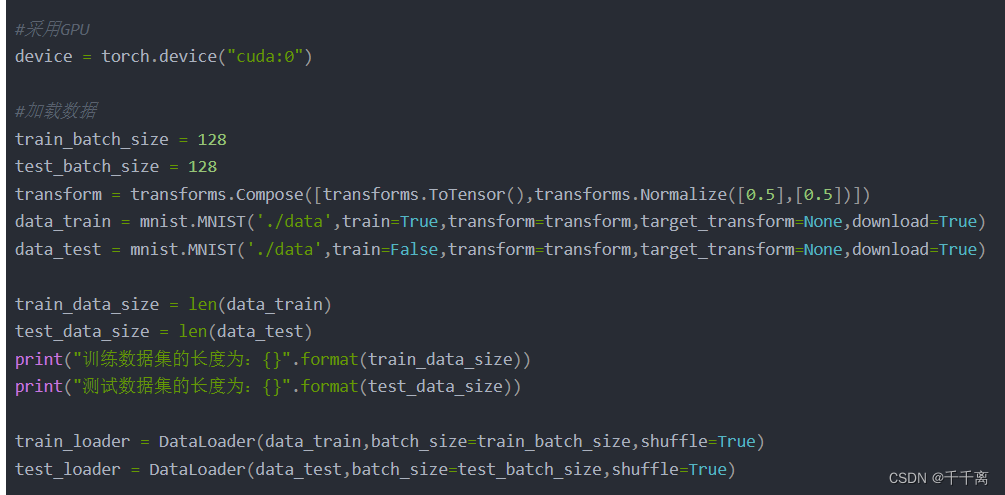

2. Load the data

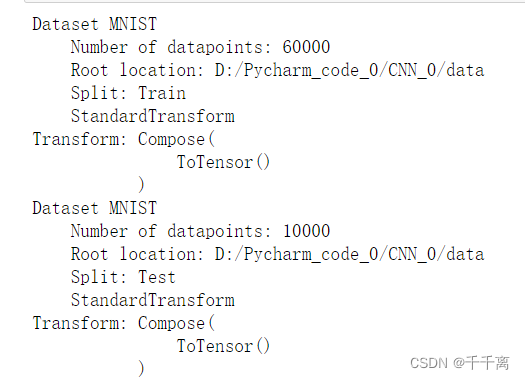

(Composition of the dataset)

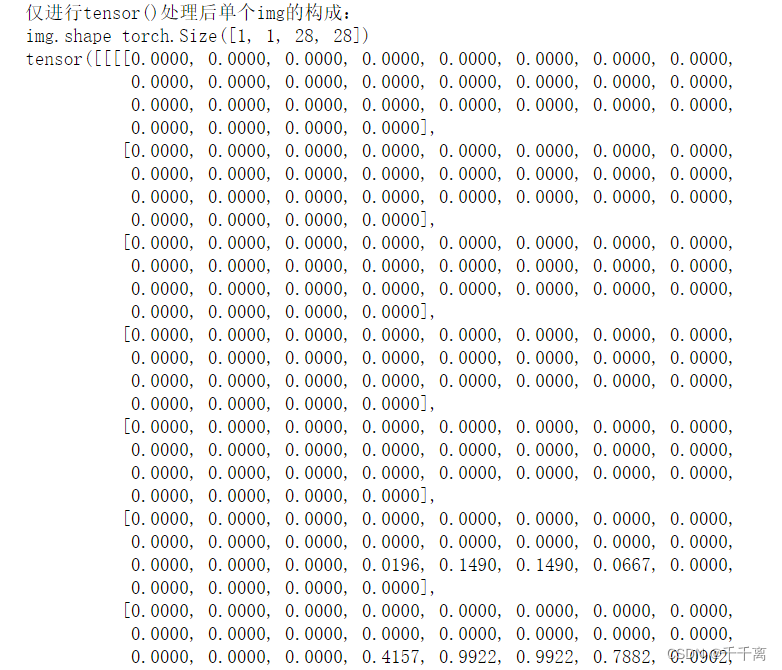

(The tensor() output is a single normalized image)

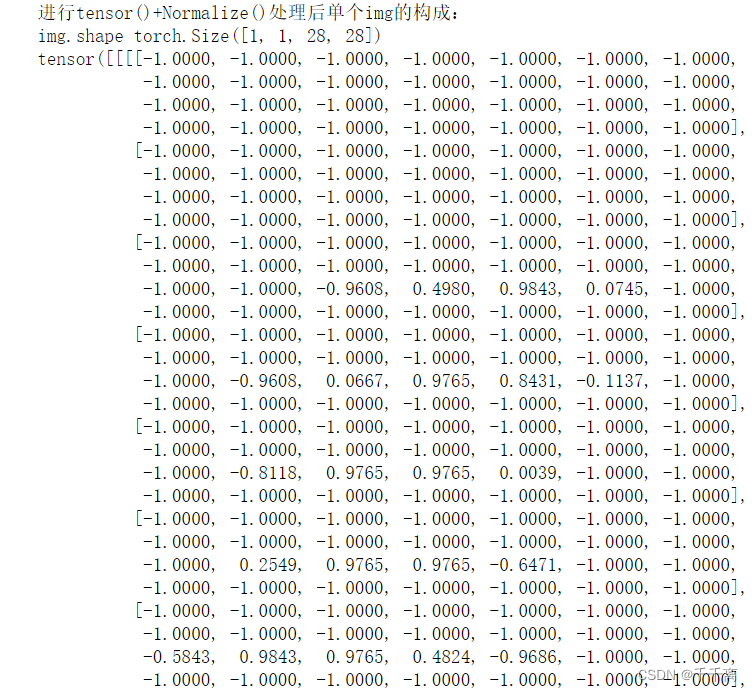

(After normalization)
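To make the normalization step concrete, here is a minimal plain-Python sketch. It assumes the transform is `ToTensor()` followed by `Normalize([0.5], [0.5])`, as in the complete code at the end of this post. The helper name `normalize_pixel` is hypothetical and exists only for this illustration.

```python
def normalize_pixel(raw, mean=0.5, std=0.5):
    """Mimic ToTensor followed by Normalize for one 8-bit pixel value."""
    scaled = raw / 255.0            # ToTensor: 0..255 -> 0.0..1.0
    return (scaled - mean) / std    # Normalize: (x - mean) / std


# Black, mid-gray and white pixels
for raw in (0, 128, 255):
    print(raw, "->", round(normalize_pixel(raw), 4))
```

With mean 0.5 and standard deviation 0.5, black pixels map to -1 and white pixels to 1, so the network sees inputs centered around zero.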

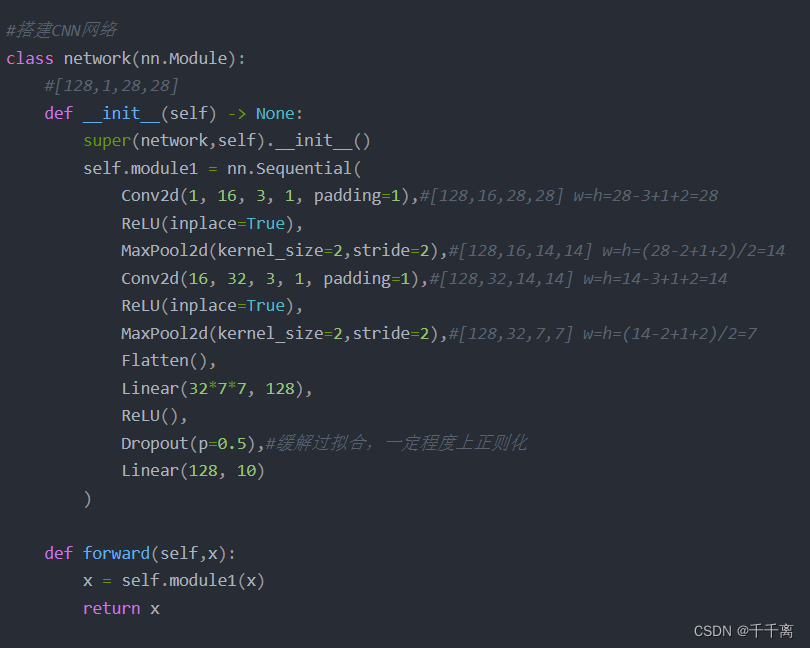

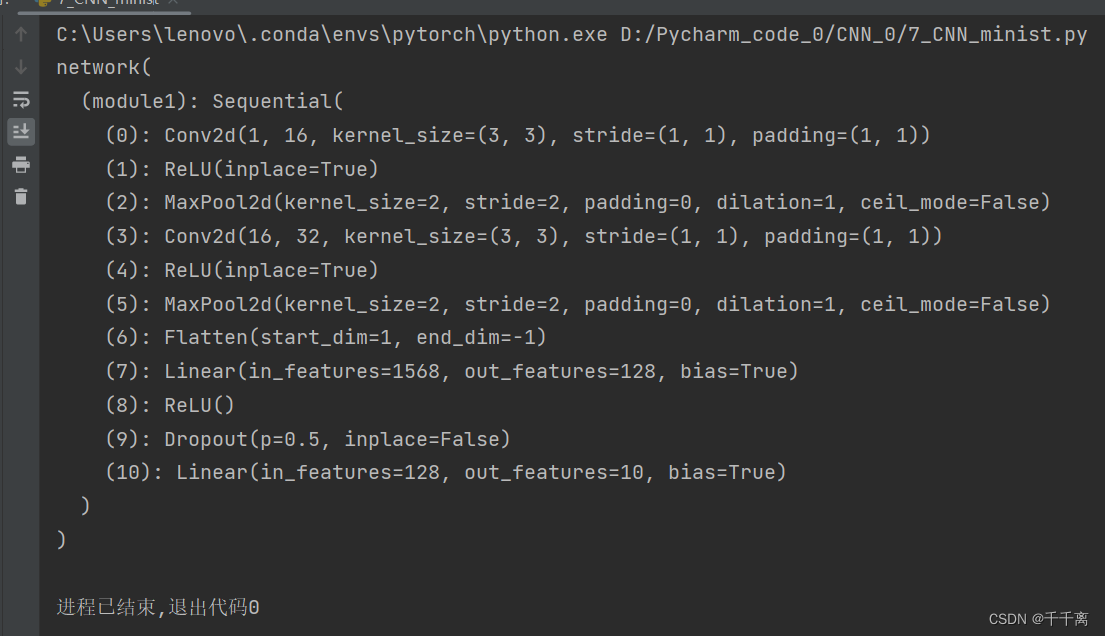

3. CNN skeleton

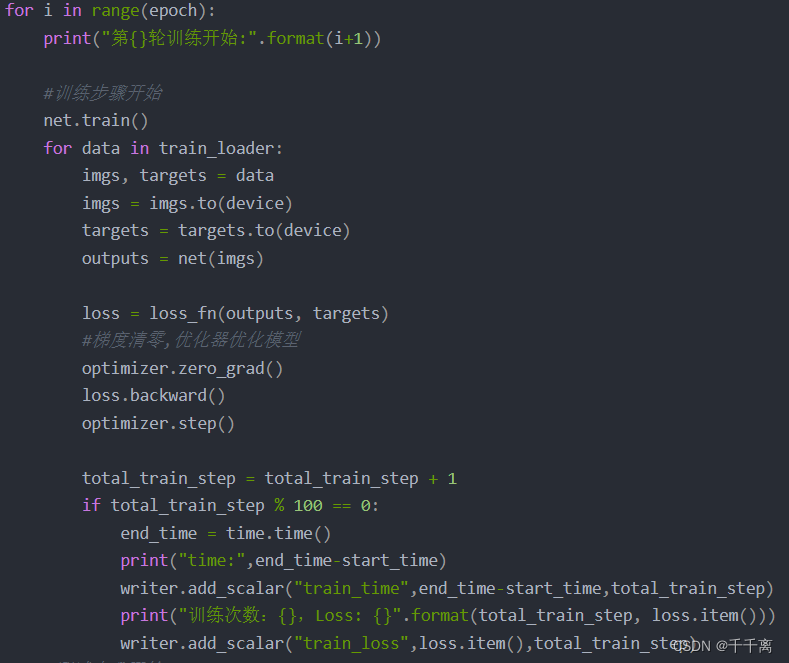

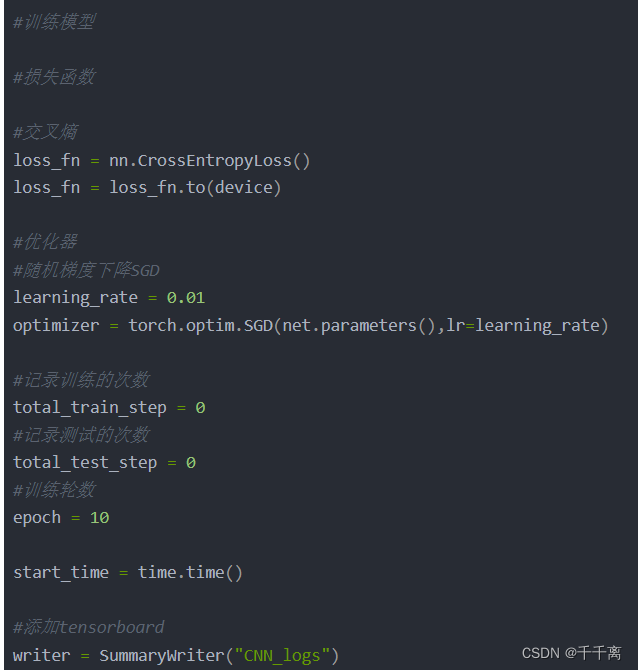
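The feature-map sizes of this network can be checked by hand with the usual output-size formula, floor((n + 2p - k) / s) + 1. The sketch below is plain Python, with the layer settings copied from the complete code. The helper `out_size` and the `layers` table are hypothetical names introduced for this illustration.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Output height/width of a conv or pooling layer: floor((n + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


# (layer description, kernel, stride, padding, output channels)
layers = [
    ("Conv2d(1, 16, 3, 1, padding=1)", 3, 1, 1, 16),
    ("MaxPool2d(kernel_size=2, stride=2)", 2, 2, 0, 16),
    ("Conv2d(16, 32, 3, 1, padding=1)", 3, 1, 1, 32),
    ("MaxPool2d(kernel_size=2, stride=2)", 2, 2, 0, 32),
]

size = 28      # MNIST images are 28x28
channels = 1   # grayscale input
for name, kernel, stride, padding, channels in layers:
    size = out_size(size, kernel, stride, padding)
    print(f"{name:36s} -> [{channels}, {size}, {size}]")

flat_features = channels * size * size  # input width of the first Linear layer
print("Flatten ->", flat_features)
```

The final value matches the `32*7*7` input size of the first `Linear` layer, which is why that layer is declared as `Linear(32*7*7, 128)`.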

4. Training

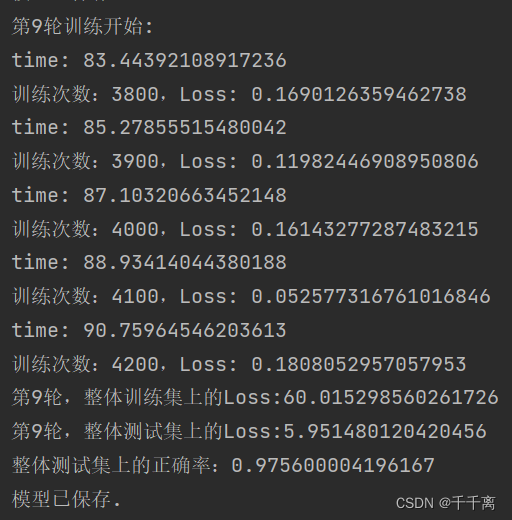

(Training, epoch 9)
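The loss printed during training comes from `nn.CrossEntropyLoss`, which takes the raw network outputs (logits) and the integer class labels, and by default averages the per-sample losses over the batch. As a rough illustration of the per-sample value, here is a plain-Python sketch. The function `cross_entropy` below is a hypothetical helper, not the PyTorch implementation.

```python
import math


def cross_entropy(logits, target):
    """Cross-entropy for one sample: -log(softmax(logits)[target])."""
    peak = max(logits)  # subtract the max before exponentiating, for numerical stability
    log_sum_exp = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum_exp - logits[target]


# An untrained 10-class model that scores every digit equally
uniform_loss = cross_entropy([0.0] * 10, target=3)

# A model that strongly prefers the correct class (index 9)
confident_loss = cross_entropy([0.0] * 9 + [5.0], target=9)

print(uniform_loss, confident_loss)
```

The uniform case equals ln(10), about 2.30, which is roughly where the training loss of a 10-class classifier starts before it has learned anything. The loss then falls toward zero as the correct class receives a higher score.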

5. Testing
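The test accuracy is the number of correct predictions summed over all test batches, divided by the size of the test set. The plain-Python sketch below mirrors what `(outputs.argmax(1) == targets).sum()` counts in the complete code. The helper `count_correct` and the tiny `batches` data are made up for illustration.

```python
def count_correct(outputs, targets):
    """Count rows whose highest-scoring class index equals the target label."""
    correct = 0
    for scores, label in zip(outputs, targets):
        predicted = max(range(len(scores)), key=scores.__getitem__)  # row-wise argmax
        correct += int(predicted == label)
    return correct


# Two toy batches of (class scores per sample, true labels)
batches = [
    ([[0.1, 2.0, -1.0], [1.5, 0.2, 0.3]], [1, 0]),
    ([[0.0, 0.1, 0.9]], [1]),
]

total_correct = sum(count_correct(outputs, targets) for outputs, targets in batches)
total_samples = sum(len(targets) for _, targets in batches)
accuracy = total_correct / total_samples
print(total_correct, total_samples, accuracy)
```

Dividing by the total number of samples rather than by the number of batches keeps the result correct even when the last batch is smaller than the others.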

6. Save the model
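The complete code saves the whole model object with `torch.save(net, ...)` and keeps a `state_dict` variant as a commented-out alternative. As a hedged sketch of that alternative, the example below saves only the parameters and loads them into a fresh model. The small `nn.Sequential` model and the file name `net_demo.pth` are stand-ins chosen for this illustration. The `network` class from this post would be handled the same way.

```python
import os
import tempfile

import torch
from torch import nn


def make_model():
    """Hypothetical stand-in for the article's `network` class."""
    return nn.Sequential(nn.Flatten(), nn.Linear(28 * 28, 10))


model = make_model()

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "net_demo.pth")
    torch.save(model.state_dict(), path)  # store only the parameter tensors

    restored = make_model()               # rebuild the architecture first
    restored.load_state_dict(torch.load(path, map_location="cpu"))

restored.eval()  # inference mode (matters for layers such as Dropout)
x = torch.randn(2, 1, 28, 28)
with torch.no_grad():
    same = torch.allclose(model(x), restored(x))
print(same)
```

Saving the `state_dict` keeps the file independent of how the class is pickled, at the cost of having to construct the model in code before loading the weights.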

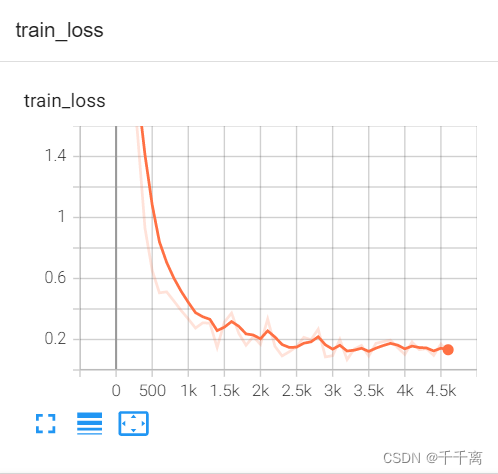

(Training loss curve)

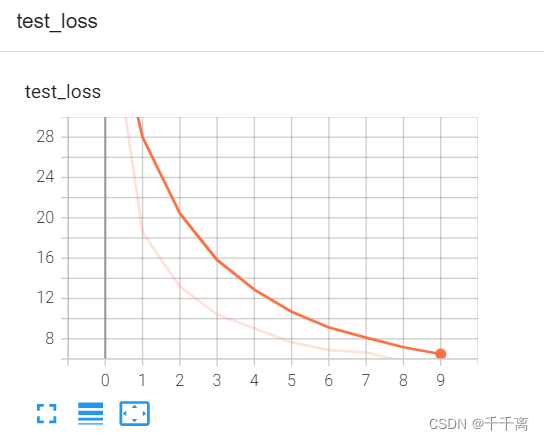

(Test loss curve)

(Test accuracy curve)

Complete code

import time

import torch
from matplotlib import pyplot as plt
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, ReLU, Dropout
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter
from torchvision import transforms
from torchvision.datasets import mnist

# Use the GPU when one is available; otherwise fall back to the CPU
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Load the data
train_batch_size = 128
test_batch_size = 128
# ToTensor scales pixels to [0, 1]; Normalize([0.5], [0.5]) then maps them to [-1, 1]
transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize([0.5], [0.5])])
data_train = mnist.MNIST('./data', train=True, transform=transform, target_transform=None, download=True)
data_test = mnist.MNIST('./data', train=False, transform=transform, target_transform=None, download=True)
train_data_size = len(data_train)
test_data_size = len(data_test)
print("Training set size: {}".format(train_data_size))
print("Test set size: {}".format(test_data_size))
train_loader = DataLoader(data_train, batch_size=train_batch_size, shuffle=True)
test_loader = DataLoader(data_test, batch_size=test_batch_size, shuffle=True)

# Visualize the data: show the first nine images of one test batch
examples = enumerate(test_loader)
batch_idx, (example_data, example_targets) = next(examples)
plt.figure(figsize=(9, 9))
for i in range(9):
    plt.subplot(3, 3, i+1)
    plt.title("Ground Truth:{}".format(example_targets[i]))
    plt.imshow(example_data[i][0], cmap='gray', interpolation='none')
    plt.xticks([])
    plt.yticks([])
plt.show()

# Build the CNN
class network(nn.Module):
    # Input batch shape: [128,1,28,28]
    def __init__(self) -> None:
        super(network, self).__init__()
        self.module1 = nn.Sequential(
            Conv2d(1, 16, 3, 1, padding=1),  # [128,16,28,28] w=h=(28-3+2*1)/1+1=28
            ReLU(inplace=True),
            MaxPool2d(kernel_size=2, stride=2),  # [128,16,14,14] w=h=(28-2)/2+1=14
            Conv2d(16, 32, 3, 1, padding=1),  # [128,32,14,14] w=h=(14-3+2*1)/1+1=14
            ReLU(inplace=True),
            MaxPool2d(kernel_size=2, stride=2),  # [128,32,7,7] w=h=(14-2)/2+1=7
            Flatten(),
            Linear(32*7*7, 128),
            ReLU(),
            Dropout(p=0.5),  # Mitigates overfitting; acts as a form of regularization
            Linear(128, 10)
        )

    def forward(self, x):
        x = self.module1(x)
        return x

net = network()
net = net.to(device)
print("Model structure:")
print(net)

# Train the model
# Loss function: cross-entropy
loss_fn = nn.CrossEntropyLoss()
loss_fn = loss_fn.to(device)
# Optimizer: stochastic gradient descent (SGD)
learning_rate = 0.01
optimizer = torch.optim.SGD(net.parameters(), lr=learning_rate)
# Number of training steps so far
total_train_step = 0
# Number of test passes so far
total_test_step = 0
# Number of training epochs
epoch = 10
start_time = time.time()
# Add TensorBoard logging
writer = SummaryWriter("CNN_logs")

for i in range(epoch):
    print("Epoch {} training starts:".format(i+1))
    # Training phase
    net.train()
    total_train_loss = 0
    for data in train_loader:
        imgs, targets = data
        imgs = imgs.to(device)
        targets = targets.to(device)
        outputs = net(imgs)
        loss = loss_fn(outputs, targets)
        total_train_loss = total_train_loss + loss.item()
        # Zero the gradients, backpropagate, and let the optimizer update the model
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        total_train_step = total_train_step + 1
        if total_train_step % 100 == 0:
            end_time = time.time()
            print("time:", end_time-start_time)
            writer.add_scalar("train_time", end_time-start_time, total_train_step)
            print("Training step: {}, Loss: {}".format(total_train_step, loss.item()))
            writer.add_scalar("train_loss", loss.item(), total_train_step)
    # Testing phase
    net.eval()
    total_test_loss = 0  # Sum of the per-batch mean losses, not an average
    total_accuracy = 0
    with torch.no_grad():
        for data in test_loader:
            imgs, targets = data
            imgs = imgs.to(device)
            targets = targets.to(device)
            outputs = net(imgs)
            loss = loss_fn(outputs, targets)
            total_test_loss = total_test_loss + loss.item()
            # argmax(1) picks the best class within each row; argmax(0) would work down each column
            # Number of correct predictions in this batch
            accuracy = (outputs.argmax(1) == targets).sum().item()
            total_accuracy = total_accuracy + accuracy
    print("Epoch {}, loss on the whole test set: {}".format(i+1, total_test_loss))
    print("Accuracy on the whole test set: {}".format(total_accuracy / test_data_size))
    writer.add_scalar("all_test_loss_per_epoch", total_test_loss, total_test_step)
    writer.add_scalar("test_accuracy", total_accuracy / test_data_size, total_test_step)
    total_test_step = total_test_step + 1
    # Save the model after every epoch
    torch.save(net, "network_{}.pth".format(i+1))
    # torch.save(net.state_dict(), "net_{}.pth".format(i+1))
    print("Model saved.")
writer.close()
