往期文章链接目录

文章目录

NLP = NLU + NLG

- NLU: Natural Language Understanding

- NLG: Natural Language Generation

NLG may be viewed as the opposite of NLU: whereas in NLU, the system needs to disambiguate the input sentence to produce the machine representation language, in NLG the system needs to make decisions about how to put a concept into human understandable words.

Classical applications in NLP

-

Question Answering

-

Sentiment Analysis

-

Machine Translation

-

Text Summarization: Text summarization refers to the technique of shortening long pieces of text. The intention is to create a coherent and fluent summary having only the main points outlined in the document. It involves both NLU and NLG. It requires the machine to first understand human text and overcome the long distance dependence problems (NLU) and then generate human understandable text (NLG).

- Extraction-based summarization: The extractive text summarization technique involves pulling keyphrases from the source document and combining them to make a summary. The extraction is made according to the defined metric without making any changes to the texts. The grammar might not be right.

- Abstraction-based summarization: The abstraction technique entails paraphrasing and shortening parts of the source document. The abstractive text summarization algorithms create new phrases and sentences that relay the most useful information from the original text — just like humans do.

- Therefore, abstraction performs better than extraction. However, the text summarization algorithms required to do abstraction are more difficult to develop; that’s why the use of extraction is still popular.

-

Information Extraction: Information extraction is the task of automatically extracting structured information from unstructured and/or semi-structured machine-readable documents and other electronically represented sources. QA uses information extraction a lot.

-

Dialogue System

- task-oriented dialogue system.

Text preprocessing

Tokenization

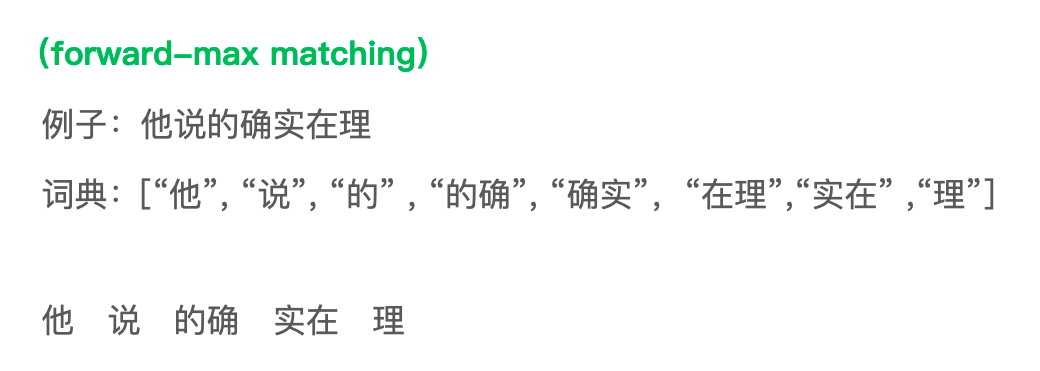

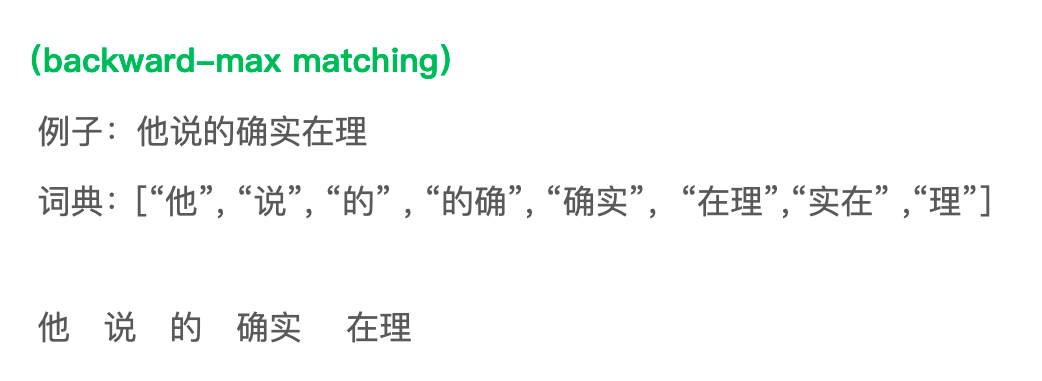

For Chinese, classical methods are forward max-matching and backward max-matching.

Shortcoming: Do not take semantic meaning into account.

Tokenization based on Language Modeling. Given an input, generate all possible way to split the sentence and then find the one with the highest possibility.

Unigram model:

P ( s ) = P ( w 1 ) P ( w 2 ) . . . P ( w k ) P(s) = P(w_1)P(w_2)...P(w_k) P(s)=P(w1)P(w2)...P(wk)

Bigram model:

P ( s ) = P ( w 1 ) P ( w 2 ∣ w 1 ) P ( w 3 ∣ w 2 ) . . . P ( w k ∣ w k − 1 ) P(s) = P(w_1)P(w_2 | w_1)P(w_3|w_2) ... P(w_k |w_{k-1}) P(s)=P(w1)P(w2∣w1)P(w3∣w2)...P(wk∣w

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

2531

2531

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?