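Overfitting

Original tutorial link

1 Basic theory of overfitting

Put simply, overfitting means the model fits the training data so closely that it cannot represent data outside the training set. (The tutorial illustrates this with very vivid figures, so I recommend reading it directly.)

1.1 Ways to address overfitting

(1) Use more training data

(2) Use regularization: L1, L2, ... regularization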

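This applies to most machine learning models and neural networks, and the approach is much the same in each case. The key formula can be simplified to:

y=Wx

(W stands for the parameters the model has to learn. In an overfitted model W tends to vary wildly and take large values, so to keep W from growing too much we change how the error is computed.)

Original error: cost=(Wx-real y)^2

L1 regularization: cost=(Wx-real y)^2+abs(W)

L2 regularization: cost=(Wx-real y)^2+(W)^2

The cost is still based on (prediction - true value)^2, but now if W becomes too large the cost grows with it, so the extra term works as a penalty.

Here abs denotes the absolute value.

The others, L3, L4, ..., replace the square in the penalty with a cube or a fourth power.

These methods help keep the fitted curve from becoming too contorted.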
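The penalty terms above can be made concrete with a short sketch. It is only an illustration under assumptions that are not in the tutorial: the function names, the toy numbers, and the strength factor `lam` are made up here (the formulas above correspond to `lam = 1`). Both weight vectors below fit the single data point exactly, so only the penalty separates them.

```python
def predict(weights, xs):
    """Linear model y = Wx for one sample."""
    return sum(w * x for w, x in zip(weights, xs))


def squared_error(weights, xs, real_y):
    """Original error: (Wx - real y)^2."""
    return (predict(weights, xs) - real_y) ** 2


def l1_cost(weights, xs, real_y, lam=1.0):
    """Squared error plus the L1 penalty, lam * sum(abs(W))."""
    return squared_error(weights, xs, real_y) + lam * sum(abs(w) for w in weights)


def l2_cost(weights, xs, real_y, lam=1.0):
    """Squared error plus the L2 penalty, lam * sum(W^2)."""
    return squared_error(weights, xs, real_y) + lam * sum(w ** 2 for w in weights)


# Toy data (assumed): two identical features, target 2.
xs, real_y = [1.0, 1.0], 2.0
small_w = [1.0, 1.0]    # modest weights, predicts 2
large_w = [10.0, -8.0]  # extreme weights, also predicts 2

for name, w in (("small", small_w), ("large", large_w)):
    print(name, squared_error(w, xs, real_y), l1_cost(w, xs, real_y), l2_cost(w, xs, real_y))
```

The plain squared error cannot tell the two solutions apart, while both penalized costs prefer the small weights, and L2 punishes the extreme weights much harder than L1.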

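(3) Regularization specific to neural networks:

Dropout regularization

During training, randomly ignore some neurons and their connections so that the network becomes incomplete, then run one training step with this incomplete network.

On the next step, randomly ignore a different set, which gives another incomplete network, and so on.

Because units are dropped at random, the prediction in any given training step cannot depend on one particular subset of neurons.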
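The idea of a different incomplete network on every step can be sketched in plain Python. This is a conceptual illustration only, not how TensorFlow implements it; `dropout_mask`, the seed, and the activation values are all made up for the example.

```python
import random


def dropout_mask(n_units, drop_rate, rng):
    """Return a list of 0/1 flags; 0 means the unit is ignored on this step."""
    return [0 if rng.random() < drop_rate else 1 for _ in range(n_units)]


rng = random.Random(0)  # fixed seed so the sketch is repeatable
activations = [0.2, -1.3, 0.7, 0.5, -0.1, 0.9]  # assumed outputs of six neurons

for step in range(3):
    # A fresh mask is drawn on every training step.
    mask = dropout_mask(len(activations), drop_rate=0.5, rng=rng)
    thinned = [a * m for a, m in zip(activations, mask)]
    print(step, mask, thinned)
```

Each step sees a different subset of active units, so no single neuron can be relied on across steps.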

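Comparing L1/L2 regularization with dropout

L1 and L2 penalize the W parameters that the model relies on too heavily.

Dropout prevents the network from building up that over-reliance in the first place.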

2 Code

2.1 Original code

```python
import tensorflow as tf
from sklearn.datasets import load_digits
from sklearn.cross_validation import train_test_split
from sklearn.preprocessing import LabelBinarizer

# load data
digits = load_digits()
X = digits.data  # image data for the digits 0-9
y = digits.target
y = LabelBinarizer().fit_transform(y)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=.3)


def add_layer(inputs, in_size, out_size, layer_name, activation_function=None):
    # add one more layer and return the output of this layer
    Weights = tf.Variable(tf.random.normal([in_size, out_size]))
    biases = tf.Variable(tf.zeros([1, out_size]) + 0.1, )
    Wx_plus_b = tf.matmul(inputs, Weights) + biases
    if activation_function is None:
        outputs = Wx_plus_b
    else:
        outputs = activation_function(Wx_plus_b, )  # apply the activation
    # TensorBoard seems to need at least one histogram_summary, otherwise it reports an error
    tf.histogram_summary(layer_name + '/outputs', outputs)
    return outputs


# define placeholder for inputs to network
# each image has 64 pixels
xs = tf.placeholder(tf.float32, [None, 64])  # 8x8
# each sample has 10 outputs
ys = tf.placeholder(tf.float32, [None, 10])

# add output layer
# using anything other than tanh here raised an error about the data becoming none
l1 = add_layer(xs, 64, 100, 'l1', activation_function=tf.nn.tanh)
prediction = add_layer(l1, 100, 10, 'l2', activation_function=tf.nn.softmax)

# the loss between prediction and real data
cross_entropy = tf.reduce_mean(-tf.reduce_sum(ys * tf.log(prediction),
                                              reduction_indices=[1]))  # loss
tf.scalar_summary('loss', cross_entropy)
train_step = tf.train.GradientDescentOptimizer(0.6).minimize(cross_entropy)

sess = tf.Session()
merged = tf.merge_all_summaries()
# summary writer goes in here
train_writer = tf.train.SummaryWriter("logs/train", sess.graph)
test_writer = tf.train.SummaryWriter("logs/test", sess.graph)

# important step
sess.run(tf.initialize_all_variables())

for i in range(500):
    sess.run(train_step, feed_dict={xs: X_train, ys: y_train})
    if i % 50 == 0:
        # record loss
        train_result = sess.run(merged, feed_dict={xs: X_train, ys: y_train})
        test_result = sess.run(merged, feed_dict={xs: X_test, ys: y_test})
        train_writer.add_summary(train_result, i)
        test_writer.add_summary(test_result, i)
```
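To see what the loss in the code above computes, here is a minimal pure-Python sketch of one-hot labels and the mean cross-entropy `-sum(ys * log(prediction))`. The helper names and the toy predictions are assumptions for illustration; the real code gets its one-hot labels from `LabelBinarizer` and its predictions from the softmax layer.

```python
import math


def one_hot(label, n_classes):
    """Encode an integer label as a one-hot row, e.g. 2 -> [0, 0, 1]."""
    return [1.0 if i == label else 0.0 for i in range(n_classes)]


def cross_entropy(ys, predictions):
    """Mean over samples of -sum(y * log(p)).

    Terms with y == 0 contribute nothing, so they are skipped; this also
    avoids evaluating log(0) for classes the model assigns zero probability.
    """
    per_sample = [
        -sum(y * math.log(p) for y, p in zip(y_row, p_row) if y > 0)
        for y_row, p_row in zip(ys, predictions)
    ]
    return sum(per_sample) / len(per_sample)


# Toy example (assumed values): two samples, three classes.
labels = [0, 2]
ys = [one_hot(label, 3) for label in labels]
predictions = [[0.7, 0.2, 0.1], [0.1, 0.1, 0.8]]
print(cross_entropy(ys, predictions))
```

Only the probability assigned to the true class enters the loss, so the loss shrinks as that probability approaches 1.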

2.2 Errors and fixes

1

ModuleNotFoundError: No module named 'sklearn'

The relevant code:

from sklearn.datasets import load_digits

from sklearn.cross_validation import train_test_split

from sklearn.preprocessing import LabelBinarizer

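This error is already flagged in red while you write the code. It simply means the sklearn library is not installed. Note that sklearn is the import name of the scikit-learn package.

So just type the following in the Terminal:

pip install scikit-learn

Once the output ends with "Successfully installed", you are done. (I downloaded it through the Aliyun mirror, which is convenient and fast.)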

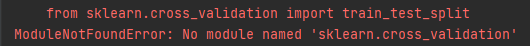

2

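ModuleNotFoundError: No module named 'sklearn.cross_validation'

The relevant code:

from sklearn.cross_validation import train_test_split

This is caused by a version update: newer scikit-learn releases no longer ship the sklearn.cross_validation module, so the import has to be changed to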

from sklearn.model_selection import train_test_split

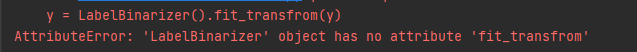
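Both import errors above come down to which modules the installed environment actually provides. As a rough diagnostic sketch (the function name is made up, and this is not part of the tutorial), the standard library can report which of the two module names is available without crashing the script:

```python
import importlib.util


def locate_train_test_split():
    """Return the module name that should provide train_test_split, or None.

    None means scikit-learn is missing (or broken), i.e. error 1 above.
    """
    for name in ("sklearn.model_selection", "sklearn.cross_validation"):
        try:
            if importlib.util.find_spec(name) is not None:
                return name
        except ImportError:
            # Raised when the parent package (sklearn) cannot be imported.
            return None
    return None


print(locate_train_test_split())
```

If this prints `sklearn.model_selection`, the updated import from fix 2 is the right one for your installation.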

3

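AttributeError: 'LabelBinarizer' object has no attribute 'fit_transfrom'

The relevant code:

y = LabelBinarizer().fit_transfrom(y)

Ctrl-clicking LabelBinarizer to jump to its definition shows that the class LabelBinarizer does have a fit_transform method.

So there is only one explanation: I misspelled the name. (I had checked the spelling before and still missed this bug. It was my own slip, so please check your spelling carefully.)

Fixing the spelling resolves it.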

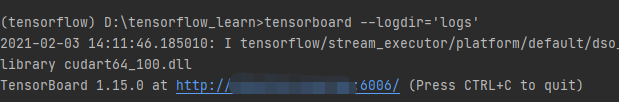

4

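Results do not show up in TensorBoard

In the terminal (I use PyCharm, so its built-in terminal works directly and there is no need to cd to the project root)

I entered the command tensorboard --logdir='logs'

(The blurred part is my hostname. In the tutorial, the URL shows 0.0.0.0 in front of port 6006.)

However, when I pasted the URL containing my hostname into the browser, the page failed to display the results,

and http://localhost:6006/ did not show any results either.

Fix

Remove the quotes from the command tensorboard --logdir='logs', which gives: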

tensorboard --logdir=logs

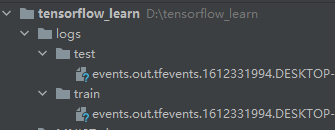
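When TensorBoard starts but shows nothing, it can help to confirm that event files really exist under the directory passed to `--logdir`. The sketch below is a small stdlib-only check; the function name is hypothetical, and it relies only on the fact that TensorBoard event file names contain `tfevents`.

```python
import os


def find_event_files(logdir):
    """Return the sorted paths of TensorBoard event files found under logdir."""
    found = []
    for root, _dirs, files in os.walk(logdir):
        for name in files:
            if "tfevents" in name:
                found.append(os.path.join(root, name))
    return sorted(found)


# "logs" is the directory used by the training script in this post.
for path in find_event_files("logs"):
    print(path)
```

If files are listed for logs but TensorBoard still shows nothing, the directory name TensorBoard received probably differs from the real one, which is consistent with the quoting fix above.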

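2.3 Results

This is what appears under logs

The error on the test data is slightly larger than on the training data, which means there is a little overfitting.

3 Overcoming overfitting

Apply dropout to Wx_plus_b: 50% of this result is discarded (ignored), and a different random 50% is chosen each time. This reduces overfitting fairly effectively.

3.1 Modifying the code

First, in the placeholder section of the earlier code, define keep_prob, the fraction of results to keep: keep_prob = tf.placeholder(tf.float32)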

```python
# define placeholder for inputs to network
keep_prob = tf.placeholder(tf.float32)  # fraction of results that is NOT dropped
# each image has 64 pixels
xs = tf.placeholder(tf.float32, [None, 64])  # 8x8
# each sample has 10 outputs
ys = tf.placeholder(tf.float32, [None, 10])
```

Then add keep_prob to the feed_dict in sess.run. The value is the fraction to keep: write 1 to keep everything, and the dropped fraction is (1 - fraction to keep).

```python
for i in range(500):
    # usually 50% is dropped; to drop 40% instead, use 0.6 (1 - 0.4)
    sess.run(train_step, feed_dict={xs: X_train, ys: y_train, keep_prob: 0.5})
    if i % 50 == 0:
        # record loss; nothing should be dropped while recording
        train_result = sess.run(merged, feed_dict={xs: X_train, ys: y_train, keep_prob: 1})
        test_result = sess.run(merged, feed_dict={xs: X_test, ys: y_test, keep_prob: 1})
        train_writer.add_summary(train_result, i)
        test_writer.add_summary(test_result, i)
```

The dropout itself goes into add_layer, because it is applied to Wx_plus_b. Only one line is added to the function: Wx_plus_b = tf.nn.dropout(Wx_plus_b, keep_prob)

```python
def add_layer(inputs, in_size, out_size, layer_name, activation_function=None):
    # add one more layer and return the output of this layer
    Weights = tf.Variable(tf.random.normal([in_size, out_size]))
    biases = tf.Variable(tf.zeros([1, out_size]) + 0.1, )
    Wx_plus_b = tf.matmul(inputs, Weights) + biases
    Wx_plus_b = tf.nn.dropout(Wx_plus_b, keep_prob)  # the dropout step
    if activation_function is None:
        outputs = Wx_plus_b
    else:
        outputs = activation_function(Wx_plus_b, )  # apply the activation
    # TensorBoard seems to need at least one histogram summary, otherwise it reports an error
    tf.summary.histogram(layer_name + '/outputs', outputs)
    return outputs
```
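What `tf.nn.dropout(Wx_plus_b, keep_prob)` does to the values can be imitated in a few lines of plain Python: each element is kept with probability keep_prob and scaled by 1 / keep_prob, otherwise it becomes 0. The sketch below is only an imitation for illustration (the function, the seed, and the sample values are assumptions), not TensorFlow's implementation.

```python
import random


def dropout(values, keep_prob, rng):
    """Keep each value with probability keep_prob, scaled by 1 / keep_prob."""
    if not 0.0 < keep_prob <= 1.0:
        raise ValueError("keep_prob must be in (0, 1]")
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]


rng = random.Random(0)  # fixed seed so the sketch is repeatable
wx_plus_b = [1.0, 2.0, 3.0, 4.0]  # assumed layer outputs

print(dropout(wx_plus_b, 0.5, rng))  # training: survivors are doubled, the rest are 0
print(dropout(wx_plus_b, 1.0, rng))  # recording/evaluation: values pass through unchanged
```

The scaling keeps the expected value of each element the same, which is why feeding keep_prob: 1 when recording the loss needs no further correction.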

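Finally, change one parameter: reduce the hidden layer size from 100 to 50. With 100 I got an error caused by the values becoming too large.

```python
# add output layer
# using anything other than tanh here raised an error about the data becoming none
l1 = add_layer(xs, 64, 50, 'l1', activation_function=tf.nn.tanh)
prediction = add_layer(l1, 50, 10, 'l2', activation_function=tf.nn.softmax)
```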

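3.2 Comparing results with and without dropout

Without dropout, everything is kept: keep_prob: 1

With a random 50% dropped: keep_prob: 0.5

The gap between the test and train curves is clearly smaller.

3.3 Complete modified code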

```python
import tensorflow as tf
from sklearn.datasets import load_digits
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelBinarizer

# load data
digits = load_digits()
X = digits.data  # image data for the digits 0-9
y = digits.target
y = LabelBinarizer().fit_transform(y)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=.3)


def add_layer(inputs, in_size, out_size, layer_name, activation_function=None):
    # add one more layer and return the output of this layer
    Weights = tf.Variable(tf.random.normal([in_size, out_size]))
    biases = tf.Variable(tf.zeros([1, out_size]) + 0.1, )
    Wx_plus_b = tf.matmul(inputs, Weights) + biases
    Wx_plus_b = tf.nn.dropout(Wx_plus_b, keep_prob)  # the dropout step
    if activation_function is None:
        outputs = Wx_plus_b
    else:
        outputs = activation_function(Wx_plus_b, )  # apply the activation
    # TensorBoard seems to need at least one histogram summary, otherwise it reports an error
    tf.summary.histogram(layer_name + '/outputs', outputs)
    return outputs


# define placeholder for inputs to network
keep_prob = tf.placeholder(tf.float32)  # fraction of results that is NOT dropped
# each image has 64 pixels
xs = tf.placeholder(tf.float32, [None, 64])  # 8x8
# each sample has 10 outputs
ys = tf.placeholder(tf.float32, [None, 10])

# add output layer
# using anything other than tanh here raised an error about the data becoming none
l1 = add_layer(xs, 64, 50, 'l1', activation_function=tf.nn.tanh)
prediction = add_layer(l1, 50, 10, 'l2', activation_function=tf.nn.softmax)

# the loss between prediction and real data
cross_entropy = tf.reduce_mean(-tf.reduce_sum(ys * tf.log(prediction),
                                              reduction_indices=[1]))  # loss
# tf.scalar_summary('loss', cross_entropy)
tf.summary.scalar('loss', cross_entropy)
train_step = tf.train.GradientDescentOptimizer(0.6).minimize(cross_entropy)

sess = tf.Session()
# merged = tf.merge_all_summaries()
merged = tf.summary.merge_all()
# summary writer goes in here
# train_writer = tf.train.SummaryWriter("logs/train", sess.graph)
# test_writer = tf.train.SummaryWriter("logs/test", sess.graph)
train_writer = tf.compat.v1.summary.FileWriter("logs/train", sess.graph)
test_writer = tf.compat.v1.summary.FileWriter("logs/test", sess.graph)

# important step
# sess.run(tf.initialize_all_variables())
sess.run(tf.global_variables_initializer())

for i in range(500):
    # usually 50% is dropped; to drop 40% instead, use 0.6 (1 - 0.4)
    sess.run(train_step, feed_dict={xs: X_train, ys: y_train, keep_prob: 0.5})
    if i % 50 == 0:
        # record loss; nothing should be dropped while recording
        train_result = sess.run(merged, feed_dict={xs: X_train, ys: y_train, keep_prob: 1})
        test_result = sess.run(merged, feed_dict={xs: X_test, ys: y_test, keep_prob: 1})
        train_writer.add_summary(train_result, i)
        test_writer.add_summary(test_result, i)
```

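The commented-out lines are the older API names used in the original tutorial. They only run on old TensorFlow releases that still provide those names; the difference is purely a matter of version, and PyCharm currently seems to recommend the uncommented form.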
