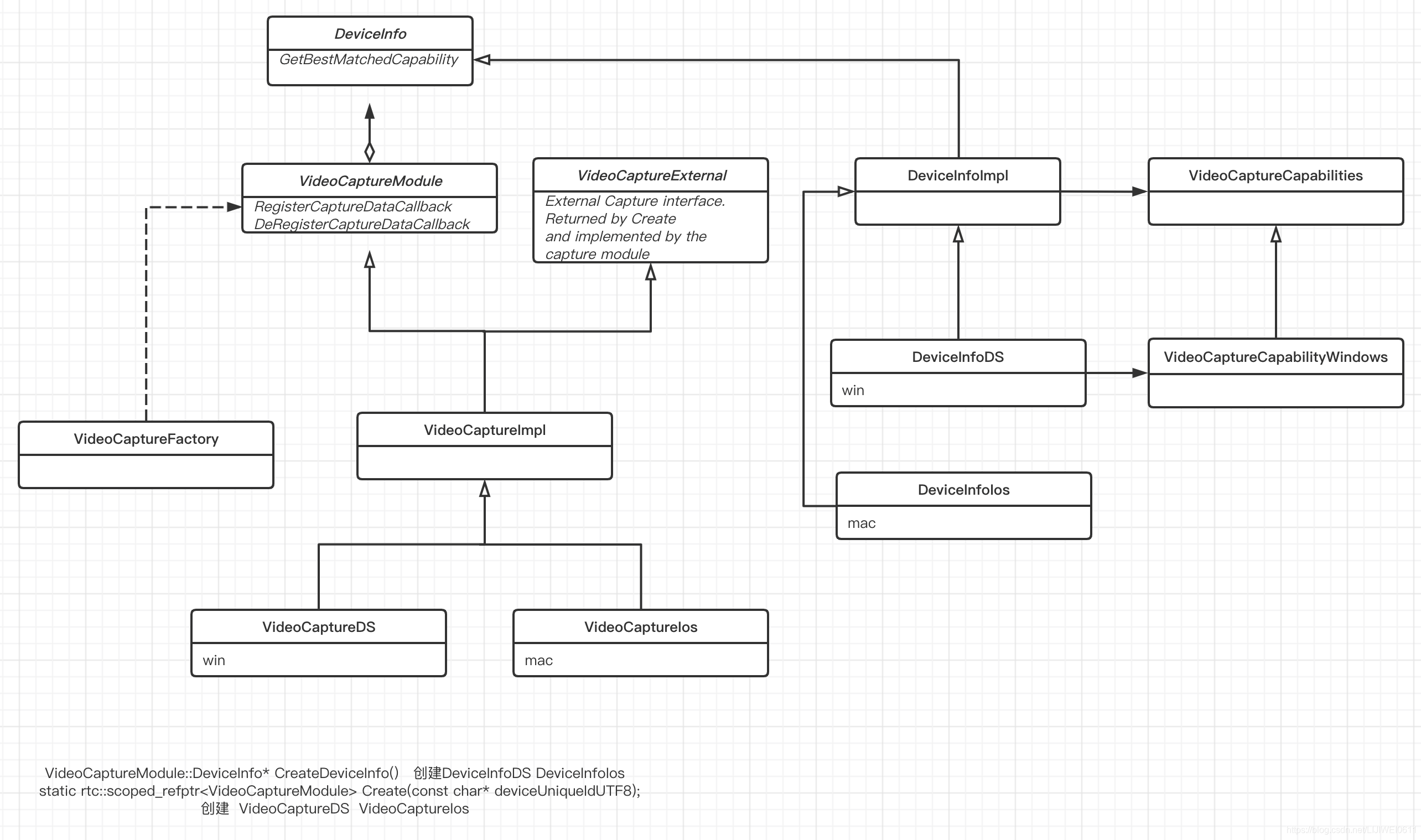

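WebRTC's video_capture module provides a cross-platform wrapper for capturing video on different devices. As the video_capture source tree in the figure shows, the module currently supports video capture on Android, iOS, Linux, Mac, and Windows, so it can also be used for capture when developing live video streaming on any of these platforms.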

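In a WebRTC-based real-time audio/video conference, video capture is the first stage of the video processing pipeline. The content may come from a camera, the screen, or a video file, and the host operating system may be Linux, Windows, Mac, iOS, or Android. These platforms are developed by different companies, so the low-level frameworks for getting video from a camera differ: Linux uses V4L2 (Video for Linux version 2); Mac and iOS, both from Apple, use the AVFoundation framework; Windows uses Microsoft's DirectShow (DS) framework; and Android captures video through the camera2 API (Camera2Capturer).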

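The abstract base class of the video capture module is VideoCaptureModule. It defines a set of common capture interfaces: StartCapture/StopCapture start and stop video capture; RegisterCaptureDataCallback/DeRegisterCaptureDataCallback register and unregister the data callback, which pushes video data up to the higher layers. The module was originally built on WebRTC's generic module mechanism (deriving from the Module class) and reported its own running state upward through a capture callback; note that the header quoted below instead derives from rtc::RefCountInterface and declares no such status callback.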

```cpp
#ifndef MODULES_VIDEO_CAPTURE_VIDEO_CAPTURE_H_
#define MODULES_VIDEO_CAPTURE_VIDEO_CAPTURE_H_

// (The #include lines of the original header are not shown in this excerpt.)

namespace webrtc {

class VideoCaptureModule : public rtc::RefCountInterface {
 public:
  // Interface for receiving information about available camera devices.
  class DeviceInfo {
   public:
    virtual uint32_t NumberOfDevices() = 0;

    // Returns the available capture devices.
    // deviceNumber        - Index of capture device.
    // deviceNameUTF8      - Friendly name of the capture device.
    // deviceUniqueIdUTF8  - Unique name of the capture device if it exists.
    //                       Otherwise same as deviceNameUTF8.
    // productUniqueIdUTF8 - Unique product id if it exists.
    //                       Null terminated otherwise.
    virtual int32_t GetDeviceName(uint32_t deviceNumber,
                                  char* deviceNameUTF8,
                                  uint32_t deviceNameLength,
                                  char* deviceUniqueIdUTF8,
                                  uint32_t deviceUniqueIdUTF8Length,
                                  char* productUniqueIdUTF8 = 0,
                                  uint32_t productUniqueIdUTF8Length = 0) = 0;

    // Returns the number of capabilities of this device.
    virtual int32_t NumberOfCapabilities(const char* deviceUniqueIdUTF8) = 0;

    // Gets the capabilities of the named device.
    virtual int32_t GetCapability(const char* deviceUniqueIdUTF8,
                                  const uint32_t deviceCapabilityNumber,
                                  VideoCaptureCapability& capability) = 0;

    // Gets clockwise angle the captured frames should be rotated in order
    // to be displayed correctly on a normally rotated display.
    virtual int32_t GetOrientation(const char* deviceUniqueIdUTF8,
                                   VideoRotation& orientation) = 0;

    // Gets the capability that best matches the requested width, height and
    // frame rate.
    // Returns the deviceCapabilityNumber on success.
    virtual int32_t GetBestMatchedCapability(
        const char* deviceUniqueIdUTF8,
        const VideoCaptureCapability& requested,
        VideoCaptureCapability& resulting) = 0;

    // Display OS /capture device specific settings dialog
    virtual int32_t DisplayCaptureSettingsDialogBox(
        const char* deviceUniqueIdUTF8,
        const char* dialogTitleUTF8,
        void* parentWindow,
        uint32_t positionX,
        uint32_t positionY) = 0;

    virtual ~DeviceInfo() {}
  };

  // Register capture data callback
  virtual void RegisterCaptureDataCallback(
      rtc::VideoSinkInterface<VideoFrame>* dataCallback) = 0;

  // Remove capture data callback
  virtual void DeRegisterCaptureDataCallback() = 0;

  // Start capture device
  virtual int32_t StartCapture(const VideoCaptureCapability& capability) = 0;

  virtual int32_t StopCapture() = 0;

  // Returns the name of the device used by this module.
  virtual const char* CurrentDeviceName() const = 0;

  // Returns true if the capture device is running
  virtual bool CaptureStarted() = 0;

  // Gets the current configuration.
  virtual int32_t CaptureSettings(VideoCaptureCapability& settings) = 0;

  // Set the rotation of the captured frames.
  // If the rotation is set to the same as returned by
  // DeviceInfo::GetOrientation the captured frames are
  // displayed correctly if rendered.
  virtual int32_t SetCaptureRotation(VideoRotation rotation) = 0;

  // Tells the capture module whether to apply the pending rotation. By default,
  // the rotation is applied and the generated frame is up right. When set to
  // false, generated frames will carry the rotation information from
  // SetCaptureRotation. Return value indicates whether this operation succeeds.
  virtual bool SetApplyRotation(bool enable) = 0;

  // Return whether the rotation is applied or left pending.
  virtual bool GetApplyRotation() = 0;

 protected:
  virtual ~VideoCaptureModule() {}
};

}  // namespace webrtc

#endif  // MODULES_VIDEO_CAPTURE_VIDEO_CAPTURE_H_
```

The interface contains a device information class, DeviceInfo, which manages and queries video device information. virtual int32_t StartCapture(const VideoCaptureCapability& capability) = 0; starts video capture, and virtual int32_t StopCapture() = 0; stops it.
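To make the DeviceInfo calling convention concrete, here is a minimal, self-contained sketch of the enumeration loop with caller-owned buffers. FakeDeviceInfo and ListDeviceIds are hypothetical stand-ins written for this example (they are not WebRTC classes); only the shape of NumberOfDevices/GetDeviceName mirrors the header above. The device names, the 256-byte buffers, and the 0/-1 return convention of the fake are assumptions of the sketch.

```cpp
#include <cstdint>
#include <cstring>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-in that mirrors the two DeviceInfo calls used below.
class FakeDeviceInfo {
 public:
  uint32_t NumberOfDevices() { return static_cast<uint32_t>(devices_.size()); }

  // Copies the friendly name and unique id into caller-owned buffers.
  // In this sketch: returns 0 on success, -1 on a bad index or when a
  // buffer is too small to hold the string plus its terminating NUL.
  int32_t GetDeviceName(uint32_t deviceNumber,
                        char* deviceNameUTF8,
                        uint32_t deviceNameLength,
                        char* deviceUniqueIdUTF8,
                        uint32_t deviceUniqueIdUTF8Length) {
    if (deviceNumber >= devices_.size()) return -1;
    const std::pair<std::string, std::string>& d = devices_[deviceNumber];
    if (d.first.size() + 1 > deviceNameLength ||
        d.second.size() + 1 > deviceUniqueIdUTF8Length) {
      return -1;
    }
    std::memcpy(deviceNameUTF8, d.first.c_str(), d.first.size() + 1);
    std::memcpy(deviceUniqueIdUTF8, d.second.c_str(), d.second.size() + 1);
    return 0;
  }

 private:
  // (friendly name, unique id) pairs; made-up values for the example.
  std::vector<std::pair<std::string, std::string>> devices_ = {
      {"Front Camera", "cam-0"}, {"USB Camera", "cam-1"}};
};

// Enumerates every device and returns the unique ids that could be read.
template <typename DeviceInfoT>
std::vector<std::string> ListDeviceIds(DeviceInfoT& info) {
  std::vector<std::string> ids;
  const uint32_t count = info.NumberOfDevices();
  for (uint32_t i = 0; i < count; ++i) {
    char name[256] = {0};
    char unique_id[256] = {0};
    if (info.GetDeviceName(i, name, sizeof(name), unique_id,
                           sizeof(unique_id)) == 0) {
      ids.emplace_back(unique_id);
    }
  }
  return ids;
}
```

The unique id returned here is the string that would later be handed to the factory's Create call, which is why the loop collects ids rather than friendly names.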

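VideoCaptureImpl is the implementation subclass of VideoCaptureModule. It implements the platform-independent interfaces defined by the parent class and leaves the platform-specific ones to platform subclasses. It also defines a set of factory methods that create the concrete platform subclass. Each platform subclass implements the platform-specific functionality, mainly starting/stopping capture and exporting the video data. On Linux the subclass is VideoCaptureV4L2, on iOS it is VideoCaptureIos, on Windows they are VideoCaptureMF and VideoCaptureDS, and so on.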
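The pattern described here (a platform-independent base class whose static factory method picks the platform subclass at compile time) can be sketched as follows. All class names in this block are hypothetical and only illustrate the structure; the sketch also selects on compiler-predefined macros such as _WIN32 and __linux__, which is an assumption made to keep it self-contained, whereas WebRTC uses its own build macros such as WEBRTC_ANDROID.

```cpp
#include <memory>
#include <string>

// Hypothetical platform-independent base, standing in for the role that
// VideoCaptureImpl plays in the text above.
class CaptureBase {
 public:
  virtual ~CaptureBase() = default;
  virtual std::string BackendName() const = 0;

  // Factory method: returns the subclass for the current platform, or
  // nullptr when the id is null or no backend exists for this platform.
  static std::unique_ptr<CaptureBase> Create(const char* device_id);
};

// One illustrative subclass per platform family (names are made up).
class DirectShowCapture : public CaptureBase {
 public:
  std::string BackendName() const override { return "DirectShow"; }
};

class AvFoundationCapture : public CaptureBase {
 public:
  std::string BackendName() const override { return "AVFoundation"; }
};

class V4l2Capture : public CaptureBase {
 public:
  std::string BackendName() const override { return "V4L2"; }
};

#if defined(_WIN32) || defined(__APPLE__) || \
    (defined(__linux__) && !defined(__ANDROID__))
constexpr bool kHasNativeBackend = true;
#else
constexpr bool kHasNativeBackend = false;
#endif

std::unique_ptr<CaptureBase> CaptureBase::Create(const char* device_id) {
  if (device_id == nullptr) return nullptr;
#if defined(_WIN32)
  return std::unique_ptr<CaptureBase>(new DirectShowCapture());
#elif defined(__APPLE__)
  return std::unique_ptr<CaptureBase>(new AvFoundationCapture());
#elif defined(__linux__) && !defined(__ANDROID__)
  return std::unique_ptr<CaptureBase>(new V4l2Capture());
#else
  return nullptr;  // No native backend in this sketch (e.g. Android).
#endif
}
```

Callers only ever see CaptureBase, so code written against it compiles unchanged on every platform; the cost is that an unsupported platform shows up as a null return at run time rather than as a build error.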

VideoCaptureModule::DeviceInfo is an abstract interface for video devices (in the header's own words, an "interface for receiving information about available camera devices"). DeviceInfoImpl is the implementation shared by all platforms.

The VideoCaptureFactory factory methods:

```cpp
#ifndef MODULES_VIDEO_CAPTURE_VIDEO_CAPTURE_FACTORY_H_
#define MODULES_VIDEO_CAPTURE_VIDEO_CAPTURE_FACTORY_H_

#include "modules/video_capture/video_capture.h"

namespace webrtc {

class VideoCaptureFactory {
 public:
  // Create a video capture module object
  // id - unique identifier of this video capture module object.
  // deviceUniqueIdUTF8 - name of the device.
  //                      Available names can be found by using GetDeviceName
  static rtc::scoped_refptr<VideoCaptureModule> Create(
      const char* deviceUniqueIdUTF8);

  // Create a video capture module object used for external capture.
  // id - unique identifier of this video capture module object
  // externalCapture - [out] interface to call when a new frame is captured.
  static rtc::scoped_refptr<VideoCaptureModule> Create(
      VideoCaptureExternal*& externalCapture);

  static VideoCaptureModule::DeviceInfo* CreateDeviceInfo();

 private:
  ~VideoCaptureFactory();
};

}  // namespace webrtc

#endif  // MODULES_VIDEO_CAPTURE_VIDEO_CAPTURE_FACTORY_H_
```

VideoCaptureFactory creates different objects depending on the arguments and the platform, as shown below:

```cpp
namespace webrtc {

rtc::scoped_refptr<VideoCaptureModule> VideoCaptureFactory::Create(
    const char* deviceUniqueIdUTF8) {
#if defined(WEBRTC_ANDROID)
  // No C++ capture module on Android.
  return nullptr;
#else
  return videocapturemodule::VideoCaptureImpl::Create(deviceUniqueIdUTF8);
#endif
}

rtc::scoped_refptr<VideoCaptureModule> VideoCaptureFactory::Create(
    VideoCaptureExternal*& externalCapture) {
  return videocapturemodule::VideoCaptureImpl::Create(externalCapture);
}

VideoCaptureModule::DeviceInfo* VideoCaptureFactory::CreateDeviceInfo() {
#if defined(WEBRTC_ANDROID)
  return nullptr;
#else
  return videocapturemodule::VideoCaptureImpl::CreateDeviceInfo();
#endif
}

}  // namespace webrtc
```

In VideoCaptureImpl:

```cpp
rtc::scoped_refptr<VideoCaptureModule> VideoCaptureImpl::Create(
    VideoCaptureExternal*& externalCapture) {
  rtc::scoped_refptr<VideoCaptureImpl> implementation(
      new rtc::RefCountedObject<VideoCaptureImpl>());
  // The same object serves as the external-capture input interface.
  externalCapture = implementation.get();
  return implementation;
}
```

Mac:

```cpp
rtc::scoped_refptr<VideoCaptureModule> VideoCaptureImpl::Create(
    const char* deviceUniqueIdUTF8) {
  return VideoCaptureIos::Create(deviceUniqueIdUTF8);
}
```

Mac:

```cpp
VideoCaptureModule::DeviceInfo* VideoCaptureImpl::CreateDeviceInfo() {
  return new DeviceInfoIos();
}
```

Windows:

```cpp
rtc::scoped_refptr<VideoCaptureModule> VideoCaptureImpl::Create(
    const char* device_id) {
  if (device_id == nullptr)
    return nullptr;

  // TODO(tommi): Use Media Foundation implementation for Vista and up.
  rtc::scoped_refptr<VideoCaptureDS> capture(
      new rtc::RefCountedObject<VideoCaptureDS>());
  if (capture->Init(device_id) != 0) {
    return nullptr;
  }

  return capture;
}
```

Windows:

```cpp
// static
VideoCaptureModule::DeviceInfo* VideoCaptureImpl::CreateDeviceInfo() {
  // TODO(tommi): Use the Media Foundation version on Vista and up.
  return DeviceInfoDS::Create();
}
```

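- A VideoCaptureModule instance is created through the VideoCaptureFactory factory class. Android differs from the other platforms: there the factory returns nullptr, while on every other platform it creates a VideoCaptureImpl instance.

- VideoCaptureFactory::CreateDeviceInfo() creates a DeviceInfo instance: DeviceInfoDS on Windows and DeviceInfoIos on Mac. A machine often has several cameras, so first get the number of cameras with DeviceInfo::NumberOfDevices, then call DeviceInfo::GetDeviceName to get each camera's name, ID, and so on.

- Get the resolution and frame rate parameters. Fill in the requested parameters as needed, then call DeviceInfo::GetBestMatchedCapability to get the best-matching supported configuration.

- Call VideoCaptureModule::StartCapture to start video capture.

- Set the object that receives the video data through VideoCaptureModule::RegisterCaptureDataCallback.

- The class that receives the video data must implement the rtc::VideoSinkInterface interface and override the virtual function OnFrame.

After these steps, the local camera data can be received.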
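The steps above can be sketched end to end with small stand-ins. Everything in this block (Capability, Frame, FakeCaptureModule, CountingSink, BestMatch, RunCaptureFlow) is hypothetical and only mirrors the call order of the real API; in particular, BestMatch is a simple absolute-difference heuristic chosen for illustration and is not claimed to be the algorithm behind DeviceInfo::GetBestMatchedCapability.

```cpp
#include <cstdint>
#include <cstdlib>
#include <vector>

struct Capability { int32_t width; int32_t height; int32_t maxFPS; };
struct Frame { int32_t width; int32_t height; };

// Same shape as the sink interface: one pure virtual OnFrame.
template <typename FrameT>
class SinkInterface {
 public:
  virtual ~SinkInterface() = default;
  virtual void OnFrame(const FrameT& frame) = 0;
};

// Illustrative heuristic: pick the capability with the smallest total
// absolute difference. Returns its index, or -1 if the list is empty.
int32_t BestMatch(const std::vector<Capability>& supported,
                  const Capability& requested, Capability* resulting) {
  int32_t best = -1;
  long best_score = 0;
  for (size_t i = 0; i < supported.size(); ++i) {
    const Capability& c = supported[i];
    const long score = std::labs(static_cast<long>(c.width) - requested.width) +
                       std::labs(static_cast<long>(c.height) - requested.height) +
                       std::labs(static_cast<long>(c.maxFPS) - requested.maxFPS);
    if (best < 0 || score < best_score) {
      best = static_cast<int32_t>(i);
      best_score = score;
    }
  }
  if (best >= 0) *resulting = supported[best];
  return best;
}

// Stand-in for a capture module; SimulateIncomingFrame plays the driver.
class FakeCaptureModule {
 public:
  void RegisterCaptureDataCallback(SinkInterface<Frame>* sink) { sink_ = sink; }
  void DeRegisterCaptureDataCallback() { sink_ = nullptr; }
  int32_t StartCapture(const Capability& capability) {
    settings_ = capability;
    started_ = true;
    return 0;
  }
  int32_t StopCapture() { started_ = false; return 0; }
  void SimulateIncomingFrame() {
    if (started_ && sink_ != nullptr) {
      sink_->OnFrame(Frame{settings_.width, settings_.height});
    }
  }

 private:
  SinkInterface<Frame>* sink_ = nullptr;
  Capability settings_{0, 0, 0};
  bool started_ = false;
};

// The receiving class: implements the sink interface and overrides OnFrame.
class CountingSink : public SinkInterface<Frame> {
 public:
  void OnFrame(const Frame& frame) override { ++frames; last = frame; }
  int frames = 0;
  Frame last{0, 0};
};

// Runs the whole flow and returns how many frames the sink received.
int RunCaptureFlow() {
  const std::vector<Capability> supported = {
      {640, 480, 30}, {1280, 720, 30}, {1920, 1080, 15}};
  Capability best{0, 0, 0};
  if (BestMatch(supported, Capability{1280, 720, 25}, &best) < 0) return -1;

  CountingSink sink;
  FakeCaptureModule capture;
  capture.RegisterCaptureDataCallback(&sink);
  if (capture.StartCapture(best) != 0) return -1;
  for (int i = 0; i < 3; ++i) capture.SimulateIncomingFrame();
  capture.StopCapture();
  capture.SimulateIncomingFrame();  // Ignored: capture has stopped.
  capture.DeRegisterCaptureDataCallback();
  return sink.frames;
}
```

The sketch registers the sink before starting capture so that no early frame is dropped; the list names the two calls in the opposite order, and with this stand-in either order works as long as both happen before frames arrive.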

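In a streaming media system, the component that produces data is called the source and the component that receives data is called the sink. WebRTC therefore abstracts the concepts VideoSourceInterface and VideoSinkInterface, representing a video source and a video sink. In a concrete implementation the two are relative: a given layer is a sink with respect to the layer below it and a source with respect to the layer above it, so the roles have to be read in context.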
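The relative nature of the two roles is easiest to see in a middle stage that implements both interfaces. The sketch below is a simplified illustration, not WebRTC code: SourceInterface with AddSink/RemoveSink, ForwardingStage, and RecordingSink are hypothetical names, and plain int values stand in for video frames.

```cpp
#include <algorithm>
#include <vector>

template <typename FrameT>
class SinkInterface {
 public:
  virtual ~SinkInterface() = default;
  virtual void OnFrame(const FrameT& frame) = 0;
};

// Simplified source interface for this sketch: sinks subscribe and leave.
template <typename FrameT>
class SourceInterface {
 public:
  virtual ~SourceInterface() = default;
  virtual void AddSink(SinkInterface<FrameT>* sink) = 0;
  virtual void RemoveSink(SinkInterface<FrameT>* sink) = 0;
};

// A middle layer: a sink for the layer below it (it receives OnFrame) and
// a source for the layers above it (it forwards to every subscribed sink).
template <typename FrameT>
class ForwardingStage : public SinkInterface<FrameT>,
                        public SourceInterface<FrameT> {
 public:
  void AddSink(SinkInterface<FrameT>* sink) override {
    // Ignore duplicates so a sink never receives the same frame twice.
    if (std::find(sinks_.begin(), sinks_.end(), sink) == sinks_.end()) {
      sinks_.push_back(sink);
    }
  }

  void RemoveSink(SinkInterface<FrameT>* sink) override {
    sinks_.erase(std::remove(sinks_.begin(), sinks_.end(), sink),
                 sinks_.end());
  }

  void OnFrame(const FrameT& frame) override {
    for (SinkInterface<FrameT>* sink : sinks_) {
      sink->OnFrame(frame);
    }
  }

 private:
  std::vector<SinkInterface<FrameT>*> sinks_;
};

// Terminal sink that records every frame it receives.
class RecordingSink : public SinkInterface<int> {
 public:
  void OnFrame(const int& frame) override { received.push_back(frame); }
  std::vector<int> received;
};
```

A capturer would call ForwardingStage::OnFrame (treating the stage as its sink), while a renderer or encoder would call AddSink on the same object (treating it as their source).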

```cpp
#ifndef API_VIDEO_VIDEO_SINK_INTERFACE_H_
#define API_VIDEO_VIDEO_SINK_INTERFACE_H_

#include <rtc_base/checks.h>

namespace rtc {

template <typename VideoFrameT>
class VideoSinkInterface {
 public:
  virtual ~VideoSinkInterface() = default;

  virtual void OnFrame(const VideoFrameT& frame) = 0;

  // Should be called by the source when it discards the frame due to rate
  // limiting.
  virtual void OnDiscardedFrame() {}
};

}  // namespace rtc

#endif  // API_VIDEO_VIDEO_SINK_INTERFACE_H_
```

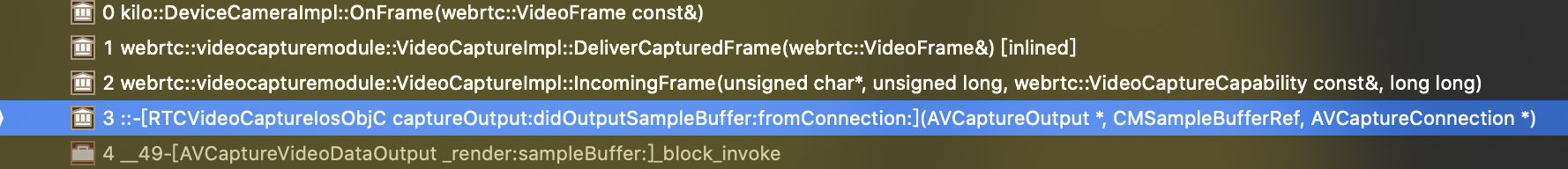

How the OnFrame callback is reached:

First:

modules/video_capture/objc/rtc_video_capture_objc.mm: a video frame arrives and _owner->IncomingFrame is called

```objc
- (void)captureOutput:(AVCaptureOutput*)captureOutput
    didOutputSampleBuffer:(CMSampleBufferRef)sampleBuffer
           fromConnection:(AVCaptureConnection*)connection {
  const int kFlags = 0;
  CVImageBufferRef videoFrame = CMSampleBufferGetImageBuffer(sampleBuffer);

  // Lock the pixel buffer so its base address stays valid while it is read.
  if (CVPixelBufferLockBaseAddress(videoFrame, kFlags) != kCVReturnSuccess) {
    return;
  }

  uint8_t* baseAddress = (uint8_t*)CVPixelBufferGetBaseAddress(videoFrame);
  const size_t width = CVPixelBufferGetWidth(videoFrame);
  const size_t height = CVPixelBufferGetHeight(videoFrame);
  // UYVY is a packed 4:2:2 format: 2 bytes per pixel.
  const size_t frameSize = width * height * 2;

  VideoCaptureCapability tempCaptureCapability;
  tempCaptureCapability.width = width;
  tempCaptureCapability.height = height;
  tempCaptureCapability.maxFPS = _capability.maxFPS;
  tempCaptureCapability.videoType = VideoType::kUYVY;

  _owner->IncomingFrame(baseAddress, frameSize, tempCaptureCapability, 0);

  CVPixelBufferUnlockBaseAddress(videoFrame, kFlags);
}
```

Then, in modules/video_capture/video_capture_impl.cc, IncomingFrame converts the frame to I420 and calls DeliverCapturedFrame(captureFrame):

```cpp
int32_t VideoCaptureImpl::IncomingFrame(uint8_t* videoFrame,
                                        size_t videoFrameLength,
                                        const VideoCaptureCapability& frameInfo,
                                        int64_t captureTime /*=0*/) {
  rtc::CritScope cs(&_apiCs);

  const int32_t width = frameInfo.width;
  const int32_t height = frameInfo.height;

  TRACE_EVENT1("webrtc", "VC::IncomingFrame", "capture_time", captureTime);

  // Not encoded, convert to I420.
  if (frameInfo.videoType != VideoType::kMJPEG &&
      CalcBufferSize(frameInfo.videoType, width, abs(height)) >
          videoFrameLength) {
    RTC_LOG(LS_ERROR) << "Wrong incoming frame length.";
    return -1;
  }

  int stride_y = width;
  int stride_uv = (width + 1) / 2;
  int target_width = width;
  int target_height = height;

  // SetApplyRotation doesn't take any lock. Make a local copy here.
  bool apply_rotation = apply_rotation_;

  if (apply_rotation) {
    // Rotating resolution when for 90/270 degree rotations.
    if (_rotateFrame == kVideoRotation_90 ||
        _rotateFrame == kVideoRotation_270) {
      target_width = abs(height);
      target_height = width;
    }
  }

  // Setting absolute height (in case it was negative).
  // In Windows, the image starts bottom left, instead of top left.
  // Setting a negative source height, inverts the image (within LibYuv).

  // TODO(nisse): Use a pool?
  rtc::scoped_refptr<I420Buffer> buffer = I420Buffer::Create(
      target_width, abs(target_height), stride_y, stride_uv, stride_uv);

  libyuv::RotationMode rotation_mode = libyuv::kRotate0;
  if (apply_rotation) {
    switch (_rotateFrame) {
      case kVideoRotation_0:
        rotation_mode = libyuv::kRotate0;
        break;
      case kVideoRotation_90:
        rotation_mode = libyuv::kRotate90;
        break;
      case kVideoRotation_180:
        rotation_mode = libyuv::kRotate180;
        break;
      case kVideoRotation_270:
        rotation_mode = libyuv::kRotate270;
        break;
    }
  }

  const int conversionResult = libyuv::ConvertToI420(
      videoFrame, videoFrameLength, buffer.get()->MutableDataY(),
      buffer.get()->StrideY(), buffer.get()->MutableDataU(),
      buffer.get()->StrideU(), buffer.get()->MutableDataV(),
      buffer.get()->StrideV(), 0, 0,  // No Cropping
      width, height, target_width, target_height, rotation_mode,
      ConvertVideoType(frameInfo.videoType));
  if (conversionResult < 0) {
    RTC_LOG(LS_ERROR) << "Failed to convert capture frame from type "
                      << static_cast<int>(frameInfo.videoType) << " to I420.";
    return -1;
  }

  // If the rotation was not applied to the pixels, tag the frame with it.
  VideoFrame captureFrame(buffer, 0, rtc::TimeMillis(),
                          !apply_rotation ? _rotateFrame : kVideoRotation_0);
  captureFrame.set_ntp_time_ms(captureTime);

  DeliverCapturedFrame(captureFrame);

  return 0;
}
```
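The size and rotation arithmetic inside IncomingFrame can be checked in isolation. The helpers below are a self-contained sketch with hypothetical names (Rotation, Size, UyvyBufferSize, I420BufferSize, TargetSize, FrameRotationTag); they restate the formulas visible in the code above (2 bytes per pixel for UYVY, stride_uv = (width + 1) / 2 for I420, width/height swap for 90/270 degrees) and are not the WebRTC or libyuv functions themselves.

```cpp
#include <cstddef>
#include <cstdlib>

enum class Rotation { k0 = 0, k90 = 90, k180 = 180, k270 = 270 };

struct Size { int width; int height; };

// UYVY is packed 4:2:2, i.e. 2 bytes per pixel. This matches
// frameSize = width * height * 2 in the AVFoundation callback.
size_t UyvyBufferSize(int width, int height) {
  return static_cast<size_t>(width) * static_cast<size_t>(std::abs(height)) * 2;
}

// I420 is planar 4:2:0: one full-resolution Y plane plus U and V planes
// subsampled by 2 in both directions (rounded up for odd sizes).
size_t I420BufferSize(int width, int height) {
  const size_t rows = static_cast<size_t>(std::abs(height));
  const size_t stride_y = static_cast<size_t>(width);
  const size_t stride_uv = static_cast<size_t>((width + 1) / 2);
  const size_t chroma_rows = (rows + 1) / 2;
  return stride_y * rows + 2 * stride_uv * chroma_rows;
}

// Output resolution: width and height swap only when a 90 or 270 degree
// rotation is actually applied to the pixels.
Size TargetSize(int width, int height, bool apply_rotation, Rotation rotation) {
  Size target{width, std::abs(height)};
  if (apply_rotation &&
      (rotation == Rotation::k90 || rotation == Rotation::k270)) {
    target.width = std::abs(height);
    target.height = width;
  }
  return target;
}

// Rotation carried as metadata on the delivered frame: zero when the pixels
// were already rotated, otherwise the pending rotation.
Rotation FrameRotationTag(bool apply_rotation, Rotation rotation) {
  return apply_rotation ? Rotation::k0 : rotation;
}
```

For a 640x480 frame this gives 614400 bytes of UYVY input and 460800 bytes of I420 output, which is why the length check on the incoming buffer runs before the conversion.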

Here _dataCallBack is the object registered through VideoCaptureImpl::RegisterCaptureDataCallback. If its class implements the VideoSinkInterface interface and overrides OnFrame, it receives the video frames.

```cpp
int32_t VideoCaptureImpl::DeliverCapturedFrame(VideoFrame& captureFrame) {
  UpdateFrameCount();  // frame count used for local frame rate callback.

  if (_dataCallBack) {
    _dataCallBack->OnFrame(captureFrame);
  }

  return 0;
}

void VideoCaptureImpl::RegisterCaptureDataCallback(
    rtc::VideoSinkInterface<VideoFrame>* dataCallBack) {
  rtc::CritScope cs(&_apiCs);
  _dataCallBack = dataCallBack;
}
```
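The registration and delivery paths above share one lock, so the sink pointer cannot change while a frame is being handed over. A minimal sketch of that idea using only the standard library follows; FrameDispatcher, FrameSink, and LastIdSink are hypothetical names, and std::mutex is used here as an assumed substitute for rtc::CritScope.

```cpp
#include <mutex>

struct Frame { int id; };

class FrameSink {
 public:
  virtual ~FrameSink() = default;
  virtual void OnFrame(const Frame& frame) = 0;
};

class FrameDispatcher {
 public:
  void Register(FrameSink* sink) {
    std::lock_guard<std::mutex> lock(mutex_);
    sink_ = sink;
  }

  void DeRegister() {
    std::lock_guard<std::mutex> lock(mutex_);
    sink_ = nullptr;
  }

  // Counts the frame, then forwards it if a sink is registered.
  // Returns true when the frame reached a sink.
  // Note: std::mutex is not recursive, so in this sketch a sink must not
  // call Register/DeRegister from inside OnFrame.
  bool Deliver(const Frame& frame) {
    std::lock_guard<std::mutex> lock(mutex_);
    ++frame_count_;
    if (sink_ == nullptr) return false;
    sink_->OnFrame(frame);
    return true;
  }

  int frame_count() const {
    std::lock_guard<std::mutex> lock(mutex_);
    return frame_count_;
  }

 private:
  mutable std::mutex mutex_;
  FrameSink* sink_ = nullptr;
  int frame_count_ = 0;
};

// Example sink that remembers the last frame id it saw.
class LastIdSink : public FrameSink {
 public:
  void OnFrame(const Frame& frame) override {
    last_id = frame.id;
    ++calls;
  }
  int last_id = -1;
  int calls = 0;
};
```

Holding the lock during OnFrame keeps deregistration safe (once DeRegister returns, no callback is in flight) at the price of blocking registration while a slow sink is running.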

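This article walked through WebRTC's video_capture module, which provides cross-platform video capture on Android, iOS, Linux, Mac, and Windows. VideoCaptureModule is the core interface, covering device information management, starting and stopping capture, and the data callback. Each platform adapts to its low-level framework through a specific implementation class such as VideoCaptureV4L2 or VideoCaptureIos. The VideoCaptureFactory factory class creates the appropriate VideoCaptureModule instance for the platform. Video data is passed through the VideoSinkInterface interface and, after processing, delivered to the upper-layer application.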
