一、10折交叉验证(10-fold cross validation)

将数据集随机分成10份,使用其中9份进行训练而将另外1份用作测试。该过程可以重复10次,每次使用的测试数据不同

二、留一法(Leave-One-Out)

在机器学习领域,n折交叉验证(n是数据集中样本的数目)被称为留一法。

它的一个优点是每次迭代中都使用了最大可能数目的样本来训练。

另一个优点是该方法具有确定性

因为10折交叉验证中随机将数据分到桶中,而留一法是确定性的

主要一个不足在于计算的开销很大

另一个缺点与分层采样(stratification)有关

三、分层采样

10折交叉验证期望每份数据中实例比例按照其在整个数据集的相同比例,而留一法评估的问题在于测试集中只有一个样本,因此它肯定不是分层采样的结果。

总而言之,留一法可能适用于非常小的数据集,到目前为止10折交叉测试是最流行的选择

四、混淆矩阵

精确率百分比的计算

五、一个编程的例子

汽车MPG数据集

利用分层采样方法将数据分到10个桶中

# divide data into 10 buckets

import random

def buckets(filename, bucketName, separator, classColumn):

"""the original data is in the file named filename

bucketName is the prefix for all the bucket names

separator is the character that divides the columns

(for ex., a tab or comma and classColumn is the column

that indicates the class"""

# put the data in 10 buckets

numberOfBuckets = 10

data = {}

# first read in the data and divide by category

with open(filename) as f:

lines = f.readlines()

for line in lines:

if separator != '\t':

line = line.replace(separator, '\t')

# first get the category

category = line.split()[classColumn]

data.setdefault(category, [])

data[category].append(line)

# initialize the buckets

buckets = []

for i in range(numberOfBuckets):

buckets.append([])

# now for each category put the data into the buckets

for k in data.keys():

#randomize order of instances for each class

random.shuffle(data[k])

bNum = 0

# divide into buckets

for item in data[k]:

buckets[bNum].append(item)

bNum = (bNum + 1) % numberOfBuckets

# write to file

for bNum in range(numberOfBuckets):

f = open("%s-%02i" % (bucketName, bNum + 1), 'w')

for item in buckets[bNum]:

f.write(item)

f.close()

# example of how to use this code

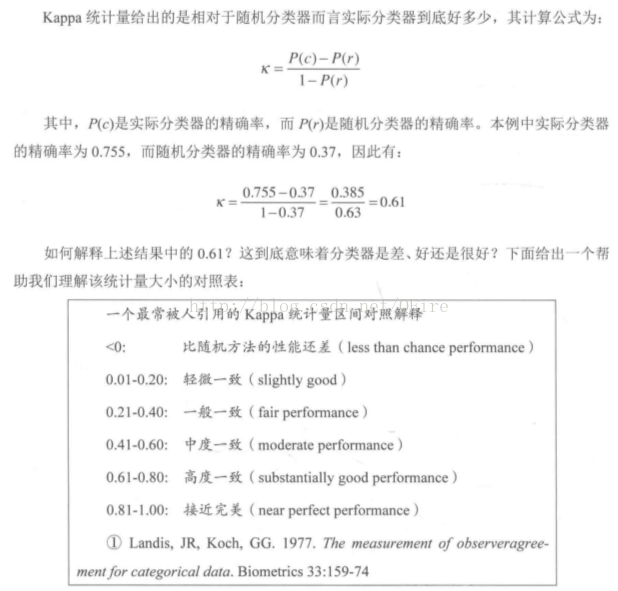

buckets("pimaSmall.txt", 'pimaSmall',',',8)六、Kappa统计量

“分类器到底好到什么程度”

Kappa统计量比较的是分类器与仅仅基于随机的分类器的性能。

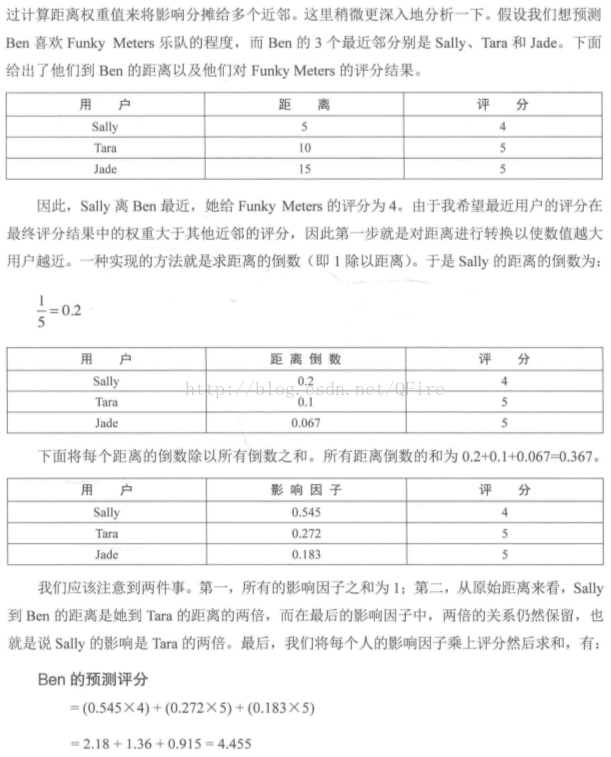

七、近邻算法的改进

考察k个而不只是1个最近的邻居(kNN)

八、一个新数据集也挑战

皮马印第安人糖尿病数据集(Pima Indians Diabetes Data Set),该数据集是美国国立糖尿病、消化和肾脏疾病研究所

每个实例表示一个超过21岁的女性的信息,分两类,即5年没是否患过糖尿病。每个人有8个属性

def knn(self, itemVector):

"""returns the predicted class of itemVector using k

Nearest Neighbors"""

# changed from min to heapq.nsmallest to get the

# k closest neighbors

neighbors = heapq.nsmallest(self.k,

[(self.manhattan(itemVector, item[1]), item)

for item in self.data])

# each neighbor gets a vote

results = {}

for neighbor in neighbors:

theClass = neighbor[1][0]

results.setdefault(theClass, 0)

results[theClass] += 1

resultList = sorted([(i[1], i[0]) for i in results.items()], reverse=True)

#get all the classes that have the maximum votes

maxVotes = resultList[0][0]

possibleAnswers = [i[1] for i in resultList if i[0] == maxVotes]

# randomly select one of the classes that received the max votes

answer = random.choice(possibleAnswers)

return( answer)人们将kNN分类器用于:

5998

5998

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?