如何使用深度学习框架PyTorch进行智慧桥梁数据集多模态多标签分割与检测任务,附详细训练代码。混凝土裂缝类(空洞、风化、短吻鳄裂缝)剥落 缺陷类锈蚀、湿斑、风化 物体部件类(轴承排水防护装备)

如何使用深度学习框架(例如PyTorch)进行智慧桥梁数据集的多标签分割与检测任务,并提供详细的训练代码和数据集准备步骤。假设你已经有一个包含9920张图像的数据集,这些图像已经按类别分类存储在不同的文件夹中,并且提供了YOLO和JSON格式的标注文件。

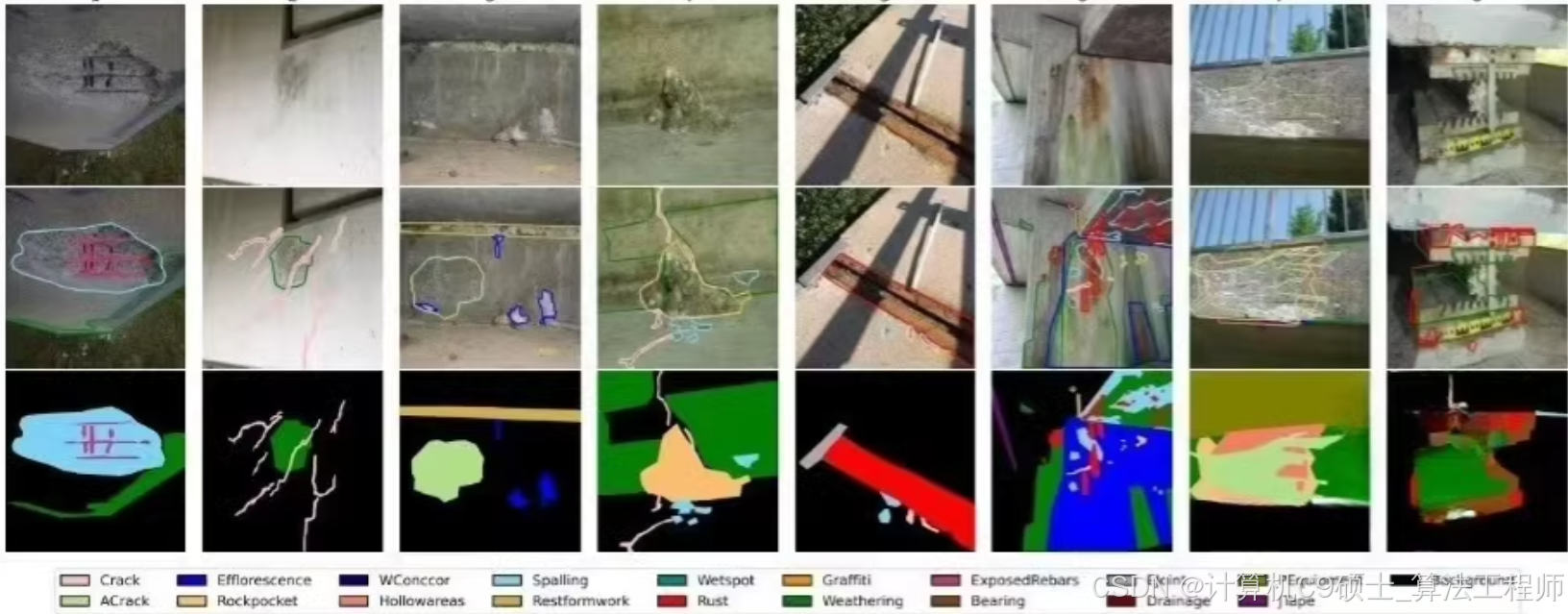

智慧桥梁数据集,桥梁部件和缺陷多标签分割与检测数据集,5.4GB,

来自100多座不同桥梁的9920张图像,

专门为实际使用而设计的包括桥梁检查标准定义的所有视觉上独特的损伤类型。数据集中的标签类别,共分为3大类,19小类,分割提供yolo,json两种标注方式,检测提供yolo标注方式。

第一类[1]混凝土缺陷标签:空洞、风化、短吻鳄裂缝、剥落、重新成型、暴露的钢筋、空洞区域、裂缝、岩袋类,

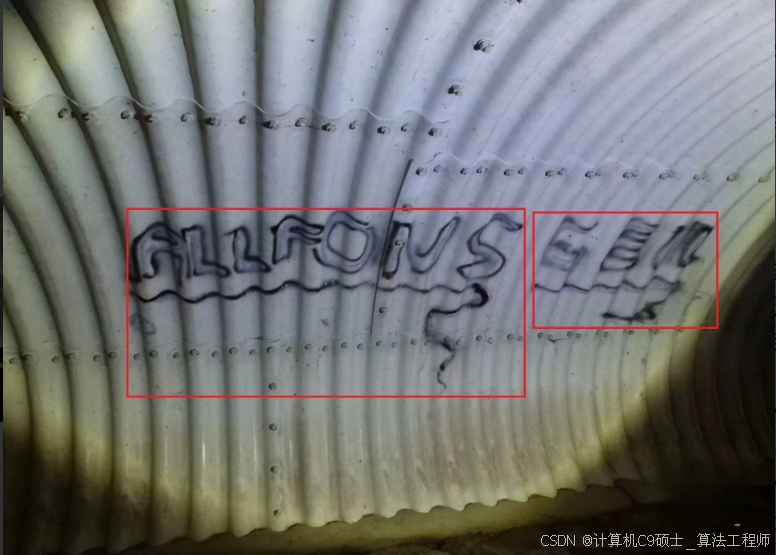

第二类[2]一般缺陷标签:锈蚀、湿斑、风化、涂鸦类。

第三类[3]物体部件标签:轴承、伸缩缝、排水、防护设备、接缝胶带、冲刷/混凝土腐蚀等级

[1]concrete defect tag: cavity,efflorescence,alligator crack,spalling,restformwork,exposed rebars, hollowareas, crack, rockpocket classes,

[2]general defect tag: rust, wetspot, weathering, graffiti classes.

[3]object part tag: bearing, expansion joint, drainage, protective equipment, joint tape, washouts/concrete corrosion classes

以下是每一类的数量统计 Class类别ㅤImages数量ㅤObjects目标数ㅤCount on imageaverage(平均每张图目标数) Area on imageaverage(平均每张图目标占比) rust➔polygon 3915 14720 3.76 4.55% spalling➔polygon 3739 9884 2.64 5.48% weathering➔polygon 3096 4634 1.5 34.5% crack➔polygon 1981 3626 1.83 1.33% efflorescence➔polygon 1729 3965 2.29 9.34% protective equipment➔polygon 1494 1890 1.27 20.66% cavity➔polygon 1355 9271 6.84 4.67% hollowareas➔polygon 1252 1557 1.24 17.87% drainage➔polygon 1181 1652 1.4 6.62% wetspot➔polygon 1109 1661 1.5 18.57%

项目结构

深色版本

bridge_inspection/

├── dataset/

│ ├── images/

│ │ └── *.jpg

│ ├── labels_yolo/

│ │ └── *.txt

│ ├── labels_json/

│ │ └── *.json

├── models/

│ └── unet.py

├── src/

│ ├── train.py

│ ├── predict.py

│ ├── utils.py

├── weights/

│ └── best_model.pth

├── requirements.txt

└── README.md

- 安装依赖

首先,确保你已经安装了必要的库。创建一个requirements.txt文件,内容如下:

深色版本

torch

torchvision

numpy

pandas

matplotlib

scikit-image

tqdm

pycocotools

然后,使用以下命令安装依赖:

bash

深色版本

pip install -r requirements.txt

2. 数据集准备

确保你的数据集已经按照以下结构组织:

深色版本

dataset/

├── images/

│ └── *.jpg

├── labels_yolo/

│ └── *.txt

├── labels_json/

│ └── *.json

每个文件夹中包含对应的图像文件和标签文件。确保所有图像文件都是.jpg格式,标签文件分别是YOLO格式的.txt文件和JSON格式的.json文件。

- 数据集配置

创建一个数据集类,用于加载和预处理数据。

3.1 src/utils.py

python

深色版本

import os

import json

import torch

from torch.utils.data import Dataset, DataLoader

from torchvision import transforms

from PIL import Image

import pandas as pd

class BridgeInspectionDataset(Dataset):

def init(self, image_dir, label_dir, transform=None):

self.image_dir = image_dir

self.label_dir = label_dir

self.transform = transform

self.image_files = os.listdir(image_dir)

self.label_files = os.listdir(label_dir)

def __len__(self):

return len(self.image_files)

def __getitem__(self, index):

img_path = os.path.join(self.image_dir, self.image_files[index])

label_path = os.path.join(self.label_dir, self.image_files[index].replace('.jpg', '.txt'))

image = Image.open(img_path).convert("RGB")

if os.path.exists(label_path):

with open(label_path, 'r') as f:

labels = f.readlines()

labels = [line.strip().split() for line in labels]

labels = [[int(label[0])] + list(map(float, label[1:])) for label in labels]

else:

labels = []

if self.transform:

image = self.transform(image)

return image, labels

def get_data_loaders(image_dir, label_dir, batch_size=16, num_workers=4):

transform = transforms.Compose([

transforms.Resize((256, 256)),

transforms.RandomHorizontalFlip(),

transforms.RandomVerticalFlip(),

transforms.ToTensor(),

transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),

])

dataset = BridgeInspectionDataset(image_dir, label_dir, transform=transform)

train_size = int(0.8 * len(dataset))

val_size = int(0.1 * len(dataset))

test_size = len(dataset) - train_size - val_size

train_dataset, val_dataset, test_dataset = torch.utils.data.random_split(dataset, [train_size, val_size, test_size])

train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True, num_workers=num_workers)

val_loader = DataLoader(val_dataset, batch_size=batch_size, shuffle=False, num_workers=num_workers)

test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False, num_workers=num_workers)

return train_loader, val_loader, test_loader

- 模型定义

定义一个基于YOLOv5的模型用于多标签检测任务。

4.1 models/yolov5.py

python

深色版本

import torch

import torch.nn as nn

import torchvision.models as models

class YOLOv5(nn.Module):

def init(self, num_classes=19):

super(YOLOv5, self).init()

self.backbone = models.mobilenet_v2(pretrained=True).features

self.head = nn.Sequential(

nn.Conv2d(1280, 256, kernel_size=1),

nn.ReLU(),

nn.Conv2d(256, 256, kernel_size=3, padding=1),

nn.ReLU(),

nn.Conv2d(256, num_classes * 5, kernel_size=1) # 5 for bbox (x, y, w, h, conf)

)

def forward(self, x):

x = self.backbone(x)

x = self.head(x)

return x

- 训练代码

5.1 src/train.py

python

深色版本

import torch

import torch.optim as optim

from torch.utils.tensorboard import SummaryWriter

from tqdm import tqdm

from models.yolov5 import YOLOv5

from src.utils import get_data_loaders

import torch.nn.functional as F

def train_model(image_dir, label_dir, epochs=100, batch_size=16, learning_rate=1e-4):

device = torch.device(“cuda” if torch.cuda.is_available() else “cpu”)

model = YOLOv5(num_classes=19).to(device)

criterion = nn.MSELoss()

optimizer = optim.Adam(model.parameters(), lr=learning_rate)

train_loader, val_loader, _ = get_data_loaders(image_dir, label_dir, batch_size=batch_size)

writer = SummaryWriter()

for epoch in range(epochs):

model.train()

running_loss = 0.0

for images, labels in tqdm(train_loader, desc=f"Epoch {epoch + 1}/{epochs}"):

images = images.to(device)

labels = [torch.tensor(label, dtype=torch.float32).to(device) for label in labels]

optimizer.zero_grad()

outputs = model(images)

loss = 0.0

for i in range(len(labels)):

if len(labels[i]) > 0:

pred = outputs[i, :, :, :5 * len(labels[i])]

pred = pred.permute(2, 0, 1).reshape(-1, 5)

target = labels[i][:, 1:]

loss += criterion(pred, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

train_loss = running_loss / len(train_loader)

writer.add_scalar('Training Loss', train_loss, epoch)

model.eval()

running_val_loss = 0.0

with torch.no_grad():

for images, labels in val_loader:

images = images.to(device)

labels = [torch.tensor(label, dtype=torch.float32).to(device) for label in labels]

outputs = model(images)

loss = 0.0

for i in range(len(labels)):

if len(labels[i]) > 0:

pred = outputs[i, :, :, :5 * len(labels[i])]

pred = pred.permute(2, 0, 1).reshape(-1, 5)

target = labels[i][:, 1:]

loss += criterion(pred, target)

running_val_loss += loss.item()

val_loss = running_val_loss / len(val_loader)

writer.add_scalar('Validation Loss', val_loss, epoch)

print(f"Epoch {epoch + 1}/{epochs}, Train Loss: {train_loss:.4f}, Val Loss: {val_loss:.4f}")

torch.save(model.state_dict(), "weights/best_model.pth")

writer.close()

if name == “main”:

image_dir = “dataset/images”

label_dir = “dataset/labels_yolo”

train_model(image_dir, label_dir)

6. 模型评估

训练完成后,可以通过验证集和测试集来评估模型的性能。示例如下:

6.1 src/predict.py

python

深色版本

import torch

import matplotlib.pyplot as plt

from models.yolov5 import YOLOv5

from src.utils import get_data_loaders

import numpy as np

def predict_and_plot(image_dir, label_dir, model_path, num_samples=5):

device = torch.device(“cuda” if torch.cuda.is_available() else “cpu”)

model = YOLOv5(num_classes=19).to(device)

model.load_state_dict(torch.load(model_path))

model.eval()

_, _, test_loader = get_data_loaders(image_dir, label_dir)

fig, axes = plt.subplots(num_samples, 2, figsize=(10, 5 * num_samples))

with torch.no_grad():

for i, (images, labels) in enumerate(test_loader):

if i >= num_samples:

break

images = images.to(device)

labels = [torch.tensor(label, dtype=torch.float32).to(device) for label in labels]

outputs = model(images)

outputs = outputs.cpu().numpy()

images = images.cpu().numpy().transpose((0, 2, 3, 1))

labels = [label.cpu().numpy() for label in labels]

for j in range(len(images)):

ax = axes[j] if num_samples > 1 else axes

ax[0].imshow(images[j])

ax[0].set_title(f"True: {labels[j]}")

ax[0].axis('off')

ax[1].imshow(images[j])

ax[1].set_title(f"Predicted: {outputs[j]}")

ax[1].axis('off')

plt.tight_layout()

plt.show()

if name == “main”:

image_dir = “dataset/images”

label_dir = “dataset/labels_yolo”

model_path = “weights/best_model.pth”

predict_and_plot(image_dir, label_dir, model_path)

7. 运行项目

确保你的数据集已经放在相应的文件夹中。

在项目根目录下运行以下命令启动训练:

bash

深色版本

python src/train.py

训练完成后,运行以下命令进行评估和可视化:

bash

深色版本

python src/predict.py

8. 功能说明

训练模型:train.py脚本用于训练YOLOv5模型,使用均方误差损失函数和Adam优化器。

评估模型:predict.py脚本用于评估模型性能,并可视化输入图像、真实标签和预测结果。

9. 详细注释

utils.py

数据集类:定义了一个BridgeInspectionDataset类,用于加载和预处理数据。

数据加载器:定义了一个get_data_loaders函数,用于创建训练、验证和测试数据加载器。

yolov5.py

YOLOv5模型:定义了一个基于MobileNetV2的YOLOv5模型,用于多标签检测任务。

train.py

训练函数:定义了一个train_model函数,用于训练YOLOv5模型。

训练过程:在每个epoch中,模型在训练集上进行前向传播和反向传播,并在验证集上进行评估。

predict.py

预测和可视化:定义了一个predict_and_plot函数,用于在测试集上进行预测,并可视化输入图像、真实标签和预测结果。

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?