Goal

Scrape every chapter title and the corresponding chapter text of the novel Romance of the Three Kingdoms (三国演义).

URL: https://www.shicimingju.com/book/sanguoyanyi.html

Approach

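First, fetch the index page with a plain, general-purpose request.

Parse all the chapter titles listed on that page.

Get the link to the detail page that belongs to each title.

Extract the chapter content from each detail page.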

Fetching the index page

import requests

url = 'https://www.shicimingju.com/book/sanguoyanyi.html'

page_text = requests.get(url=url).text

# page_text  # uncomment to check that the request succeeded
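The bare request above has no timeout, no status check, and relies on whatever encoding requests guesses. A minimal sketch of a more defensive fetch follows. The helper name `fetch_html`, the `User-Agent` value, and the 10-second timeout are assumptions for illustration, not part of the original code, and whether this site needs any of them is not verified here.

```python
import requests


def fetch_html(url, timeout=10):
    """Fetch a page and return its HTML as text (hypothetical helper)."""
    # Assumption: a browser-like User-Agent; the site may not require one.
    headers = {"User-Agent": "Mozilla/5.0"}
    response = requests.get(url, headers=headers, timeout=timeout)
    # Raise an exception on 4xx/5xx instead of silently parsing an error page.
    response.raise_for_status()
    # Use the encoding detected from the body, in case the header-based
    # guess is wrong and the Chinese text would otherwise be garbled.
    response.encoding = response.apparent_encoding
    return response.text
```

With this helper, the line above would become `page_text = fetch_html(url)`.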

Parsing the titles and detail-page URLs from the index page

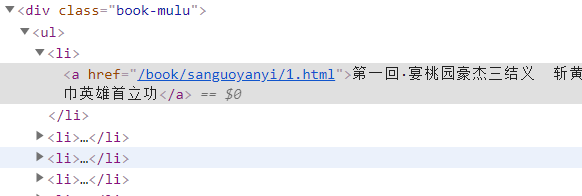

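As before, open the page to be scraped in the browser and inspect its elements:

Locate the page elements that hold the chapter titles. Judging by the selector below, these are the li items (each wrapping an a tag) inside the ul under the element with class book-mulu.

Use BeautifulSoup's select method with a hierarchical selector: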

li_list = soup.select('.book-mulu > ul > li')
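To see what the hierarchical selector matches, here is a small offline sketch. The HTML fragment is a made-up stand-in for the real index page (its structure and href values are assumptions inferred from the selector), and `html.parser` is used instead of `lxml` only so the example runs without an extra dependency.

```python
from bs4 import BeautifulSoup

# Hypothetical fragment imitating the index page layout.
sample_html = """
<div class="book-mulu">
  <ul>
    <li><a href="/book/sanguoyanyi/1.html">Chapter 1</a></li>
    <li><a href="/book/sanguoyanyi/2.html">Chapter 2</a></li>
  </ul>
</div>
<ul><li><a href="/other.html">Unrelated link</a></li></ul>
"""

soup = BeautifulSoup(sample_html, "html.parser")

# ">" is the child combinator: only li elements directly inside a ul that is
# directly inside the .book-mulu element are matched.
li_list = soup.select(".book-mulu > ul > li")

chapters = [(li.a.string, li.a["href"]) for li in li_list]
print(chapters)
```

The unrelated list at the bottom is not matched, because its ul is not a child of the `.book-mulu` element.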

The detail-page URL is the href of the a tag, but it is incomplete; the real URL needs the http:… prefix prepended:

detail_url = 'https://www.shicimingju.com'+li.a['href']
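String concatenation works as long as every href starts with a slash. As an alternative sketch, the standard library's `urljoin` resolves an href against the page it came from and also copes with relative or already-absolute links. The href values below are hypothetical examples, not values read from the site.

```python
from urllib.parse import urljoin

index_url = "https://www.shicimingju.com/book/sanguoyanyi.html"

# Hypothetical href values of the kinds a page might contain.
root_relative = "/book/sanguoyanyi/1.html"
page_relative = "sanguoyanyi/1.html"
absolute = "https://www.shicimingju.com/book/sanguoyanyi/1.html"

# All three resolve to the same absolute URL.
for href in (root_relative, page_relative, absolute):
    print(urljoin(index_url, href))
```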

Loop over the list to collect every chapter:

for li in li_list:
    title = li.a.string  # chapter title
    detail_url = 'https://www.shicimingju.com'+li.a['href']

Send a request to the detail page:

detial_page_text = requests.get(url=detail_url).text

Parse the chapter content out of the detail page:

detial_page_text = requests.get(url=detail_url).text
# Parse the chapter data in the detail page
detial_soup = BeautifulSoup(detial_page_text,'lxml')
div_tag = detial_soup.find('div',class_="chapter_content")
# The chapter content has now been extracted
content = div_tag.text
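If a detail page lacks the expected div, `find` returns `None` and `div_tag.text` raises an `AttributeError`. The sketch below shows one way to guard against that, run against a made-up HTML fragment. The helper name `extract_chapter_text` and the sample markup are assumptions, and `html.parser` stands in for `lxml` so the example has no extra dependency.

```python
from bs4 import BeautifulSoup

# Hypothetical fragment imitating a chapter detail page.
sample_detail_html = """
<div class="chapter_content">
  <p>First paragraph.</p>
  <p>Second paragraph.</p>
</div>
"""


def extract_chapter_text(html):
    """Return the chapter text, or None if the container div is missing."""
    soup = BeautifulSoup(html, "html.parser")
    div_tag = soup.find("div", class_="chapter_content")
    if div_tag is None:
        return None
    # Join the text pieces with newlines and strip surrounding whitespace.
    return div_tag.get_text("\n", strip=True)


print(extract_chapter_text(sample_detail_html))
```

Using `get_text("\n", strip=True)` instead of `.text` is a design choice here: it drops the indentation whitespace between tags and keeps one paragraph per line.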

Then save it to a .txt file:

fp = open('./sanguo.txt','w',encoding='utf-8')

fp.write(title +':'+content+'\n')
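The file above is opened but never closed explicitly. A minimal sketch of the same write step using a `with` block, which closes the file even if an error occurs midway, is shown below. The helper name `save_chapters`, the demo data, and the temporary path are illustrative assumptions.

```python
import os
import tempfile


def save_chapters(chapters, path):
    """Write (title, content) pairs to path, one 'title:content' entry each."""
    # Open once in "w" mode outside the loop; reopening per chapter in "w"
    # mode would overwrite the previous chapters.
    with open(path, "w", encoding="utf-8") as fp:
        for title, content in chapters:
            fp.write(title + ":" + content + "\n")


# Demo with made-up data, written to a temporary directory.
demo_path = os.path.join(tempfile.mkdtemp(), "sanguo_demo.txt")
save_chapters([("Chapter 1", "text one"), ("Chapter 2", "text two")], demo_path)
```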

Putting the code together

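import requests
from bs4 import BeautifulSoup

# Fetch the index page (the same request as in the first step)
url = 'https://www.shicimingju.com/book/sanguoyanyi.html'
page_text = requests.get(url=url).text

# 1. Instantiate a BeautifulSoup object, loading the page source into it
soup = BeautifulSoup(page_text,'lxml')

# 2. Parse the chapter titles and the detail-page URLs
li_list = soup.select('.book-mulu > ul > li')  # hierarchical selector

fp = open('./sanguo.txt','w',encoding='utf-8')
for li in li_list:
    title = li.a.string  # chapter title
    detail_url = 'https://www.shicimingju.com'+li.a['href']
    # Request the detail page and parse out the chapter content
    detial_page_text = requests.get(url=detail_url).text
    # Parse the chapter data in the detail page
    detial_soup = BeautifulSoup(detial_page_text,'lxml')
    div_tag = detial_soup.find('div',class_="chapter_content")
    # The chapter content has now been extracted
    content = div_tag.text
    # Write each chapter as the loop goes
    fp.write(title +':'+content+'\n')
    print(title,'scraped successfully!')
fp.close()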
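The script above mixes downloading and parsing, so it can only be tried against the live site. As a rough sketch of an alternative structure, the generator below takes the download step as a parameter, which lets the parsing logic be exercised offline. The name `crawl_book`, the `fetch` parameter, the example.com URLs, and the sample pages are all hypothetical. `html.parser` replaces `lxml` only to avoid an extra dependency.

```python
from urllib.parse import urljoin

from bs4 import BeautifulSoup


def crawl_book(index_url, fetch):
    """Yield (title, content) pairs; fetch is any callable url -> HTML text."""
    index_soup = BeautifulSoup(fetch(index_url), "html.parser")
    for li in index_soup.select(".book-mulu > ul > li"):
        # Skip list items without a usable link.
        if li.a is None or not li.a.get("href"):
            continue
        title = li.a.get_text(strip=True)
        detail_html = fetch(urljoin(index_url, li.a["href"]))
        div_tag = BeautifulSoup(detail_html, "html.parser").find(
            "div", class_="chapter_content"
        )
        # Skip pages where the expected container is missing.
        if div_tag is None:
            continue
        yield title, div_tag.get_text("\n", strip=True)


# Offline stand-in for the network: a dict of made-up pages.
fake_pages = {
    "https://example.com/book/index.html": (
        '<div class="book-mulu"><ul>'
        '<li><a href="/book/1.html">Chapter 1</a></li>'
        '<li><a href="/book/2.html">Chapter 2</a></li>'
        "</ul></div>"
    ),
    "https://example.com/book/1.html": (
        '<div class="chapter_content"><p>Text of chapter 1.</p></div>'
    ),
    "https://example.com/book/2.html": "<p>No chapter container here.</p>",
}

results = list(crawl_book("https://example.com/book/index.html", fake_pages.__getitem__))
print(results)
```

Against the real site, `fetch` could be something like `lambda u: requests.get(u, timeout=10).text`, ideally with a short `time.sleep` between requests to avoid hammering the server.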

Result
