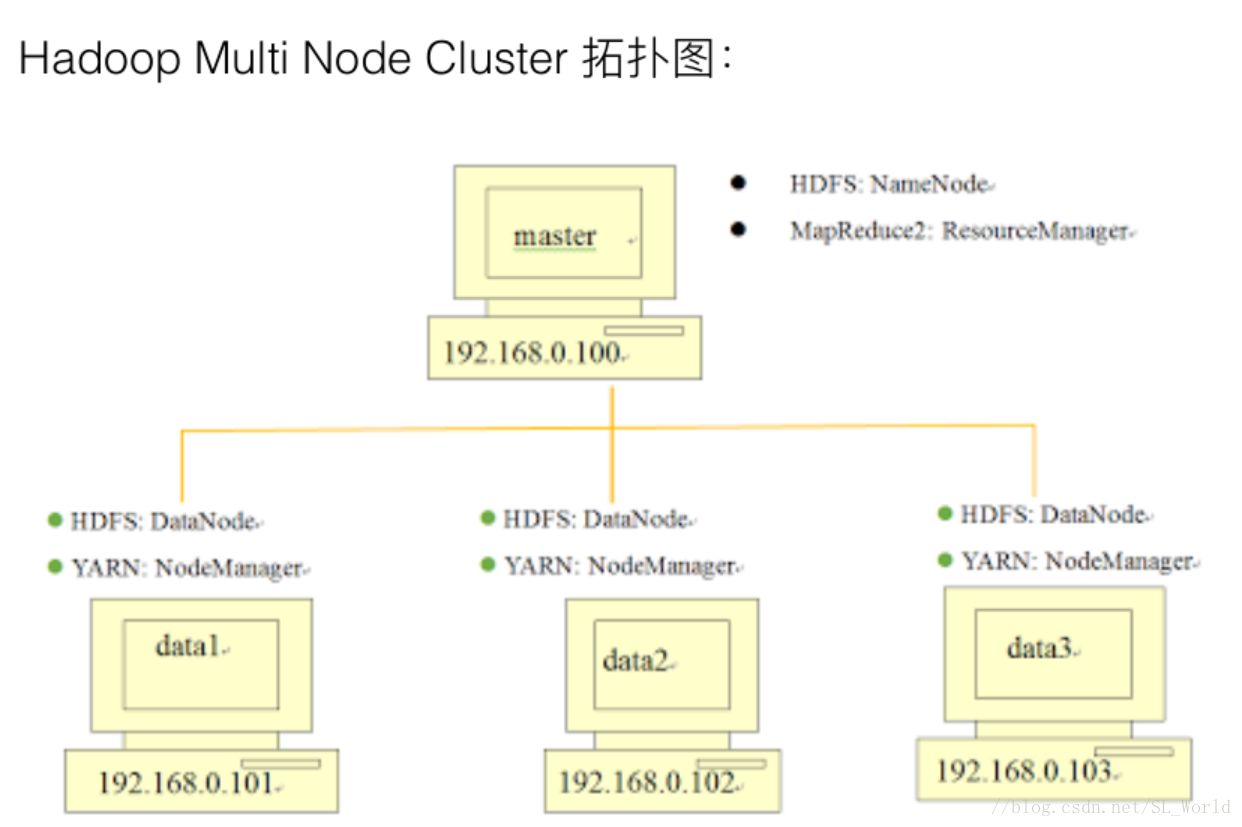

For a fully distributed Hadoop setup on CentOS 7.5, see:

Building a Hadoop 3.0.3 Fully Distributed Cluster on CentOS 7.5

1 Copy the Single Node Cluster to data1

For the single-node installation steps and code, see: Single Node Cluster Installation Steps

2 Configure data1

Edit the network interfaces file:

sudo gedit /etc/network/interfaces

auto lo

iface lo inet loopback

auto ens33

iface ens33 inet static

address 192.168.0.101

netmask 255.255.255.0

network 192.168.0.0

gateway 192.168.0.1

dns-nameservers 192.168.0.1

sudo gedit /etc/hostname

data1

sudo gedit /etc/hosts

127.0.0.1 localhost

127.0.1.1 hadoop

192.168.0.100 master

192.168.0.101 data1

192.168.0.102 data2

192.168.0.103 data3
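Every node needs the same four name-to-address mappings, and a missing line tends to show up only later as a connection error. A minimal sketch of a check, assuming a hypothetical helper named `check_hosts_entries` (it is not part of Hadoop or Ubuntu) that scans a hosts-format file for each expected name:

```shell
#!/usr/bin/env bash
# Report cluster hostnames that have no entry in a hosts-format file.
# Usage: check_hosts_entries <hosts-file> <hostname>...
# Returns 0 when every name is present, 1 otherwise.
check_hosts_entries() {
    local hosts_file="$1"
    shift
    local name
    local missing=0
    for name in "$@"; do
        # Field 1 is the IP address; later fields are names. Stop at a comment.
        if ! awk -v want="$name" '
            /^[ \t]*#/ { next }
            {
                for (i = 2; i <= NF; i++) {
                    if ($i ~ /^#/) break
                    if ($i == want) found = 1
                }
            }
            END { exit(found ? 0 : 1) }
        ' "$hosts_file"; then
            echo "missing: $name"
            missing=1
        fi
    done
    return "$missing"
}
```

On a node this could be called as `check_hosts_entries /etc/hosts master data1 data2 data3`; it prints nothing and returns 0 when all four names are present.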

sudo gedit /usr/local/hadoop/etc/hadoop/core-site.xml

<property>

<name>fs.default.name</name>

<value>hdfs://master:9000</value>

</property>
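After editing, it can help to read the value back and confirm that it points at master. The sketch below assumes a hypothetical helper, `get_hadoop_property`, that does a naive line-based lookup; it is not a real XML parser and relies on `<name>` and `<value>` sitting on separate lines, as they do in the fragments here.

```shell
#!/usr/bin/env bash
# Print the <value> that follows <name>KEY</name> in a Hadoop *-site.xml file.
# Naive line-based lookup: assumes <name> and <value> are on separate lines.
# Prints nothing if the key is not found.
get_hadoop_property() {
    local file="$1"
    local key="$2"
    awk -v key="$key" '
        index($0, "<name>" key "</name>") { want = 1; next }
        want && /<value>/ {
            sub(/.*<value>/, "")
            sub(/<\/value>.*/, "")
            print
            exit
        }
    ' "$file"
}
```

For example, `get_hadoop_property /usr/local/hadoop/etc/hadoop/core-site.xml fs.default.name` would be expected to print the `hdfs://master:9000` value set above. On Hadoop 2 and later, `fs.default.name` is a deprecated alias of `fs.defaultFS`.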

sudo gedit /usr/local/hadoop/etc/hadoop/yarn-site.xml

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>master:8025</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>master:8030</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>master:8050</value>

</property>

sudo gedit /usr/local/hadoop/etc/hadoop/mapred-site.xml

<property>

<name>mapred.job.tracker</name>

<value>master:54311</value>

</property>

sudo gedit /usr/local/hadoop/etc/hadoop/hdfs-site.xml

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/usr/local/hadoop/hadoop_data/hdfs/datanode</value>

</property>
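Stray spaces inside a `<value>` element are easy to miss, and whether Hadoop trims them depends on how a given property is read, so it is simpler to keep values free of padding. A small sketch, assuming a hypothetical helper named `find_padded_values`, that flags any `<value>` whose content starts or ends with a space or tab:

```shell
#!/usr/bin/env bash
# List <value> elements whose content starts or ends with a space or tab.
# Prints "line N: <text>" for each hit; returns 1 if any were found, else 0.
find_padded_values() {
    local file="$1"
    awk '
        /<value>[ \t]/ || /[ \t]<\/value>/ {
            printf "line %d: %s\n", NR, $0
            bad = 1
        }
        END { exit(bad ? 1 : 0) }
    ' "$file"
}
```

It could be run against each edited file, for example `find_padded_values /usr/local/hadoop/etc/hadoop/hdfs-site.xml`.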

3 Copy data1 to data2, data3, and master

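4 Configure data2 and data3

Repeat the network interface and hostname edits from step 2 on each node, using 192.168.0.102 with data2 and 192.168.0.103 with data3.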
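The interfaces stanza differs between nodes only in its address line, so it can be generated rather than retyped. A minimal sketch, assuming a hypothetical helper named `print_static_stanza`; the netmask, network, gateway, and DNS values are copied from the data1 settings in step 2, and the interface name `ens33` is the one used there:

```shell
#!/usr/bin/env bash
# Print an /etc/network/interfaces static stanza for one node.
# Usage: print_static_stanza <interface> <address>
# Netmask, network, gateway, and DNS are the values used for data1 in step 2.
print_static_stanza() {
    local iface="$1"
    local address="$2"
    cat <<EOF
auto $iface
iface $iface inet static
address $address
netmask 255.255.255.0
network 192.168.0.0
gateway 192.168.0.1
dns-nameservers 192.168.0.1
EOF
}
```

For data2 the call would be `print_static_stanza ens33 192.168.0.102`, and for data3 `print_static_stanza ens33 192.168.0.103`. The output is only printed; pasting it into `/etc/network/interfaces` below the loopback lines is still a manual step.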

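5 Configure master

Set the address to 192.168.0.100 and the hostname to master in the same way as in step 2, then edit the following files.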

sudo gedit /usr/local/hadoop/etc/hadoop/hdfs-site.xml

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/usr/local/hadoop/hadoop_data/hdfs/namenode</value>

</property>

sudo gedit /usr/local/hadoop/etc/hadoop/master

master

sudo gedit /usr/local/hadoop/etc/hadoop/slaves

data1

data2

data3

6 Log in from master to data1, data2, and data3 and create the HDFS directories

ssh data1

sudo rm -rf /usr/local/hadoop/hadoop_data/hdfs

sudo mkdir -p /usr/local/hadoop/hadoop_data/hdfs/datanode

sudo chown -R <username>:<group> /usr/local/hadoop

exit

ssh data2

……

ssh data3

……

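Note: the first command is rm (remove). The -r option deletes the specified directory together with everything under it, and -f (force) deletes without asking for confirmation.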
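The same three commands run on every data node, so they can be written once per host taken from a slaves-style file. The sketch below assumes a hypothetical helper named `print_datanode_setup` that only prints the commands for review and runs nothing; the user and group arguments are placeholders for the account that owns the Hadoop installation.

```shell
#!/usr/bin/env bash
# Print (without running) the step 6 commands for every host in a slaves-style file.
# Usage: print_datanode_setup <slaves-file> <user> <group>
print_datanode_setup() {
    local slaves_file="$1"
    local user="$2"
    local group="$3"
    local hdfs_dir=/usr/local/hadoop/hadoop_data/hdfs
    local host
    # The extra -n test keeps a final line that lacks a trailing newline.
    while IFS= read -r host || [ -n "$host" ]; do
        # Skip blank lines in the host list.
        if [ -z "$host" ]; then
            continue
        fi
        printf 'ssh -t %s "sudo rm -rf %s && sudo mkdir -p %s/datanode && sudo chown -R %s:%s /usr/local/hadoop"\n' \
            "$host" "$hdfs_dir" "$hdfs_dir" "$user" "$group"
    done < "$slaves_file"
}
```

Called as `print_datanode_setup /usr/local/hadoop/etc/hadoop/slaves <username> <group>`, it emits one `ssh -t` line per data node. The `-t` option asks ssh for a terminal so that sudo can prompt for a password.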

7 Create and format the NameNode HDFS directory

sudo rm -rf /usr/local/hadoop/hadoop_data/hdfs

mkdir -p /usr/local/hadoop/hadoop_data/hdfs/namenode

sudo chown -R <username>:<group> /usr/local/hadoop

hadoop namenode -format

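Note: the first command is rm (remove). The -r option deletes the specified directory together with everything under it, and -f (force) deletes without asking for confirmation.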
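Both the rm -rf and the format command discard any existing NameNode metadata, so before repeating this step it may be worth checking whether the directory was already formatted. A minimal sketch, assuming a hypothetical helper named `namenode_is_formatted`; it relies on the fact that a formatted NameNode directory contains `current/VERSION`:

```shell
#!/usr/bin/env bash
# Succeed (return 0) if the directory already holds NameNode metadata.
# A formatted NameNode directory contains current/VERSION.
namenode_is_formatted() {
    local name_dir="$1"
    [ -f "$name_dir/current/VERSION" ]
}
```

One possible use is `namenode_is_formatted /usr/local/hadoop/hadoop_data/hdfs/namenode && echo "already formatted"`, run before deciding whether to wipe the directory.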

8 Start the Hadoop Multi Node Cluster

start-dfs.sh

start-yarn.sh
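Once both scripts finish, the JDK's `jps` tool lists the Java daemons running on a node. With the layout above one would typically expect NameNode, SecondaryNameNode, and ResourceManager on master, and DataNode and NodeManager on each data node. The sketch below assumes a hypothetical helper named `missing_daemons` that reads `jps` output on standard input and reports any expected name that is absent:

```shell
#!/usr/bin/env bash
# Read jps output on stdin and print each expected daemon that is absent.
# Usage: jps | missing_daemons NameNode SecondaryNameNode ResourceManager
# Returns 1 if at least one expected daemon is missing, else 0.
missing_daemons() {
    local listing
    local name
    local missing=0
    listing=$(cat)
    for name in "$@"; do
        # jps prints "<pid> <ClassName>"; compare the second field exactly so
        # that SecondaryNameNode is not mistaken for NameNode.
        if ! printf '%s\n' "$listing" | awk -v want="$name" '$2 == want { found = 1 } END { exit(found ? 0 : 1) }'; then
            echo "not running: $name"
            missing=1
        fi
    done
    return "$missing"
}
```

On master this could be run as `jps | missing_daemons NameNode SecondaryNameNode ResourceManager`, and on a data node as `jps | missing_daemons DataNode NodeManager`.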
