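Roughly speaking, only variables have gradients, because a gradient is what you get by differentiating with respect to something. In PyTorch terms, only tensors created with requires_grad=True end up with a populated .grad.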

"""

Created on Tue Jan 12 16:41:46 2021

Understanding gradients in PyTorch

@author: user

"""

import torch

# torch.autograd.Variable has been deprecated since PyTorch 0.4;

# tensors carry requires_grad themselves, so it is no longer needed.

# Inputs: tracked by autograd.

x11 = torch.tensor([1.0], requires_grad=True)

x12 = torch.tensor([2.0], requires_grad=True)

# Weights: plain tensors, so no gradient is computed for them.

w11 = torch.tensor([0.1])

w12 = torch.tensor([0.2])

# w11.requires_grad_(True)

# w12.requires_grad_(True)

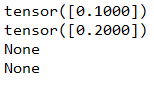

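x21 = x11 * w11 + x12 * w12

x21.backward()

print(x11.grad)  # d(x21)/d(x11) = w11 = 0.1

print(x12.grad)  # d(x21)/d(x12) = w12 = 0.2

print(w11.grad)  # None: w11 does not require grad

print(w12.grad)  # None: w12 does not require grad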

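x11.grad is the gradient of x21 with respect to x11, which equals w11 (0.1). x12.grad is the gradient of x21 with respect to x12, which equals w12 (0.2). w11.grad and w12.grad print None, because the weights were never marked as requiring gradients.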
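For comparison, here is a minimal sketch of the same one-layer computation with the weights tracked as well. It assumes a PyTorch version of 0.4 or later, where requires_grad can be passed directly to torch.tensor; the numbers are the ones used in this post.

```python
import torch

# Same values as in the post, but now the weights are tracked too.
x11 = torch.tensor([1.0], requires_grad=True)
x12 = torch.tensor([2.0], requires_grad=True)
w11 = torch.tensor([0.1], requires_grad=True)
w12 = torch.tensor([0.2], requires_grad=True)

x21 = x11 * w11 + x12 * w12
x21.backward()

# d(x21)/d(w11) = x11 and d(x21)/d(w12) = x12
print(w11.grad)
print(w12.grad)
```

With the weights tracked, w11.grad and w12.grad should no longer be None: they hold the values of x11 and x12, which is the same rule seen from the other side.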

Now add one more layer.

import torch

# Same setup as before: inputs are tracked, weights are not.

x11 = torch.tensor([1.0], requires_grad=True)

x12 = torch.tensor([2.0], requires_grad=True)

w11 = torch.tensor([0.1])

w12 = torch.tensor([0.2])

# w11.requires_grad_(True)

# w12.requires_grad_(True)

x21=x11*w11+x12*w12

# x21.backward()

# print(x11.grad)

# print(x12.grad)

# print(w11.grad)

# print(w12.grad)

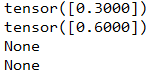

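x31 = 3 * x21 + 4

x31.backward()

print(x11.grad)  # d(x31)/d(x11) = 3 * w11 = 0.3

print(x12.grad)  # d(x31)/d(x12) = 3 * w12 = 0.6

print(w11.grad)  # None: w11 does not require grad

print(w12.grad)  # None: w12 does not require grad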
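The values printed for the two-layer case can be checked by hand with the chain rule. The derivation below is only a sketch that uses the names and numbers from the code (x31 = 3*x21 + 4 and x21 = x11*w11 + x12*w12):

$$
\frac{\partial x_{31}}{\partial x_{11}} = \frac{\partial x_{31}}{\partial x_{21}} \cdot \frac{\partial x_{21}}{\partial x_{11}} = 3 \cdot w_{11} = 3 \times 0.1 = 0.3
$$

$$
\frac{\partial x_{31}}{\partial x_{12}} = \frac{\partial x_{31}}{\partial x_{21}} \cdot \frac{\partial x_{21}}{\partial x_{12}} = 3 \cdot w_{12} = 3 \times 0.2 = 0.6
$$

The constant 4 has zero derivative, so it does not affect either gradient.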
