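The previous post covered training and testing with Caffe, where the accuracy came out high. Now let's look at the weights and features of each layer:

The results are visualized with Python.

Input test image: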

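List the name and shape of every blob. The first dimension is the batch size, the second is the number of feature maps, and the third and fourth are the height and width of each feature map. The input is a 227*227 image with three channels. conv1 applies 96 filters, each of which spans all three input channels, so it produces 96 feature maps (the conv1 weight shape (96, 3, 11, 11) printed further down confirms this).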

In :[(k, v.data.shape) for k, v in net.blobs.items()]

Out :

[('data', (50L, 3L, 227L, 227L)),

('conv1', (50L, 96L, 55L, 55L)),

('norm1', (50L, 96L, 55L, 55L)),

('pool1', (50L, 96L, 27L, 27L)),

('conv2', (50L, 256L, 27L, 27L)),

('norm2', (50L, 256L, 27L, 27L)),

('pool2', (50L, 256L, 13L, 13L)),

('conv3', (50L, 384L, 13L, 13L)),

('conv4', (50L, 384L, 13L, 13L)),

('conv5', (50L, 256L, 13L, 13L)),

('pool5', (50L, 256L, 6L, 6L)),

('fc6', (50L, 4096L)),

('fc7', (50L, 4096L)),

('fc8', (50L, 2L)),

('prob', (50L, 2L))]
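The spatial sizes in this list (227, 55, 27, 13, 6) can be checked by hand. Below is a minimal sketch of that arithmetic. The kernel sizes match the weight shapes printed in this post, but the strides and paddings are assumptions taken from the reference CaffeNet definition, since the prototxt is not shown here; the helper names are made up for illustration.

```python
def out_size(size, kernel, stride=1, pad=0):
    """Spatial output size of a convolution or pooling window (floor division).

    Caffe's pooling rounds up instead of down, but for the sizes used here
    the division is exact, so both conventions agree.
    """
    return (size + 2 * pad - kernel) // stride + 1


# (layer, kernel, stride, pad). Strides and pads are ASSUMED from the
# reference CaffeNet; the norm layers are skipped because they keep the size.
LAYERS = [
    ("conv1", 11, 4, 0),
    ("pool1", 3, 2, 0),
    ("conv2", 5, 1, 2),
    ("pool2", 3, 2, 0),
    ("conv3", 3, 1, 1),
    ("conv4", 3, 1, 1),
    ("conv5", 3, 1, 1),
    ("pool5", 3, 2, 0),
]


def trace_sizes(input_size=227):
    """Return {layer name: output height/width} for a square input."""
    sizes = {}
    size = input_size
    for name, kernel, stride, pad in LAYERS:
        size = out_size(size, kernel, stride, pad)
        sizes[name] = size
    return sizes


sizes = trace_sizes()
for name, _, _, _ in LAYERS:
    print(name, sizes[name])
```

Under these assumptions pool5 ends up as 256 maps of 6*6, which flattens to the 9216 inputs that fc6 expects.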

Print the shapes of the network's parameters (the weights of each layer):

In: [(k, v[0].data.shape) for k, v in net.params.items()]

Out:

[('conv1', (96L, 3L, 11L, 11L)),

('conv2', (256L, 48L, 5L, 5L)),

('conv3', (384L, 256L, 3L, 3L)),

('conv4', (384L, 192L, 3L, 3L)),

('conv5', (256L, 192L, 3L, 3L)),

('fc6', (4096L, 9216L)),

('fc7', (4096L, 4096L)),

('fc8', (2L, 4096L))]
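One detail worth noticing in these shapes: conv2 has only 48 input channels per filter even though pool1 outputs 96 maps, and conv4 and conv5 have 192 rather than 384. That is consistent with grouped convolution (group = 2), as in the reference CaffeNet. The sketch below infers the group count and counts the weights. It assumes each conv layer is fed by the blob listed just before it in the blob output earlier in this post, which is an assumption since the prototxt is not shown; biases are not counted.

```python
# Weight shapes copied from the output above.
WEIGHT_SHAPES = {
    "conv1": (96, 3, 11, 11),
    "conv2": (256, 48, 5, 5),
    "conv3": (384, 256, 3, 3),
    "conv4": (384, 192, 3, 3),
    "conv5": (256, 192, 3, 3),
    "fc6": (4096, 9216),
    "fc7": (4096, 4096),
    "fc8": (2, 4096),
}

# Channels arriving at each conv layer, read from the blob shapes earlier
# in the post (ASSUMES a purely sequential network).
BOTTOM_CHANNELS = {"conv1": 3, "conv2": 96, "conv3": 256, "conv4": 384, "conv5": 384}


def infer_groups():
    """group = channels entering the layer / channels each filter actually sees."""
    return {
        name: BOTTOM_CHANNELS[name] // WEIGHT_SHAPES[name][1]
        for name in BOTTOM_CHANNELS
    }


def count_weights(shape):
    """Number of scalar weights in a blob of the given shape."""
    total = 1
    for dim in shape:
        total *= dim
    return total


groups = infer_groups()
weight_counts = {name: count_weights(shape) for name, shape in WEIGHT_SHAPES.items()}
total_weights = sum(weight_counts.values())

print(groups)
print(weight_counts)
print(total_weights)
```

Most of the weights sit in fc6 and fc7; the convolutional layers are small by comparison.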

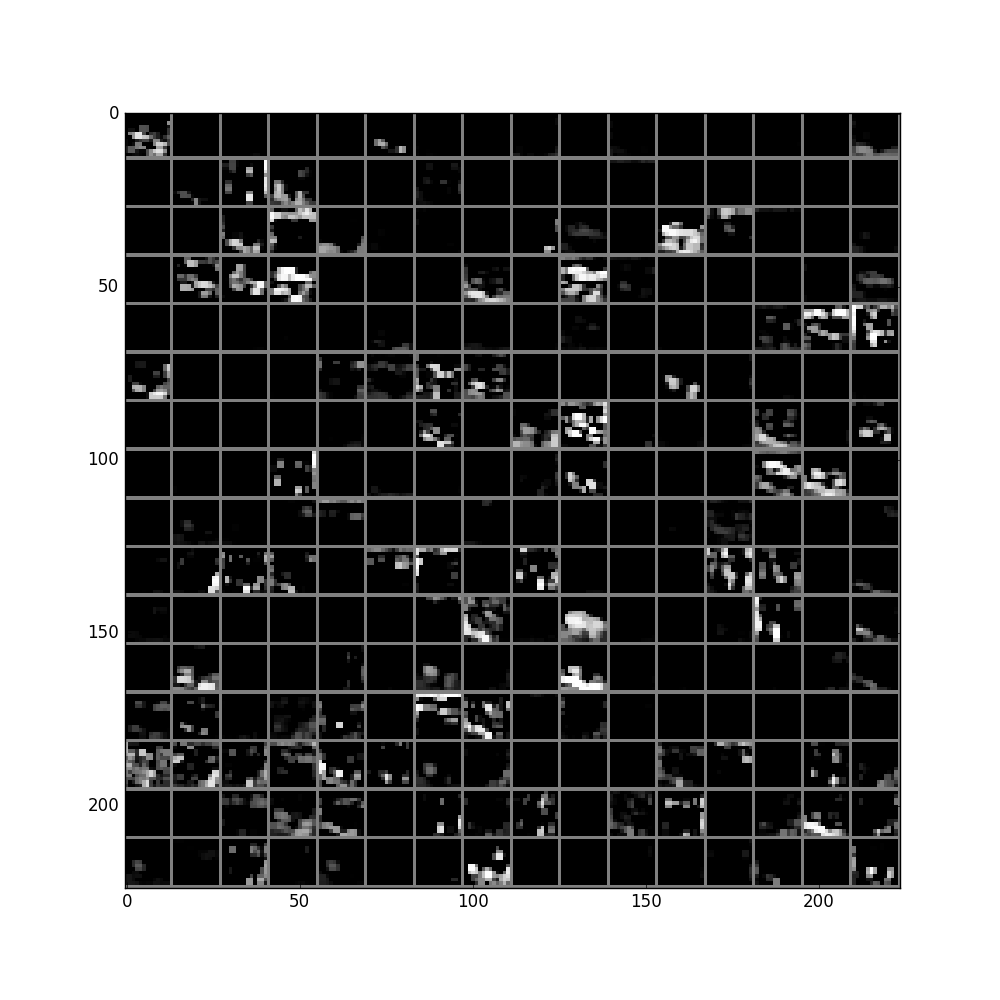

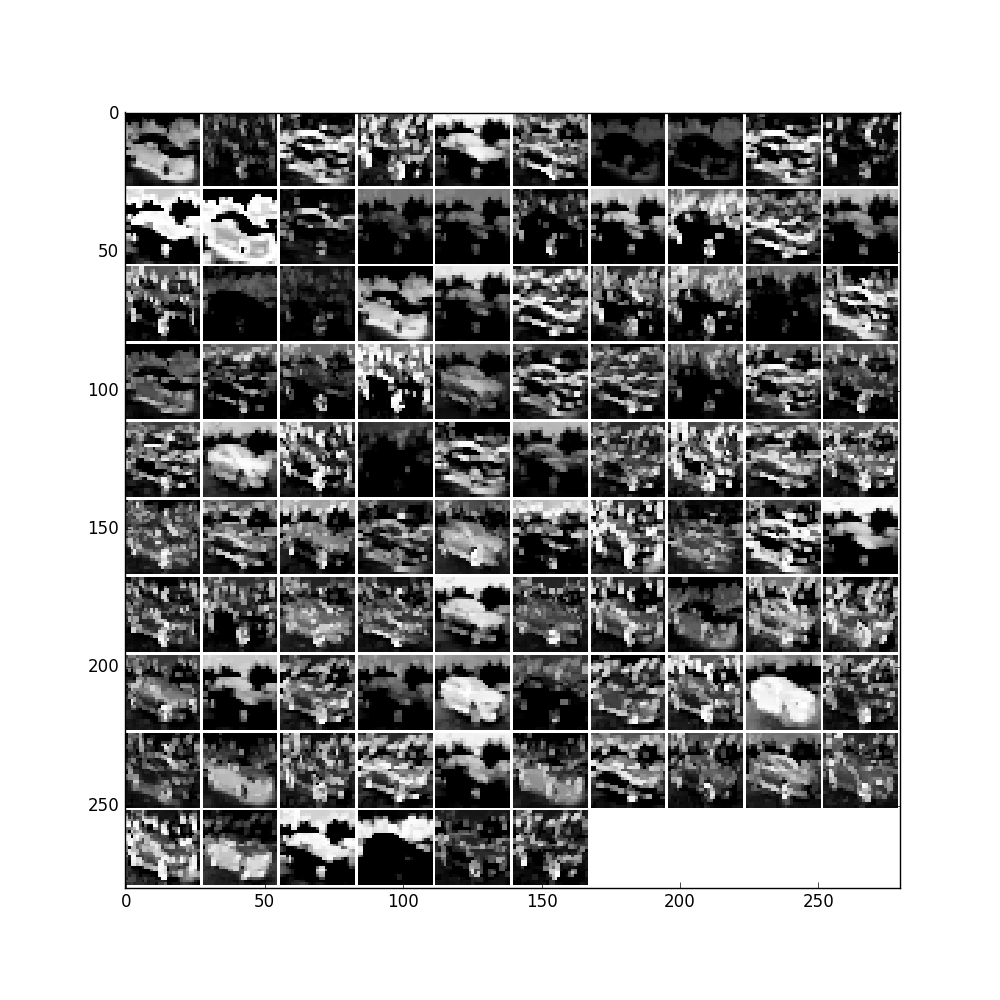

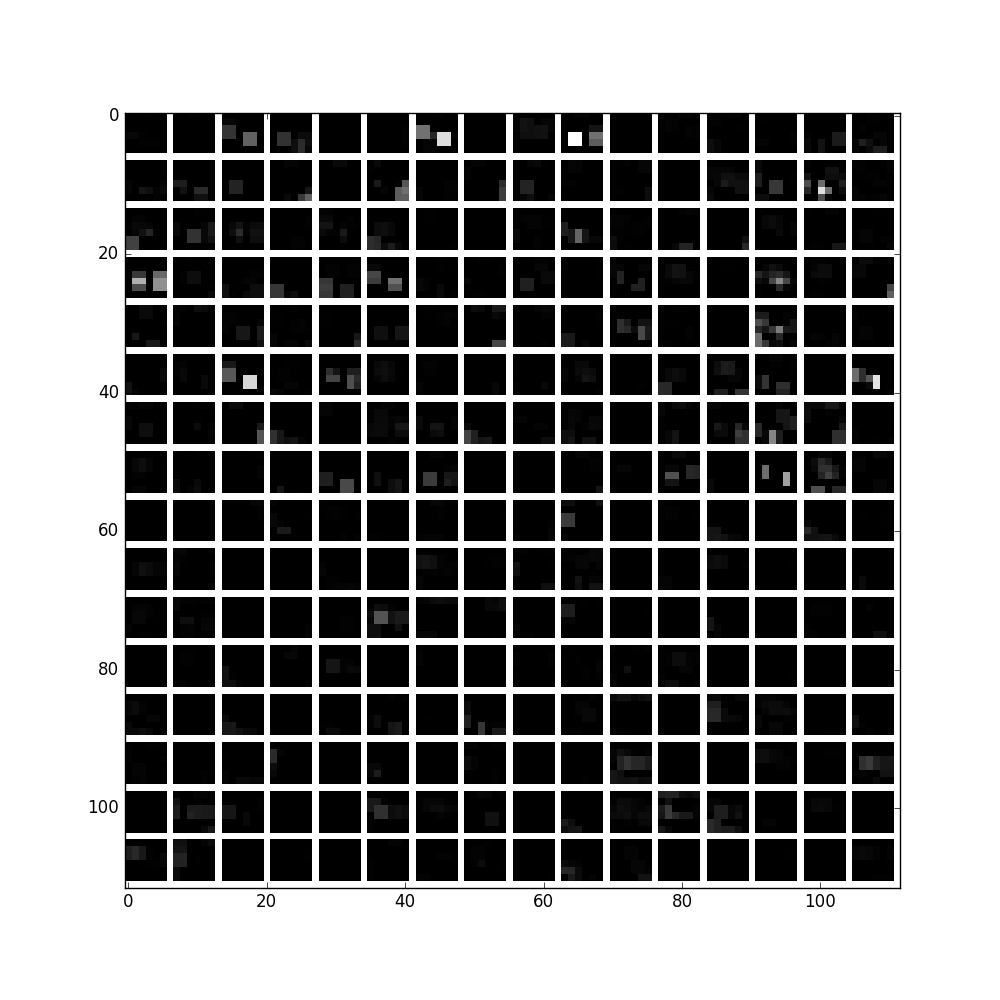

Plot the conv1 params (filters):

filters = net.params['conv1'][0].data

vis_square(filters.transpose(0, 2, 3, 1))

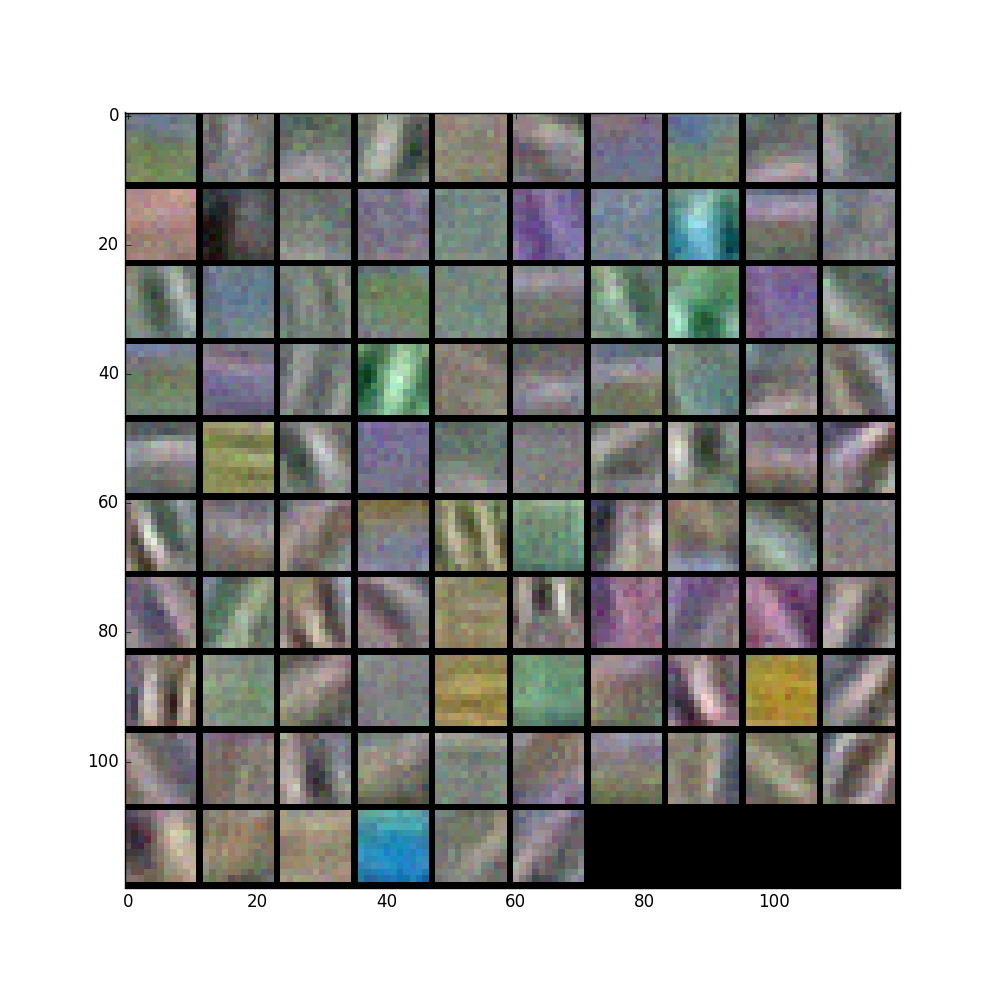

Output of 'conv1':

feat = net.blobs['conv1'].data[0, :96]

vis_square(feat, padval=1)

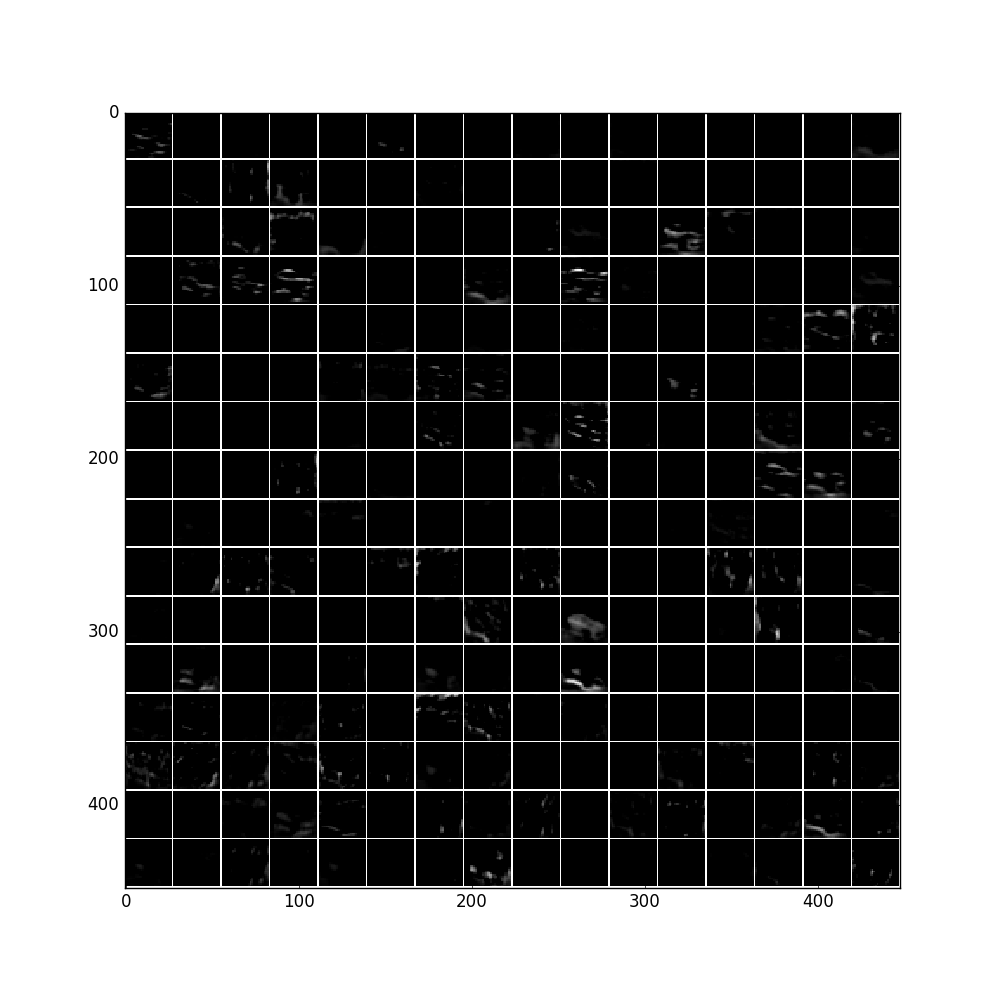

Outputs of 'norm1' and 'pool1':

feat = net.blobs['norm1'].data[0]

vis_square(feat, padval=1)

feat = net.blobs['pool1'].data[0]

vis_square(feat, padval=1)

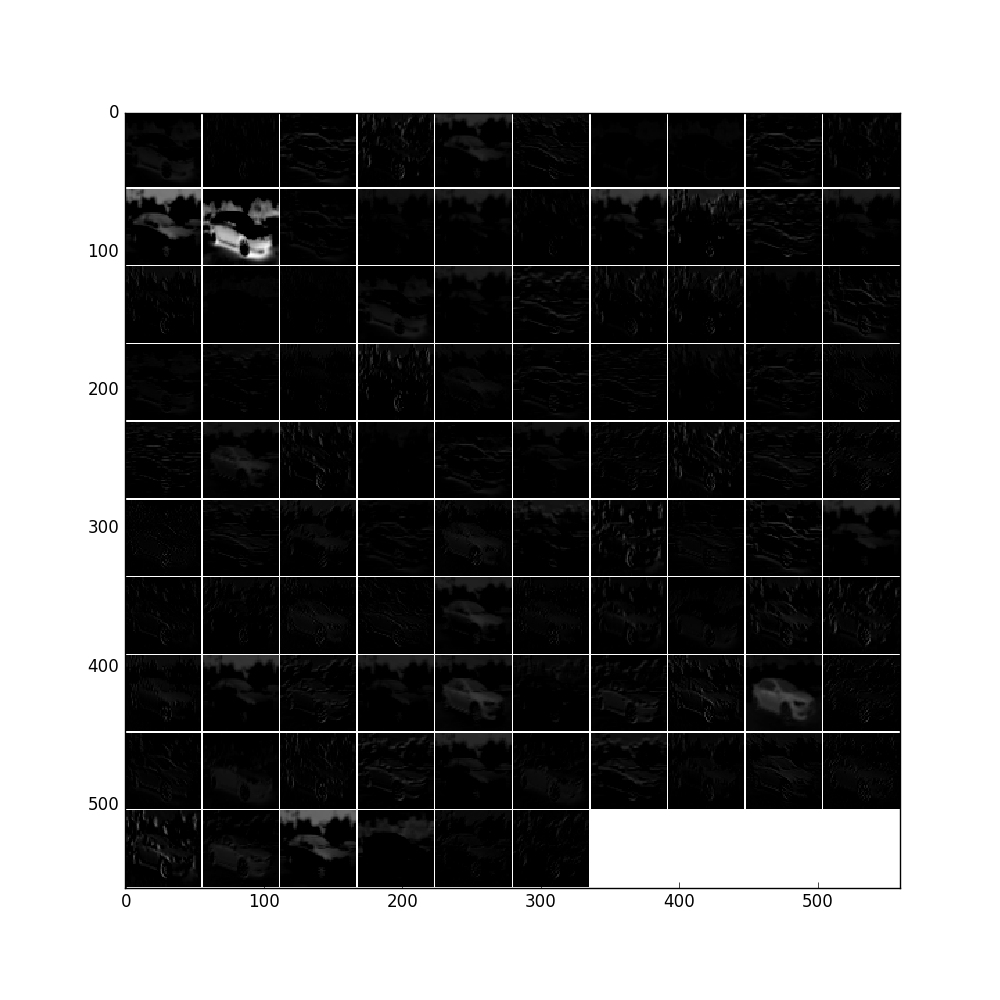

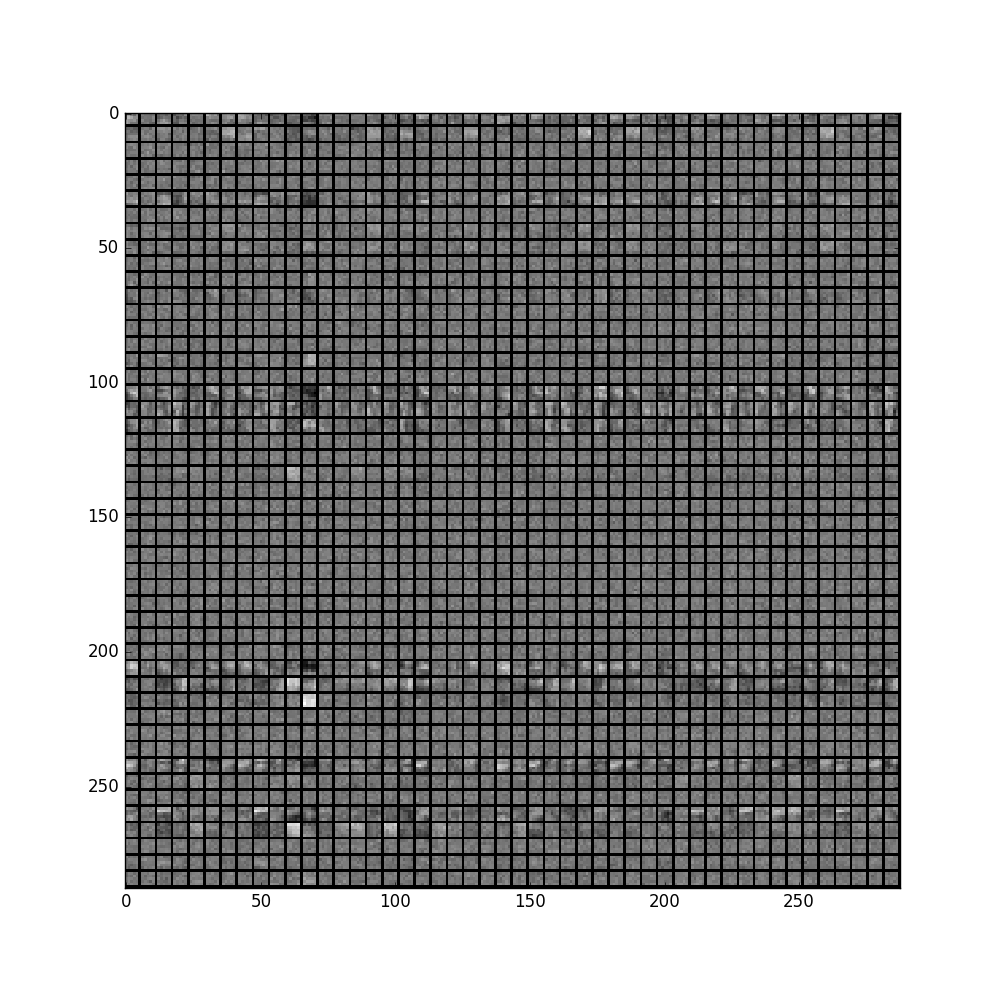

filters = net.params['conv2'][0].data

vis_square(filters[:48].reshape(48**2, 5, 5))

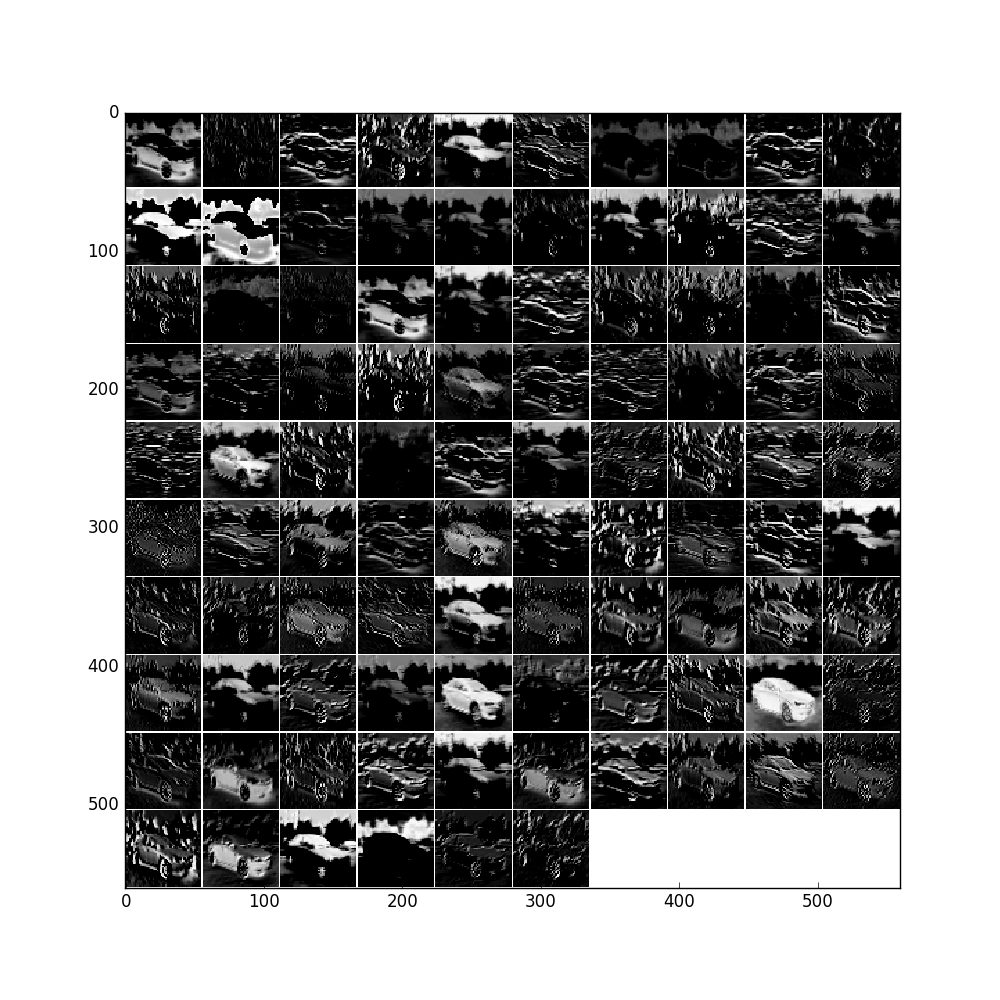

feat = net.blobs['conv2'].data[0]

vis_square(feat, padval=1)

feat = net.blobs['norm2'].data[0]

vis_square(feat, padval=0.5)

feat = net.blobs['pool2'].data[0]

vis_square(feat, padval=0.5)

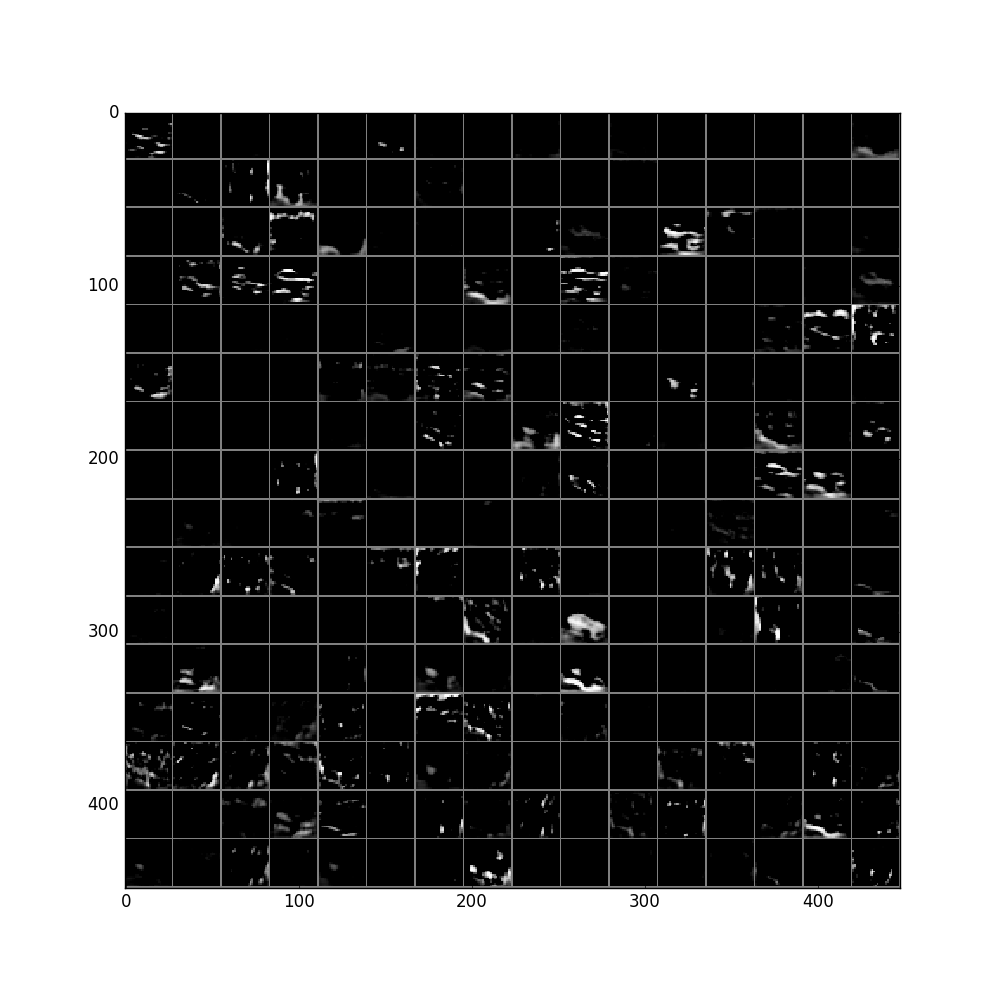

Several intermediate layers are omitted here...

pool5 layer:

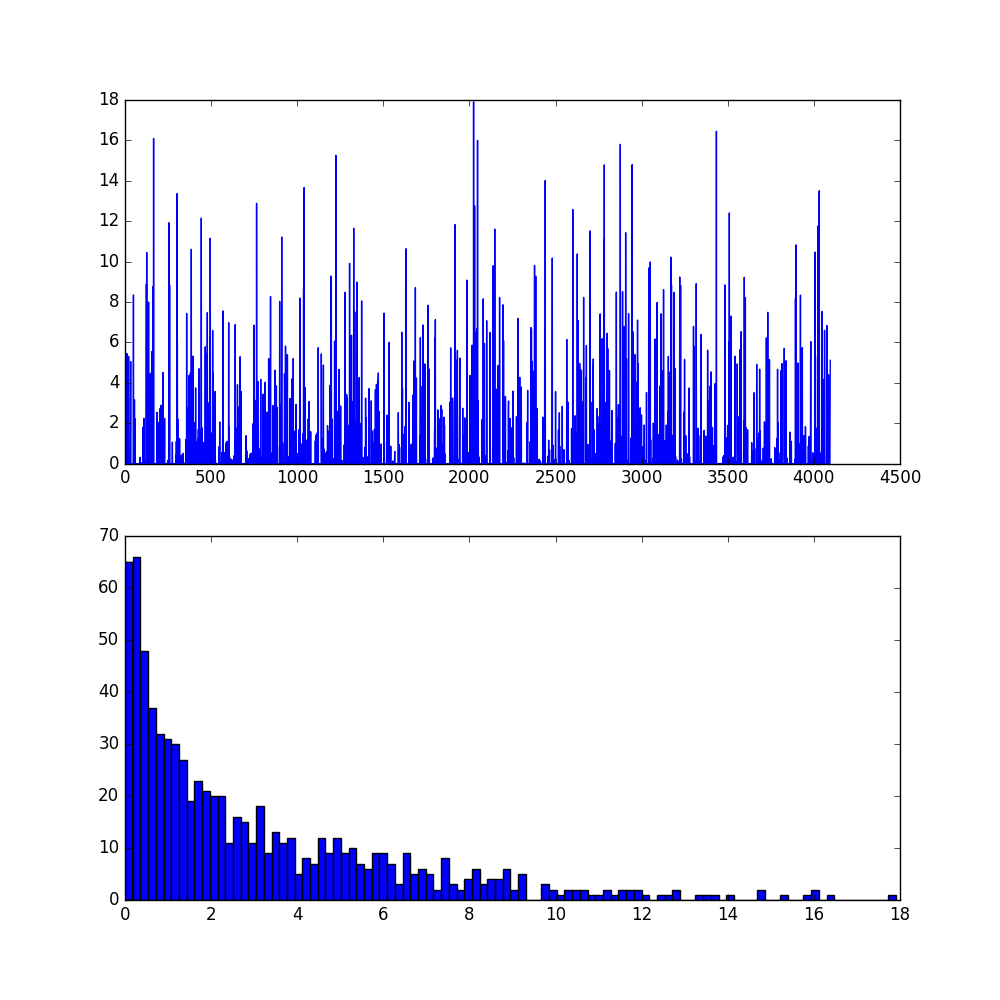

fc6 and its histogram:
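For readers who want to reproduce this kind of plot, here is a minimal sketch of how the histogram of the positive fc6 activations could be computed. It does not use the trained network: `fake_fc6` is a synthetic stand-in for `net.blobs['fc6'].data[0]`, and it assumes the fc6 blob holds rectified (ReLU) values, as it would with an in-place ReLU. `positive_histogram` is a hypothetical helper name.

```python
import numpy as np


def positive_histogram(feat, bins=100):
    """Histogram of the strictly positive entries of an activation vector."""
    values = np.asarray(feat).ravel()
    positive = values[values > 0]
    counts, edges = np.histogram(positive, bins=bins)
    return counts, edges


# Synthetic stand-in for net.blobs['fc6'].data[0]: 4096 values clipped at
# zero. This is NOT data from the network in this post.
rng = np.random.RandomState(0)
fake_fc6 = np.maximum(rng.randn(4096), 0)

counts, edges = positive_histogram(fake_fc6)
print(counts.sum(), len(edges))
```

With matplotlib available, `plt.plot(feat.flat)` would show the raw activations and `plt.hist(feat.flat[feat.flat > 0], bins=100)` would draw the same histogram directly.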

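All I can say is that this is remarkable and powerful: a network whose internals look this inscrutable can still pick out cars with high accuracy!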

Partial code:
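`vis_square` is called throughout this post, but its definition is not shown. The sketch below is one way such a helper could be written, modeled on the function of the same name in Caffe's classification example notebook. It is named `tile_square` here to make clear that it is an illustration rather than the code actually used for the figures, and it returns the tiled image instead of plotting it.

```python
import numpy as np


def tile_square(data, padsize=1, padval=0.0):
    """Tile an array of shape (n, height, width) or (n, height, width, 3)
    into a roughly square grid and return the grid as one image array."""
    data = np.asarray(data, dtype=np.float64)

    # Normalize to [0, 1] so the result can be displayed directly.
    lo, hi = data.min(), data.max()
    if hi > lo:
        data = (data - lo) / (hi - lo)
    else:
        data = np.zeros_like(data)

    # Pad the tile count up to a perfect square and add a border
    # of `padsize` pixels (filled with `padval`) to every tile.
    n = int(np.ceil(np.sqrt(data.shape[0])))
    padding = ((0, n ** 2 - data.shape[0]), (0, padsize), (0, padsize))
    padding += ((0, 0),) * (data.ndim - 3)
    data = np.pad(data, padding, mode="constant", constant_values=padval)

    # (n*n, h, w, ...) -> (n, n, h, w, ...) -> (n, h, n, w, ...) -> (n*h, n*w, ...)
    data = data.reshape((n, n) + data.shape[1:])
    data = data.transpose((0, 2, 1, 3) + tuple(range(4, data.ndim)))
    data = data.reshape((n * data.shape[1], n * data.shape[3]) + data.shape[4:])
    return data


# Shapes matching the calls in this post, filled with random stand-in values.
rng = np.random.RandomState(0)
filters = rng.rand(96, 3, 11, 11)   # like net.params['conv1'][0].data
feat = rng.rand(96, 55, 55)         # like net.blobs['conv1'].data[0, :96]

filter_grid = tile_square(filters.transpose(0, 2, 3, 1))
feat_grid = tile_square(feat, padval=1)
print(filter_grid.shape, feat_grid.shape)
```

To display a grid, something like `plt.imshow(tile_square(feat, padval=1))` with matplotlib should work; the conv2 call in this post works the same way, because `filters[:48].reshape(48**2, 5, 5)` turns the first 48 filters into 2304 separate 5*5 tiles.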
