10 Multilayer Perceptron

Implementation from scratch:

import torch
from torch import nn
from d2l import torch as d2l

# Implement the ReLU function
def relu(X):
    a = torch.zeros_like(X)
    # Element-wise max against a zero tensor, which sets negative entries to zero
    return torch.max(X, a)
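As a quick sanity check, the hand-written `relu` can be compared with PyTorch's built-in `torch.relu`. This is only a sketch; the sample tensor is made up for illustration.

```python
import torch

def relu(X):
    # Same definition as above: element-wise max against zeros
    a = torch.zeros_like(X)
    return torch.max(X, a)

# Illustrative input with negative, zero, and positive entries
X = torch.tensor([[-2.0, -0.5, 0.0], [0.5, 1.0, 3.0]])
out = relu(X)

# The result should agree with the built-in implementation
same_as_builtin = torch.equal(out, torch.relu(X))
print(out)
print(same_as_builtin)
```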

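# Define the model: two matrix multiplications and two additions are enough.
# num_inputs, W1, b1, W2 and b2 are defined in the parameter initialization step below.
def net(X):
    X = X.reshape((-1, num_inputs))  # num_inputs = 784, the length of one flattened image
    H = relu(X @ W1 + b1)  # "@" denotes matrix multiplication
    return H @ W2 + b2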
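To see the shapes flowing through `net`, the sketch below runs the same forward pass on a fake batch. It assumes four random 1×28×28 "images" instead of real Fashion-MNIST data, and it recreates the parameters locally as plain tensors so that it runs on its own.

```python
import torch

num_inputs, num_outputs, num_hiddens = 784, 10, 256

# Local stand-ins for the parameters (plain tensors, no gradients needed here)
W1 = torch.randn(num_inputs, num_hiddens) * 0.01
b1 = torch.zeros(num_hiddens)
W2 = torch.randn(num_hiddens, num_outputs) * 0.01
b2 = torch.zeros(num_outputs)

def relu(X):
    return torch.max(X, torch.zeros_like(X))

def net(X):
    X = X.reshape((-1, num_inputs))   # (4, 1, 28, 28) -> (4, 784)
    H = relu(X @ W1 + b1)             # (4, 784) @ (784, 256) -> (4, 256)
    return H @ W2 + b2                # (4, 256) @ (256, 10) -> (4, 10)

X = torch.rand(4, 1, 28, 28)          # fake batch, an assumption for illustration
logits = net(X)
print(logits.shape)
```

Each row of `logits` holds ten unnormalized class scores for one image; the softmax is applied later inside the cross-entropy loss.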

# Load the Fashion-MNIST dataset
batch_size = 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

# Initialize the model parameters
num_inputs, num_outputs, num_hiddens = 784, 10, 256

# Draw the weight matrices at random (scaled by 0.01); the biases start at zero
W1 = nn.Parameter(torch.randn(
    num_inputs, num_hiddens, requires_grad=True) * 0.01)
b1 = nn.Parameter(torch.zeros(num_hiddens, requires_grad=True))
W2 = nn.Parameter(torch.randn(
    num_hiddens, num_outputs, requires_grad=True) * 0.01)
b2 = nn.Parameter(torch.zeros(num_outputs, requires_grad=True))

params = [W1, b1, W2, b2]
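For a sense of scale, the number of trainable values in these four parameters can be worked out from their shapes alone. The following is a small arithmetic sketch; the `shapes` dictionary is just an illustrative way to organize the calculation.

```python
from math import prod

num_inputs, num_outputs, num_hiddens = 784, 10, 256

# Shapes of the four parameters defined above
shapes = {
    "W1": (num_inputs, num_hiddens),
    "b1": (num_hiddens,),
    "W2": (num_hiddens, num_outputs),
    "b2": (num_outputs,),
}

# Number of scalar entries per parameter, and in total
counts = {name: prod(shape) for name, shape in shapes.items()}
total = sum(counts.values())
print(counts)
print(total)  # 200704 + 256 + 2560 + 10 = 203530
```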

# Define the loss function (reduction='none' keeps one loss value per sample)
loss = nn.CrossEntropyLoss(reduction='none')
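With `reduction='none'`, the loss returns one value per sample rather than a single scalar, so the training loop has to reduce it (for example by averaging) before backpropagation. A minimal sketch, using made-up logits and labels:

```python
import torch
from torch import nn

# Made-up scores for 2 samples and 3 classes, with their true labels
logits = torch.tensor([[2.0, 0.5, -1.0], [0.1, 0.2, 3.0]])
labels = torch.tensor([0, 2])

# One loss value per sample
per_sample = nn.CrossEntropyLoss(reduction='none')(logits, labels)

# The default reduction ('mean') averages those values
mean_loss = nn.CrossEntropyLoss()(logits, labels)

print(per_sample.shape)
print(torch.allclose(per_sample.mean(), mean_loss))
```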

# Define the optimization algorithm
lr = 0.1
updater = torch.optim.SGD(params, lr=lr)

# Train the model

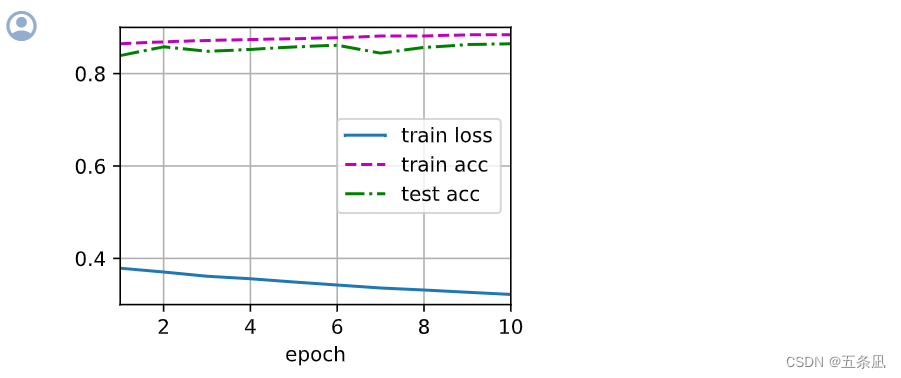

num_epochs = 10

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, updater)
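Alongside the loss, classification accuracy is the usual metric to track for this task. How it can be computed from the model's outputs is sketched below; `accuracy` is an illustrative helper written for this note, not d2l's own function, and the scores and labels are made up.

```python
import torch

def accuracy(y_hat, y):
    # Predicted class = index of the largest score in each row
    preds = y_hat.argmax(dim=1)
    # Fraction of predictions that match the labels
    return (preds == y).float().mean().item()

# Made-up scores for 4 samples and 2 classes
y_hat = torch.tensor([[0.1, 0.9], [0.8, 0.2], [0.3, 0.7], [0.6, 0.4]])
y = torch.tensor([1, 0, 0, 0])

acc = accuracy(y_hat, y)  # predictions are [1, 0, 1, 0], so 3 of 4 are correct
print(acc)
```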

Concise implementation:

import torch
from torch import nn
from d2l import torch as d2l

# Function that initializes the model parameters
def init_weights(m):
    if type(m) == nn.Linear:
        nn.init.normal_(m.weight, std=0.01)

# As before, the input must be flattened first
net = nn.Sequential(
    nn.Flatten(),
    nn.Linear(784, 256),
    nn.ReLU(),
    nn.Linear(256, 10))

# Initialize the model
net.apply(init_weights)
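One way to confirm that `net.apply(init_weights)` did what was intended is to inspect the weights afterwards and push a fake batch through the model. This is a sketch under assumptions: the seed and the random 4-image batch are chosen only for illustration.

```python
import torch
from torch import nn

torch.manual_seed(0)  # fixed seed so the check is repeatable

def init_weights(m):
    if type(m) == nn.Linear:
        nn.init.normal_(m.weight, std=0.01)

net = nn.Sequential(
    nn.Flatten(),
    nn.Linear(784, 256),
    nn.ReLU(),
    nn.Linear(256, 10))
net.apply(init_weights)  # visits every submodule, including each nn.Linear

# nn.Linear stores its weight as (out_features, in_features)
first_shape = tuple(net[1].weight.shape)
first_std = net[1].weight.std().item()

# Forward pass on a fake batch of 4 images
out_shape = tuple(net(torch.rand(4, 1, 28, 28)).shape)
print(first_shape, first_std, out_shape)
```

Note that `init_weights` only touches the weights; the biases keep PyTorch's default initialization.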

# Set the hyperparameters
batch_size, lr, num_epochs = 256, 0.1, 10

# Define the loss function
loss = nn.CrossEntropyLoss(reduction='none')

# Define the optimization algorithm
trainer = torch.optim.SGD(net.parameters(), lr=lr)

# Load the dataset
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

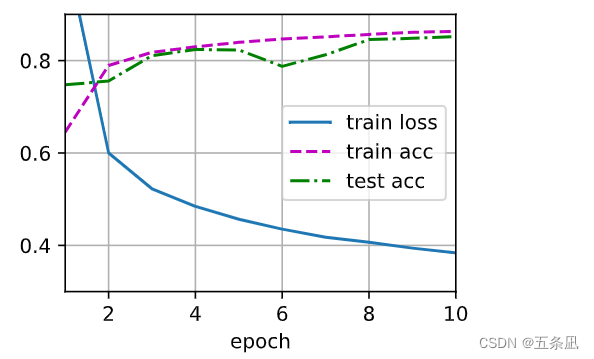

# Train the model

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, trainer)
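`d2l.train_ch3` hides the training loop itself. A rough sketch of what such a loop does for each batch is given below. It is not d2l's actual code: it assumes a single synthetic batch of random pixels and labels so that it runs without downloading Fashion-MNIST, and it keeps PyTorch's default weight initialization for brevity.

```python
import torch
from torch import nn

torch.manual_seed(0)

net = nn.Sequential(
    nn.Flatten(),
    nn.Linear(784, 256),
    nn.ReLU(),
    nn.Linear(256, 10))
loss = nn.CrossEntropyLoss(reduction='none')
trainer = torch.optim.SGD(net.parameters(), lr=0.1)

# Synthetic stand-in for one batch (assumption: random pixels in [0, 1) and random labels)
X = torch.rand(64, 1, 28, 28)
y = torch.randint(0, 10, (64,))

losses = []
for step in range(20):
    l = loss(net(X), y)          # per-sample losses, shape (64,)
    trainer.zero_grad()          # clear gradients from the previous step
    l.mean().backward()          # reduce to a scalar, then backpropagate
    trainer.step()               # SGD parameter update
    losses.append(l.mean().item())

print(losses[0], losses[-1])
```

A real run would iterate over `train_iter` for `num_epochs` epochs and evaluate on `test_iter` after each one; the per-batch steps stay the same.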

PS: simply adding one more hidden layer, giving layer sizes 784-1440-256-10:

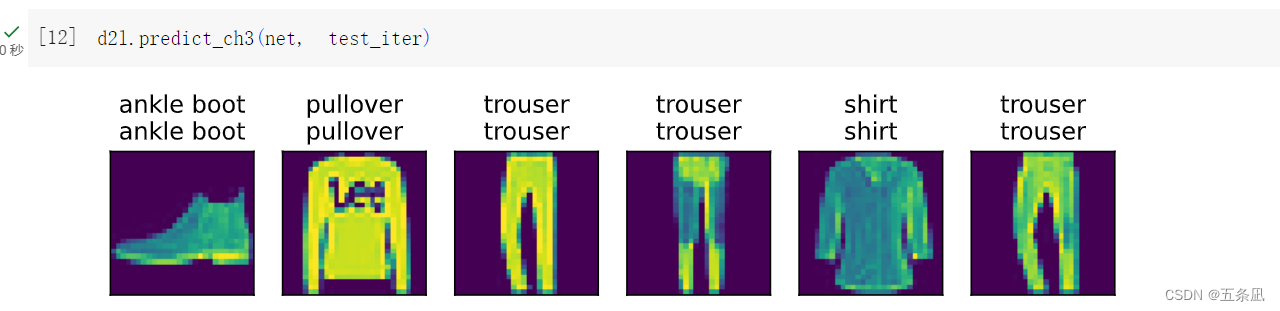
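The post gives only the layer sizes for this deeper variant, not its code. One plausible reading, assuming a ReLU after each hidden layer as in the two-layer model, is sketched here together with its parameter count.

```python
import torch
from torch import nn

# Assumed architecture: 784 -> 1440 -> 256 -> 10 with ReLU between layers
deeper_net = nn.Sequential(
    nn.Flatten(),
    nn.Linear(784, 1440),
    nn.ReLU(),
    nn.Linear(1440, 256),
    nn.ReLU(),
    nn.Linear(256, 10))

# Total number of trainable values (weights and biases)
num_params = sum(p.numel() for p in deeper_net.parameters())

# Forward pass on a fake batch of 2 images to confirm the output shape
deeper_out_shape = tuple(deeper_net(torch.rand(2, 1, 28, 28)).shape)
print(num_params, deeper_out_shape)
```

This variant has roughly 1.5 million parameters, about seven times as many as the 784-256-10 model, so each epoch costs noticeably more compute.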

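This post shows how to implement a multilayer perceptron on the Fashion-MNIST dataset with PyTorch, covering the network structure, parameter initialization, the loss function, the optimization algorithm, and the training process. Two implementations are given: one built layer by layer from scratch, the other using the Sequential module to simplify the code.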
