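I. Task Requirements

1. Scrape three rankings: the area congestion ranking, the business-district congestion ranking, and the administrative-district ranking;

2. The crawler covers fetching the web page, parsing the data, and storing the data;

3. The data are stored in a MySQL database.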

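II. Page Content to Scrape

The target site, https://report.amap.com/detail.do?city=110000, is a dynamic page whose data can change at any moment, so the following is only an example of the page at the time of scraping:

III. Page Data Analysis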

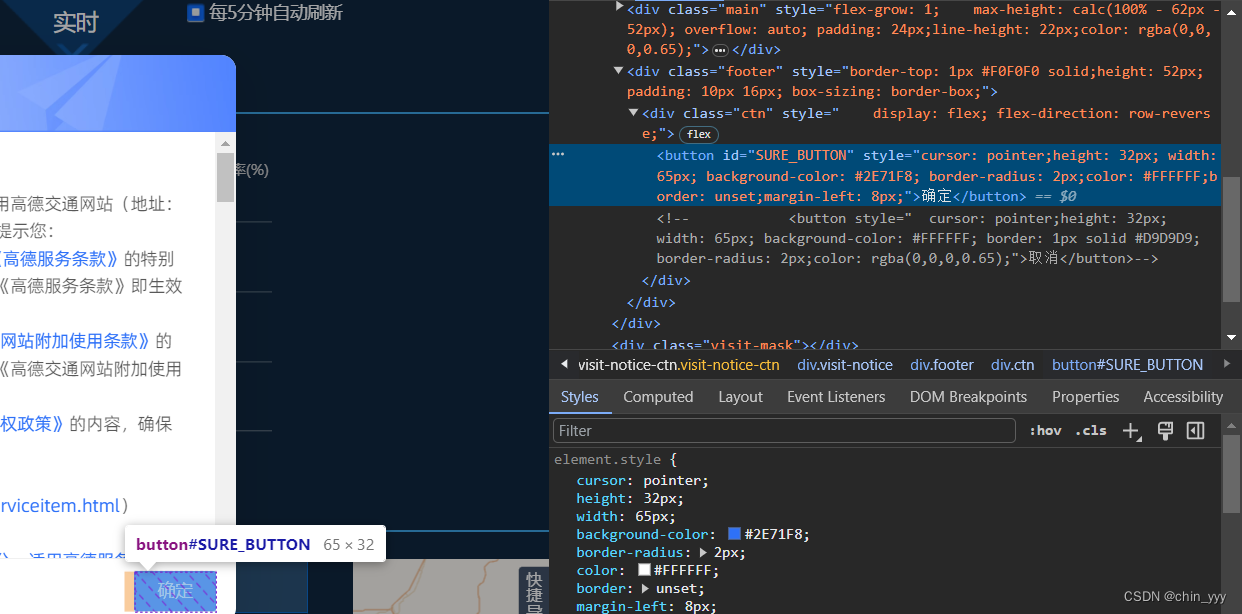

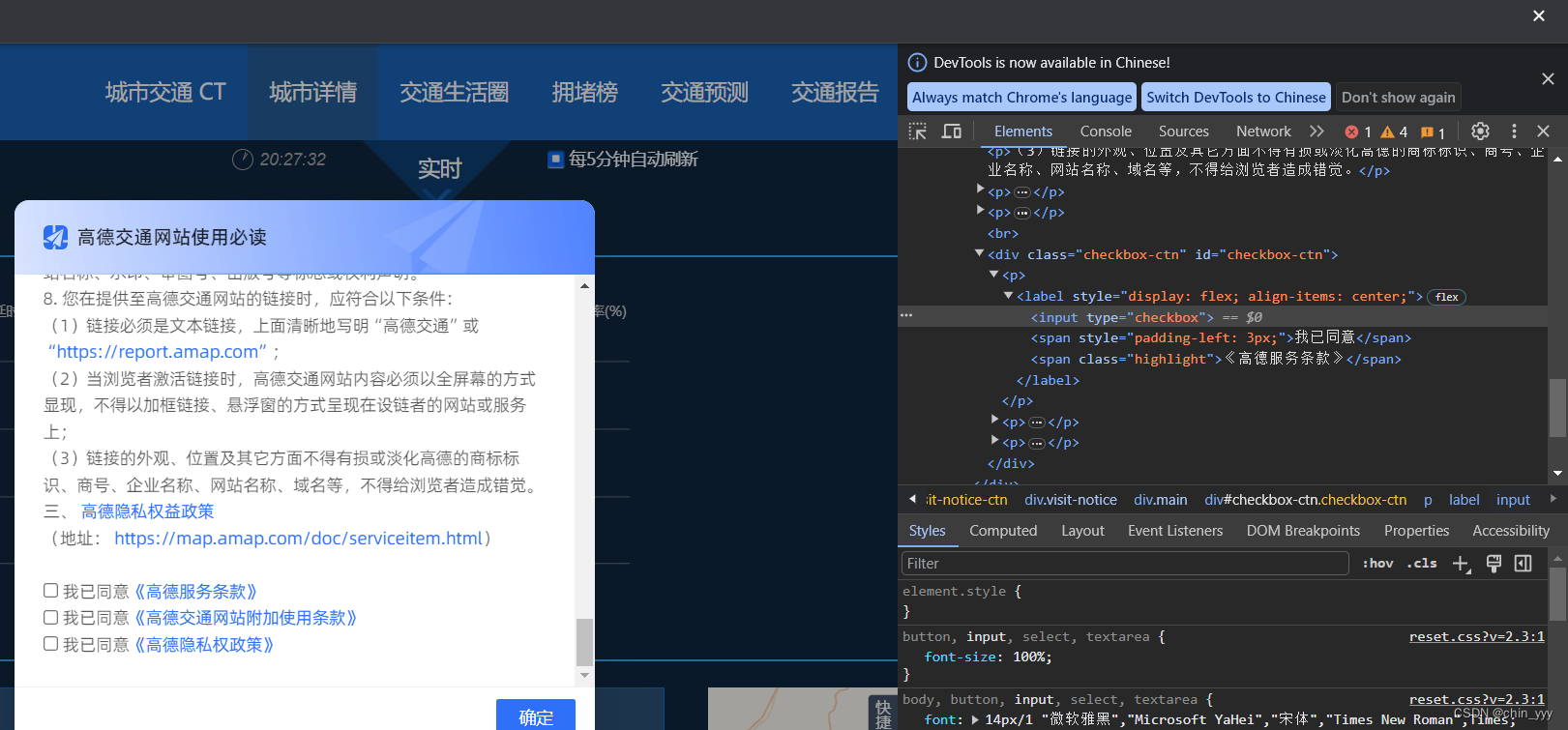

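The selenium library is used to simulate browser operations (Chrome serves as the example browser here) so that the dynamically loaded data can be retrieved. Visiting https://report.amap.com/detail.do?city=110000 brings up a popup. Locating its element in the page source shows that it contains three agreement checkboxes and one confirm button: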

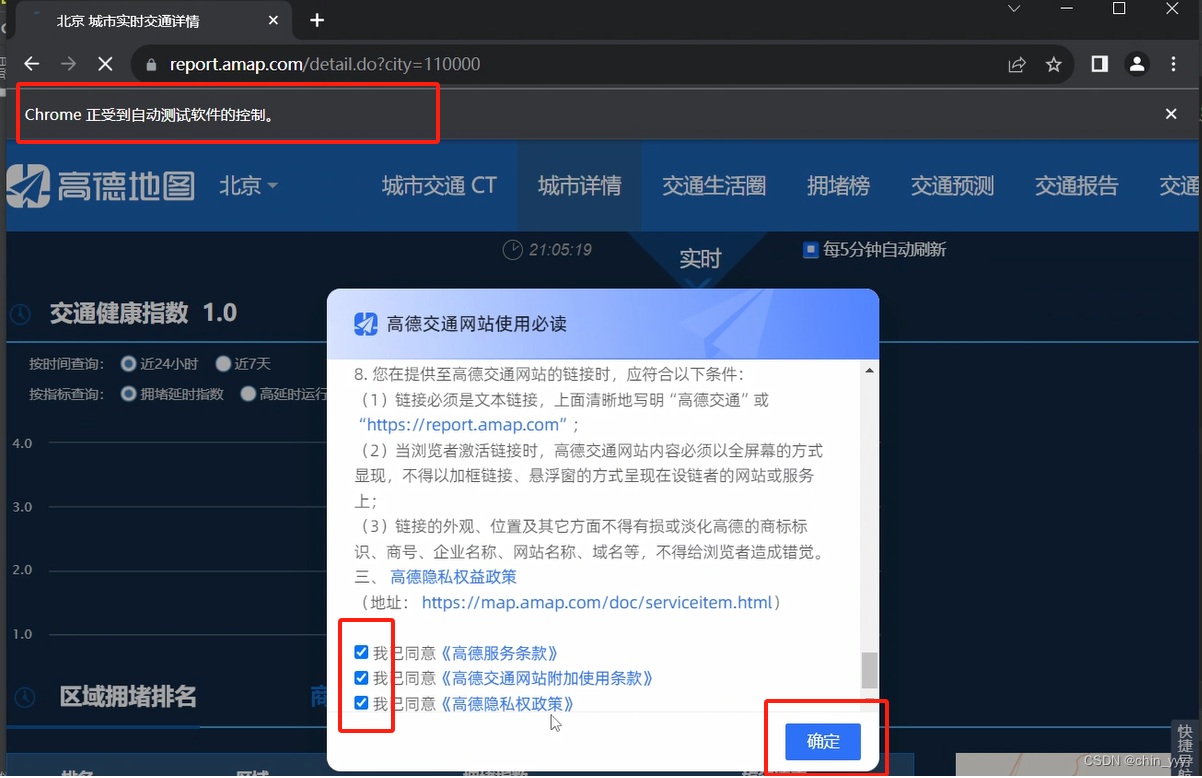

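Code is then written to tick the checkboxes. The figure below shows the effect; note the banner saying the browser is being controlled by automated test software: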

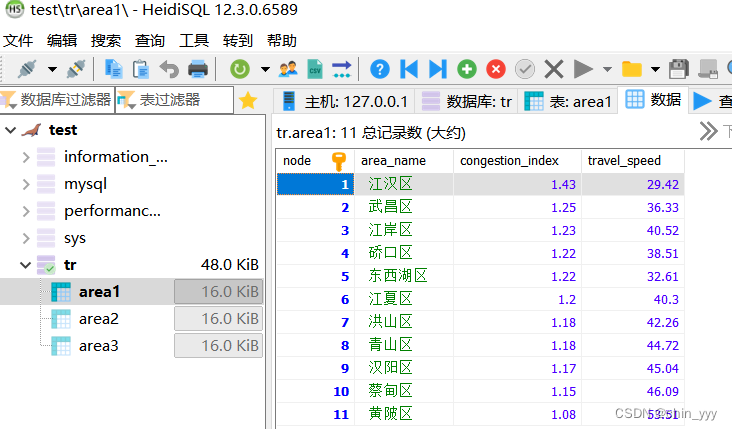

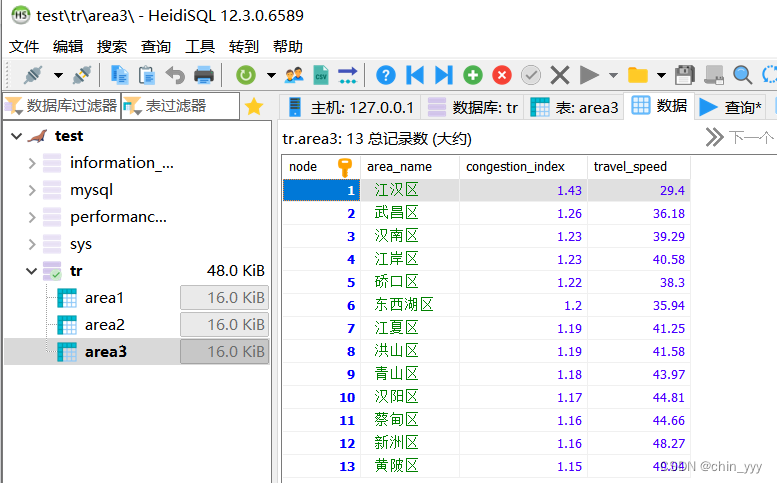
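The popup is rendered by the page's scripts, so it may not exist the instant the page load returns. One way to make the ticking step more robust is to poll until each element appears before clicking it. The following is a minimal sketch rather than the original program's approach: `click_when_present` and `accept_agreement` are hypothetical helpers, and they rely only on `driver.find_elements(by, value)`, where `"xpath"` and `"id"` are the string values behind `By.XPATH` and `By.ID`.

```python
import time


def click_when_present(driver, by, value, timeout=10.0, poll=0.5):
    """Poll until an element matching (by, value) exists, then click it.

    Hypothetical helper: `driver` is anything exposing Selenium's
    find_elements(by, value), for example a Chrome WebDriver instance.
    """
    deadline = time.monotonic() + timeout
    while True:
        matches = driver.find_elements(by, value)
        if matches:
            matches[0].click()
            return matches[0]
        if time.monotonic() >= deadline:
            raise TimeoutError(f"no element found for {by}={value!r}")
        time.sleep(poll)


def accept_agreement(driver):
    """Tick the three agreement checkboxes, then press the confirm button."""
    for n in (1, 2, 3):
        click_when_present(
            driver, "xpath", f'//*[@id="checkbox-ctn"]/p[{n}]/label/input'
        )
    click_when_present(driver, "id", "SURE_BUTTON")
```

With a real browser this would be called as `accept_agreement(driver)` right after `driver.get(...)`. Selenium also ships `WebDriverWait` together with `expected_conditions` for the same purpose, which may be preferable in a larger project.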

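To store the data in a database (managed here with HeidiSQL as the client), Python also has to connect to that database.

(Note: when creating the database, remember to set its character set to utf8.)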
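The character-set note can also be handled from Python instead of the GUI client. The sketch below is an assumption-laden illustration: `create_database_sql` and `ensure_database` are hypothetical helper names, and the default of `utf8` simply mirrors the note above. In MySQL, `utf8` is an alias for the 3-byte `utf8mb3`, so `utf8mb4` is the safer choice if the data might contain characters outside the Basic Multilingual Plane.

```python
import re


def create_database_sql(name, charset="utf8"):
    """Return a CREATE DATABASE statement with an explicit default charset.

    Identifiers cannot be passed as query parameters, so the name and the
    charset are validated against a conservative pattern instead.
    """
    for part in (name, charset):
        if not re.fullmatch(r"[A-Za-z0-9_]+", part):
            raise ValueError(f"unsafe identifier: {part!r}")
    return f"CREATE DATABASE IF NOT EXISTS `{name}` DEFAULT CHARACTER SET {charset}"


def ensure_database(conn, name, charset="utf8"):
    """Run the statement on an open DB-API connection (e.g. from pymysql.connect)."""
    with conn.cursor() as cursor:
        cursor.execute(create_database_sql(name, charset))
    conn.commit()
```

Called as `ensure_database(conn, "tr")` on a connection opened without a `database` argument, this would create the `tr` database used in this article if it does not exist yet.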

IV. Code

The complete code of the program is as follows:

import pymysql
from time import sleep
from selenium.webdriver import Chrome
from selenium.webdriver.common.by import By

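# Connect to the database (charset set explicitly so Chinese area names are sent intact)
conn = pymysql.connect(host='127.0.0.1', port=3306, user='root', password='123456', database='tr', charset='utf8mb4')

# Create a cursor object
cursor = conn.cursor()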

# Create the three ranking tables (identical schema) if they do not exist yet
for table in ('area1', 'area2', 'area3'):
    cursor.execute(f"""create table if not exists {table}(
        node int(10) primary key auto_increment comment 'rank',
        area_name varchar(20) not null comment 'area',
        congestion_index float not null comment 'congestion index',
        travel_speed double not null comment 'travel speed')""")
    # Set the table's default character set to utf8
    cursor.execute(f'alter table {table} default character set utf8;')
    # Set the area_name column's character set to utf8 (lowercase table name:
    # table names are case-sensitive on many MySQL installations)
    cursor.execute(f"alter table {table} change area_name area_name varchar(20) character set utf8 not null comment 'area';")

# Open the traffic site
driver = Chrome()
driver.get("https://report.amap.com/detail.do?city=110000")
# driver.maximize_window()  # maximize the window

# Tick the three agreement checkboxes
for n in (1, 2, 3):
    driver.find_element(By.XPATH, f'//*[@id="checkbox-ctn"]/p[{n}]/label/input').click()

# Click the confirm button
sure_button = driver.find_element(By.ID, "SURE_BUTTON")
sure_button.click()

# Switch the city to Wuhan
change_city_tag = driver.find_elements(By.XPATH, '//a[@id="other_city"]')
change_city_tag[0].click()
change_city_a_tag = driver.find_elements(By.XPATH, '//*[@id="city_name"]/li[@data-name="wu han"]/a')
change_city_a_tag[0].click()
sleep(1)  # give the page a moment to load Wuhan's data

# Fetch the data: print the table header, then store every row of the first ranking
_index = driver.find_elements(By.XPATH, '/html/body/div[1]/div[2]/div/div/div[3]/div/div[2]/table/thead/tr')
for i in _index:
    print(i.text)
pai = driver.find_elements(By.XPATH, '/html/body/div[1]/div[2]/div/div/div[3]/div/div[2]/table/tbody/tr')
for p in pai:
    print(p.text)
    data = p.text.split()
    # Parameterized query: the driver escapes the values, so no string concatenation is needed
    cursor.execute(
        "INSERT INTO area1(node, area_name, congestion_index, travel_speed) VALUES (%s, %s, %s, %s)",
        data[:4])
conn.commit()
driver.implicitly_wait(5)  # implicit wait: later element lookups wait up to 5 seconds

# Switch to the second ranking tab
search_button_tag = driver.find_elements(By.XPATH, '/html/body/div[1]/div[2]/div/div/div[3]/h2/span[2]')
search_button_tag[0].click()
sleep(1)

# Fetch the data: print the table header, then store every row of the second ranking
_index = driver.find_elements(By.XPATH, '/html/body/div[1]/div[2]/div/div/div[3]/div/div[2]/table/thead/tr')
for i in _index:
    print(i.text)
pai2 = driver.find_elements(By.XPATH, '/html/body/div[1]/div[2]/div/div/div[3]/div/div[2]/table/tbody/tr')
for p in pai2:
    print(p.text)
    data = p.text.split()
    # Parameterized query: the driver escapes the values, so no string concatenation is needed
    cursor.execute(
        "INSERT INTO area2(node, area_name, congestion_index, travel_speed) VALUES (%s, %s, %s, %s)",
        data[:4])
conn.commit()
driver.implicitly_wait(5)  # implicit wait: later element lookups wait up to 5 seconds

# Switch to the third ranking tab
search_button_tag = driver.find_elements(By.XPATH, '/html/body/div[1]/div[2]/div/div/div[3]/h2/span[3]')
search_button_tag[0].click()
sleep(1)

# Fetch the data: print the table header, then store every row of the third ranking
_index = driver.find_elements(By.XPATH, '/html/body/div[1]/div[2]/div/div/div[3]/div/div[2]/table/thead/tr')
for i in _index:
    print(i.text)
pai3 = driver.find_elements(By.XPATH, '/html/body/div[1]/div[2]/div/div/div[3]/div/div[2]/table/tbody/tr')
for p in pai3:
    print(p.text)
    data = p.text.split()
    # Parameterized query: the driver escapes the values, so no string concatenation is needed
    cursor.execute(
        "INSERT INTO area3(node, area_name, congestion_index, travel_speed) VALUES (%s, %s, %s, %s)",
        data[:4])
conn.commit()

# Release the database and browser resources
cursor.close()
conn.close()
driver.quit()
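The program splits each table row on whitespace and takes the first four pieces, which breaks as soon as an area name itself contains a space. A hedged alternative is sketched below: `parse_row` is a hypothetical helper, the sample strings in the usage note are invented for illustration, and the column order (rank, name, congestion index, speed) is assumed from the insert statements above.

```python
def parse_row(text):
    """Split one table row such as '1 江汉区 1.85 24.31' into typed fields.

    Assumes the layout rank, name, congestion index, speed. The name may
    itself contain spaces, so the numeric fields are peeled off both ends.
    """
    rank, rest = text.strip().split(maxsplit=1)
    name, index, speed = rest.rsplit(maxsplit=2)
    return int(rank), name, float(index), float(speed)
```

For example, `parse_row("2 Optics Valley 1.7 30")` keeps `"Optics Valley"` as one name, and a malformed row raises `ValueError` instead of silently writing shifted columns to the database.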

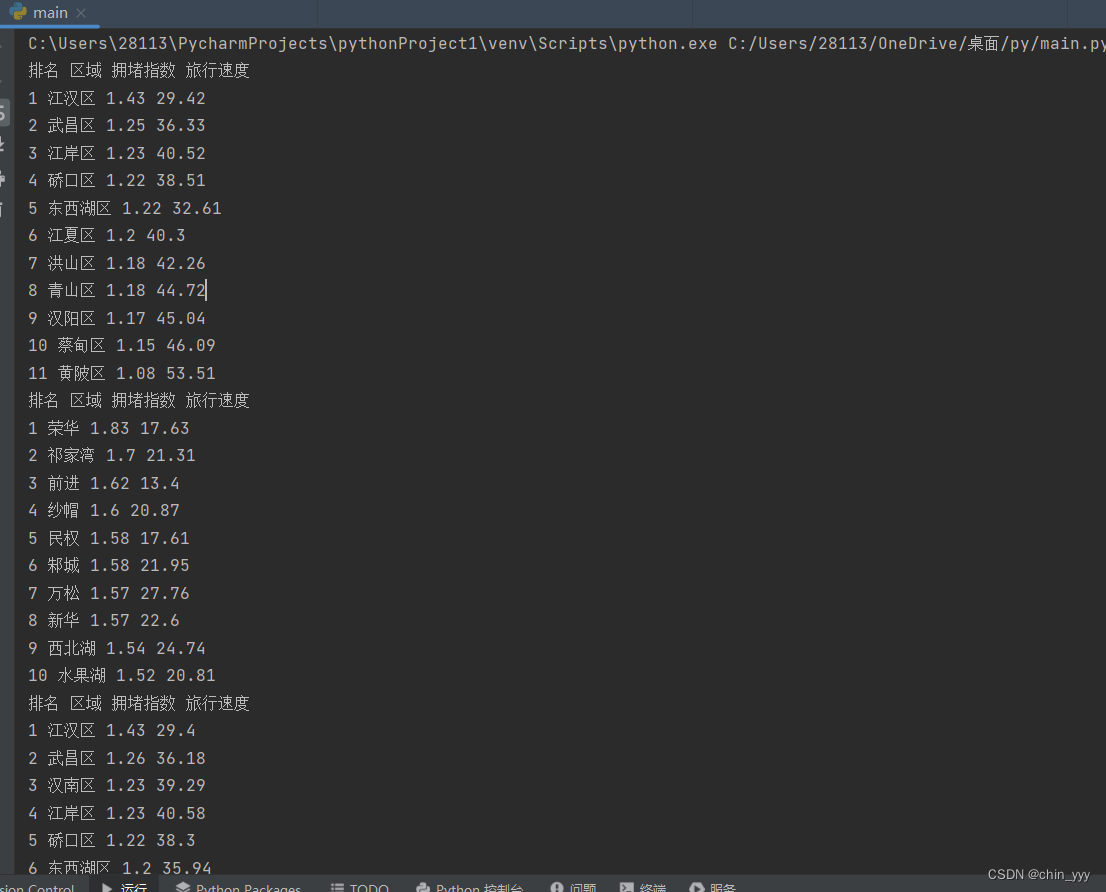

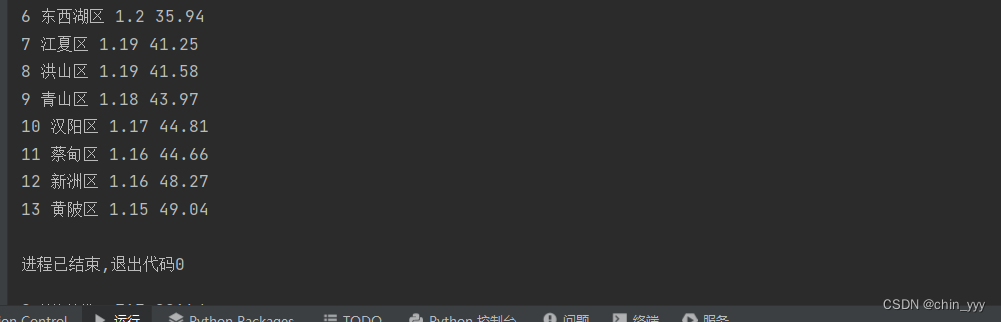
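Because the three rankings share one schema, the three near-identical insert loops could be folded into a single batch helper. The following is only a sketch under stated assumptions: `insert_sql`, `save_rows`, and `ALLOWED_TABLES` are hypothetical names, the sample rows are invented, and an in-memory SQLite database stands in for MySQL so the example runs without a server. SQLite uses `?` as its placeholder, whereas pymysql uses `%s`, hence the `placeholder` parameter.

```python
import sqlite3

ALLOWED_TABLES = {"area1", "area2", "area3"}


def insert_sql(table, placeholder="%s"):
    """Build a parameterized INSERT for one of the three ranking tables."""
    if table not in ALLOWED_TABLES:
        raise ValueError(f"unknown table: {table!r}")
    marks = ", ".join([placeholder] * 4)
    return (
        f"INSERT INTO {table}(node, area_name, congestion_index, travel_speed) "
        f"VALUES ({marks})"
    )


def save_rows(conn, table, rows, placeholder="%s"):
    """Insert all rows in one batch and commit once."""
    cursor = conn.cursor()
    cursor.executemany(insert_sql(table, placeholder), rows)
    conn.commit()
    cursor.close()


# Demo against an in-memory SQLite database, used only as a stand-in for MySQL.
demo = sqlite3.connect(":memory:")
demo.execute(
    "CREATE TABLE area1(node INTEGER PRIMARY KEY, area_name TEXT, "
    "congestion_index REAL, travel_speed REAL)"
)
sample = [(1, "O'Brien Plaza", 1.85, 24.31), (2, "江汉区", 1.62, 28.05)]
save_rows(demo, "area1", sample, placeholder="?")
stored = demo.execute("SELECT * FROM area1 ORDER BY node").fetchall()
```

The quote in the first sample name is stored intact because the values travel as parameters rather than being pasted into the SQL text. With the pymysql connection from this article, the call would be `save_rows(conn, "area1", rows)` using the default `%s` placeholder.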

V. Results

The above is the outcome of this experiment.

Writing this took some effort, so a like would be much appreciated!
