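- After putting up with the CPU version of TensorFlow for a while, I finally decided today to install and configure the GPU version. Installation plus configuration took about two hours. Below I record the installation process and the pitfalls I ran into, so you can avoid them.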

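1. First, install the graphics driver

Just to be safe, reinstall or update the graphics driver even if one is already installed on the machine.

Official download page: https://www.nvidia.cn/geforce/drivers/

Choose the manual driver search.

My humble graphics card is a GTX 1050 Ti; search for the driver that matches your own card.

In my experience, pick a release that is neither very new nor very old, but make sure it meets the minimum driver version required by the CUDA release you install in step 2 (NVIDIA's CUDA 10.0 release notes list 411.31 or later on Windows). Once it has downloaded, run the installer and click Next through every step; it is best not to change the install path.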
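To check that the driver installed correctly and is new enough for CUDA 10.0, you can query its version with `nvidia-smi`, which is installed along with the NVIDIA driver. This is a minimal sketch of my own rather than part of the original procedure; it assumes `nvidia-smi` is on PATH and uses 411.31 (the Windows minimum listed in NVIDIA's CUDA 10.0 release notes) as the threshold.

```python
import subprocess

# Assumed threshold: minimum Windows driver for CUDA 10.0 per NVIDIA's release notes.
MIN_DRIVER_FOR_CUDA_10_0 = "411.31"


def parse_version(text):
    """Turn a dotted version string such as '442.19' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))


def driver_meets_minimum(installed, minimum=MIN_DRIVER_FOR_CUDA_10_0):
    """Return True if the installed driver version is >= the minimum."""
    return parse_version(installed) >= parse_version(minimum)


def query_driver_version():
    """Ask nvidia-smi (assumed to be on PATH) for the first GPU's driver version."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=driver_version", "--format=csv,noheader"],
        capture_output=True, text=True, check=True,
    ).stdout
    return out.splitlines()[0].strip()


if __name__ == "__main__":
    version = query_driver_version()
    print(version, "OK" if driver_meets_minimum(version) else "too old for CUDA 10.0")
```

Comparing tuples of integers avoids the string-comparison trap where "98.1" would sort after "411.31".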

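2. Install CUDA

Again, download the installer from the official site: https://developer.nvidia.com/cuda-10.0-download-archive?target_os=Windows&target_arch=x86_64&target_version=10&target_type=exelocal

Click Download, and when it finishes run the installer, clicking Next through each step. One thing to watch: at this step it is best to choose Custom installation, which lets you pick the components to install (for example, you can untick the display driver bundled with CUDA so it does not replace the driver from step 1).

When the installation completes, just close the installer.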
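To confirm which CUDA release actually got installed, you can parse the output of `nvcc --version`; for CUDA 10.0 it contains a line like `Cuda compilation tools, release 10.0, V10.0.130`. This is a sketch of my own, not one of the original steps, and it assumes `nvcc` (in the CUDA `bin` folder) can be found on PATH.

```python
import re
import subprocess


def parse_nvcc_release(output):
    """Extract (major, minor) from `nvcc --version` output, or None if absent."""
    match = re.search(r"release (\d+)\.(\d+)", output)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


if __name__ == "__main__":
    # Assumes nvcc can be found on PATH.
    out = subprocess.run(["nvcc", "--version"], capture_output=True, text=True, check=True).stdout
    print("CUDA release:", parse_nvcc_release(out))
```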

3. Download cuDNN

The official download requires registering an account, so here is a Baidu Netdisk link instead:

Link: https://pan.baidu.com/s/1f5ZYrwgbqyxUZbXzB8WDsA

Extraction code: 3yl8

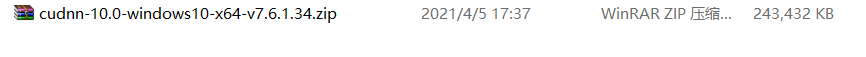

The download is a compressed archive; extract it.

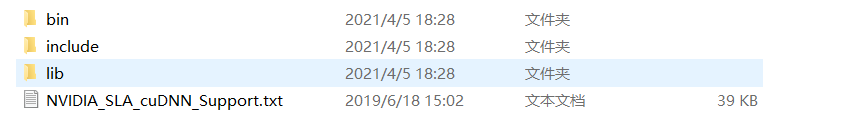

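After extracting, put all three folders and the txt file into a folder you create yourself named "cudnn", and copy that folder into C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v10.0.

cuDNN can be thought of as an add-on (a "patch") to CUDA; with that, cuDNN is set up.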
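To double-check which cuDNN version you copied in, you can read the version macros from its header. This sketch assumes cuDNN 7.x, which defines `CUDNN_MAJOR`, `CUDNN_MINOR` and `CUDNN_PATCHLEVEL` in `cudnn.h` (cuDNN 8 moved them to `cudnn_version.h`); the header path below is a hypothetical one that follows this post's "cudnn" folder layout.

```python
import re
from pathlib import Path

# Hypothetical location following this post's layout; adjust to your machine.
CUDNN_HEADER = Path(r"C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v10.0\cudnn\include\cudnn.h")


def parse_cudnn_version(header_text):
    """Read CUDNN_MAJOR/MINOR/PATCHLEVEL #defines from cudnn.h text, or None."""
    parts = []
    for name in ("CUDNN_MAJOR", "CUDNN_MINOR", "CUDNN_PATCHLEVEL"):
        match = re.search(rf"#define\s+{name}\s+(\d+)", header_text)
        if match is None:
            return None
        parts.append(int(match.group(1)))
    return tuple(parts)


if __name__ == "__main__":
    text = CUDNN_HEADER.read_text(errors="ignore")
    print("cuDNN version:", parse_cudnn_version(text))
```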

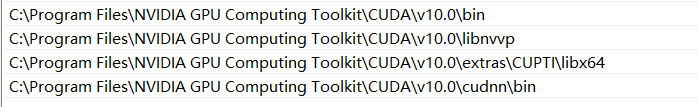

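4. Configure environment variables

Add the four entries shown in the screenshot above to the system Path environment variable; all four are required. Find each corresponding folder under the CUDA install path, copy its address, and paste it in.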
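A typo in a Path entry is easy to miss, so a small script can list which expected entries are absent. The four entries below are a hypothetical guess at typical folders (default CUDA 10.0 path plus the "cudnn" folder from step 3); replace them with the four shown in the screenshot. The comparison is case-insensitive and ignores trailing backslashes, as Windows paths allow.

```python
import ntpath
import os

# Hypothetical example entries; substitute the four from the screenshot.
CUDA_ROOT = r"C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v10.0"
REQUIRED_ENTRIES = [
    CUDA_ROOT + r"\bin",
    CUDA_ROOT + r"\libnvvp",
    CUDA_ROOT + r"\extras\CUPTI\libx64",
    CUDA_ROOT + r"\cudnn\bin",
]


def _normalize(entry):
    # Windows paths are case-insensitive; also ignore trailing backslashes.
    return ntpath.normcase(entry.strip()).rstrip("\\")


def missing_from_path(required, path_value):
    """Return the entries of `required` that do not appear in a ';'-separated PATH."""
    present = {_normalize(p) for p in path_value.split(";") if p.strip()}
    return [r for r in required if _normalize(r) not in present]


if __name__ == "__main__":
    for entry in missing_from_path(REQUIRED_ENTRIES, os.environ.get("PATH", "")):
        print("Missing from PATH:", entry)
```

Open a new command prompt before running it, since an already-open window keeps the old Path.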

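5. Install tensorflow-gpu

Open a command prompt as administrator and enter:

```
pip install tensorflow-gpu==2.2.0 -i http://pypi.douban.com/simple/ --trusted-host pypi.douban.com
```

This uses the Douban PyPI mirror, which was fast in my own testing. Because the mirror URL is plain http, pip ignores it unless the host is also passed with `--trusted-host`.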
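Each tensorflow-gpu release is built against one specific CUDA/cuDNN pair, so it can save time to compare versions before installing. This is a hedged sketch: the values are TensorFlow's Windows "tested build configurations" for three releases to the best of my knowledge, so verify them against the official table; `check_cuda_match` is a hypothetical helper name.

```python
# Tested CUDA/cuDNN pairs for a few tensorflow-gpu releases on Windows,
# to the best of my knowledge; verify against TensorFlow's official table.
TESTED_CONFIGS = {
    "2.0.0": {"cuda": "10.0", "cudnn": "7.4"},
    "2.1.0": {"cuda": "10.1", "cudnn": "7.6"},
    "2.2.0": {"cuda": "10.1", "cudnn": "7.6"},
}


def check_cuda_match(tf_version, installed_cuda):
    """Return (ok, expected_cuda) for a tensorflow-gpu version and installed CUDA."""
    config = TESTED_CONFIGS.get(tf_version)
    if config is None:
        raise KeyError(f"no tested config recorded for TensorFlow {tf_version}")
    return config["cuda"] == installed_cuda, config["cuda"]


if __name__ == "__main__":
    ok, expected = check_cuda_match("2.2.0", "10.0")
    print("match" if ok else f"mismatch: tensorflow-gpu 2.2.0 expects CUDA {expected}")
```

With the versions used in this post (tensorflow-gpu 2.2.0 and CUDA 10.0) it reports a mismatch.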

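After installation, test whether the configuration works:

```python
import os

# '2' hides INFO and WARNING messages; set it to '0' to see the
# "Could not load dynamic library" warnings. Must be set before importing tensorflow.
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

import tensorflow as tf

print(tf.__version__)                          # installed TensorFlow version
print(tf.config.list_physical_devices("GPU"))  # non-empty list if a GPU is visible
print(tf.test.is_gpu_available())              # deprecated in TF 2.x, kept for reference
```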

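As expected, the configuration did not work. Don't worry, I already fell into this pit. The warning log showed that various cudnnxxx.dll files could not be loaded. (A likely contributing factor: according to TensorFlow's tested build configurations, tensorflow-gpu 2.2.0 is built against CUDA 10.1 and cuDNN 7.6, while step 2 installs CUDA 10.0.)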
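To get a clean list of which DLLs failed, you can capture the log and extract the names. This sketch assumes TensorFlow's warning lines contain text of the form `Could not load dynamic library 'cudart64_101.dll'; dlerror: ...`, and that you saved the log with `TF_CPP_MIN_LOG_LEVEL` set to '0' by running something like `python test_gpu.py 2> tf_log.txt` (both file names are hypothetical).

```python
import re
import sys

# Matches the DLL name in lines such as:
#   Could not load dynamic library 'cudart64_101.dll'; dlerror: ...
DLL_PATTERN = re.compile(r"Could not load dynamic library '([^']+)'")


def missing_dlls(log_text):
    """Return the unique DLL names from TensorFlow's load warnings, in order."""
    seen = []
    for name in DLL_PATTERN.findall(log_text):
        if name not in seen:
            seen.append(name)
    return seen


if __name__ == "__main__":
    # Usage: python find_missing_dlls.py tf_log.txt
    with open(sys.argv[1], encoding="utf-8", errors="ignore") as f:
        for name in missing_dlls(f.read()):
            print(name)
```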

Download the missing DLLs; here is a Baidu Netdisk link:

Link: https://pan.baidu.com/s/1yNBXYtw5B36FLNPd1s92UQ

Extraction code: 6bbw

The download is a set of DLL files; copy all of them into C:\Windows\System.
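Before rerunning TensorFlow, you can check whether Windows can now find each DLL. This sketch is my own and only approximates TensorFlow's lookup (its loader may differ); `DLL_NAMES` is a hypothetical list to replace with the names from your warning log. On Python 3.8+ ctypes no longer searches PATH by default, so it passes `winmode=0` to get the classic LoadLibrary search order.

```python
import ctypes
import os
import sys

# Hypothetical list: replace with the names reported in your own warning log.
DLL_NAMES = ["cudart64_101.dll", "cudnn64_7.dll"]


def unloadable(names, loader):
    """Return the names for which `loader(name)` raises OSError."""
    failed = []
    for name in names:
        try:
            loader(name)
        except OSError:
            failed.append(name)
    return failed


def load_with_standard_search(name):
    # winmode=0 (Python 3.8+) restores the classic search order, which includes PATH.
    if sys.version_info >= (3, 8):
        return ctypes.WinDLL(name, winmode=0)
    return ctypes.WinDLL(name)


if __name__ == "__main__":
    if os.name != "nt":
        raise SystemExit("This check only makes sense on Windows.")
    print("Still missing:", unloadable(DLL_NAMES, load_with_standard_search) or "none")
```

Passing the loader as a parameter keeps the checking logic testable without a GPU machine.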

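6. Configuration successful

Finally, run the test once more:

```python
import os

os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'  # hide INFO/WARNING logs; set before importing tensorflow

import tensorflow as tf

print(tf.__version__)
print(tf.config.list_physical_devices("GPU"))
print(tf.test.is_gpu_available())  # deprecated in TF 2.x; the line above is the recommended check
```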

Wow! It finally works. Enjoy!
