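I downloaded a little over 60 images of watermelons and winter melons from the internet to form the melon dataset, annotated them with the EISeg tool, and then used the eiseg2yolov8 script to convert the .json files into the .txt label files that YOLOv8 supports. The script also generates the directory structure YOLOv8 expects, including the melon.yaml file, whose content is as follows: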

path: ../datasets/melon_seg # dataset root dir
train: images/train # train images (relative to 'path')
val: images/val # val images (relative to 'path')
test: # test images (optional)

# Classes
names:
  0: watermelon
  1: wintermelon

The Python script that trains on the melon dataset is shown below. It finally produces the model files best.pt, best.onnx, and best.torchscript:

import argparse

import colorama
from ultralytics import YOLO


def parse_args():
    parser = argparse.ArgumentParser(description="YOLOv8 train")
    parser.add_argument("--yaml", required=True, type=str, help="yaml file")
    parser.add_argument("--epochs", required=True, type=int, help="number of training")
    parser.add_argument("--task", required=True, type=str, choices=["detect", "segment"], help="specify what kind of task")

    args = parser.parse_args()
    return args


def train(task, yaml, epochs):
    if task == "detect":
        model = YOLO("yolov8n.pt") # load a pretrained model
    elif task == "segment":
        model = YOLO("yolov8n-seg.pt") # load a pretrained model
    else:
        print(colorama.Fore.RED + "Error: unsupported task:", task)
        # a bare `raise` has no active exception to re-raise here, so raise one explicitly
        raise ValueError(f"unsupported task: {task}")

    results = model.train(data=yaml, epochs=epochs, imgsz=640) # train the model

    metrics = model.val() # It'll automatically evaluate the data you trained, no arguments needed, dataset and settings remembered

    model.export(format="onnx") #, dynamic=True) # export the model, cannot specify dynamic=True, opencv does not support
    # model.export(format="onnx", opset=12, simplify=True, dynamic=False, imgsz=640)
    model.export(format="torchscript") # libtorch


if __name__ == "__main__":
    colorama.init()
    args = parse_args()

    train(args.task, args.yaml, args.epochs)

    print(colorama.Fore.GREEN + "====== execution completed ======")

The following code uses the libtorch API to load the torchscript file and perform instance segmentation:

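#include <algorithm>
#include <chrono>
#include <filesystem>
#include <fstream>
#include <iostream>
#include <map>
#include <random>
#include <string>
#include <vector>

#include <opencv2/opencv.hpp>
#include <torch/script.h>
#include <torch/torch.h>

namespace {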

constexpr bool cuda_enabled{ false };

constexpr int input_size[2]{ 640, 640 }; // {height,width}, input shape (1, 3, 640, 640) BCHW and output shape(s): detect:(1,6,8400); segment:(1,38,8400),(1,32,160,160)

constexpr float confidence_threshold{ 0.45 }; // confidence threshold

constexpr float iou_threshold{ 0.50 }; // iou threshold

constexpr float mask_threshold{ 0.50 }; // segment mask threshold

// note: string literals are const, so the pointers must be declared const char*

#ifdef _MSC_VER

constexpr const char* onnx_file{ "../../../data/best.onnx" };

constexpr const char* torchscript_file{ "../../../data/best.torchscript" };

constexpr const char* images_dir{ "../../../data/images/predict" };

constexpr const char* result_dir{ "../../../data/result" };

constexpr const char* classes_file{ "../../../data/images/labels.txt" };

#else

constexpr const char* onnx_file{ "data/best.onnx" };

constexpr const char* torchscript_file{ "data/best.torchscript" };

constexpr const char* images_dir{ "data/images/predict" };

constexpr const char* result_dir{ "data/result" };

constexpr const char* classes_file{ "data/images/labels.txt" };

#endif

cv::Mat modify_image_size(const cv::Mat& img)

{

auto max = std::max(img.rows, img.cols);

cv::Mat ret = cv::Mat::zeros(max, max, CV_8UC3);

img.copyTo(ret(cv::Rect(0, 0, img.cols, img.rows)));

return ret;

}

std::vector<std::string> parse_classes_file(const char* name)

{

std::vector<std::string> classes;

std::ifstream file(name);

if (!file.is_open()) {

std::cerr << "Error: fail to open classes file: " << name << std::endl;

return classes;

}

std::string line;

while (std::getline(file, line)) {

auto pos = line.find_first_of(" ");

classes.emplace_back(line.substr(0, pos));

}

file.close();

return classes;

}

auto get_dir_images(const char* name)

{

std::map<std::string, std::string> images; // image name, image path + image name

for (auto const& dir_entry : std::filesystem::directory_iterator(name)) {

if (dir_entry.is_regular_file())

images[dir_entry.path().filename().string()] = dir_entry.path().string();

}

return images;

}

float image_preprocess(const cv::Mat& src, cv::Mat& dst)

{

cv::cvtColor(src, dst, cv::COLOR_BGR2RGB);

float scalex = src.cols * 1.f / input_size[1];

float scaley = src.rows * 1.f / input_size[0];

if (scalex > scaley)

cv::resize(dst, dst, cv::Size(input_size[1], static_cast<int>(src.rows / scalex)));

else

cv::resize(dst, dst, cv::Size(static_cast<int>(src.cols / scaley), input_size[0]));

cv::Mat tmp = cv::Mat::zeros(input_size[0], input_size[1], CV_8UC3);

dst.copyTo(tmp(cv::Rect(0, 0, dst.cols, dst.rows)));

dst = tmp;

return (scalex > scaley) ? scalex : scaley;

}

void get_masks(const cv::Mat& features, const cv::Mat& proto, const std::vector<int>& output1_sizes, const cv::Mat& frame, const cv::Rect box, cv::Mat& mk)

{

const cv::Size shape_src(frame.cols, frame.rows), shape_input(input_size[1], input_size[0]), shape_mask(output1_sizes[3], output1_sizes[2]);

cv::Mat res = (features * proto).t();

res = res.reshape(1, { shape_mask.height, shape_mask.width });

// apply sigmoid to the mask

cv::exp(-res, res);

res = 1.0 / (1.0 + res);

cv::resize(res, res, shape_input);

float scalex = shape_src.width * 1.0 / shape_input.width;

float scaley = shape_src.height * 1.0 / shape_input.height;

cv::Mat tmp;

if (scalex > scaley)

cv::resize(res, tmp, cv::Size(shape_src.width, static_cast<int>(shape_input.height * scalex)));

else

cv::resize(res, tmp, cv::Size(static_cast<int>(shape_input.width * scaley), shape_src.height));

cv::Mat dst = tmp(cv::Rect(0, 0, shape_src.width, shape_src.height));

mk = dst(box) > mask_threshold;

}

void draw_boxes_mask(const std::vector<std::string>& classes, const std::vector<int>& ids, const std::vector<float>& confidences,

const std::vector<cv::Rect>& boxes, const std::vector<cv::Mat>& masks, const std::string& name, cv::Mat& frame)

{

std::cout << "image name: " << name << ", number of detections: " << ids.size() << std::endl;

std::random_device rd;

std::mt19937 gen(rd());

std::uniform_int_distribution<int> dis(100, 255);

cv::Mat mk = frame.clone();

std::vector<cv::Scalar> colors;

for (auto i = 0; i < classes.size(); ++i)

colors.emplace_back(cv::Scalar(dis(gen), dis(gen), dis(gen)));

for (auto i = 0; i < ids.size(); ++i) {

cv::rectangle(frame, boxes[i], colors[ids[i]], 2);

std::string class_string = classes[ids[i]] + ' ' + std::to_string(confidences[i]).substr(0, 4);

cv::Size text_size = cv::getTextSize(class_string, cv::FONT_HERSHEY_DUPLEX, 1, 2, 0);

cv::Rect text_box(boxes[i].x, boxes[i].y - 40, text_size.width + 10, text_size.height + 20);

cv::rectangle(frame, text_box, colors[ids[i]], cv::FILLED);

cv::putText(frame, class_string, cv::Point(boxes[i].x + 5, boxes[i].y - 10), cv::FONT_HERSHEY_DUPLEX, 1, cv::Scalar(0, 0, 0), 2, 0);

mk(boxes[i]).setTo(colors[ids[i]], masks[i]);

}

cv::addWeighted(frame, 0.5, mk, 0.5, 0, frame);

//cv::imshow("Inference", frame);

//cv::waitKey(-1);

std::string path(result_dir);

cv::imwrite(path + "/" + name, frame);

}

void post_process_mask(const cv::Mat& output0, const cv::Mat& output1, const std::vector<int>& output1_sizes, const std::vector<std::string>& classes, const std::string& name, cv::Mat& frame)

{

std::vector<int> class_ids;

std::vector<float> confidences;

std::vector<cv::Rect> boxes;

std::vector<std::vector<float>> masks;

float scalex = frame.cols * 1.f / input_size[1]; // note: image_preprocess function

float scaley = frame.rows * 1.f / input_size[0];

auto scale = (scalex > scaley) ? scalex : scaley;

const float* data = (float*)output0.data;

for (auto i = 0; i < output0.rows; ++i) {

cv::Mat scores(1, classes.size(), CV_32FC1, (float*)data + 4);

cv::Point class_id;

double max_class_score;

cv::minMaxLoc(scores, 0, &max_class_score, 0, &class_id);

if (max_class_score > confidence_threshold) {

confidences.emplace_back(max_class_score);

class_ids.emplace_back(class_id.x);

masks.emplace_back(std::vector<float>(data + 4 + classes.size(), data + output0.cols)); // 32

float x = data[0];

float y = data[1];

float w = data[2];

float h = data[3];

int left = std::max(0, std::min(int((x - 0.5 * w) * scale), frame.cols));

int top = std::max(0, std::min(int((y - 0.5 * h) * scale), frame.rows));

int width = std::max(0, std::min(int(w * scale), frame.cols - left));

int height = std::max(0, std::min(int(h * scale), frame.rows - top));

boxes.emplace_back(cv::Rect(left, top, width, height));

}

data += output0.cols;

}

std::vector<int> nms_result;

cv::dnn::NMSBoxes(boxes, confidences, confidence_threshold, iou_threshold, nms_result);

cv::Mat proto = output1.reshape(0, { output1_sizes[1], output1_sizes[2] * output1_sizes[3] });

std::vector<int> ids;

std::vector<float> confs;

std::vector<cv::Rect> rects;

std::vector<cv::Mat> mks;

for (size_t i = 0; i < nms_result.size(); ++i) {

auto index = nms_result[i];

ids.emplace_back(class_ids[index]);

confs.emplace_back(confidences[index]);

boxes[index] = boxes[index] & cv::Rect(0, 0, frame.cols, frame.rows);

cv::Mat mk;

get_masks(cv::Mat(masks[index]).t(), proto, output1_sizes, frame, boxes[index], mk);

mks.emplace_back(mk);

rects.emplace_back(boxes[index]);

}

draw_boxes_mask(classes, ids, confs, rects, mks, name, frame);

}

} // namespace

int test_yolov8_segment_libtorch()

{

if (auto flag = torch::cuda::is_available(); flag == true)

std::cout << "cuda is available" << std::endl;

else

std::cout << "cuda is not available" << std::endl;

torch::Device device(torch::cuda::is_available() ? torch::kCUDA : torch::kCPU);

auto classes = parse_classes_file(classes_file);

if (classes.size() == 0) {

std::cerr << "Error: fail to parse classes file: " << classes_file << std::endl;

return -1;

}

std::cout << "classes: ";

for (const auto& val : classes) {

std::cout << val << " ";

}

std::cout << std::endl;

if (!std::filesystem::exists(result_dir)) {

std::filesystem::create_directories(result_dir);

}

try {

torch::jit::script::Module model;

if (torch::cuda::is_available() == true)

model = torch::jit::load(torchscript_file, torch::kCUDA);

else

model = torch::jit::load(torchscript_file, torch::kCPU);

model.eval();

// note: cpu is normal; gpu is abnormal: the model may not be fully placed on the gpu

// model = torch::jit::load(file); model.to(torch::kCUDA) ==> model = torch::jit::load(file, torch::kCUDA)

// model.to(device, torch::kFloat32);

for (const auto& [key, val] : get_dir_images(images_dir)) {

cv::Mat frame = cv::imread(val, cv::IMREAD_COLOR);

if (frame.empty()) {

std::cerr << "Warning: unable to load image: " << val << std::endl;

continue;

}

auto tstart = std::chrono::high_resolution_clock::now();

cv::Mat bgr = modify_image_size(frame);

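cv::resize(bgr, bgr, cv::Size(input_size[1], input_size[0]));

cv::cvtColor(bgr, bgr, cv::COLOR_BGR2RGB); // cv::imread returns BGR, while YOLOv8 expects RGB input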

torch::Tensor tensor = torch::from_blob(bgr.data, { bgr.rows, bgr.cols, 3 }, torch::kByte).to(device);

tensor = tensor.toType(torch::kFloat32).div(255);

tensor = tensor.permute({ 2, 0, 1 });

tensor = tensor.unsqueeze(0);

std::vector<torch::jit::IValue> inputs{ tensor };

// reference: https://medium.com/@psopen11/complete-guide-to-gpu-accelerated-yolov8-segmentation-in-c-via-libtorch-c-dlls-a0e3e6029d82

auto output = model.forward(inputs).toTuple()->elements();

auto output0 = output[0].toTensor().transpose(1, 2).contiguous().to(torch::kCPU);

auto output1 = output[1].toTensor().to(torch::kCPU);

if (output0.dim() != 3 || output1.dim() != 4) {

std::cerr << "Error: mismatched dimensions: " << output0.dim() << "," << output1.dim() << std::endl;

continue; // skip this image: the outputs cannot be post-processed

}

cv::Mat data0 = cv::Mat(output0.size(1), output0.size(2), CV_32FC1, output0.data_ptr<float>());

std::vector<int> sizes;

for (int i = 0; i < 4; ++i)

sizes.emplace_back(output1.size(i));

cv::Mat data1 = cv::Mat(sizes, CV_32F, output1.data_ptr<float>());

auto tend = std::chrono::high_resolution_clock::now();

std::cout << "elapsed milliseconds: " << std::chrono::duration_cast<std::chrono::milliseconds>(tend - tstart).count() << " ms" << std::endl;

post_process_mask(data0, data1, sizes, classes, key, frame);

}

}

catch (const c10::Error& e) {

std::cerr << "Error: " << e.msg() << std::endl;

return -1;

}

return 0;

}

The content of the labels.txt file is as follows (only 2 classes):

watermelon 0

wintermelon 1

Notes:

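1. The libtorch version used here is 2.2.2.

2. The function torch::cuda::is_available() determines whether inference runs on the CPU or the GPU.

3. When the project is not built with CMake, torch::cuda::is_available() returns false even on a machine with a GPU. The workaround is to add the following statement under Project Properties: Linker --> Command Line: Additional Options: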

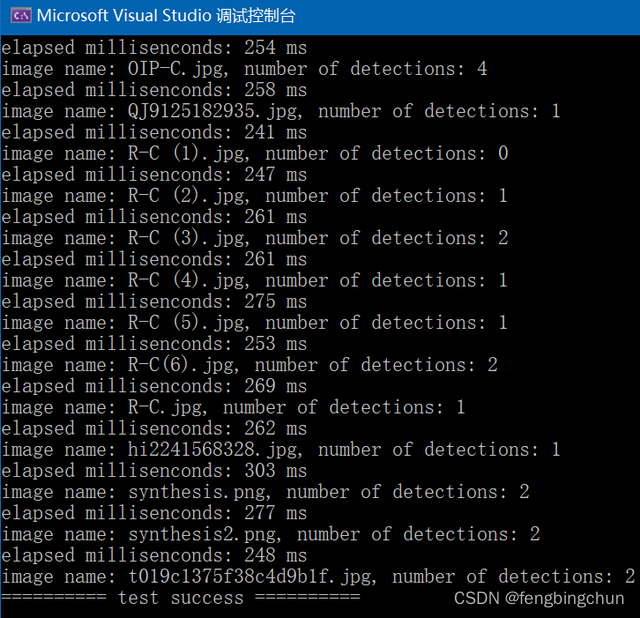

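/INCLUDE:?warp_size@cuda@at@@YAHXZ

The execution results are shown in the figure below. The timings shown were measured on the CPU; on the GPU each image takes only about 20 ms.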

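The segmentation result for one of the images is shown in the figure below. The CPU and GPU results differ in some cases. Although the post-processing is the same as in the opencv/onnxruntime implementations, the results are not as good as theirs.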
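Since the post-processing is what the libtorch, opencv, and onnxruntime versions share, it may help to check its arithmetic outside C++. The following is a minimal pure-Python sketch of two steps from post_process_mask and get_masks above: mapping a box from the 640x640 network input back to source pixels, and turning mask coefficients plus prototype maps into a binary mask. The function names (letterbox_scale, to_source_box, decode_mask) are hypothetical and do not appear in the original code, and the prototypes are passed as flattened Python lists rather than cv::Mat objects.

```python
import math

INPUT_SIZE = (640, 640)  # (height, width), mirroring the C++ input_size constant


def letterbox_scale(src_w, src_h, input_size=INPUT_SIZE):
    """Factor that maps network-input coordinates back to source pixels."""
    return max(src_w / input_size[1], src_h / input_size[0])


def to_source_box(cx, cy, w, h, scale, src_w, src_h):
    """Convert a centre-format box in input space to a clamped (left, top, width, height)."""
    left = max(0, min(int((cx - 0.5 * w) * scale), src_w))
    top = max(0, min(int((cy - 0.5 * h) * scale), src_h))
    width = max(0, min(int(w * scale), src_w - left))
    height = max(0, min(int(h * scale), src_h - top))
    return left, top, width, height


def sigmoid(z):
    """Logistic function written with tanh so large |z| cannot overflow."""
    return 0.5 * (1.0 + math.tanh(0.5 * z))


def decode_mask(coeffs, protos, threshold=0.5):
    """Binary mask from K mask coefficients and K flattened prototype maps.

    coeffs: list of K floats (32 values per detection in the model above).
    protos: K lists, each holding one value per mask pixel.
    """
    n_pixels = len(protos[0])
    logits = [sum(c * p[i] for c, p in zip(coeffs, protos)) for i in range(n_pixels)]
    return [sigmoid(z) > threshold for z in logits]


if __name__ == "__main__":
    scale = letterbox_scale(1280, 960)
    print(scale)                                               # 2.0
    print(to_source_box(320, 240, 100, 50, scale, 1280, 960))  # (540, 430, 200, 100)
    print(decode_mask([1.0, -1.0], [[2.0, 0.0, 0.0], [0.0, 3.0, 0.0]]))  # [True, False, False]
```

For a 1280x960 source image the scale is 2.0, so a box centred at (320, 240) with size 100x50 in input space becomes (540, 430, 200, 100) in source pixels. The sketch omits the resize and crop that get_masks applies to the 160x160 mask before thresholding.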
