BasicRNNCell is the simplest implementation of the abstract class RNNCell.

class BasicRNNCell(RNNCell):

def __init__(self, num_units, activation=None, reuse=None):

super(BasicRNNCell, self).__init__(_reuse=reuse)

self._num_units = num_units

self._activation = activation or math_ops.tanh

self._linear = None

@property

def state_size(self):

return self._num_units

@property

def output_size(self):

return self._num_units

def call(self, inputs, state):

if self._linear is None:

self._linear = _Linear([inputs, state], self._num_units, True)

output = self._activation(self._linear([inputs, state]))

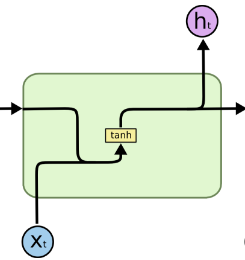

return output, output实现了下面的运算:

Expressed as a formula:

$$h_t = \tanh(W_k [x_t, h_{t-1}] + b)$$

This formula is sometimes written in the following form (W is called the kernel and U the recurrent kernel; see here for a diagram):

$$h_t = \tanh(W x_t + U h_{t-1} + b)$$

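The result is the same: $W_k$ is simply $W$ and $U$ placed side by side, so $W_k [x_t, h_{t-1}] = W x_t + U h_{t-1}$.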
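The equivalence of the two forms can be checked numerically. The following is a minimal NumPy sketch, not TensorFlow code. It assumes the batched row-vector convention (each row of `x_t` is one sample, so the product is written `x @ W` rather than `W x`), and every array here is random data made up for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
batch_size, input_dim, num_units = 3, 2, 4

x_t = rng.normal(size=(batch_size, input_dim))
h_prev = rng.normal(size=(batch_size, num_units))

# Assumed layout: a single kernel of shape (input_dim + num_units, num_units).
W_k = rng.normal(size=(input_dim + num_units, num_units))
b = rng.normal(size=(num_units,))

# Form 1: concatenate [x_t, h_{t-1}] and multiply by the full kernel.
h_concat = np.tanh(np.concatenate([x_t, h_prev], axis=1) @ W_k + b)

# Form 2: split the kernel into W (input part) and U (recurrent part).
W = W_k[:input_dim]
U = W_k[input_dim:]
h_split = np.tanh(x_t @ W + h_prev @ U + b)

print(np.allclose(h_concat, h_split))  # True
```

With row vectors the kernel is split along its rows, which is the transposed view of placing $W$ and $U$ side by side in the column-vector formula.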

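As the source code shows, `state_size` and `output_size` are both equal to `num_units`, and `call` returns the same tensor twice: once as the output and once as the new state.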
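To make these two properties concrete, here is a small NumPy stand-in for the cell. `NumpyBasicRNNCell` is a hypothetical class written for this illustration, not part of TensorFlow. Unlike the real cell, it takes `input_dim` in the constructor and initializes the kernel with random normal values and the bias with zeros; both choices are assumptions made to keep the sketch short.

```python
import numpy as np


class NumpyBasicRNNCell:
    """Hypothetical NumPy stand-in for BasicRNNCell (illustration only)."""

    def __init__(self, num_units, input_dim, seed=0):
        rng = np.random.default_rng(seed)
        self._num_units = num_units
        # Assumed initialization: random kernel, zero bias.
        self._kernel = rng.normal(size=(input_dim + num_units, num_units))
        self._bias = np.zeros(num_units)

    @property
    def state_size(self):
        return self._num_units

    @property
    def output_size(self):
        return self._num_units

    def __call__(self, inputs, state):
        # tanh applied to the concatenated [inputs, state] times the kernel, plus bias.
        concat = np.concatenate([inputs, state], axis=1)
        output = np.tanh(concat @ self._kernel + self._bias)
        # Same object returned as both output and new state.
        return output, output


cell = NumpyBasicRNNCell(num_units=4, input_dim=2)
inputs = np.ones((3, 2))
previous_state = np.zeros((3, 4))
output, state = cell(inputs, previous_state)

print(cell.state_size == cell.output_size == 4)  # True
print(output.shape, state.shape)                 # (3, 4) (3, 4)
print(output is state)                           # True
```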

A simple usage example:

```python
# Written for TensorFlow 1.x (tf.contrib was removed in TensorFlow 2.0).
import tensorflow as tf
import numpy as np

batch_size = 3
input_dim = 2
output_dim = 4

inputs = tf.placeholder(dtype=tf.float32, shape=(batch_size, input_dim))
previous_state = tf.random_normal(shape=(batch_size, output_dim))

cell = tf.contrib.rnn.BasicRNNCell(num_units=output_dim)
output, state = cell(inputs, previous_state)

X = np.ones(shape=(batch_size, input_dim))

with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())
    # Fetch previous_state in the same run: tf.random_normal draws new values
    # on every sess.run, so a separate eval() would print a different state
    # from the one used to compute the output.
    o, s, p = sess.run([output, state, previous_state], feed_dict={inputs: X})
    print(X)
    print(p)
    print(o)
    print(s)
```

Out:

```
# Input:
[[ 1. 1.]
 [ 1. 1.]
 [ 1. 1.]]

# Previous State:
[[ 0.29562142 1.88447475 -0.71091568 -1.03161728]
 [-0.32763469 -0.4521957 -0.33536151 0.06760707]
 [-0.04627729 0.04288582 -0.62693876 0.70083541]]

# Output = State
[[ 0.1948889 0.77429289 0.41136274 0.42551333]
 [-0.99327117 -0.68583459 0.97010344 -0.56064779]
 [-0.98540735 -0.51250875 0.92181391 -0.98040372]]
[[ 0.1948889 0.77429289 0.41136274 0.42551333]
 [-0.99327117 -0.68583459 0.97010344 -0.56064779]
 [-0.98540735 -0.51250875 0.92181391 -0.98040372]]
```

Extra

- To inspect the internal values of $W_k$ and $b$, see here.
