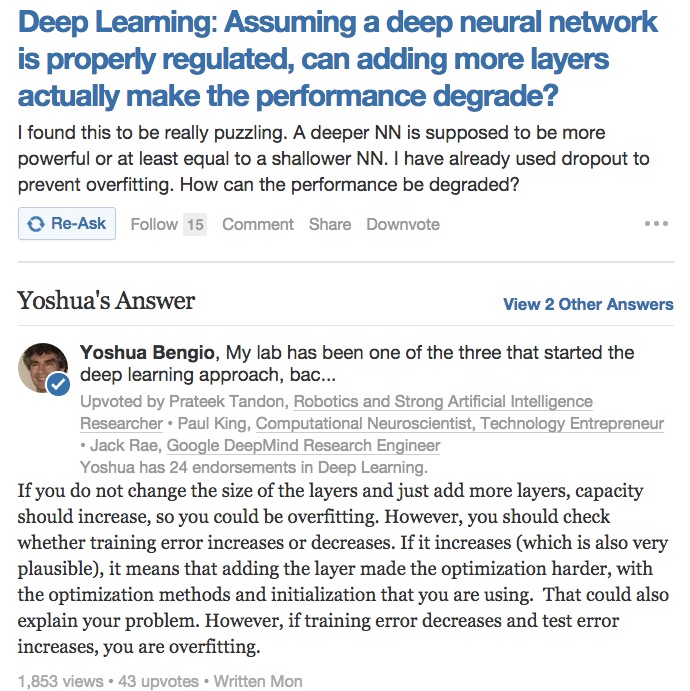

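A question on Quora asked: "Does increasing the number of layers in a DNN degrade performance?" In his answer, Bengio pointed out that simply adding layers can lead to overfitting, and that whether the training error rises or falls after the change is what tells you which problem you actually have.

His original words: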

If you do not change the size of the layers and just add more layers, capacity should increase, so you could be overfitting. However, you should check whether training error increases or decreases. If it increases (which is also very plausible), it means that adding the layer made the optimization harder, with the optimization methods and initialization that you are using. That could also explain your problem. However, if training error decreases and test error increases, you are overfitting.

The original answer is linked here.
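The decision rule in Bengio's answer can be written down as a small check. The following is only a minimal sketch: the function name `diagnose`, its arguments, and the tolerance `tol` are hypothetical and do not come from the original answer. It compares the errors measured before and after adding layers and reports which of the two explanations applies.

```python
def diagnose(train_before, train_after, test_before, test_after, tol=0.0):
    """Classify the effect of adding layers, following the quoted reasoning.

    All names here are illustrative assumptions. Errors are plain floats
    (for example misclassification rates); `tol` is the smallest change
    treated as a real increase or decrease.
    """
    train_delta = train_after - train_before
    test_delta = test_after - test_before

    # Training error went up: the deeper network is harder to optimize
    # with the current optimizer and initialization.
    if train_delta > tol:
        return "optimization"

    # Training error went down while test error went up: overfitting.
    if train_delta < -tol and test_delta > tol:
        return "overfitting"

    # Any other combination is not covered by the quoted answer.
    return "inconclusive"


if __name__ == "__main__":
    print(diagnose(0.10, 0.15, 0.20, 0.25))  # training error rose
    print(diagnose(0.10, 0.05, 0.20, 0.25))  # train fell, test rose
```

In practice the errors are noisy, so a nonzero `tol` (or averaging over several runs) would be a reasonable choice before drawing either conclusion.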

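However, @G_Auss, a graduate of New York University, responded to this answer in a Weibo comment:

That is not what actually happens. Whether adding parameters increases the complexity of the hypothesis space depends on the range of values the parameters actually take. Whether adding depth (stacking more layers) increases complexity depends on whether the Lipschitz constant remains bounded after the layers are added, and on how large that bound is. That adding width (more parameters per layer) increases model complexity is essentially undisputed. Applying statistical learning theory to deep learning by looking only at the number of parameters is an oversimplification that does not match reality.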
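To make the Lipschitz remark concrete, here is a standard upper bound, offered as an illustration of the kind of quantity the comment may have in mind rather than as the commenter's own argument. The notation ($f_i$, $W_i$, $b_i$, $\sigma$, $L$) is assumed here and does not appear in the original. Take a network $f = f_L \circ \cdots \circ f_1$ with layers $f_i(x) = \sigma(W_i x + b_i)$, where $\sigma$ is a 1-Lipschitz activation such as ReLU. Then

$$
\mathrm{Lip}(f) \;\le\; \prod_{i=1}^{L} \mathrm{Lip}(f_i) \;\le\; \prod_{i=1}^{L} \lVert W_i \rVert_2 ,
$$

where $\lVert W_i \rVert_2$ is the spectral norm of the weight matrix. Appending a layer $f_{L+1}$ multiplies this bound by $\lVert W_{L+1} \rVert_2$. If that norm is at most 1, the bound does not grow, even though the parameter count does; if it is larger than 1, the bound can grow geometrically with depth. Under this view, the effect of extra depth is governed by the size of the weights, not by their number.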
