Locks fall into two main categories: spin locks and mutexes.

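Spin lock

A thread repeatedly checks whether the lock variable is available, busy-waiting the whole time. Once a thread has acquired a spin lock, it holds the lock until it releases it. Waiting threads burn CPU instead of sleeping, but this avoids the scheduling cost of a thread context switch, so spin locks suit critical sections that are held only briefly.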
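The busy-wait behavior described above can be sketched in standard C++. This is a minimal illustration, not how any system framework implements its spin lock; `SpinLock` and `run_spin_counter` are hypothetical names introduced here.

```cpp
#include <atomic>
#include <cassert>
#include <thread>
#include <vector>

// Hypothetical minimal spin lock built on std::atomic_flag.
class SpinLock {
public:
    void lock() {
        // Busy-wait: retry until the flag was clear before our test_and_set.
        while (flag_.test_and_set(std::memory_order_acquire)) {
            std::this_thread::yield();  // scheduler hint; the thread never sleeps on the lock
        }
    }
    void unlock() { flag_.clear(std::memory_order_release); }

private:
    std::atomic_flag flag_ = ATOMIC_FLAG_INIT;
};

// Increments a shared counter from several threads under the spin lock.
long run_spin_counter(int thread_count, int iterations) {
    assert(thread_count > 0 && iterations >= 0);
    SpinLock lock;
    long counter = 0;
    std::vector<std::thread> workers;
    for (int t = 0; t < thread_count; ++t) {
        workers.emplace_back([&] {
            for (int i = 0; i < iterations; ++i) {
                lock.lock();
                ++counter;  // deliberately tiny critical section
                lock.unlock();
            }
        });
    }
    for (auto& w : workers) w.join();
    return counter;
}
```

The critical section is a single increment, which is the kind of short hold time where spinning can beat putting the thread to sleep.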

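Mutex

A mechanism used in multithreaded programming to prevent two threads from reading and writing the same shared resource (a global variable, for example) at the same time. It achieves this by dividing the code into critical sections. Unlike a spin lock, a thread that cannot get the mutex is put to sleep until the lock becomes available.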
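As a rough illustration of a critical section guarded by a mutex, the sketch below uses `std::mutex` from the C++ standard library. `Account` and `run_deposits` are hypothetical names made up for this example.

```cpp
#include <cassert>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical shared resource: a balance guarded by one mutex.
struct Account {
    std::mutex mutex;
    long balance = 0;

    void deposit(long amount) {
        // Critical section: only one thread at a time runs the next line.
        std::lock_guard<std::mutex> guard(mutex);
        balance += amount;
    }
};

// Runs several threads that each deposit 1 a number of times.
long run_deposits(int thread_count, int deposits_per_thread) {
    assert(thread_count > 0 && deposits_per_thread >= 0);
    Account account;
    std::vector<std::thread> workers;
    for (int t = 0; t < thread_count; ++t) {
        workers.emplace_back([&account, deposits_per_thread] {
            for (int i = 0; i < deposits_per_thread; ++i) account.deposit(1);
        });
    }
    for (auto& w : workers) w.join();
    return account.balance;  // safe to read: all writers have been joined
}
```

`std::lock_guard` releases the mutex when it goes out of scope, so the lock is dropped even if the critical section returns early.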

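Recursive lock

The same thread can acquire the lock N times without deadlocking, as long as every acquisition is matched by a release. Examples: NSRecursiveLock, and pthread_mutex with the recursive attribute (PTHREAD_MUTEX_RECURSIVE).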
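A small sketch of re-entrant locking, using `std::recursive_mutex` as a stand-in for NSRecursiveLock. The names `g_lock` and `locked_sum` are hypothetical.

```cpp
#include <cassert>
#include <mutex>

std::recursive_mutex g_lock;  // hypothetical lock shared by every recursion level

// Recursively sums 1..n, taking the same lock again at each level.
int locked_sum(int n) {
    assert(n >= 0);
    std::lock_guard<std::recursive_mutex> guard(g_lock);  // re-entry by the owning thread succeeds
    if (n == 0) return 0;
    return n + locked_sum(n - 1);
}
```

Calling `locked_sum(10)` takes the lock eleven times on one thread and releases it eleven times as the recursion unwinds. With a plain `std::mutex`, relocking on the owning thread is undefined behavior and typically hangs.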

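Read-write lock

A read-write lock is often described as a special kind of spin lock, although some implementations block waiting threads instead of spinning. It divides the threads that access a shared resource into readers and writers: readers only read the shared resource, while writers need to modify it. Multiple readers may hold the lock at the same time, but a writer needs exclusive access.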
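The reader/writer split can be sketched with the POSIX `pthread_rwlock_t` API. This is only an illustration; `SharedValue` and `run_readers_and_writers` are hypothetical names, and error codes from the pthread calls are ignored for brevity.

```cpp
#include <cassert>
#include <pthread.h>
#include <thread>
#include <vector>

// Hypothetical shared value guarded by a POSIX read-write lock.
class SharedValue {
public:
    SharedValue() { pthread_rwlock_init(&lock_, nullptr); }
    ~SharedValue() { pthread_rwlock_destroy(&lock_); }

    int read() {
        pthread_rwlock_rdlock(&lock_);  // shared: many readers may hold it at once
        int copy = value_;
        pthread_rwlock_unlock(&lock_);
        return copy;
    }

    void increment() {
        pthread_rwlock_wrlock(&lock_);  // exclusive: no readers or other writers
        ++value_;
        pthread_rwlock_unlock(&lock_);
    }

private:
    pthread_rwlock_t lock_;
    int value_ = 0;
};

// Starts writer threads plus two reader threads and returns the final value.
int run_readers_and_writers(int writer_count, int writes_per_writer) {
    assert(writer_count > 0 && writes_per_writer >= 0);
    SharedValue shared;
    std::vector<std::thread> workers;
    for (int w = 0; w < writer_count; ++w) {
        workers.emplace_back([&shared, writes_per_writer] {
            for (int i = 0; i < writes_per_writer; ++i) shared.increment();
        });
    }
    for (int r = 0; r < 2; ++r) {
        workers.emplace_back([&shared] {
            for (int i = 0; i < 1000; ++i) (void)shared.read();
        });
    }
    for (auto& t : workers) t.join();
    return shared.read();
}
```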

synchronized

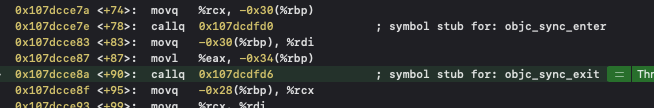

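I wanted to read the source but did not know where it lived, so, going on experience, I dropped into assembly debugging. That turned up the entry point objc_sync_enter and the exit point objc_sync_exit, both of which live in the objc source.

objc_sync_enter: if obj is nil, it does nothing. Otherwise it obtains the SyncData for obj, which wraps a recursive mutex and is a node in a linked list, and it records the acquisition in thread-local storage (TLS). With the ACQUIRE state, the lock count for the object is incremented by one.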

```cpp
int objc_sync_enter(id obj)
{
    int result = OBJC_SYNC_SUCCESS;

    if (obj) {
        SyncData* data = id2data(obj, ACQUIRE);
        ASSERT(data);
        data->mutex.lock();
    } else {
        // @synchronized(nil) does nothing
        if (DebugNilSync) {
            _objc_inform("NIL SYNC DEBUG: @synchronized(nil); set a breakpoint on objc_sync_nil to debug");
        }
        objc_sync_nil();
    }

    return result;
}
```

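```cpp
typedef struct alignas(CacheLineSize) SyncData {
    struct SyncData* nextData;
    DisguisedPtr<objc_object> object;
    int32_t threadCount;  // number of THREADS using this block
    recursive_mutex_t mutex;
} SyncData;
```

objc_sync_exit: the exit path also calls id2data, this time passing RELEASE, which decrements the lockCount kept for the object. When the count reaches 0, the entry is removed from the calling thread's cache and the SyncData's threadCount is decremented. The SyncData node itself stays in the list so it can be reused.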

```cpp
int objc_sync_exit(id obj)
{
    int result = OBJC_SYNC_SUCCESS;

    if (obj) {
        SyncData* data = id2data(obj, RELEASE);
        if (!data) {
            result = OBJC_SYNC_NOT_OWNING_THREAD_ERROR;
        } else {
            bool okay = data->mutex.tryUnlock();
            if (!okay) {
                result = OBJC_SYNC_NOT_OWNING_THREAD_ERROR;
            }
        }
    } else {
        // @synchronized(nil) does nothing
    }

    return result;
}
```

id2data is the core of all this.

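- On entry, the caller passes ACQUIRE. id2data first checks the calling thread's caches (the single-entry TLS fast cache, then the per-thread SyncCache) for a SyncData that matches the object. If one is found, its lockCount is incremented and it is returned. Otherwise id2data takes the spinlock and walks the list that the StripedMap holds for this object: a matching node gets its threadCount incremented, and if there is none, an unused node is reused or a new SyncData is allocated. The result is then saved in the thread's cache with a lockCount of 1.

- On exit, id2data is called the same way, only with RELEASE. It finds the SyncData for obj in the thread's caches and decrements the lockCount. When the count reaches 0, the entry is removed from the thread's cache and threadCount is decremented atomically. The SyncData is not freed or unlinked from the StripedMap list; it stays there for reuse.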
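The bookkeeping described in the two points above can be modeled in a few lines of standard C++. This is a deliberately simplified sketch and not the runtime's code: `Node`, `g_table`, `t_lock_counts`, `sync_enter`, and `sync_exit` are hypothetical names, a single `std::map` replaces the StripedMap lists, one `thread_local` map replaces both per-thread caches, and the return values differ from the real functions so the counts are visible.

```cpp
#include <cassert>
#include <map>
#include <mutex>

// Simplified stand-in for SyncData: one recursive mutex per locked object.
struct Node {
    std::recursive_mutex mutex;
    int threadCount = 0;  // number of threads currently using this node
};

std::mutex g_table_lock;                                // stands in for the per-list spinlock
std::map<const void*, Node> g_table;                    // stands in for the StripedMap lists; nodes are never erased
thread_local std::map<const void*, int> t_lock_counts;  // stands in for the per-thread caches

// Model of objc_sync_enter: returns this thread's lock count for obj afterwards.
int sync_enter(const void* obj) {
    if (!obj) return 0;  // like @synchronized(nil): do nothing
    Node* node;
    {
        std::lock_guard<std::mutex> guard(g_table_lock);
        node = &g_table[obj];                              // find or create the node
        if (t_lock_counts[obj] == 0) ++node->threadCount;  // first acquire on this thread
    }
    int count = ++t_lock_counts[obj];
    node->mutex.lock();  // recursive, so nested enters on one thread succeed
    return count;
}

// Model of objc_sync_exit: returns false if this thread does not hold obj.
bool sync_exit(const void* obj) {
    if (!obj) return true;
    auto it = t_lock_counts.find(obj);
    if (it == t_lock_counts.end() || it->second == 0) return false;
    Node* node;
    {
        std::lock_guard<std::mutex> guard(g_table_lock);
        node = &g_table[obj];
        if (--it->second == 0) {
            assert(node->threadCount > 0);
            --node->threadCount;      // this thread no longer uses the node
            t_lock_counts.erase(it);  // drop it from the per-thread cache only
        }
    }
    node->mutex.unlock();
    return true;
}
```

Entering twice and exiting twice on the same object brings the per-thread count to 2 and then back to 0, while the node stays in `g_table`. The runtime's actual id2data is considerably more involved.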

```cpp
static SyncData* id2data(id object, enum usage why)
{
    spinlock_t *lockp = &LOCK_FOR_OBJ(object);
    SyncData **listp = &LIST_FOR_OBJ(object);
    SyncData* result = NULL;

#if SUPPORT_DIRECT_THREAD_KEYS
    // Check per-thread single-entry fast cache for matching object
    bool fastCacheOccupied = NO;
    SyncData *data = (SyncData *)tls_get_direct(SYNC_DATA_DIRECT_KEY);
    if (data) {
        fastCacheOccupied = YES;

        if (data->object == object) {
            // Found a match in fast cache.
            uintptr_t lockCount;

            result = data;
            lockCount = (uintptr_t)tls_get_direct(SYNC_COUNT_DIRECT_KEY);
            if (result->threadCount <= 0 || lockCount <= 0) {
                _objc_fatal("id2data fastcache is buggy");
            }

            switch(why) {
            case ACQUIRE: {
                lockCount++;
                tls_set_direct(SYNC_COUNT_DIRECT_KEY, (void*)lockCount);
                break;
            }
            case RELEASE:
                lockCount--;
                tls_set_direct(SYNC_COUNT_DIRECT_KEY, (void*)lockCount);
                if (lockCount == 0) {
                    // remove from fast cache
                    tls_set_direct(SYNC_DATA_DIRECT_KEY, NULL);
                    // atomic because may collide with concurrent ACQUIRE
                    OSAtomicDecrement32Barrier(&result->threadCount);
                }
                break;
            case CHECK:
                // do nothing
                break;
            }

            return result;
        }
    }
#endif

    // Check per-thread cache of already-owned locks for matching object
    SyncCache *cache = fetch_cache(NO);
    if (cache) {
        unsigned int i;
        for (i = 0; i < cache->used; i++) {
            SyncCacheItem *item = &cache->list[i];
            if (item->data->object != object) continue;

            // Found a match.
            result = item->data;
            if (result->threadCount <= 0 || item->lockCount <= 0) {
                _objc_fatal("id2data cache is buggy");
            }

            switch(why) {
            case ACQUIRE:
                item->lockCount++;
                break;
            case RELEASE:
                item->lockCount--;
                if (item->lockCount == 0) {
                    // remove from per-thread cache
                    cache->list[i] = cache->list[--cache->used];
                    // atomic because may collide with concurrent ACQUIRE
                    OSAtomicDecrement32Barrier(&result->threadCount);
                }
                break;
            case CHECK:
                // do nothing
                break;
            }

            return result;
        }
    }

    // Thread cache didn't find anything.
    // Walk in-use list looking for matching object
    // Spinlock prevents multiple threads from creating multiple
    // locks for the same new object.
    // We could keep the nodes in some hash table if we find that there are
    // more than 20 or so distinct locks active, but we don't do that now.

    lockp->lock();

    {
        SyncData* p;
        SyncData* firstUnused = NULL;
        for (p = *listp; p != NULL; p = p->nextData) {
            if ( p->object == object ) {
                result = p;
                // atomic because may collide with concurrent RELEASE
                OSAtomicIncrement32Barrier(&result->threadCount);
                goto done;
            }
            if ( (firstUnused == NULL) && (p->threadCount == 0) )
                firstUnused = p;
        }

        // no SyncData currently associated with object
        if ( (why == RELEASE) || (why == CHECK) )
            goto done;

        // an unused one was found, use it
        if ( firstUnused != NULL ) {
            result = firstUnused;
            result->object = (objc_object *)object;
            result->threadCount = 1;
            goto done;
        }
    }

    // Allocate a new SyncData and add to list.
    // XXX allocating memory with a global lock held is bad practice,
    // might be worth releasing the lock, allocating, and searching again.
    // But since we never free these guys we won't be stuck in allocation very often.
    posix_memalign((void **)&result, alignof(SyncData), sizeof(SyncData));
    result->object = (objc_object *)object;
    result->threadCount = 1;
    new (&result->mutex) recursive_mutex_t(fork_unsafe_lock);
    result->nextData = *listp;
    *listp = result;

 done:
    lockp->unlock();
    if (result) {
        // Only new ACQUIRE should get here.
        // All RELEASE and CHECK and recursive ACQUIRE are
        // handled by the per-thread caches above.
        if (why == RELEASE) {
            // Probably some thread is incorrectly exiting
            // while the object is held by another thread.
            return nil;
        }
        if (why != ACQUIRE) _objc_fatal("id2data is buggy");
        if (result->object != object) _objc_fatal("id2data is buggy");

#if SUPPORT_DIRECT_THREAD_KEYS
        if (!fastCacheOccupied) {
            // Save in fast thread cache
            tls_set_direct(SYNC_DATA_DIRECT_KEY, result);
            tls_set_direct(SYNC_COUNT_DIRECT_KEY, (void*)1);
        } else
#endif
        {
            // Save in thread cache
            if (!cache) cache = fetch_cache(YES);
            cache->list[cache->used].data = result;
            cache->list[cache->used].lockCount = 1;
            cache->used++;
        }
    }

    return result;
}
```

Lock performance

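A screenshot borrowed from elsewhere compares the performance of the various locks in detail. synchronized comes out slowest in it because of the constant lookups, insertions, updates, and removals it performs internally on every enter and exit. It does, however, use a recursive lock and releases it automatically when the block exits, which avoids the most common deadlocks, so it is still widely used.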
