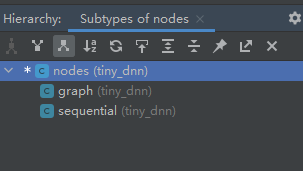

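Layer class hierarchy

Example forward-propagation call stack

Example backward-propagation call stack

Forward propagation

Backward propagation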

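Backward propagation involves:

- the network (a graph or a sequence, seq),

- layers (activation layers, CNN layers, RNN layers, fully connected layers, …)

Forward propagation involves:

- the network (a graph or a sequence, seq),

- layers (activation layers, CNN layers, RNN layers, fully connected layers, …)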

Turn off parallelism to see the full call stack

//config.h

// Uncomment the following line to turn off parallelism, so that everything runs as direct single-threaded calls.

#define CNN_SINGLE_THREAD
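To make the effect of this switch concrete, here is a minimal sketch of a `for_i`-style helper. It is not tiny-dnn's implementation: `for_i_sketch` and `doubled` are hypothetical names, and the parallel path uses `std::async` only as a placeholder for whatever threading backend the library is built with. The point is that with the macro defined, the loop body is called directly from the caller's thread, so a debugger can show the whole chain from `main` down to the kernel in one stack.

```cpp
#include <cassert>
#include <cstddef>
#include <future>
#include <vector>

// Uncomment to force the sequential path, mirroring the switch in config.h.
// #define CNN_SINGLE_THREAD

// Hypothetical stand-in for a for_i-style helper: calls f(i) for i in [0, size).
template <typename Func>
void for_i_sketch(bool parallelize, std::size_t size, Func f) {
#ifdef CNN_SINGLE_THREAD
  parallelize = false;  // single-thread build: always take the plain loop
#endif
  if (!parallelize) {
    // Direct call: f runs on the caller's thread.
    for (std::size_t i = 0; i < size; ++i) f(i);
    return;
  }
  // Placeholder parallel path: one task per index (a real library would chunk the range).
  std::vector<std::future<void>> tasks;
  tasks.reserve(size);
  for (std::size_t i = 0; i < size; ++i) {
    tasks.push_back(std::async(std::launch::async, f, i));
  }
  for (auto &t : tasks) t.get();  // wait for every task to finish
}

// Doubles every element. Each index is written by exactly one call, so no locking is needed.
std::vector<int> doubled(const std::vector<int> &in, bool parallelize) {
  assert(!in.empty());
  std::vector<int> out(in.size());
  for_i_sketch(parallelize, in.size(),
               [&](std::size_t i) { out[i] = 2 * in[i]; });
  return out;
}
```

Calling `doubled({1, 2, 3}, false)` takes the plain loop regardless of the macro. The threaded path may need thread support enabled at link time (for example `-pthread` on some toolchains).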

With parallelism turned off, rebuild and debug again, and the full call stack below appears.

#Full call stack text

tiny_dnn::kernels::avx_conv2d_5x5_kernel<tiny_dnn::aligned_allocator<float,64> >(const tiny_dnn::core::conv_params &,const std::vector<float,tiny_dnn::aligned_allocator<float,64> > &,const std::vector<float,tiny_dnn::aligned_allocator<float,64> > &,const std::vector<float,tiny_dnn::aligned_allocator<float,64> > &,std::vector<float,tiny_dnn::aligned_allocator<float,64> > &,const bool) conv2d_op_avx.h:178

<lambda_93cd752631eaddbf3fd5164277579d9c>::operator()(unsigned long long) conv2d_op_avx.h:461

tiny_dnn::for_i<unsigned __int64,<lambda_93cd752631eaddbf3fd5164277579d9c> >(bool,unsigned long long,<lambda_93cd752631eaddbf3fd5164277579d9c>,unsigned long long) parallel_for.h:187

tiny_dnn::kernels::conv2d_op_avx(const std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > &,const std::vector<float,tiny_dnn::aligned_allocator<float,64> > &,const std::vector<float,tiny_dnn::aligned_allocator<float,64> > &,std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > &,const tiny_dnn::core::conv_params &,const bool) conv2d_op_avx.h:460

tiny_dnn::Conv2dOp::compute(tiny_dnn::core::OpKernelContext &) conv2d_op.h:46

tiny_dnn::convolutional_layer::forward_propagation(const std::vector<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > *,std::allocator<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > *> > &,std::vector<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > *,std::allocator<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > *> > &) convolutional_layer.h:298

tiny_dnn::layer::forward() layer.h:554

tiny_dnn::sequential::forward(const std::vector<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > >,std::allocator<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > > > &) nodes.h:296

tiny_dnn::network<tiny_dnn::sequential>::fprop(const std::vector<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > >,std::allocator<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > > > &) network.h:203

tiny_dnn::network<tiny_dnn::sequential>::train_onebatch<tiny_dnn::mse,tiny_dnn::adagrad>(tiny_dnn::adagrad &,const std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > *,const std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > *,int,const int,const std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > *) network.h:924

tiny_dnn::network<tiny_dnn::sequential>::train_once<tiny_dnn::mse,tiny_dnn::adagrad>(tiny_dnn::adagrad &,const std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > *,const std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > *,int,const int,const std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > *) network.h:897

tiny_dnn::network<tiny_dnn::sequential>::fit<tiny_dnn::mse,tiny_dnn::adagrad,<lambda_2167b27d0bf24d6ba6fbafdc977e3835>,<lambda_dfd0aadcd2585f275bac64861e8acb9b> >(tiny_dnn::adagrad &,const std::vector<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > >,std::allocator<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > > > &,const std::vector<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > >,std::allocator<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > > > &,unsigned long long,int,<lambda_2167b27d0bf24d6ba6fbafdc977e3835>,<lambda_dfd0aadcd2585f275bac64861e8acb9b>,const bool,const int,const std::vector<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > >,std::allocator<std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > > > &) network.h:863

tiny_dnn::network<tiny_dnn::sequential>::train<tiny_dnn::mse,tiny_dnn::adagrad,<lambda_2167b27d0bf24d6ba6fbafdc977e3835>,<lambda_dfd0aadcd2585f275bac64861e8acb9b> >(tiny_dnn::adagrad &,const std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > &,const std::vector<unsigned __int64,std::allocator<unsigned __int64> > &,unsigned long long,int,<lambda_2167b27d0bf24d6ba6fbafdc977e3835>,<lambda_dfd0aadcd2585f275bac64861e8acb9b>,const bool,const int,const std::vector<std::vector<float,tiny_dnn::aligned_allocator<float,64> >,std::allocator<std::vector<float,tiny_dnn::aligned_allocator<float,64> > > > &) network.h:305

train_lenet(const std::basic_string<char,std::char_traits<char>,std::allocator<char> > &,double,const int,const int,backend_t) train.cpp:115

main(int,char **) train.cpp:212

invoke_main() 0x00007ff6939e9629

__scrt_common_main_seh() 0x00007ff6939e950e

__scrt_common_main() 0x00007ff6939e93ce

mainCRTStartup(void *) 0x00007ff6939e96be

BaseThreadInitThunk 0x00007ff903867034

RtlUserThreadStart 0x00007ff9043e2651

The layering is clear, from the outermost call to the innermost:

- train

  - train_lenet

  - network<sequential>.train

  - network<sequential>.fit

  - network<sequential>.train_once

  - network<sequential>.train_onebatch

- forward

  - network<sequential>.fprop: short for forward_propagation

  - sequential.forward

  - layer.forward

  - convolutional_layer.forward_propagation

  - Conv2dOp.compute

- kernel

  - kernels.conv2d_op_avx

  - for_i

  - operator()

  - kernels.avx_conv2d_5x5_kernel
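The forward part of this chain can be imitated with a small mock that records the order in which each level is entered. Every type here (`network_sketch`, `sequential_sketch`, `layer_sketch`, `conv_layer_sketch`, `conv_op_sketch`) is a hypothetical stand-in invented for illustration. Only the shape of the delegation follows the call stack above; none of it is tiny-dnn's real code.

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <vector>

// All types below are hypothetical stand-ins, not tiny-dnn's real classes.
using trace_t = std::vector<std::string>;

// Kernel level: the function that would do the arithmetic.
inline void conv_kernel_sketch(trace_t &trace) { trace.push_back("kernel"); }

// Op level: compute() is the entry point that hands off to a kernel.
struct conv_op_sketch {
  void compute(trace_t &trace) const {
    trace.push_back("op.compute");
    conv_kernel_sketch(trace);
  }
};

// Layer level: the base class exposes forward(); subclasses implement
// forward_propagation().
struct layer_sketch {
  virtual ~layer_sketch() = default;
  virtual void forward_propagation(trace_t &trace) = 0;
  void forward(trace_t &trace) {
    trace.push_back("layer.forward");
    forward_propagation(trace);
  }
};

struct conv_layer_sketch : layer_sketch {
  conv_op_sketch op;
  void forward_propagation(trace_t &trace) override {
    trace.push_back("conv_layer.forward_propagation");
    op.compute(trace);
  }
};

// Container level: a sequence of layers visited in order.
struct sequential_sketch {
  std::vector<std::unique_ptr<layer_sketch>> layers;
  void forward(trace_t &trace) {
    trace.push_back("sequential.forward");
    for (auto &l : layers) l->forward(trace);
  }
};

// Network level: fprop() delegates to the container.
struct network_sketch {
  sequential_sketch net;
  void fprop(trace_t &trace) {
    trace.push_back("network.fprop");
    net.forward(trace);
  }
};

// Builds a one-layer network and returns the order in which the levels ran.
trace_t run_forward_trace() {
  network_sketch nn;
  nn.net.layers.push_back(
      std::unique_ptr<layer_sketch>(new conv_layer_sketch()));
  trace_t trace;
  nn.fprop(trace);
  assert(!trace.empty());
  return trace;
}
```

Read top to bottom, the returned trace is the same sequence that the debugger shows bottom to top: network, container, layer, op, kernel.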

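As this shows, a kernel is the part that does the real work: it carries out the concrete computation. There should therefore be many different kernels.

A component that carries out one specific computational task is called a kernel.

tiny-dnn has the following kernels:

Note that kernel means the same thing in PyTorch.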
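As an illustration of what "doing the real work" looks like, here is a deliberately naive convolution kernel. It is a sketch under simplifying assumptions (one channel, one filter, stride 1, no padding, no SIMD), and `conv2d_valid_sketch` is a hypothetical name. An optimized kernel such as the AVX one in the call stack above would compute the same kind of result with vector instructions.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical kernel: "valid" 2D cross-correlation of one in_h x in_w image
// with one k x k filter. Both buffers are row-major flat vectors.
std::vector<float> conv2d_valid_sketch(const std::vector<float> &in,
                                       std::size_t in_h, std::size_t in_w,
                                       const std::vector<float> &w,
                                       std::size_t k) {
  assert(in.size() == in_h * in_w);
  assert(w.size() == k * k);
  assert(k >= 1 && k <= in_h && k <= in_w);

  const std::size_t out_h = in_h - k + 1;
  const std::size_t out_w = in_w - k + 1;
  std::vector<float> out(out_h * out_w, 0.0f);

  for (std::size_t y = 0; y < out_h; ++y) {
    for (std::size_t x = 0; x < out_w; ++x) {
      float sum = 0.0f;
      // Slide the filter window over the input patch anchored at (y, x).
      for (std::size_t wy = 0; wy < k; ++wy) {
        for (std::size_t wx = 0; wx < k; ++wx) {
          sum += in[(y + wy) * in_w + (x + wx)] * w[wy * k + wx];
        }
      }
      out[y * out_w + x] = sum;
    }
  }
  return out;
}
```

For a 3x3 input holding 1 through 9 and a 2x2 filter of ones, each output element is the sum of one 2x2 window, giving 12, 16, 24, 28.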

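nodes: a graph or a sequence

nodes: a collection of nodes, that is, the top-level marker class that represents the network structure. A network structure is either a graph or a sequence.

compute: the general name for the forward-computation entry point, the last step before control passes to a kernel.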
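One way to picture `compute` as the hand-off point is an op that picks a kernel according to the selected backend. The sketch below is an assumption about the general pattern, not a copy of tiny-dnn's `Conv2dOp`: the enum `backend_sketch`, the context struct, and both kernel functions are hypothetical, and the kernels return their own name instead of doing any math.

```cpp
#include <cassert>
#include <stdexcept>
#include <string>

// Hypothetical backend selector; the names are illustrative only.
enum class backend_sketch { internal, avx };

// Hypothetical kernels: each returns its name so the choice is observable.
inline std::string kernel_internal_sketch() { return "conv2d_internal"; }
inline std::string kernel_avx_sketch() { return "conv2d_avx"; }

// Hypothetical context object carrying what compute() needs to decide.
struct op_context_sketch {
  backend_sketch engine;
};

// compute() does no arithmetic itself; it only routes to a kernel.
struct conv2d_op_sketch {
  std::string compute(const op_context_sketch &ctx) const {
    switch (ctx.engine) {
      case backend_sketch::internal:
        return kernel_internal_sketch();
      case backend_sketch::avx:
        return kernel_avx_sketch();
    }
    throw std::invalid_argument("unsupported backend");
  }
};

// Convenience wrapper: which kernel would run for a given backend?
std::string selected_kernel(backend_sketch engine) {
  const std::string name = conv2d_op_sketch{}.compute(op_context_sketch{engine});
  assert(!name.empty());
  return name;
}
```

Keeping the choice inside `compute` means the layer above never needs to know which kernel variants exist.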
