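Following the general trend, I have started moving over to OpenGL. Compared with Direct3D, OpenGL turns out to be crippled in all sorts of ways: you even have to write your own matrix math package, never mind DDS loading or skin animation. It doesn't even provide tangents and binormals. Oh my god!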

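On the plus side, this finally made me understand where tangent space comes from. The tangent and binormal can be computed as described in this article, excerpted below. I later found that the shaders I had written before were wrong in all sorts of ways, so how do you tell whether a bump map is implemented correctly? First, T cross B computed with the formulas below should, once normalized, equal the vertex normal (amazingly, the formulas never involve the normal, yet the two really do match; I don't know what the math behind it is). Second, don't rush into fetching normals from the bump texture. Compare the result of the ordinary method with the result of transforming the light and view vectors into tangent space and using (0, 0, 1) as the local normal, and check that the two agree. One more thing to watch out for is setting the attributes with the following calls: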

gl.glBindAttribLocation(glsl.getProgram(), 12, "tangent");

gl.glBindAttribLocation(glsl.getProgram(), 13, "binormal");

gl.glVertexAttrib3f(12, tangent.getX(), tangent.getY(), tangent.getZ());

gl.glVertexAttrib3f(13, binormal.getX(), binormal.getY(), binormal.getZ());

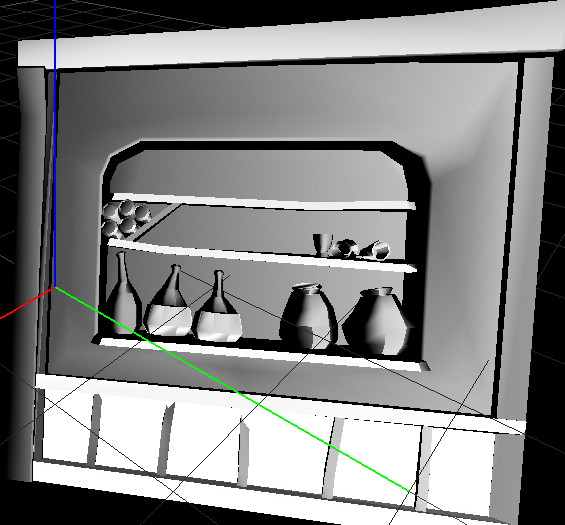

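When setting the attributes this way, linkProgram on glsl has to be called after the attributes are bound, otherwise the values never reach the shader. The result is shown in the image; the specular highlight still has a few small flaws, but it is basically OK.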

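After that, I found the math behind it in this article, which explains it fairly thoroughly. An excerpt is at the end.

For converting a height map into a bump map, see further below.

I'm not sure why the ordinary rendering method gives an anisotropic look. Maybe some effect was baked into the diffuse map, or there is something special about the normals. Pretty magical either way.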

The image above uses ( pow( fRDotV, 1) ). With ( pow( fRDotV, 50) ) the highlight is almost invisible, and you can only faintly make it out against the base texture.
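To see why the exponent makes such a difference, here is a minimal Java sketch of the specular term. The class and method names are made up for illustration, and the clamp to zero is an assumption about how fRDotV is used in the shader:

```java
// Hypothetical helper mirroring pow(fRDotV, n) from the shader.
class SpecularFalloff {
    /** Phong-style specular term: pow(max(R.V, 0), shininess). */
    static double specular(double rDotV, double shininess) {
        // Clamp so that reflections facing away from the viewer contribute nothing.
        return Math.pow(Math.max(rDotV, 0.0), shininess);
    }

    public static void main(String[] args) {
        // Same R.V value, two different exponents.
        System.out.println(specular(0.9, 1.0));
        System.out.println(specular(0.9, 50.0));
    }
}
```

With R.V = 0.9, an exponent of 1 keeps the term at 0.9, while an exponent of 50 drops it to roughly 0.005, so only pixels where R and V are almost perfectly aligned stay visibly lit.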

So the specular highlight from the ordinary method can actually look this good. (Apparently I had been doing it wrong all along -____-)

Tangent Space definition


Coordinate system definition

Tangent space is composed of 3 vectors (T, B, N), called the tangent, binormal and normal respectively. The tangent and binormal are vectors in the plane of the surface, while the normal vector is perpendicular to that plane, so it is also perpendicular to the tangent and binormal vectors.

In texture space, the (T, B) vectors correspond to the (u, v) vectors. This means T is the vector (1, 0) in texture space and B is the vector (0, 1). These are the coordinates of T and B in texture space.

You can see a representation of the (T, B, N) coordinate system in both texture and world space. The picture is from Matyas Premecz's paper [1].

The tangent space transformation converts a point expressed in world space, i.e. (x, y, z), to a vector in texture space (u, v, w).

Tangent space is really useful for advanced rendering techniques that store per-pixel information (normal, height, color ...) in a texture. Tangent space allows working directly in the texture coordinate system.


Equation of T and B

Suppose a point pi in the world coordinate system has texture coordinates (ui, vi). The relation between the texture coordinates (ui, vi) and the world-space position pi is given by the equation below.

Note: This point can also be considered as the vector starting from the origin to pi.

pi = ui.T + vi.B

Texture/World space relation

Writing this equation for the points p1, p2 and p3 gives:

p1 = u1.T + v1.B

p2 = u2.T + v2.B

p3 = u3.T + v3.B

Subtracting the first equation from the other two, we can write:

p2 - p1 = (u2 - u1).T + (v2 - v1).B

p3 - p1 = (u3 - u1).T + (v3 - v1).B

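Solving this system: scale the two equations as shown and add each pair. The first pair eliminates B, the second pair eliminates T.

$$
\begin{aligned}
(v_3 - v_1)(p_2 - p_1) &= (v_3 - v_1)(u_2 - u_1)\,T + (v_3 - v_1)(v_2 - v_1)\,B \\
-(v_2 - v_1)(p_3 - p_1) &= -(v_2 - v_1)(u_3 - u_1)\,T - (v_2 - v_1)(v_3 - v_1)\,B
\end{aligned}
$$

$$
\begin{aligned}
(u_3 - u_1)(p_2 - p_1) &= (u_3 - u_1)(u_2 - u_1)\,T + (u_3 - u_1)(v_2 - v_1)\,B \\
-(u_2 - u_1)(p_3 - p_1) &= -(u_2 - u_1)(u_3 - u_1)\,T - (u_2 - u_1)(v_3 - v_1)\,B
\end{aligned}
$$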

And we finally have the formula of T and B :

$$
T = \frac{(v_3 - v_1)(p_2 - p_1) - (v_2 - v_1)(p_3 - p_1)}{(u_2 - u_1)(v_3 - v_1) - (v_2 - v_1)(u_3 - u_1)}
$$

$$
B = \frac{(u_3 - u_1)(p_2 - p_1) - (u_2 - u_1)(p_3 - p_1)}{(v_2 - v_1)(u_3 - u_1) - (u_2 - u_1)(v_3 - v_1)}
$$

Equation of tangent and binormal vectors
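The formulas above can be turned into code almost literally. The following is a minimal Java sketch, not the quoted article's implementation: the class name `TangentBasis`, the method names and the use of `double[]` for vectors are all assumptions made for illustration. It computes the unnormalized T and B for one triangle; since both are linear combinations of the two triangle edges, they lie in the triangle's plane, which is why cross(T, B) ends up parallel to the face normal (its sign depends on how the texture coordinates are oriented).

```java
// Hypothetical helper: per-triangle tangent (T) and binormal (B).
class TangentBasis {
    static double[] sub(double[] a, double[] b) {
        return new double[] { a[0] - b[0], a[1] - b[1], a[2] - b[2] };
    }

    static double[] cross(double[] a, double[] b) {
        return new double[] {
            a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]
        };
    }

    /** Returns {T, B} (not normalized) for positions p1..p3 and texture coordinates uv1..uv3. */
    static double[][] compute(double[] p1, double[] p2, double[] p3,
                              double[] uv1, double[] uv2, double[] uv3) {
        double[] e1 = sub(p2, p1);                 // p2 - p1
        double[] e2 = sub(p3, p1);                 // p3 - p1
        double du1 = uv2[0] - uv1[0], dv1 = uv2[1] - uv1[1];
        double du2 = uv3[0] - uv1[0], dv2 = uv3[1] - uv1[1];
        // Denominator of T; the denominator of B is its negation.
        double det = du1 * dv2 - dv1 * du2;
        if (det == 0.0) {
            throw new IllegalArgumentException("degenerate texture coordinates");
        }
        double[] t = new double[3];
        double[] b = new double[3];
        for (int i = 0; i < 3; i++) {
            t[i] = (dv2 * e1[i] - dv1 * e2[i]) / det;
            b[i] = (du2 * e1[i] - du1 * e2[i]) / -det;
        }
        return new double[][] { t, b };
    }

    public static void main(String[] args) {
        double[][] tb = compute(
            new double[] {0, 0, 0}, new double[] {2, 0, 0}, new double[] {0, 3, 0},
            new double[] {0, 0},    new double[] {1, 0},    new double[] {0, 1});
        double[] n = cross(tb[0], tb[1]);
        // For this triangle in the XY plane, n points along the Z axis.
        System.out.println(n[0] + " " + n[1] + " " + n[2]);
    }
}
```

For use in a shader, T and B would normally be normalized afterwards, and for smooth meshes they are usually averaged over the triangles sharing a vertex; both steps are left out of this sketch.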


Equation of N

(T, B, N) is a basis for the coordinate system. N can be obtained as the cross product of T and B:

N = cross(T, B)

Equation of normal vector


Equation of T, B, N (Summary)

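$$
T = \frac{(v_3 - v_1)(p_2 - p_1) - (v_2 - v_1)(p_3 - p_1)}{(u_2 - u_1)(v_3 - v_1) - (v_2 - v_1)(u_3 - u_1)}
$$

Tangent

$$
B = \frac{(u_3 - u_1)(p_2 - p_1) - (u_2 - u_1)(p_3 - p_1)}{(v_2 - v_1)(u_3 - u_1) - (u_2 - u_1)(v_3 - v_1)}
$$

Binormal

$$
N = T \times B
$$

Normal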

How Bump Mapping Works

We define two tangent vectors to the surface at (x, y) as follows (heightmap[x, y] represents the height at (x, y)):

t = (1, 0, heightmap[x+1, y] - heightmap[x-1, y])

β = (0, 1, heightmap[x, y+1] - heightmap[x, y-1])

The normal vector η at (x, y) is the normalized cross product of t and β. If (x, y) is a point in an area of the height map where the neighborhood is all the same color (all black or all white, i.e. all "low" or all "high"), then t is (1,0,0) and β is (0,1,0). This makes sense: if the neighborhood is "flat", then the tangent vectors are the X and Y axes and η points straight "up" along the Z axis (0,0,1). If (x, y) is a point in an area of the height map at the edge between a black and a white region, then the Z component of t and β will be non-zero, so η will be perturbed away from the Z axis.
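The construction of η can be sketched in Java as follows. This is only an illustration under some assumptions that the text does not state: the height map is stored as `height[y][x]`, coordinates outside the map are clamped to the border, and the names `HeightMapNormal`, `h` and `normalAt` are made up.

```java
// Hypothetical helper: normal from a height map using the two tangent vectors t and beta.
class HeightMapNormal {
    /** Reads height[y][x], clamping coordinates at the border (an assumption). */
    static double h(double[][] height, int x, int y) {
        int cy = Math.max(0, Math.min(height.length - 1, y));
        int cx = Math.max(0, Math.min(height[0].length - 1, x));
        return height[cy][cx];
    }

    /** Normalized cross product of t = (1, 0, dx) and beta = (0, 1, dy). */
    static double[] normalAt(double[][] height, int x, int y) {
        double dx = h(height, x + 1, y) - h(height, x - 1, y); // z component of t
        double dy = h(height, x, y + 1) - h(height, x, y - 1); // z component of beta
        // cross((1, 0, dx), (0, 1, dy)) = (-dx, -dy, 1)
        double len = Math.sqrt(dx * dx + dy * dy + 1.0);
        return new double[] { -dx / len, -dy / len, 1.0 / len };
    }

    public static void main(String[] args) {
        double[][] ramp = { {0, 1, 2}, {0, 1, 2}, {0, 1, 2} };
        double[] n = normalAt(ramp, 1, 1);
        // The height rises along +x, so the normal tilts towards -x.
        System.out.println(n[0] + " " + n[1] + " " + n[2]);
    }
}
```

On a flat region both differences are zero and the result is (0, 0, 1), which matches the description above.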

Note that η has been computed relative to the surface that we wish to bump map. If the surface would be rotated or translated, η would not change. This implies that we cannot immediately use η in the lighting equation: the light vector is not defined relative to the surface but relative to all surfaces in our scene.

Texture Space and Object Space

For every surface (triangle) in our scene there are two frames of reference. One frame of reference, called texture space (blue in the figure below), is used for defining the triangle texture coordinates, the η vector, etc. Another frame of reference, called object space (red in the figure below), is used for defining all other triangles in our scene, the light vector, the eye position, etc.:

In order to compute the lighting equation, we wish to use the light vector (object space) and the η vector (texture space). This suggests that we must transform the light vector from object space into texture space.

Let object space use the basis [(1,0,0), (0,1,0), (0,0,1)] and let texture space use the basis [T, B, N] where N is the cross product of the two basis vectors T and B and all basis vectors are normalized. We want to compute T and B in order to fully define texture space.
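Once T, B and N are known, moving a vector from object space into texture (tangent) space amounts to three dot products. The Java sketch below assumes T, B and N are unit length and mutually perpendicular; the class and method names are hypothetical. It also reproduces the sanity check mentioned at the top of this post: with the local normal fixed at (0, 0, 1), the diffuse term in tangent space is just the z component of the transformed light vector, and it should equal dot(N, L) computed in object space.

```java
// Hypothetical helper: change of basis from object space to tangent space.
class TangentSpace {
    static double dot(double[] a, double[] b) {
        return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
    }

    /** Expresses v in the basis (t, b, n); assumes an orthonormal basis. */
    static double[] toTangentSpace(double[] v, double[] t, double[] b, double[] n) {
        return new double[] { dot(v, t), dot(v, b), dot(v, n) };
    }

    public static void main(String[] args) {
        double[] t = {0, 1, 0}, b = {0, 0, 1}, n = {1, 0, 0};
        double[] light = {0.6, 0.0, 0.8};            // unit light vector in object space
        double[] local = toTangentSpace(light, t, b, n);
        double diffuseObject = dot(n, light);         // ordinary method
        double diffuseTangent = local[2];             // dot(local, (0, 0, 1))
        System.out.println(diffuseObject + " " + diffuseTangent);
    }
}
```

If T and B are not orthogonal, which can happen with skewed texture mappings, this dot-product shortcut is only an approximation and a proper matrix inverse would be needed.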

Consider the following triangle whose vertices (V1, V2, V3) are defined in object space and whose texture coordinates (C1, C2, C3) are defined in texture space [2]:

Let V4 be some point (in object space) that lies inside the triangle and let C4 be its corresponding texture coordinate (in texture space). The vector (C4 - C1), in texture space, can be decomposed along T and B: let the T component be $(C_4 - C_1)_T$ and let the B component be $(C_4 - C_1)_B$.

The vector (V4 - V1) is the sum of the T and B components of (C4 - C1):

$$V_4 - V_1 = (C_4 - C_1)_T \, T + (C_4 - C_1)_B \, B$$

$$V_2 - V_1 = (C_2 - C_1)_T \, T + (C_2 - C_1)_B \, B$$

$$V_3 - V_1 = (C_3 - C_1)_T \, T + (C_3 - C_1)_B \, B$$

where

$$t_1 = (C_2 - C_1)_T, \quad t_2 = (C_3 - C_1)_T$$

$$b_1 = (C_2 - C_1)_B, \quad b_2 = (C_3 - C_1)_B$$
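The excerpt breaks off at this point. As a sketch of the remaining step (a reconstruction from the definitions above, not text from the quoted article), substituting the shorthand into the equations for V2 and V3 gives a 2x2 linear system in T and B:

$$
\begin{aligned}
V_2 - V_1 &= t_1 T + b_1 B \\
V_3 - V_1 &= t_2 T + b_2 B
\end{aligned}
$$

Assuming $t_1 b_2 - b_1 t_2 \neq 0$, eliminating B and then T gives:

$$
T = \frac{b_2 (V_2 - V_1) - b_1 (V_3 - V_1)}{t_1 b_2 - b_1 t_2}, \qquad
B = \frac{t_1 (V_3 - V_1) - t_2 (V_2 - V_1)}{t_1 b_2 - b_1 t_2}
$$

With $t_1 = u_2 - u_1$, $b_1 = v_2 - v_1$, $t_2 = u_3 - u_1$ and $b_2 = v_3 - v_1$, these are the same expressions as in the first excerpt. T and B would then be normalized, since the text asks for normalized basis vectors.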

Converting a Height Map to a Bump Map

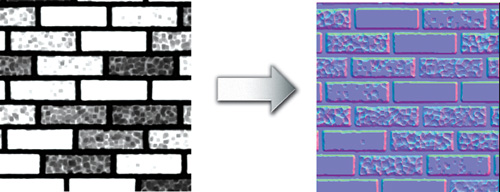

Authoring normal maps raises another issue. Painting direction vectors in a computer paint program is very unintuitive. However, most normal maps are derived from what is known as a height field. Rather than encoding vectors, a height field texture encodes the height, or elevation, of each texel. A height field stores a single unsigned component per texel rather than using three components to store a vector. Figure 8-1 shows an example of a brick wall height field. (See Plate 12 in the book's center insert for a color version of the normal map.) Darker regions of the height field are lower; lighter regions are higher. Solid white bricks are smooth. Bricks with uneven coloring are bumpy. The mortar is recessed, so it is the darkest region of the height field.

Figure 8-1 A Height Field Image for a Brick Bump Map

Converting a height field to a normal map is an automatic process, and it is typically done as a preprocessing step along with range compression. For each texel in the height field, you sample the height at the given texel, as well as the texels immediately to the right of and above the given texel. The normal vector is the normalized version of the cross product of two difference vectors. The first difference vector is (1, 0, Hr – Hg ), where Hg is the height of the given texel and Hr is the height of the texel directly to the right of the given texel. The second difference vector is (0, 1, Ha – Hg ), where Ha is the height of the texel directly above the given texel.

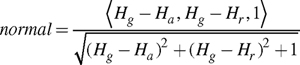

The cross product of these two vectors is a third vector pointing away from the height field surface. Normalizing this vector creates a normal suitable for bump mapping. The resulting normal is:

This normal is signed and must be range-compressed to be stored in an unsigned RGB texture. Other approaches exist for converting height fields to normal maps, but this approach is typically adequate.

The normal (0, 0, 1) is computed in regions of the height field that are flat. Think of the normal as a direction vector pointing up and away from the surface. In bumpy or uneven regions of the height field, an otherwise straight-up normal tilts appropriately.

As we've already mentioned, range-compressed normal maps are commonly stored in an unsigned RGB texture, where red, green, and blue correspond to x, y, and z. Due to the nature of the conversion process from height field to normal map, the z component is always positive and often either 1.0 or nearly 1.0. The z component is stored in the blue component conventionally, and range compression converts positive z values to the [0.5, 1] range. Thus, the predominant color of range-compressed normal maps stored in an RGB texture is blue. Figure 8-1 also shows the brick wall height field converted into a normal map. Because the coloration is important, you should refer to the color version of Figure 8-1 shown in Plate 12.
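The conversion and the range compression described in this excerpt can be sketched in Java as follows. The class and method names are hypothetical, and the 8-bit rounding scheme is an assumption; the excerpt only says that signed components are mapped into an unsigned range.

```java
// Hypothetical helper: forward-difference normal plus range compression to 8-bit RGB.
class NormalMapEncoding {
    /** hg = height of the given texel, hr = texel to its right, ha = texel above it. */
    static double[] normalFromHeights(double hg, double hr, double ha) {
        // cross((1, 0, hr - hg), (0, 1, ha - hg)) = (hg - hr, hg - ha, 1)
        double x = hg - hr, y = hg - ha, z = 1.0;
        double len = Math.sqrt(x * x + y * y + z * z);
        return new double[] { x / len, y / len, z / len };
    }

    /** Range-compresses a component from [-1, 1] to an 8-bit value in [0, 255]. */
    static int compress(double c) {
        return (int) Math.round((c * 0.5 + 0.5) * 255.0);
    }

    /** Inverse of compress, up to quantization error. */
    static double expand(int value) {
        return (value / 255.0) * 2.0 - 1.0;
    }

    static int[] toRgb(double[] n) {
        return new int[] { compress(n[0]), compress(n[1]), compress(n[2]) };
    }

    public static void main(String[] args) {
        int[] rgb = toRgb(normalFromHeights(0.5, 0.5, 0.5)); // flat region
        System.out.println(rgb[0] + " " + rgb[1] + " " + rgb[2]);
    }
}
```

A flat region gives the normal (0, 0, 1), which this scheme encodes as (128, 128, 255), the light blue that dominates typical normal maps. A shader reading the texture would apply the equivalent of `expand` before using the normal.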
