Pruning Filters and Channels - Neural Network Distiller: https://intellabs.github.io/distiller/tutorial-struct_pruning.html

Distiller tutorial: Pruning Filters & Channels (koberonaldo24's blog, CSDN; a Chinese translation of the Distiller tutorial): https://blog.csdn.net/koberonaldo24/article/details/102939434

Deep learning notes, basic concepts: channels, feature maps, filters and kernels in convolutional networks (惊鸿一博, CSDN): https://visionary.blog.csdn.net/article/details/108048264?utm_medium=distribute.pc_relevant.none-task-blog-2~default~CTRLIST~default-1.no_search_link&depth_1-utm_source=distribute.pc_relevant.none-task-blog-2~default~CTRLIST~default-1.no_search_link

Summary of model channel pruning methods (三寸光阴___, CSDN): https://blog.csdn.net/qq_38109843/article/details/107462887

Pruning a PyTorch ResNet50 classification model (三寸光阴___, CSDN): https://blog.csdn.net/qq_38109843/article/details/107671873

YOLOv3 model pruning (三寸光阴___, CSDN): https://blog.csdn.net/qq_38109843/article/details/107234801

I. Filter pruning

1. Filter pruning in a serial model

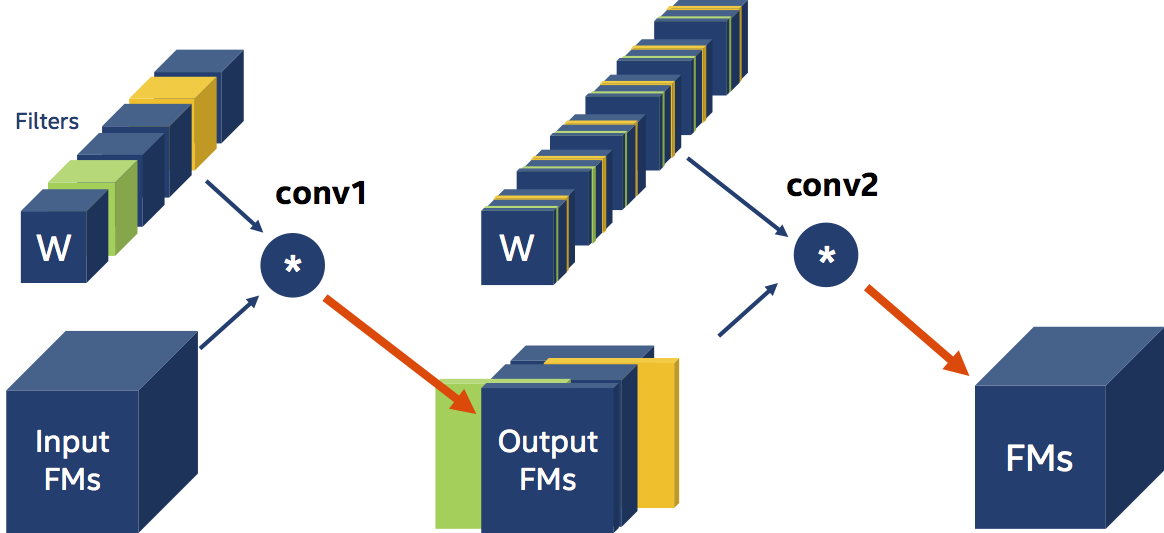

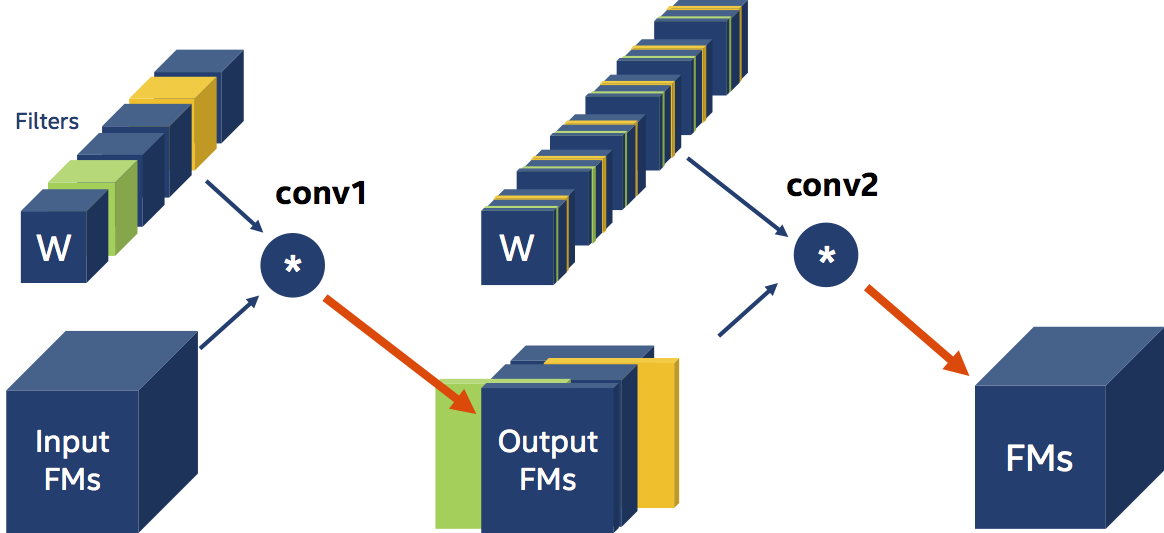

In filter pruning we use some criterion to determine which filters are important and which are not. Researchers have come up with all sorts of pruning criteria: the L1 magnitude of the filters (citation), the entropy of the activations (citation), and the reduction in classification accuracy (citation) are just some examples. Regardless of how we choose the filters to prune, let's imagine that in the diagram we chose to prune (remove) the green and orange filters (the circle with the "*" designates a convolution operation).
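As a minimal sketch of the first criterion, the Python below ranks the filters of one convolution by L1 magnitude and selects the weakest fraction for removal. The nested-list layout `weight[filter][channel][k]` (with `k` running over the flattened H×W kernel positions) and all helper names are hypothetical; this is an illustration, not Distiller's implementation, which operates on PyTorch tensors.

```python
# Hypothetical sketch: L1-magnitude filter ranking on a nested-list weight
# tensor with layout weight[filter][channel][k], k = flattened H*W position.


def filter_l1_norms(weight):
    """L1 norm of each filter: sum of |w| over its C*H*W elements."""
    return [sum(abs(w) for channel in flt for w in channel) for flt in weight]


def filters_to_prune(weight, fraction):
    """Indices of the int(fraction * F) filters with the smallest L1 norms."""
    norms = filter_l1_norms(weight)
    n_prune = int(fraction * len(weight))
    # Rank filter indices from weakest to strongest (stable for ties).
    ranked = sorted(range(len(weight)), key=lambda i: norms[i])
    return sorted(ranked[:n_prune])


def remove_filters(weight, prune_idx):
    """Drop the selected filters, i.e. the layer's output channels."""
    drop = set(prune_idx)
    return [flt for i, flt in enumerate(weight) if i not in drop]


# Toy layer: F=4 filters, C=2 channels, 2x2 kernels flattened to 4 values.
conv1 = [
    [[0.5, -0.5, 0.5, -0.5], [0.5, 0.5, 0.5, 0.5]],  # L1 = 4.0
    [[0.1, 0.0, -0.1, 0.0], [0.0, 0.1, 0.0, 0.0]],   # L1 = 0.3
    [[1.0, 1.0, -1.0, 1.0], [1.0, 0.0, 0.0, 1.0]],   # L1 = 6.0
    [[0.2, -0.2, 0.0, 0.0], [0.1, 0.0, 0.0, 0.1]],   # L1 = 0.6
]

pruned_idx = filters_to_prune(conv1, 0.5)
print(pruned_idx)                               # [1, 3]
print(len(remove_filters(conv1, pruned_idx)))   # 2
```

With a 50% target, the two filters with the smallest L1 norms (indices 1 and 3) are removed and the layer keeps 2 of its 4 output channels.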

```yaml
pruners:
  example_pruner:
    class: L1RankedStructureParameterPruner_AGP
    initial_sparsity: 0.10
    final_sparsity: 0.50
    group_type: Filters
    weights: [module.conv1.weight]
```

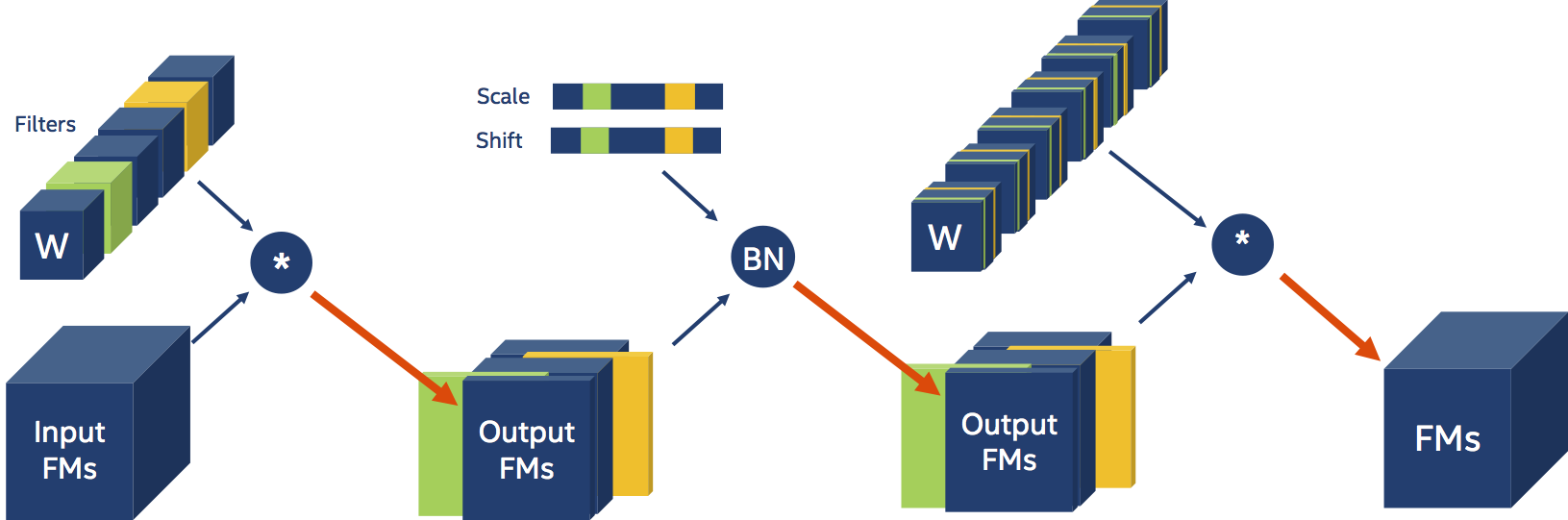
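The `_AGP` suffix refers to Automated Gradual Pruning (Zhu & Gupta, 2017): instead of jumping straight to the target, sparsity rises from `initial_sparsity` to `final_sparsity` along a cubic ramp, s = s_f + (s_i - s_f) * (1 - progress)^3, where progress runs from 0 to 1 between the start and end of pruning. The sketch below evaluates that ramp for the 0.10 to 0.50 schedule and converts it into a filter count. The start and end epochs, the update frequency, and the 64-filter layer are hypothetical, since the schedule fragment does not include the policy that sets them, and Distiller's own code may differ in details such as rounding.

```python
# Sketch of the cubic AGP sparsity ramp (Zhu & Gupta, 2017).
# starting_epoch, ending_epoch, frequency and the 64-filter layer are
# hypothetical values; the schedule fragment does not specify them.


def agp_sparsity(epoch, initial_sparsity, final_sparsity,
                 starting_epoch, ending_epoch, frequency=1):
    """Target sparsity at `epoch`, ramping cubically from initial to final."""
    if epoch <= starting_epoch:
        return initial_sparsity
    if epoch >= ending_epoch:
        return final_sparsity
    # Sparsity only changes every `frequency` epochs after the start.
    elapsed = ((epoch - starting_epoch) // frequency) * frequency
    progress = elapsed / (ending_epoch - starting_epoch)
    return final_sparsity + (initial_sparsity - final_sparsity) * (1.0 - progress) ** 3


def filters_remaining(num_filters, sparsity):
    """Filters left after removing int(sparsity * num_filters) of them."""
    return num_filters - int(sparsity * num_filters)


for epoch in range(0, 11, 2):
    s = agp_sparsity(epoch, 0.10, 0.50, starting_epoch=0, ending_epoch=10)
    print(f"epoch {epoch:2d}: sparsity {s:.4f}, filters kept {filters_remaining(64, s)}/64")
```

The cubic shape removes most filters early, while the network still has plenty of redundancy, and flattens out as it approaches the final sparsity.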

2. Automatic adjustment of BatchNorm layers

Distiller detects the presence of Batch Normalization layers and adjusts their parameters automatically.

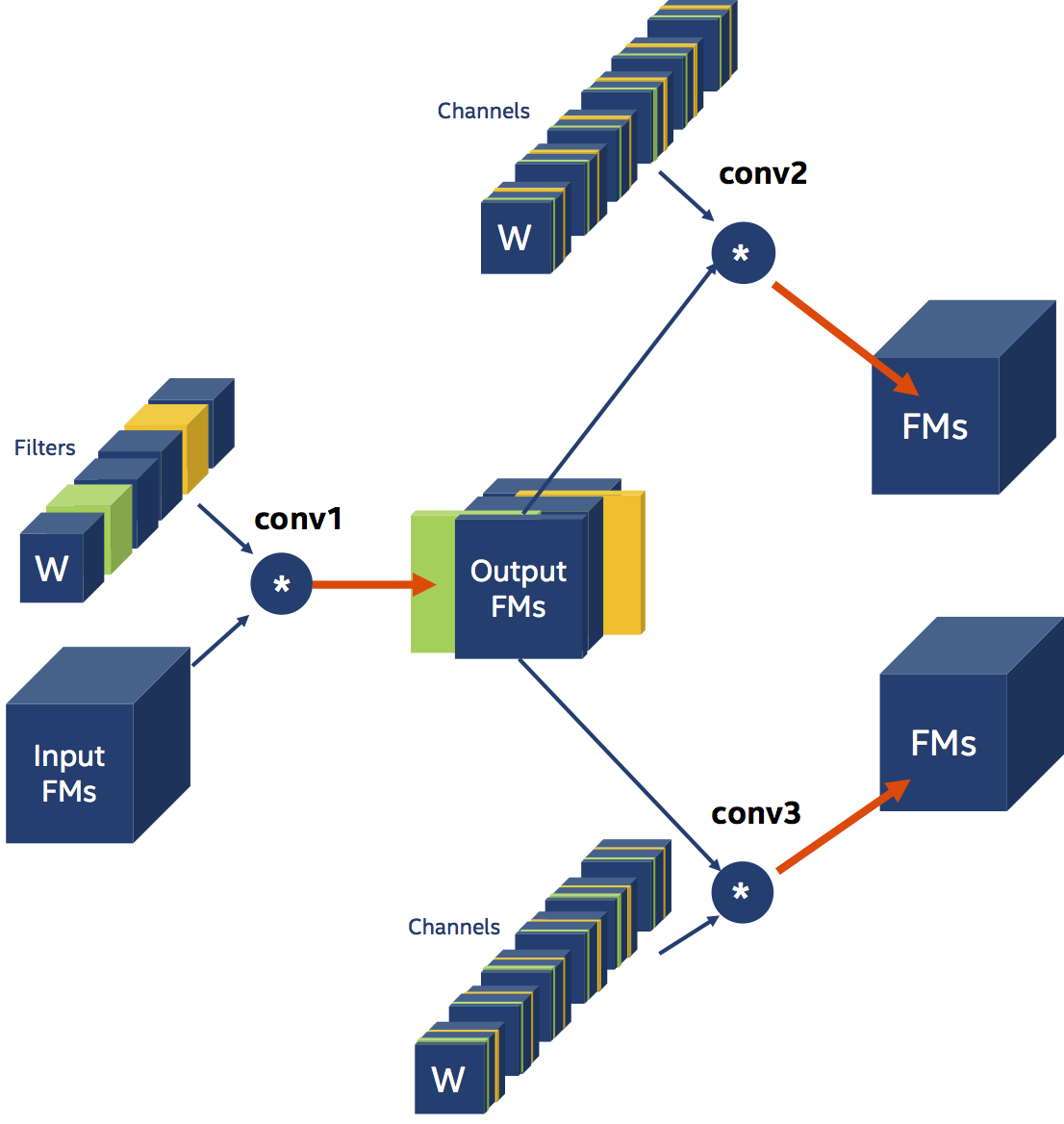
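A BatchNorm layer keeps one scale (gamma), shift (beta), running mean and running variance per channel of the convolution before it, so removing filters from that convolution means dropping the same positions from each of these four vectors. The sketch below shows only that bookkeeping; the field names follow PyTorch's `BatchNorm2d` attributes, but the dict layout and the `prune_batchnorm` helper are hypothetical and are not how Distiller performs the adjustment.

```python
# Hypothetical sketch: shrink a BatchNorm layer's per-channel parameters
# after filters were removed from the convolution that feeds it.

BN_FIELDS = ("weight", "bias", "running_mean", "running_var")


def prune_batchnorm(bn, removed_filters):
    """Return a copy of `bn` that keeps only the channels of surviving filters."""
    drop = set(removed_filters)
    keep = [c for c in range(len(bn["weight"])) if c not in drop]
    return {name: [bn[name][c] for c in keep] for name in BN_FIELDS}


bn1 = {
    "weight": [1.0, 0.9, 1.1, 0.8],        # gamma, one entry per channel
    "bias": [0.0, 0.1, -0.1, 0.2],         # beta
    "running_mean": [0.3, 0.0, 0.5, 0.1],
    "running_var": [1.0, 0.5, 2.0, 0.7],
}

# Filters 1 and 3 of the preceding conv were pruned, so BN channels 1 and 3 go.
print(prune_batchnorm(bn1, removed_filters=[1, 3]))
```

Because the original dict is left untouched, the same helper can be applied to a copy of a checkpoint without mutating it.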

3. Pruning parallel structures

3.1 Same schedule as in the serial case

```yaml
pruners:
  example_pruner:
    class: L1RankedStructureParameterPruner_AGP
    initial_sparsity: 0.10
    final_sparsity: 0.50
    group_type: Filters
    weights: [module.conv1.weight]
```

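3.2 Specifying the group dependency yourself

When the outputs of conv1 and conv2 are added element-wise, both layers must keep the same filters, otherwise the two outputs no longer have the same number of channels. Listing both weight tensors and setting `group_dependency: Leader` makes the first tensor in the list the leader: its filter ranking decides which filters are pruned from every tensor in the group.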

```yaml
pruners:
  example_pruner:
    class: L1RankedStructureParameterPruner_AGP
    initial_sparsity: 0.10
    final_sparsity: 0.50
    group_type: Filters
    group_dependency: Leader
    weights: [module.conv1.weight, module.conv2.weight]
```

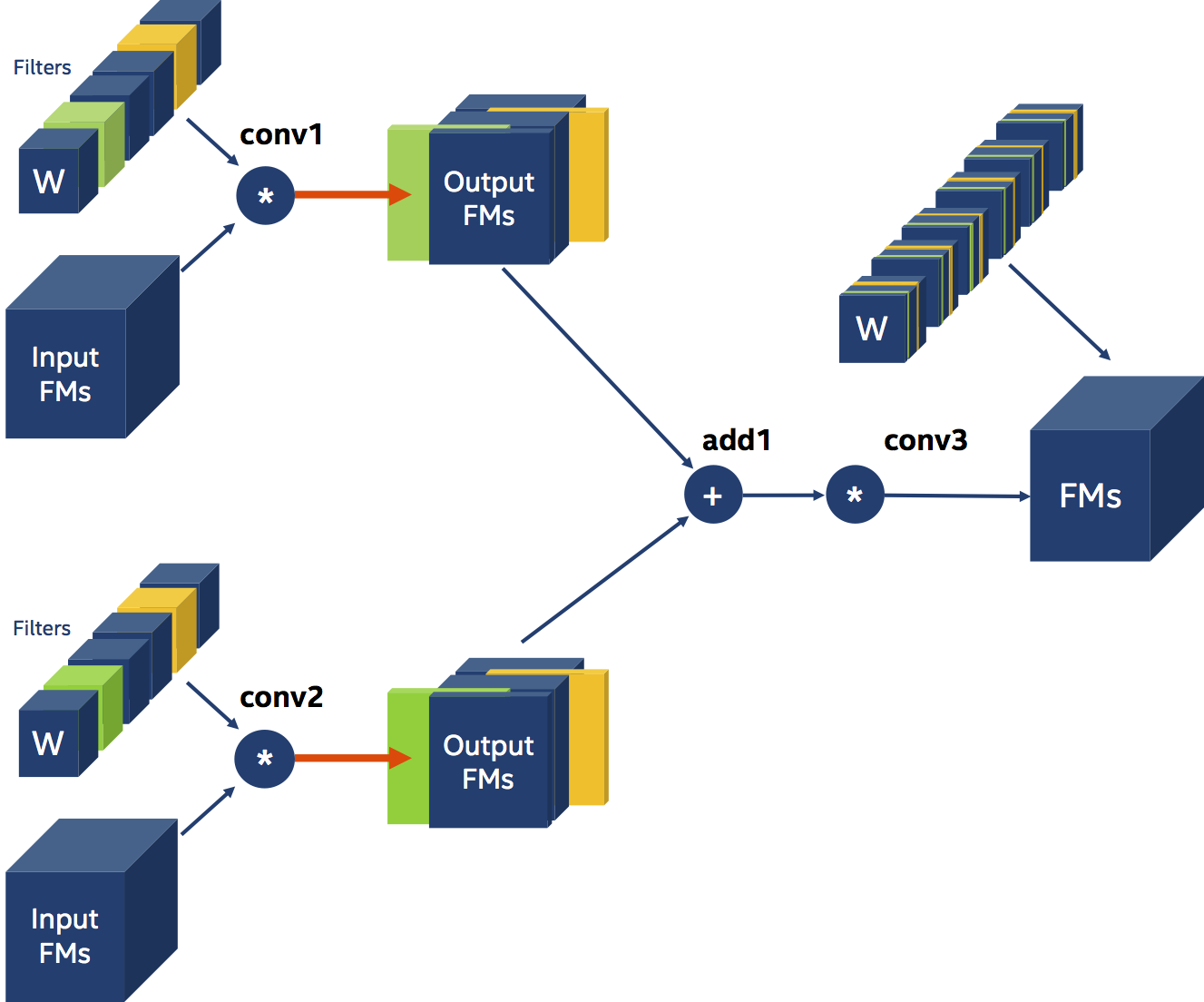
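The effect of a leader can be sketched in a few lines. Below, two convolutions whose outputs are summed are pruned together: filters are ranked by the L1 magnitude of the first (leader) tensor only, and the same filter indices are removed from both, so the two outputs still have matching channel counts. L1 ranking is assumed to mirror the `L1Ranked` pruner in the schedule; the nested-list layout and helper names are hypothetical, and this is not Distiller's code.

```python
# Hypothetical sketch of leader-based group pruning for a parallel structure
# (two convs whose outputs are summed). Layout: weight[filter][channel][k].


def l1_norms(weight):
    """L1 norm of each filter."""
    return [sum(abs(w) for ch in flt for w in ch) for flt in weight]


def leader_prune(group, fraction):
    """Remove the same filters from every tensor, ranked by group[0] only."""
    leader = group[0]
    norms = l1_norms(leader)
    n_prune = int(fraction * len(leader))
    weakest = sorted(range(len(leader)), key=lambda f: norms[f])[:n_prune]
    drop = set(weakest)
    pruned = [[flt for f, flt in enumerate(w) if f not in drop] for w in group]
    return pruned, sorted(drop)


# Two toy convs with 4 filters each, 1 input channel, 2 kernel values.
conv1 = [[[0.9, 0.9]], [[0.1, 0.0]], [[0.5, -0.5]], [[0.0, 0.2]]]  # leader
conv2 = [[[0.0, 0.1]], [[1.0, 1.0]], [[0.3, 0.3]], [[0.7, 0.0]]]

(new_conv1, new_conv2), dropped = leader_prune([conv1, conv2], 0.5)
print(dropped)                          # [1, 3]: weakest filters of conv1
print(len(new_conv1), len(new_conv2))   # 2 2: outputs can still be added
```

Note that conv2 loses its strongest filter (index 1) because the leader ranks that position low; giving up per-layer ranking is the price of keeping the element-wise addition shape-consistent.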

II. Channel pruning

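A convolution layer contains F filters, and each filter consists of C channels of height H and width W, so the layer's weight tensor has shape H×W×C×F (PyTorch stores it as F×C×H×W). In the figure, each block represents a filter and each slice of a block represents a channel. Pruning filters from conv1 reduces its number of output channels, and Distiller automatically removes the corresponding channels from conv2. Conversely, when channels of conv2 are pruned, Distiller automatically removes the corresponding filters from conv1.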
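The coupling between conv1's filters and conv2's channels can be sketched as follows. Channels of conv2 are ranked by their L1 magnitude summed over all of conv2's filters (an assumed criterion, chosen only for illustration), the weakest are removed from every filter of conv2, and conv1's filters with the same indices are removed because nothing consumes their outputs any more. Parameter counts are the product of the tensor's dimensions. The nested-list layout `weight[filter][channel][k]` and all helper names are hypothetical.

```python
# Hypothetical sketch: channel pruning of conv2 and the matching filter
# pruning of conv1. Layout: weight[filter][channel][k], k = flattened H*W.


def channel_l1_norms(weight):
    """L1 norm of each input channel, summed over all filters."""
    return [sum(abs(w) for flt in weight for w in flt[c])
            for c in range(len(weight[0]))]


def prune_channels(conv1, conv2, fraction):
    """Drop conv2's weakest channels and conv1's filters with the same indices."""
    norms = channel_l1_norms(conv2)
    n_prune = int(fraction * len(norms))
    drop = set(sorted(range(len(norms)), key=lambda c: norms[c])[:n_prune])
    new_conv2 = [[ch for c, ch in enumerate(flt) if c not in drop] for flt in conv2]
    new_conv1 = [flt for f, flt in enumerate(conv1) if f not in drop]
    return new_conv1, new_conv2, sorted(drop)


def num_params(weight):
    """F * C * (H*W) weights in the tensor."""
    return len(weight) * len(weight[0]) * len(weight[0][0])


# conv1: 3 filters x 1 channel x 2x2; conv2: 2 filters x 3 channels x 2x2.
conv1 = [[[1.0] * 4], [[0.5] * 4], [[0.2] * 4]]
conv2 = [
    [[1.0, -1.0, 1.0, -1.0], [0.01, 0.0, 0.0, 0.01], [0.5, 0.5, 0.5, 0.5]],
    [[0.5, 0.5, -0.5, 0.5], [0.0, 0.02, 0.0, 0.0], [1.0, 0.0, 0.0, 1.0]],
]

new_conv1, new_conv2, dropped = prune_channels(conv1, conv2, 0.5)
print(dropped)                                          # [1]
print(num_params(conv1), "->", num_params(new_conv1))   # 12 -> 8
print(num_params(conv2), "->", num_params(new_conv2))   # 24 -> 16
```

Removing a single near-zero channel of conv2 shrinks both layers at once, which is why filter and channel pruning of adjacent layers always come as a pair.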
