Open-source code:

https://github.com/DingXiaoH/RepLKNet-pytorch

Repository with the paper:

https://github.com/megvii-research/RepLKNet

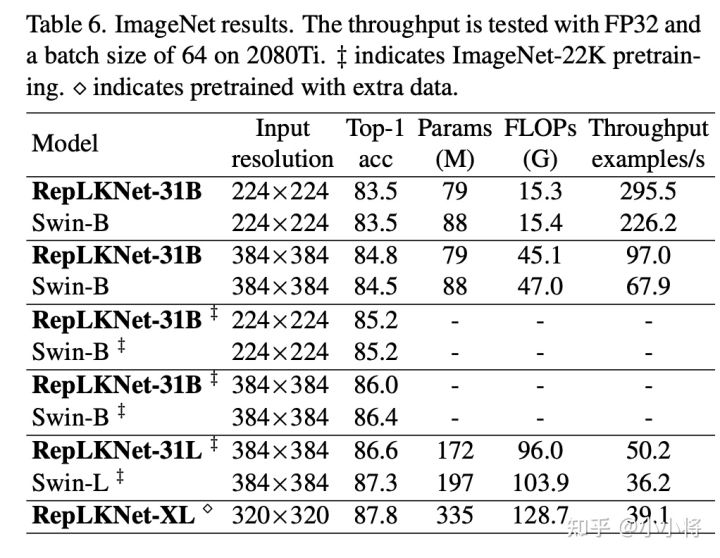

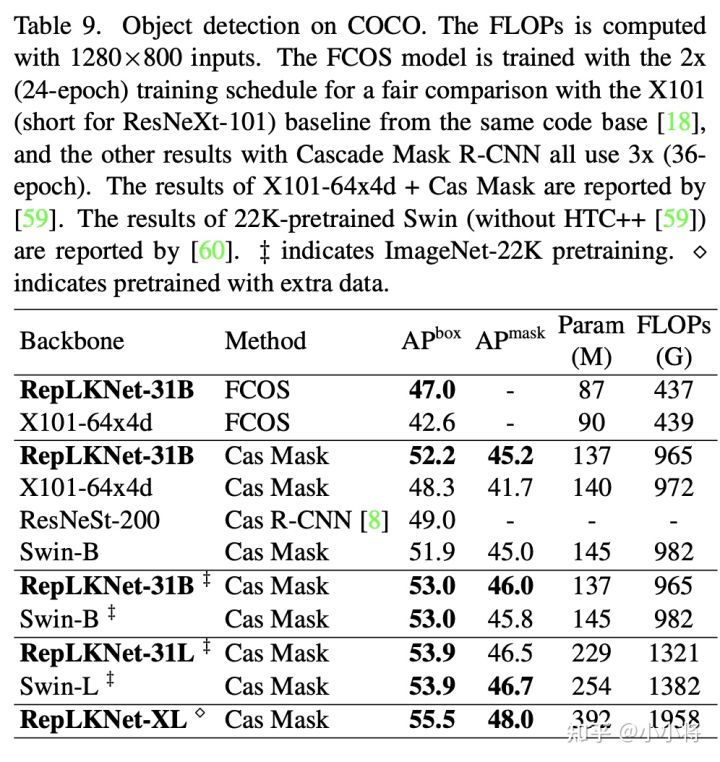

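New CVPR 2022 paper: the RepVGG authors propose RepLKNet, a pure CNN with kernels as large as 31×31. The paper lays out five design guidelines for large-kernel convolutions, and the resulting network matches or beats Swin Transformer on image classification and on downstream detection and segmentation.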

In this paper we revisit large kernel design in modern convolutional neural networks (CNNs), which is often neglected in the past few years. Inspired by recent advances of vision transformers (ViTs), we point out that using a few large kernels instead of a stack of small convolutions could be a more powerful paradigm. We therefore summarize 5 guidelines, e.g., applying re-parameterized large depth-wise convolutions, to design efficient high-performance large-kernel CNNs. Following the guidelines, we propose RepLKNet, a pure CNN architecture whose kernel size is as large as 31×31. RepLKNet greatly bridges the performance gap between CNNs and ViTs, e.g., achieving comparable or better results than Swin Transformer on ImageNet and downstream tasks, while the latency of RepLKNet is much lower. Moreover, RepLKNet also shows feasible scalability to big data and large models, obtaining 87.8% top-1 accuracy on ImageNet and 56.0% mIoU on ADE20K. At last, our study further suggests large-kernel CNNs share several nice properties with ViTs, e.g., much larger effective receptive fields than conventional CNNs, and higher shape bias rather than texture bias. Code & models at https://github.com/megvii-research/RepLKNet.
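The abstract mentions "re-parameterized large depth-wise convolutions" without spelling out the mechanics. Below is a minimal sketch of the kernel-merging idea, not the authors' implementation. It assumes a small-kernel branch runs in parallel with the large-kernel branch during training and that the two outputs are summed. It also leaves out batch normalization, which would have to be folded into each branch before merging. The sizes (7×7 standing in for 31×31, 3×3 for the small branch) and all function names are illustrative. Because a depth-wise convolution filters each channel independently, a single-channel example is enough to show the identity.

```python
import random


def pad_kernel(small, size):
    """Zero-pad a square k x k kernel to size x size, keeping it centered."""
    k = len(small)
    assert size >= k and (size - k) % 2 == 0
    off = (size - k) // 2
    out = [[0.0] * size for _ in range(size)]
    for i in range(k):
        for j in range(k):
            out[i + off][j + off] = small[i][j]
    return out


def merge_kernels(large, small):
    """Fold the small-kernel branch into the large kernel by element-wise addition."""
    padded = pad_kernel(small, len(large))
    return [[a + b for a, b in zip(row_l, row_p)]
            for row_l, row_p in zip(large, padded)]


def conv2d_same(image, kernel):
    """Single-channel cross-correlation with zero padding (output size = input size)."""
    h, w, k = len(image), len(image[0]), len(kernel)
    r = k // 2
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for i in range(k):
                for j in range(k):
                    yy, xx = y + i - r, x + j - r
                    if 0 <= yy < h and 0 <= xx < w:
                        acc += image[yy][xx] * kernel[i][j]
            out[y][x] = acc
    return out


def max_abs_diff(a, b):
    """Largest absolute element-wise difference between two 2-D lists."""
    return max(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb))


def random_matrix(rng, rows, cols):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
image = random_matrix(rng, 12, 12)
large = random_matrix(rng, 7, 7)  # assumption: stands in for the 31x31 kernel
small = random_matrix(rng, 3, 3)  # assumption: parallel small-kernel branch

# Training-time form: two parallel branches whose outputs are summed.
out_large = conv2d_same(image, large)
out_small = conv2d_same(image, small)
two_branch = [[a + b for a, b in zip(ra, rb)]
              for ra, rb in zip(out_large, out_small)]

# Inference-time form: a single convolution with the merged kernel.
merged = merge_kernels(large, small)
single_branch = conv2d_same(image, merged)

print(max_abs_diff(two_branch, single_branch))
```

The printed difference should be at the level of floating-point rounding. This works because convolution is linear in the kernel: zero-padding the small kernel to the large size and adding the two kernels gives the same result as running both branches, so the extra branch adds no cost at inference time.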
