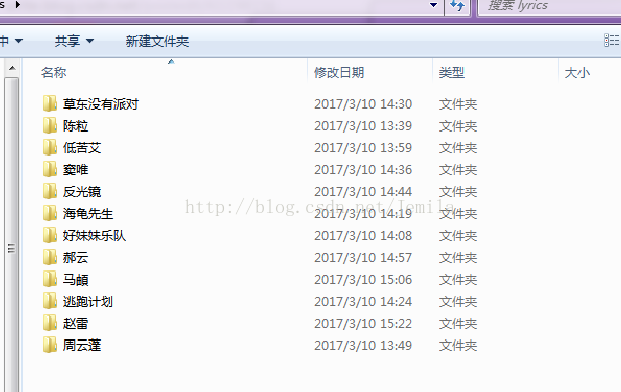

To start, this is what my raw data looks like. For details on the crawler, see http://blog.csdn.net/jemila/article/details/61196863

My data is available at http://pan.baidu.com/s/1hskNlEO (password: dxv5)

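Load the following modules (this is Python 2 code):

    import os
    import sys

    import jieba

    # Python 2 only: make UTF-8 the default string encoding
    reload(sys)
    sys.setdefaultencoding("utf-8")

    from langconv import *

The `from langconv import *` line loads the module used to convert between simplified and traditional Chinese.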

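Load the stop words:

    with open(r'C:/Users/user/Desktop/stopword.txt') as f:
        stopwords = f.readlines()
    # Strip the newline and decode each entry from GBK to unicode
    stopwords = [i.replace("\n", "").decode("gbk") for i in stopwords]

Define a word segmentation function: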

    def sent2word(sentence):
        """
        Segment a sentence into words
        and delete stop words.
        """
        segList = jieba.cut(sentence)
        segResult = []
        for w in segList:
            segResult.append(w)

        newSent = []
        for word in segResult:
            if word in stopwords:
                # print "stopword: %s" % word
                continue
            else:
                # Convert the word to simplified Chinese before keeping it
                newSent.append(Converter('zh-hans').convert(word.decode('utf-8')))
        return newSent

Define a function that creates a new folder:

    def mkdir(path):
        # Import the module
        import os
        # Strip leading and trailing whitespace
        path&#
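Returning to the stop-word handling in `sent2word`, the following Python 3 sketch reads a stop-word file into a set and filters an already segmented word list. It is not the author's code: `load_stopwords`, `remove_stopwords`, and the GBK default are assumptions made for illustration, and the segmentation itself (done by `jieba.cut` in the original) is left out so that the example depends only on the standard library.

```python
def load_stopwords(path, encoding="gbk"):
    """Read one stop word per line into a set (hypothetical helper).

    The GBK default mirrors the .decode("gbk") call in the original code;
    pass a different encoding if your file is stored otherwise.
    """
    with open(path, encoding=encoding) as f:
        # strip() drops the trailing newline; blank lines are skipped
        return {line.strip() for line in f if line.strip()}


def remove_stopwords(words, stopwords):
    """Return the words that are not stop words, preserving their order."""
    return [w for w in words if w not in stopwords]
```

A set makes each `in` check constant time on average, whereas a list of stop words is scanned linearly for every word that is tested.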

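This article walks through a lyrics-analysis workflow in Python: segmenting the files into words, stripping out non-lyric information, and applying TF-IDF to extract keywords. The author notes the limitations of TF-IDF for lyrics analysis, such as anomalous results caused by unusual word segmentation, and shares the concrete implementation. The final output is a concat folder containing every singer's segmented lyrics.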
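For readers unfamiliar with TF-IDF, here is a minimal Python 3 sketch of keyword scoring over already segmented documents, assuming each singer's lyrics form one document. It uses one common variant (term frequency normalized by document length, and idf = log(N / df)); the article's own implementation is not shown in this excerpt and may differ. The names `tf_idf` and `top_keywords` are hypothetical.

```python
import math
from collections import Counter


def tf_idf(docs):
    """Score every word of every document.

    docs: dict mapping a document name to its list of words.
    Returns a dict mapping each name to a {word: score} dict, using
    tf = count / document length and idf = log(N / document frequency).
    """
    n_docs = len(docs)
    # Document frequency: in how many documents each word appears
    df = Counter()
    for words in docs.values():
        df.update(set(words))

    scores = {}
    for name, words in docs.items():
        counts = Counter(words)
        total = len(words)
        scores[name] = {
            w: (c / total) * math.log(n_docs / df[w])
            for w, c in counts.items()
        }
    return scores


def top_keywords(word_scores, k=3):
    """Return the k highest-scoring words, best first."""
    ranked = sorted(word_scores.items(), key=lambda kv: kv[1], reverse=True)
    return [w for w, _ in ranked[:k]]
```

With this variant, a word that appears in every document scores zero, since log(N / N) = 0. A token that occurs in only one document scores high even if it is a segmentation artifact, which may be related to the anomalous results the summary mentions.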
