Log routing is fairly flexible. The flows tested so far are:

filebeat -> logstash

filebeat -> elastic

filebeat -> redis

logstash <-> redis

logstash -> elastic

logstash -> redis -> logstash -> elastic

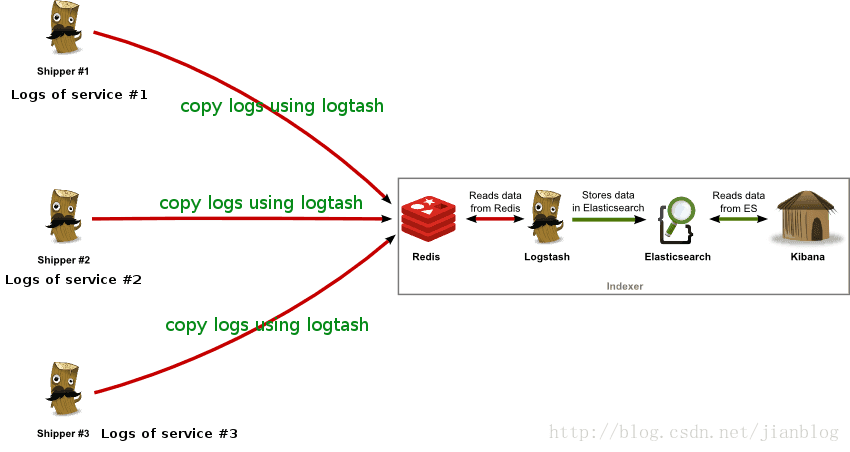

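The environment used early on spanned several networks, so redis sits in the middle as a relay.

Collector-side logstash configuration

The front end does no processing and pushes events straight into redis. Regex matching is done on the back end.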

    input {
      file {
        path => "/var/log/nginx/prod_access.log"
        start_position => "beginning"
        codec => plain {
          charset => "UTF-8"
        }
        type => "prod_nginx"
      }
    }

    output {
      redis {
        host => "ip_address"  # replace with the actual address
        port => 37000
        data_type => "list"
        key => "prod_nginx"
      }
    }

Back-end logstash reading from redis

    input {
      file {
        path => "/data/path/to/log/prod_access.log"
        start_position => beginning
        codec => plain {
          charset => "UTF-8"
        }
        type => "nginx"
      }
    }

    filter {
      grok {
        patterns_dir => "/usr/local/logstash-5.1.1/nginx_pattern"
        match => {
          "message" => ["%{NGINXACCESS1}", "%{NGINXACCESS2}"]
        }
        overwrite => ["message"]
      }
      date {
        match => [ "timestamp", "dd/MMM/YYYY:HH:mm:ss Z" ]
      }
    }

    output {
      elasticsearch {
        hosts => "192.168.100.41:9200"
        manage_template =>
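The input shown in this back-end configuration reads from a file. To consume the list that the collector writes to redis, the input block could be swapped for a redis input along the following lines. This is only a minimal sketch: the host placeholder, port, and key mirror the collector's redis output, and nothing else about the author's actual setup is assumed.

```conf
input {
  redis {
    host      => "ip_address"   # placeholder: the redis instance the collector writes to
    port      => 37000          # same port as the collector's redis output
    data_type => "list"         # list mode, matching the collector's data_type
    key       => "prod_nginx"   # must equal the key used by the collector
  }
}
```

Events read this way already carry the `type` set on the collector (`prod_nginx`), so any conditionals on the back end would need to test for that value.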

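This article covers two log-processing flows built from filebeat, logstash, and redis. In the first, logstash relays events through redis to elastic. In the second, filebeat writes directly to redis, which feeds logstash and finally elastic. The collector-side logstash only collects; regex matching is done by the back-end logstash. As a lightweight alternative, filebeat reads the nginx logs directly and stores them as JSON, which avoids regex parsing on the back end.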
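For the filebeat -> redis -> logstash -> elastic flow, the back-end pipeline might look roughly like the sketch below. It rests on several assumptions that are not confirmed by the text: that nginx writes each log line as a JSON object, that filebeat ships the raw line in the `message` field, and that filebeat publishes to a redis list under the hypothetical key `filebeat_nginx`. The host placeholder, port, and elasticsearch address are simply reused from the configurations earlier in the article.

```conf
input {
  redis {
    host      => "ip_address"       # placeholder for the redis host
    port      => 37000              # assumed: same port as in the logstash flow
    data_type => "list"
    key       => "filebeat_nginx"   # hypothetical key; must match filebeat's redis output
  }
}

filter {
  # Assumes "message" holds one JSON object per nginx log line.
  # Parsing it here replaces the grok patterns used in the logstash flow.
  json {
    source => "message"
  }
}

output {
  elasticsearch {
    hosts => "192.168.100.41:9200"  # address reused from the back-end config
  }
}
```

If filebeat is configured to decode the JSON itself, the fields arrive already expanded and the `json` filter would not be needed.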
