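Simple data augmentation in NLP includes insertion, deletion, and replacement.

Word-level replacement is already implemented elsewhere, for example: https://github.com/tedljw/data_augment/blob/master/eda.py

However, I could not find an implementation that works at the tensor level, after the text has been mapped to tokens. So I implemented token-level replacement and deletion myself, based on the BERT and LevOCR code.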

1. Replacement

Reference code: BERT. This one is straightforward.
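As a minimal standalone sketch of the idea (not the BERT code itself): sample a Bernoulli mask, clear it at special-token positions, and overwrite the masked positions with random ids. The function name `random_replace_tokens`, the `special_ids` argument, and the toy ids (12 for [cls], 0 for [pad]) are assumptions made for illustration.

```python
import torch

def random_replace_tokens(input_ids, vocab_size, special_ids, p=0.1):
    """Return a copy of input_ids in which each non-special token is
    replaced by a random id with probability p."""
    prob = torch.full(input_ids.shape, p, device=input_ids.device)
    replace_mask = torch.bernoulli(prob).bool()
    for sid in special_ids:  # never replace special tokens such as [pad] or [cls]
        replace_mask &= input_ids != sid
    random_ids = torch.randint(
        vocab_size, input_ids.shape, dtype=torch.long, device=input_ids.device
    )
    return torch.where(replace_mask, random_ids, input_ids)

torch.manual_seed(0)
# Hypothetical toy batch: 12 = [cls], 0 = [pad]
batch = torch.tensor([[12, 5, 7, 9, 0, 0],
                      [12, 3, 8, 0, 0, 0]])
augmented = random_replace_tokens(batch, vocab_size=30, special_ids=(0, 12), p=0.5)
print(augmented)
```

This sketch returns a new tensor instead of modifying `input_ids` in place. The random ids are drawn from the whole vocabulary, so a replacement may happen to equal the original token or a special id.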

import torch

# Randomly replace multiple tokens ("atoms").
# Written as a method: it reads the special-token ids from self.tokenizer.
def randomReplaceAtoms(self, input_ids, vocab_size, device, probability_matrix=0.1):
    """
    Args:
        input_ids: tensor of shape (batch_size, max_len)
        vocab_size: size of the vocabulary
        device: device that input_ids lives on
        probability_matrix: probability of each token being replaced
    """
    probability_matrix = torch.full(input_ids.shape, probability_matrix, device=device)
    masked_indices = torch.bernoulli(probability_matrix).bool()
    masked_indices[input_ids == self.tokenizer.pad_token_id] = False  # do not replace <pad>
    masked_indices[input_ids == self.tokenizer.cls_token_id] = False  # do not replace <cls>
    random_words = torch.randint(vocab_size, input_ids.shape, dtype=torch.long, device=device)
    input_ids[masked_indices] = random_words[masked_indices]  # modifies input_ids in place
    return input_ids

2. Deletion

Reference code: https://github.com/AlibabaResearch/AdvancedLiterateMachinery/tree/main/OCR/LevOCR

def randomDeleteAtoms(self, input_ids):
    """
    Args:
        input_ids: tensor of shape (batch_size, max_len)
    """
    pad = self.tokenizer.pad_token_id
    cls = self.tokenizer.cls_token_id
    max_len = input_ids.size(1)  # total (padded) sequence length
    target_mask = input_ids.eq(pad)
    target_score = input_ids.clone().float().uniform_()  # random score per token
    target_score.masked_fill_(input_ids.eq(cls), 0.0)  # <cls> gets score 0
    target_score.masked_fill_(target_mask, 1)  # <pad> gets score 1
    target_score, target_rank = target_score.sort(1)  # ascending: <cls> first, <pad> last
    target_length = target_mask.size(1) - target_mask.float().sum(1, keepdim=True)  # non-pad tokens per row
    # do not delete <cls> (we assign 0 score for them)
    target_cutoff = (  # number of tokens to keep per row
        1
        + (
            (target_length - 1)
            * target_score.new_zeros(target_score.size(0), 1).uniform_(0.7, 0.99)
        ).long()
    )
    target_cutoff = target_score.sort(1)[1] >= target_cutoff  # True for sorted slots to delete
    prev_target_tokens = (
        input_ids.gather(1, target_rank)
        .masked_fill_(target_cutoff, pad)
        .gather(1, target_rank.masked_fill_(target_cutoff, max_len).sort(1)[1])
    )
    return prev_target_tokens

The function relies on one trick:

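import torch

s = torch.tensor([[1, 3, 2, 4, 0, 0]])
tar_s = s.clone().float().uniform_()
tar_s, tar_i = tar_s.sort(1)

###########################
## operate on tar_i here ##
###########################

final_s = s.gather(1, tar_i).gather(1, tar_i.sort(1)[1])
s.equal(final_s)  # True

If tar_i is left untouched, the two gather calls undo each other and return the original tensor. If tar_i is modified in between, elements can be deleted: for example, fill the source values at the last few positions of tar_i with pad and push those positions to the end of the restoring sort. The remaining elements keep their original order.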
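To make the trick concrete, here is a small worked example with hand-picked scores instead of `uniform_()`, so the outcome is deterministic. It is a sketch under two assumptions: the scores and `keep = 3` are made up for illustration, and the delete mask is built with `torch.arange` rather than the `target_score.sort(1)[1] >= target_cutoff` expression used in the function above.

```python
import torch

pad = 0
s = torch.tensor([[1, 3, 2, 4, 0, 0]])                   # last two entries are padding
score = torch.tensor([[0.0, 0.3, 0.8, 0.2, 1.0, 1.0]])   # hand-picked; pads get score 1
max_len = s.size(1)

_, rank = score.sort(1)                 # original positions ordered by ascending score
sorted_tokens = s.gather(1, rank)       # tokens in score order: 1, 4, 3, 2, then pads

keep = 3                                # keep the 3 lowest-scoring tokens
drop = torch.arange(max_len).unsqueeze(0) >= keep   # True for sorted slots to delete

sorted_tokens = sorted_tokens.masked_fill(drop, pad)
# Kept slots are reordered by original position; dropped slots get key max_len and go last.
restore = rank.masked_fill(drop, max_len).sort(1)[1]
result = sorted_tokens.gather(1, restore)
print(result)  # tensor([[1, 3, 4, 0, 0, 0]])
```

Token 2 had the highest score among the real tokens, so it is the one removed. The survivors 1, 3, 4 appear in their original order and the row is padded back to the same length.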

效果:

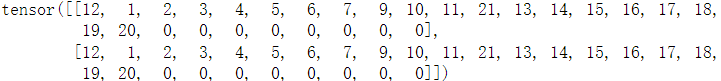

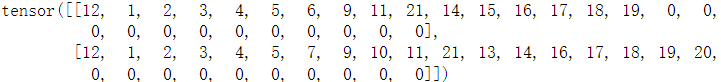

raw_tensor: 12为[cls],0为[pad]

final_tensor:(随机删除了tensor[0]中的7、10、13、20,tensor[1]中的6、15):
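How many tokens a row loses follows from the cutoff formula in randomDeleteAtoms: the number kept is 1 + floor((length - 1) * r), with r drawn from uniform_(0.7, 0.99). The sketch below tabulates the resulting range for a few lengths. `keep_range` is a hypothetical helper, and the bounds ignore float32 rounding at exact boundaries.

```python
import math

def keep_range(length, low=0.7, high=0.99):
    """Smallest and largest number of tokens kept for a row with
    `length` non-pad tokens (the count includes [cls])."""
    smallest = 1 + math.floor((length - 1) * low)
    largest = 1 + math.floor((length - 1) * high)
    return smallest, largest

for n in (2, 3, 5, 10, 20):
    smallest, largest = keep_range(n)
    print(f"length={n}: keep {smallest}..{largest}, delete {n - largest}..{n - smallest}")
```

Because r is always below 1, every row with at least two non-pad tokens loses at least one token. A row of length 2 always loses its only non-[cls] token, so very short sequences may need a different ratio range.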
