Implementing an LSTM

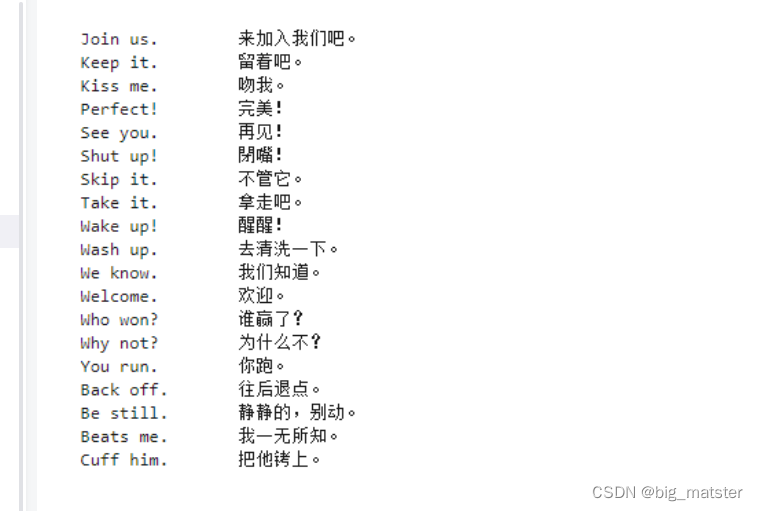

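Each line of the data file is tab-separated: the input text comes first, then a tab character, then the target text.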
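As a minimal sketch of what "tab-separated" means here, the snippet below splits a single line into its input and target parts. The helper name `split_pair` and the sample line are made up for illustration. It also assumes that, if a file carries extra columns after the second one, they can simply be ignored.

```python
def split_pair(line):
    """Split one tab-separated line into (input_text, target_text)."""
    # Drop a trailing newline, then split on tab characters
    fields = line.rstrip('\n').split('\t')
    if len(fields) < 2:
        raise ValueError('expected at least two tab-separated fields')
    # Keep only the first two columns; any further columns are ignored
    return fields[0], fields[1]


sample_line = 'Hi.\t嗨。'  # hypothetical example line
input_text, target_text = split_pair(sample_line)
print(input_text)
print(target_text)
```

Taking only the first two fields is a deliberate choice in this sketch: a plain two-way unpacking of `line.split('\t')` raises a `ValueError` as soon as a line contains a third column.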

Data processing template

from keras.models import Model
from keras.layers import Input, LSTM, Dense
import numpy as np

batch_size = 64  # number of samples per training batch
epochs = 100  # number of training epochs
latent_dim = 256  # dimensionality of the LSTM hidden state
num_samples = 10000  # maximum number of lines to read from the data file
data_path = 'cmn.txt'

# Containers for the raw texts and the character vocabularies
input_texts = []
target_texts = []
input_characters = set()  # unordered collection of unique input characters
target_characters = set()  # unordered collection of unique target characters

with open(data_path, 'r', encoding='utf-8') as f:  # open the file read-only
    lines = f.read().split('\n')  # read the whole file and split it into lines

# Iterate over at most num_samples lines; the "- 1" skips the empty
# string that follows the final newline of the file.
for line in lines[:min(num_samples, len(lines) - 1)]:
    # Separate the input text from the target text.
    # This assumes exactly two tab-separated fields per line.
    input_text, target_text = line.split('\t')
    # We use "tab" as the "start sequence" character
    # for the targets, and "\n" as "end sequence" character.
    target_text = '\t' + target_text + '\n'
    input_texts.append(input_text)
    target_texts.append(target_text)
    for char in input_text:
        if char not in input_characters:
            input_characters.add(char)
    for char in target_text:
        if char not in target_characters:
            target_characters.add(char)

# Convert the character sets to sorted lists
input_characters = sorted(list(input_characters))
target_characters = sorted(list(target_characters))
num_encoder_tokens = len(input_characters)
num_decoder_tokens = len(target_characters)
# Maximum encoder sequence length
max_encoder_seq_length = max([len(txt) for txt in input_texts])
# Maximum decoder sequence length
max_decoder_seq_length = max([len(txt) for txt in target_texts])

print('Number of samples:', len(input_texts))
print('Number of unique input tokens:', num_encoder_tokens)
print('Number of unique output tokens:', num_decoder_tokens)
print('Max sequence length for inputs:', max_encoder_seq_length)
print('Max sequence length for outputs:', max_decoder_seq_length)
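The vocabulary sizes and maximum sequence lengths printed above are what is needed to turn the texts into fixed-size arrays. One possible way to do that is character-level one-hot encoding, sketched below on two made-up sentence pairs so that it runs without `cmn.txt`. The names `input_token_index`, `target_token_index`, `encoder_input_data` and `decoder_input_data` are assumptions for illustration and do not appear in the code above.

```python
import numpy as np

# Hypothetical toy data standing in for the texts read from the file
input_texts = ['Hi.', 'Run!']
target_texts = ['\t嗨。\n', '\t跑!\n']

input_characters = sorted(set(''.join(input_texts)))
target_characters = sorted(set(''.join(target_texts)))
num_encoder_tokens = len(input_characters)
num_decoder_tokens = len(target_characters)
max_encoder_seq_length = max(len(txt) for txt in input_texts)
max_decoder_seq_length = max(len(txt) for txt in target_texts)

# Map every character to an integer index
input_token_index = {char: i for i, char in enumerate(input_characters)}
target_token_index = {char: i for i, char in enumerate(target_characters)}

# Arrays of shape (samples, time steps, vocabulary size), initially all zeros
encoder_input_data = np.zeros(
    (len(input_texts), max_encoder_seq_length, num_encoder_tokens),
    dtype='float32')
decoder_input_data = np.zeros(
    (len(target_texts), max_decoder_seq_length, num_decoder_tokens),
    dtype='float32')

# Set a single 1.0 per character position; shorter texts stay zero-padded
for i, (input_text, target_text) in enumerate(zip(input_texts, target_texts)):
    for t, char in enumerate(input_text):
        encoder_input_data[i, t, input_token_index[char]] = 1.0
    for t, char in enumerate(target_text):
        decoder_input_data[i, t, target_token_index[char]] = 1.0

print(encoder_input_data.shape)
print(decoder_input_data.shape)
```

In this sketch, positions beyond the end of a shorter text are left as all-zero rows. That is a simple form of padding; other padding schemes are possible.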

Project link

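Summary

I aim to write four articles a day and gradually sort out and tidy up everything I have learned. The plan is to work through all of it completely, so that I can find datasets on my own and get a model running just by reading it.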
