While setting up LLaMA Factory I ran into an environment problem. At runtime it printed:

The installed version of bitsandbytes was compiled without GPU support.

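None of the fixes I found online worked. It turned out that I had installed the wrong build of torch: LLaMA Factory relies on parallel computation, and with that torch build bitsandbytes could not use the GPU.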

-

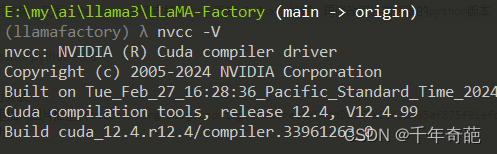

首先确定安装好了cuda,控制台敲 nvcc -V 如果有返回结果就说明成功安装了
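The same check can be scripted. This is a minimal sketch, assuming that nvcc -V prints a line containing "release <major>.<minor>" (as in "Cuda compilation tools, release 11.7, V11.7.64"); parse_nvcc_release and installed_cuda_release are hypothetical helper names, not part of any library.

```python
import re
import shutil
import subprocess


def parse_nvcc_release(output):
    """Return the CUDA release (e.g. "11.7") found in `nvcc -V` output, or None."""
    match = re.search(r"release\s+(\d+\.\d+)", output)
    return match.group(1) if match else None


def installed_cuda_release():
    """Run `nvcc -V` if nvcc is on PATH and return its release string, else None."""
    nvcc = shutil.which("nvcc")
    if nvcc is None:
        return None  # nvcc not on PATH: toolkit missing or PATH not configured
    result = subprocess.run([nvcc, "-V"], capture_output=True, text=True)
    return parse_nvcc_release(result.stdout)


if __name__ == "__main__":
    print("CUDA toolkit release:", installed_cuda_release())
```

The release number it reports is the one to match against the pytorch-cuda version when choosing an install command.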

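- Next, check whether torch can use the GPU. Enter the following lines one at a time in a terminal, or put the Python lines in a .py script and run it.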

python

import torch

print(torch.cuda.is_available())

If it prints True, the GPU is supported. If it prints False, it is not, which means the CUDA setup is not working.
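A False result does not say why the GPU is unavailable. The sketch below gathers a little more detail, assuming only the standard torch attributes torch.__version__, torch.version.cuda and torch.cuda.is_available(); torch_gpu_report is a hypothetical helper name.

```python
import importlib.util


def torch_gpu_report():
    """Collect the facts that show whether torch is a CUDA build."""
    if importlib.util.find_spec("torch") is None:
        return {"installed": False}
    import torch

    return {
        "installed": True,
        "torch_version": torch.__version__,
        # None on CPU-only builds, a string such as "11.7" on CUDA builds
        "built_with_cuda": torch.version.cuda,
        "cuda_available": torch.cuda.is_available(),
    }


if __name__ == "__main__":
    for key, value in torch_gpu_report().items():
        print(f"{key}: {value}")
```

If built_with_cuda is None, the installed torch is a CPU-only build, so reinstalling torch (rather than CUDA) is the thing to fix.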

- Install the GPU build of torch

Find the install command that matches your setup on this page: https://pytorch.org/get-started/previous-versions/

The command that matched my environment was:

conda install pytorch==1.13.1 torchvision==0.14.1 torchaudio==0.13.1 pytorch-cuda=11.7 -c pytorch -c nvidia

That produced a new problem:

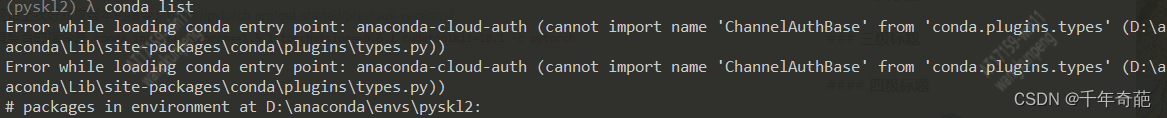

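The install failed with an entry point error. After some digging, the cause turned out to be the installation channel. Running this command to switch the channel fixed it: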

conda install conda-forge::libarchive

Retrying the torch install then reported that the chardet and prompt libraries were missing, so I installed them by hand:

conda install prompt

conda install chardet

On the next attempt it complained that this CUDA version does not support Python 3.9.x, so I changed the Python version of the current environment:

conda install python=3.8

Then I ran the install once more.

This time it finally succeeded.
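Before relaunching anything, it can help to confirm that the environment really contains the pinned build. The following is a rough sketch, assuming the pins from the conda command earlier in this post (torch 1.13.1, CUDA 11.7); EXPECTED_TORCH, EXPECTED_CUDA, base_version and check_install are hypothetical names chosen for illustration.

```python
import importlib.util

# Pins taken from the conda command used in this post; adjust to your own environment.
EXPECTED_TORCH = "1.13.1"
EXPECTED_CUDA = "11.7"


def base_version(version):
    """Strip a local build suffix such as "+cu117" from a version string."""
    return version.split("+", 1)[0]


def check_install(torch_version, cuda_version, cuda_available):
    """Return a list of problems; an empty list means the install looks right."""
    problems = []
    if base_version(torch_version) != EXPECTED_TORCH:
        problems.append(f"torch is {torch_version}, expected {EXPECTED_TORCH}")
    if cuda_version != EXPECTED_CUDA:
        problems.append(f"torch was built for CUDA {cuda_version}, expected {EXPECTED_CUDA}")
    if not cuda_available:
        problems.append("torch.cuda.is_available() returned False")
    return problems


if __name__ == "__main__":
    if importlib.util.find_spec("torch") is None:
        print("torch is not installed in this environment")
    else:
        import torch

        issues = check_install(
            torch.__version__, torch.version.cuda, torch.cuda.is_available()
        )
        print("\n".join(issues) if issues else "torch install matches the pinned versions")
```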

Running LLaMA Factory again no longer shows the message about missing GPU support.

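- This fix may break other libraries that are loaded alongside PyTorch. Reinstalling the affected library with pip uninstall xxxx followed by pip install xxxx resolves it. These are the libraries that actually broke for me: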

```text
sentencepiece
safetensors
pydantic_core
orjson
pandas
pyarrow
multiprocess
xxhash
matplotlib
kiwisolver
regex
scipy
```
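Rather than waiting for each failure to surface, the libraries can be test-imported in one pass. This is a small sketch, assuming each library's import name equals the name listed above; LIBRARIES and find_broken are hypothetical names, and the script only prints suggested pip commands instead of running them.

```python
import importlib

# Import names of the libraries that broke in my environment.
LIBRARIES = [
    "sentencepiece", "safetensors", "pydantic_core", "orjson", "pandas", "pyarrow",
    "multiprocess", "xxhash", "matplotlib", "kiwisolver", "regex", "scipy",
]


def find_broken(modules):
    """Return the names in `modules` that fail to import in the current environment."""
    broken = []
    for name in modules:
        try:
            importlib.import_module(name)
        except Exception:  # ImportError, or binary-incompatibility errors at load time
            broken.append(name)
    return broken


if __name__ == "__main__":
    for name in find_broken(LIBRARIES):
        print(f"pip uninstall -y {name} && pip install {name}")
```

A library that was never installed is also reported, so review the printed commands before running any of them.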
