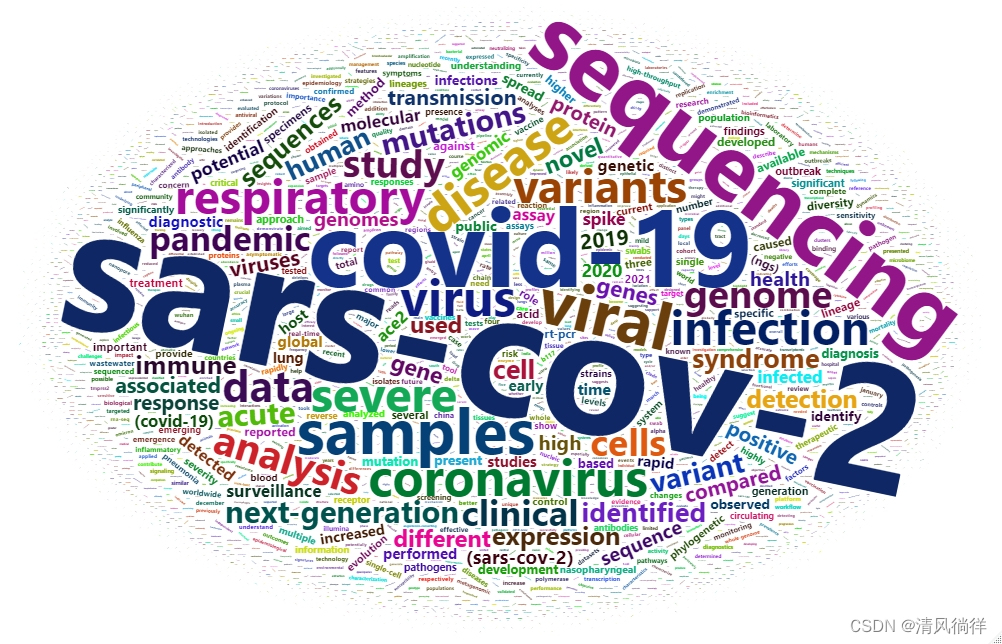

First, here's the finished image to show what we're aiming for.

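We count word frequencies in a piece of text and then turn those counts into a word cloud, in which each word's size is proportional to its frequency.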
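To make "size proportional to frequency" concrete, here is a minimal base-R sketch that counts words and maps each count linearly onto a font size. wordcloud2 computes its own sizes internally (tunable through its `size` argument), so the helpers `word_freq()` and `font_sizes()` and the `max_size` parameter below are hypothetical, for illustration only.

```r
# Count how often each word occurs; return a data frame sorted by
# descending frequency (ties broken alphabetically).
word_freq <- function(words) {
  counts <- table(words)
  freq <- data.frame(word = names(counts), freq = as.integer(counts),
                     stringsAsFactors = FALSE)
  freq <- freq[order(-freq$freq, freq$word), ]
  rownames(freq) <- NULL
  freq
}

# Hypothetical linear scale: the most frequent word gets max_size,
# every other word gets a size proportional to its count.
font_sizes <- function(freq, max_size = 60) {
  max_size * freq / max(freq)
}

words <- c("virus", "vaccine", "virus", "mask", "virus", "vaccine")
wf <- word_freq(words)
wf$size <- font_sizes(wf$freq)
print(wf)
# virus 3 60, vaccine 2 40, mask 1 20
```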

The result is actually an interactive HTML page, and it can also be exported as a static image.
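The post doesn't say how the image was exported. One common approach, sketched here as an assumption, is to save the widget with `htmlwidgets::saveWidget()` and then screenshot the page with the separate `webshot` package. The function name `save_wordcloud()` and its file names are hypothetical; the packages wordcloud2, htmlwidgets and webshot must be installed, and webshot needs PhantomJS (installable once with `webshot::install_phantomjs()`).

```r
# Hypothetical helper: save a wordcloud2 widget as HTML, then capture it as PNG.
# Assumes wordcloud2, htmlwidgets and webshot (plus PhantomJS) are installed.
save_wordcloud <- function(words,
                           html_file = "wordcloud.html",
                           png_file = "wordcloud.png") {
  wc <- wordcloud2::wordcloud2(data = words)
  # selfcontained = FALSE writes the widget's JS/CSS dependencies
  # to a side folder next to the HTML file
  htmlwidgets::saveWidget(wc, html_file, selfcontained = FALSE)
  # delay gives the JavaScript layout time to finish before the capture
  webshot::webshot(html_file, png_file, delay = 5, vwidth = 800, vheight = 600)
  invisible(png_file)
}
```

With the `result` data frame from the script below, the call would be `save_wordcloud(result)`.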

Here's the code:

```r
library(wordcloud2)
library(dplyr)

filePath = "COVID1.txt" # search results saved as a .txt file
text = readLines(filePath)
txt = text[text != ""] # drop empty lines
txt <- iconv(txt, "WINDOWS-1252", "UTF-8") # convert from Windows-1252 to UTF-8
txt = tolower(txt)
txtList = lapply(txt, strsplit, " ") # split each line on spaces
txtChar = unlist(txtList)

# Clean the data
txtChar = gsub("[.,!:;?()]", "", txtChar) # strip common punctuation: . , ! : ; ? ( )
# Alternative: replace every non-letter character with a space
#txtChar <- gsub("[^a-zA-Z]", " ", txtChar)
txtChar = txtChar[txtChar != ""]

data = as.data.frame(table(txtChar))
data$txtChar <- as.character(data$txtChar)
data <- data[nchar(data$txtChar) > 2, ] # keep only words longer than 2 characters
colnames(data) = c("Word", "freq")
ordFreq = data[order(data$freq, decreasing = TRUE), ] # sort by frequency

# Filter out common words
filePath = "filter200.csv" # list of common function words, with a "Word" column
df = read.csv(filePath, header = TRUE, stringsAsFactors = FALSE)
antiWord = select(df, Word)

result = anti_join(ordFreq, antiWord, by = "Word") %>% arrange(desc(freq)) # set difference: drop the common words
head(result)
wordcloud2(data = result)
```
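Two details of the cleaning step are easy to get wrong. In a pattern like `"\\.|,|\\!|:|;|\\?|(|)"`, the trailing `(|)` is a regex group rather than literal parentheses, so `(` and `)` are never removed; listing the characters inside a bracket expression makes every one of them literal. And filtering rows with `data[-which(cond), ]` silently returns zero rows when nothing matches, because `-integer(0)` is still an empty index. The sketch below shows both with made-up tokens; `clean_tokens()` and `drop_short()` are hypothetical helpers.

```r
# Inside [...] every listed character is literal, including ( and ).
clean_tokens <- function(tokens) {
  tokens <- gsub("[.,!:;?()]", "", tokens)
  tokens[tokens != ""]  # drop tokens that were pure punctuation
}

# Keep words longer than min_len characters using a logical index,
# which behaves correctly even when no row matches.
drop_short <- function(df, min_len = 2) {
  df[nchar(df$Word) > min_len, , drop = FALSE]
}

tokens <- clean_tokens(c("(covid)", "cases,", "rose.", "!", "in", "(icu)"))
print(tokens)  # "covid" "cases" "rose" "in" "icu"

df <- data.frame(Word = c("virus", "cases"), freq = c(3L, 2L),
                 stringsAsFactors = FALSE)
idx <- which(nchar(df$Word) <= 2)  # integer(0): there are no short words
nrow(df[-idx, ])                   # 0 rows: negating an empty index selects nothing
nrow(drop_short(df))               # 2 rows, as intended
```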

If you'd like the common-word filter file filter200.csv, leave a reply and I'll send a free download link.
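Until you have the file, a small stand-in works. The script only reads a `Word` column from it, so the sketch below assumes a CSV with a `Word` header and one stop word per row; the words and frequencies are made up. It also shows a base-R equivalent of the `anti_join()` step, keeping only rows whose `Word` is not in the stop list.

```r
# Hypothetical stand-in for filter200.csv: a "Word" header, one word per row.
stop_file <- tempfile(fileext = ".csv")
writeLines(c("Word", "the", "and", "that", "with"), stop_file)
stop_words <- read.csv(stop_file, header = TRUE, stringsAsFactors = FALSE)$Word

# Made-up frequency table in the same shape as ordFreq (Word, freq).
freq <- data.frame(Word = c("the", "virus", "and", "cases", "with"),
                   freq = c(50L, 12L, 40L, 9L, 7L),
                   stringsAsFactors = FALSE)

# Base-R equivalent of anti_join(freq, stop_df, by = "Word"),
# followed by sorting on frequency, highest first.
result <- freq[!freq$Word %in% stop_words, ]
result <- result[order(result$freq, decreasing = TRUE), ]
print(result)
```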
