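I. Preparation

1. Download Ollama

Official website: Ollama

2. Using llama2 as an example

Run the command below. Ollama downloads the model on first use, after which you can hold a simple conversation with it in the terminal.

    ollama run llama2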

II. Connecting with client.py (run locally in PyCharm)

1. Start Ollama and confirm that the llama2 model is installed by running:

    ollama list
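The same check can be scripted. This is a minimal sketch, assuming Ollama's `/api/tags` endpoint (which lists locally installed models) and the default address 127.0.0.1:11434. The helper names `fetch_tags`, `model_names` and `has_model` are made up for illustration, and the sketch uses only the standard library so it runs without extra packages.

```python
import json
import urllib.request

OLLAMA_URL = "http://127.0.0.1:11434"  # assumed default address


def model_names(tags_body):
    """Extract model names from a decoded /api/tags response body."""
    return [m.get("name", "") for m in tags_body.get("models", [])]


def has_model(tags_body, wanted):
    """Return True if `wanted` (e.g. "llama2") matches an installed model.

    Names are usually reported with a tag, such as "llama2:latest",
    so the part before the colon is compared as well.
    """
    for name in model_names(tags_body):
        if name == wanted or name.split(":", 1)[0] == wanted:
            return True
    return False


def fetch_tags(base_url=OLLAMA_URL):
    """Ask the running Ollama server which models are installed."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        return json.load(resp)
```

With Ollama running, `has_model(fetch_tags(), "llama2")` should report whether the model is available. If the server is not running, `fetch_tags()` raises an error instead.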

2. By default, Ollama listens on 127.0.0.1:11434

A simple Python client that connects to it over HTTP looks like this:

    import json

    import requests

    # NOTE: Ollama must be running for this to work; start the Ollama app or run `ollama serve`.

    model = "llama2"  # TODO: update this for whatever model you wish to use


    def chat(messages):
        # stream=True lets requests hand over each line as soon as the server sends it
        r = requests.post(
            "http://127.0.0.1:11434/api/chat",
            json={"model": model, "messages": messages, "stream": True},
            stream=True,
        )
        r.raise_for_status()
        output = ""
        # fallback so `message` exists even if the first line is already the final one
        message = {"role": "assistant", "content": ""}

        for line in r.iter_lines():
            if not line:
                continue  # skip blank keep-alive lines
            body = json.loads(line)
            if "error" in body:
                raise Exception(body["error"])
            if body.get("done") is False:
                message = body.get("message", {})
                content = message.get("content", "")
                output += content
                # the response streams one token at a time, print that as we receive it
                print(content, end="", flush=True)

            if body.get("done", False):
                # return the full reply so it can be appended to the chat history
                message["content"] = output
                return message


    def main():
        messages = []

        while True:
            user_input = input("Enter a prompt: ")
            if not user_input:
                exit()
            print()
            messages.append({"role": "user", "content": user_input})
            message = chat(messages)
            messages.append(message)
            print("\n\n")


    if __name__ == "__main__":
        main()

Once the script is running, you can carry on a multi-turn conversation. Entering an empty prompt exits.
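The client reads the reply as one JSON object per line, each carrying a `message` and a `done` flag. To make that format easier to see (and to test without a running server), here is a small sketch that rebuilds the full reply from such lines. The function name `collect_reply` and the sample lines are hypothetical, written by hand to mimic the stream. Unlike the client, it also keeps any content on the final `done` line.

```python
import json


def collect_reply(lines):
    """Rebuild the assistant message from streamed /api/chat lines.

    `lines` is any iterable of JSON strings or bytes, one object per line,
    in the shape the client reads: {"message": {...}, "done": bool}.
    """
    role = "assistant"
    parts = []
    for line in lines:
        if not line:
            continue  # ignore blank keep-alive lines
        body = json.loads(line)
        if "error" in body:
            raise RuntimeError(body["error"])
        message = body.get("message") or {}
        role = message.get("role", role)
        parts.append(message.get("content", ""))
        if body.get("done"):
            break  # the final line marks the end of the reply
    return {"role": role, "content": "".join(parts)}


# Hypothetical sample data, not captured from a real server.
sample = [
    b'{"message": {"role": "assistant", "content": "Hel"}, "done": false}',
    b"",
    b'{"message": {"role": "assistant", "content": "lo"}, "done": false}',
    b'{"message": {"role": "assistant", "content": ""}, "done": true}',
]
print(collect_reply(sample))
```

In a real client, passing `r.iter_lines()` from a streamed `requests` response to `collect_reply` would give the same kind of message dict that gets appended to the chat history.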

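Note: keep the network connection stable, make sure no other process is occupying port 11434, and preferably turn off any VPN or proxy while running the script. Otherwise requests are likely to fail with errors.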
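Both points in the note can be checked from Python. The following is a rough sketch using only the standard library: `port_is_open` tests whether anything is listening on the port, and `direct_opener` builds an opener that ignores proxy settings picked up from the environment. Both function names are made up for illustration, and the default host and port are the ones assumed throughout this post.

```python
import socket
import urllib.request


def port_is_open(host="127.0.0.1", port=11434, timeout=1.0):
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def direct_opener():
    """Build a urllib opener that ignores proxies from the environment."""
    # An empty mapping tells ProxyHandler not to use any auto-detected proxy.
    return urllib.request.build_opener(urllib.request.ProxyHandler({}))
```

If `port_is_open()` returns False, Ollama is probably not running. A True result only shows that some process is listening there, not that it is Ollama. With Ollama running, `direct_opener().open("http://127.0.0.1:11434/api/tags")` should reach it without going through a proxy. When using `requests`, setting `trust_env = False` on a `requests.Session` is one way to get a similar effect.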

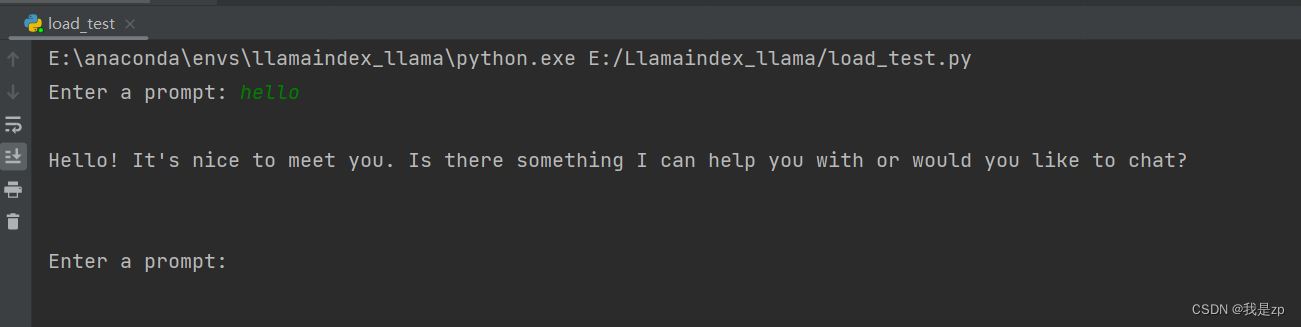

3. Result
