目录

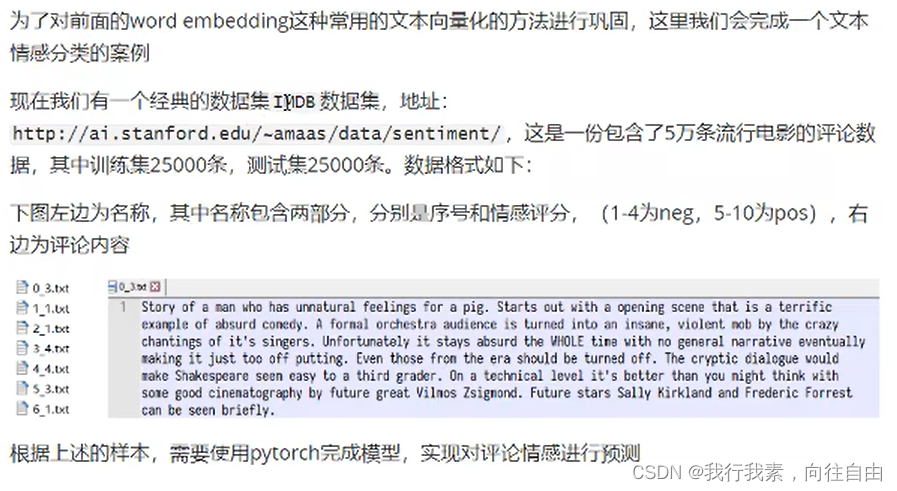

1.案例介绍

或者数据存在百度网盘中:glove.6B.50d.txt_免费高速下载|百度网盘-分享无限制 (baidu.com)

提取码:p1ua

关于文本分类(情感分析)的英文数据集汇总:(2条消息) 关于文本分类(情感分析)的英文数据集汇总_饭饭童鞋的博客-CSDN博客_英文文本分类数据集

2.思路分析

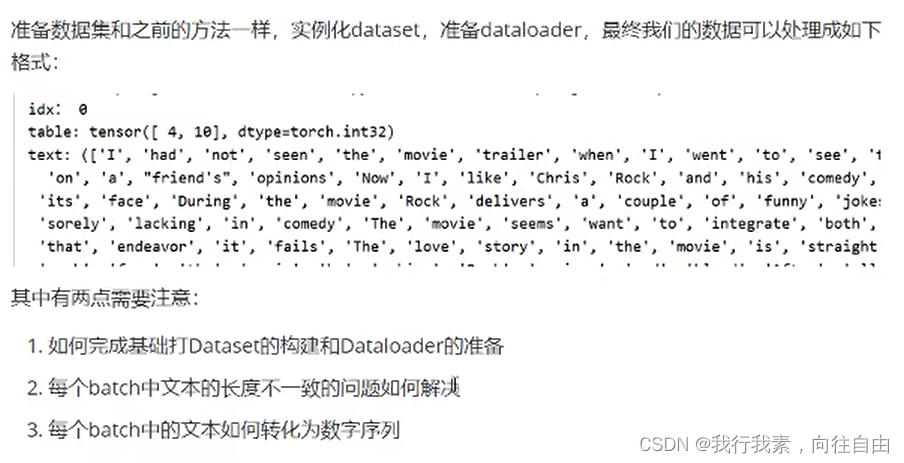

3.准备数据集

3.1 基础Dataset的准备

import jieba

from keras.datasets import imdb#情感文本分类数据集

import torch,os,re

from torch.utils.data import DataLoader,Dataset

def tokenlize(content):

re.sub('<.*?>',' ',content)#将特殊字符替换成空格

fileters=["\.",":",'\t','\n','\x97','\x96','#','$','%','&']#删去这些字符

content=re.sub('|'.join(fileters),' ',content)

tokens=[i.strip() for i in content.split()]

return tokens

class ImdbDataset(Dataset):

def __init__(self,train=True):

self.train_data_path=r'D:\各种编译器的代码\pythonProject12\机器学习\NLP自然语言处理\datas\IMDB文本情感分类数据集\aclImdb\train'

self.test_data_path=r'D:\各种编译器的代码\pythonProject12\机器学习\NLP自然语言处理\datas\IMDB文本情感分类数据集\aclImdb\test'

data_path=self.train_data_path if train else self.test_data_path

#1.把所有的文件名放入列表

temp_data_path=[os.path.join(data_path,'pos'),os.path.join(data_path,'neg')]#不需要\符号了 两个文件夹

self.total_file_path=[]#所有评论文件的path

for path in temp_data_path:

file_name_list=os.listdir(path)

file_path_list=[os.path.join(path,i) for i in file_name_list if i.endswith('.txt')]#当前文件夹中所有的文件名字

self.total_file_path.extend(file_path_list)#正例 负例文件名字都在

def __getitem__(self, index):

file_path=self.total_file_path[index]

#获取label

label_str=file_path.split("\\")[-2]

label=0 if label_str=='neg' else 1#文本类型数字化

#获取内容

tokens=tokenlize(open(file_path,'r',encoding='utf8').read())

return tokens,label

def __len__(self):

return len(self.total_file_path)

def collate_fn(batch):

"""

:param batch:([tokens,label],[tokens,label]...)

:return:

"""

content,label=zip(*batch)

return content,label

def get_dataloader(train=True):

imdb_dataset=ImdbDataset(train)

data_loader=DataLoader(imdb_dataset,batch_size=2,shuffle=True,collate_fn=collate_fn)

return data_loader

if __name__ == '__main__':

for idx,(input,target) in enumerate(get_dataloader()):

print(idx)

print(input)

print(target)

break

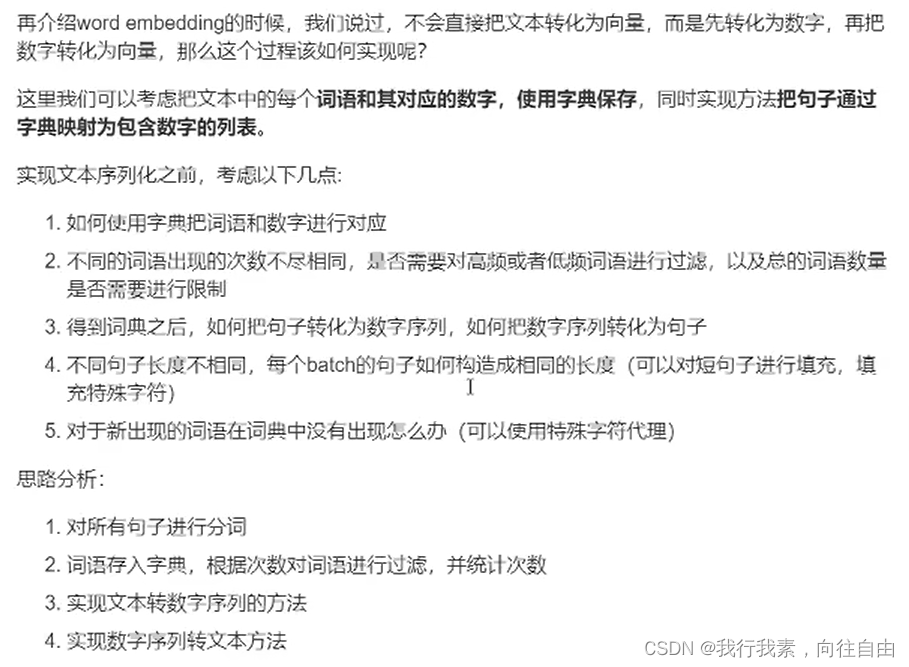

3.2 文本序列化

"""

实现的是:构建字典,实现方法把句子转化为数字序列和其翻转

"""

class Word2Sequence:

UNK_TAG='UNK'#不常见的单词 标记

PAD_TAG="PAD"#padding填充,即测试集中遇到新单词 标记

UNK=0

PAD=1

def __init__(self):

self.dict={

self.UNK_TAG:self.UNK,

self.PAD_TAG:self.PAD

}

self.count={}#统计词频

def fit(self,sentence):

"""

把单个句子保存到dict中

:param sentence:[word1,word2,word3,...]

:return:

"""

for word in sentence:

self.count[word]=self.count.get(word,0)+1

def build_vocab(self,min=5,max=None,max_features=None):

"""

生成词典

:param min:最小出现的次数

:param max:最大出现的次数

:param max_features:一共保留多少个词语

:return:

"""

#删除count中词频小于min的word

if min is not None:

self.count={word:value for word,value in self.count.items() if value>min}

#删除count中词频大于max的word

if max is not None:

self.count={word:value for word,value in self.count.items() if value<max}

#限制保留的词语数

if max_features is not None:

temp=sorted(self.count.items(),key=lambda x:x[-1],reverse=True)[:max_features]#降序,按照values值

self.count=dict(temp)#转换为字典

for word in self.count:

self.dict[word]=len(self.dict)

#得到一个翻转的字典

self.inverse_dict=dict(zip(self.dict.values(),self.dict.keys()))

def transform(self,sentence,max_len=None):

"""

把句子转化为数字序列

:param sentence:[word1,word2,...]

:param max_len:int,对句子进行填充或裁剪裁剪

:return:

"""

if max_len is not None:

if max_len>len(sentence):#填充

sentence=sentence+[self.PAD_TAG]*(max_len-len(sentence))

elif max_len<len(sentence):#裁剪

sentence=sentence[:max_len]

return [self.dict.get(word,self.UNK) for word in sentence]

def inverse_transform(self,indices):

"""

把序列转化为句子

:param indices:[1,2,3,4,...]

:return:

"""

return [self.inverse_dict.get(idx) for idx in indices]

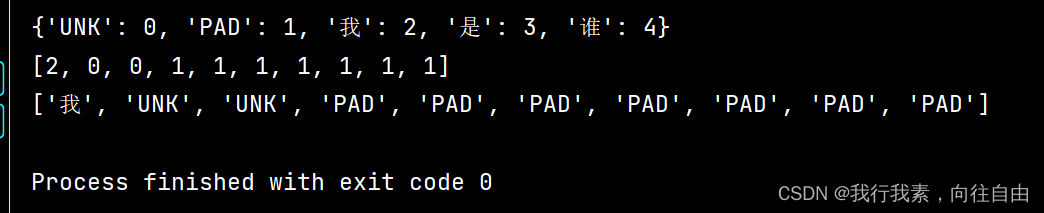

if __name__ == '__main__':

ws=Word2Sequence()

ws.fit(['我', '是', '谁'])

ws.fit(['我', '是', '我'])

ws.build_vocab(min=0)

print(ws.dict)

ret=ws.transform(['我','爱','北京'],max_len=10)

print(ret)

ret=ws.inverse_transform(ret)

print(ret)

4.构建模型

'''

定义模型

'''

import torch

import torch.nn as nn

from lib import ws,max_len

import torch.nn.functional as F

from torch.optim import Adam

from dataset import get_dataloader

class MyModel(nn.Module):

def __init__(self):

super(MyModel,self).__init__()

self.embedding=nn.Embedding(len(ws),100)

self.fc=nn.Linear(max_len*100,2)

def forward(self,*input):

"""

:param input:[batch_size,max_len]

:return:

"""

x=self.embedding(input)#进行embedding操作,形状:[batch_size,max_len,100]

x=x.view([-1,max_len*100])

out=self.fc(x)

return F.log_softmax(out,dim=-1)

model=MyModel()

optimizer=Adam(model.parameters(),lr=0.001)

def train(epoch):

for idx,(input,target) in enumerate(get_dataloader(train=True)):

optimizer.zero_grad()#梯度归0

output=model(input)

loss=F.nll_loss(output,target)

loss.backward()

optimizer.step()

print(loss.item())

if __name__ == '__main__':

for i in range(1):

train(i)

2166

2166

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?