
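from nltk import FreqDist

from nltk.book import text1  # needs the NLTK book data: nltk.download('book')

fword = FreqDist(text1)

print(text1.name)  # title of the book

print(fword)

voc = fword.most_common(50)  # the 50 most frequent tokens

fword.plot(50, cumulative=True)  # cumulative frequency plot of the top 50 tokens

print(fword.hapaxes())  # hapaxes: tokens that occur only once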
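`FreqDist` is a subclass of `collections.Counter`, so the counts behind these calls can be reproduced with the standard library alone. The sketch below is a minimal illustration on a hand-written token list (the list and the variable names are assumptions, not part of `text1`). It shows what `most_common`, `hapaxes`, and the cumulative plot compute.

```python
from collections import Counter
from itertools import accumulate

# Hypothetical toy token list standing in for text1
tokens = "all work and no play makes jack a dull boy all work and no play".split()

counts = Counter(tokens)

# Counterpart of fword.most_common(2): the two most frequent tokens
top2 = counts.most_common(2)

# Counterpart of fword.hapaxes(): tokens that occur exactly once
hapaxes = sorted(w for w, c in counts.items() if c == 1)

# What plot(..., cumulative=True) draws: running total of counts in rank order
cumulative = list(accumulate(c for _, c in counts.most_common()))

print(top2)
print(hapaxes)
print(cumulative)
```

The last cumulative value always equals the total number of tokens, which is a quick sanity check on any frequency distribution.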

Tokenization and sentence splitting

-----

from nltk.tokenize import word_tokenize, sent_tokenize

# Word tokenization (word_tokenize splits sentences with Punkt, then applies a Treebank-style word tokenizer)

print(word_tokenize(text="All work and no play makes jack a dull boy, all work and no play", language="english"))

# Sentence splitting

data = "All work and no play makes jack dull boy. All work and no play makes jack a dull boy."

print(sent_tokenize(data))

from nltk.corpus import stopwords

print(type(stopwords.words('english')))  # <class 'list'>

stop_words = set(stopwords.words('english'))  # build the set once; the entries are all lowercase

print([w for w in word_tokenize(text="All work and no play makes jack a dull boy, all work and no play", language="english") if w.lower() not in stop_words])  # remove stop words
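Stop-word filtering is a case-sensitive membership test, and NLTK's English stop-word list is all lowercase, so a capitalized token such as `All` slips through unless it is lowercased before the lookup. The sketch below shows the effect without any NLTK data. The regex tokenizer and the tiny `STOP_WORDS` set are simplified stand-ins (assumptions), not NLTK's own tokenizer or list.

```python
import re

# Hypothetical mini stop-word list; NLTK's real English list is much longer
STOP_WORDS = {"all", "and", "no", "a"}

def simple_tokenize(text):
    """Split into runs of word characters and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)

text = "All work and no play makes jack a dull boy, all work and no play"
tokens = simple_tokenize(text)

# Case-sensitive test: the capitalized "All" is not recognized as a stop word
naive = [w for w in tokens if w not in STOP_WORDS]

# Lowercasing before the lookup removes it as well
folded = [w for w in tokens if w.lower() not in STOP_WORDS]

print(naive)
print(folded)
```

Using a `set` rather than a list also makes each lookup constant-time, which matters once the text is longer than a sentence.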

Tense and singular/plural forms

--------

from nltk.stem import PorterStemmer

data = word_tokenize(text="All work and no play makes jack a dull boy, all work and no play,playing,played", language="english")

ps = PorterStemmer()

for w in data:

    print(w, ":", ps.stem(w))

from nltk.stem import SnowballStemmer

snowball_stemmer = SnowballStemmer('english')

print(snowball_stemmer.stem('presumably'))

# presum

from nltk.stem import WordNetLemmatizer

wordnet_lemmatizer = WordNetLemmatizer()

print(wordnet_lemmatizer.lemmatize('dogs'))

# dog
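Stemming and lemmatization differ in mechanism: a stemmer strips suffixes by rule and may return a non-word (as with `presum`), while a lemmatizer looks the word up and returns a dictionary form. The toy functions below are a deliberately crude sketch of that contrast. The suffix list and the `LEMMAS` table are made up for illustration and are far simpler than the Porter or Snowball algorithms or WordNet.

```python
# Hypothetical suffix rules, checked in order; real stemmers use many more
SUFFIXES = ("ing", "ed", "ly", "s")

def toy_stem(word):
    """Strip the first matching suffix, keeping at least three letters."""
    w = word.lower()
    for suffix in SUFFIXES:
        if w.endswith(suffix) and len(w) - len(suffix) >= 3:
            return w[: -len(suffix)]
    return w

# Hypothetical lookup table standing in for a real dictionary such as WordNet
LEMMAS = {"dogs": "dog", "played": "play", "playing": "play", "better": "good"}

def toy_lemmatize(word):
    """Return the dictionary form if known, else the lowercased word."""
    w = word.lower()
    return LEMMAS.get(w, w)

for w in ["playing", "played", "dogs", "presumably", "better"]:
    print(w, ":", toy_stem(w), "/", toy_lemmatize(w))
```

The rule-based function turns `presumably` into the non-word `presumab` and leaves `better` alone, while the lookup maps `better` to `good`. That is the trade-off in miniature: rules need no dictionary but can mangle words, while lookups are precise but only cover what the dictionary contains.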

Part-of-speech tagging

----

import nltk

sentence = """At eight o'clock on Thursday morning Arthur didn't feel very good."""

tokens = nltk.word_tokenize(sentence)

print(tokens)

# ['At', 'eight', "o'clock", 'on', 'Thursday', 'morning',

# 'Arthur', 'did', "n't", 'feel', 'very', 'good', '.']

nltk.help.upenn_tagset('NNP')  # print the meaning of the NNP tag

tagged = nltk.pos_tag(tokens)

nltk.pos_tag_sents([['this', 'is', 'batch', 'tag', 'test'], ['nltk', 'is', 'text', 'analysis', 'tool']])  # batch tagging (called batch_pos_tag before NLTK 3)

print(tagged[0:6])

# [('At', 'IN'), ('eight', 'CD'), ("o'clock", 'JJ'), ('on', 'IN'),

# ('Thursday', 'NNP'), ('morning', 'NN')]
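`pos_tag` returns a plain list of `(token, tag)` tuples, so the result can be filtered and counted with ordinary Python. The sketch below starts from the six tagged pairs shown in the output (copied as a literal so it runs without the tagger model) and pulls out the nouns and a per-tag count. The variable names are illustrative.

```python
from collections import Counter

# The six (token, tag) pairs from the tagger output, as a literal
tagged = [("At", "IN"), ("eight", "CD"), ("o'clock", "JJ"),
          ("on", "IN"), ("Thursday", "NNP"), ("morning", "NN")]

# Penn Treebank noun tags all start with "NN" (NN, NNS, NNP, NNPS)
nouns = [token for token, tag in tagged if tag.startswith("NN")]

# How often each tag occurs
tag_counts = Counter(tag for _, tag in tagged)

print(nouns)
print(tag_counts.most_common(1))
```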

Appendix table:

Classifiers

---

The classifiers that ship with NLTK are listed below:

from nltk.classify.api import ClassifierI, MultiClassifierI

from nltk.classify.megam import config_megam, call_megam

from nltk.classify.weka import WekaClassifier, config_weka

from nltk.classify.naivebayes import NaiveBayesClassifier

from nltk.classify.positivenaivebayes import PositiveNaiveBayesClassifier

from nltk.classify.decisiontree import DecisionTreeClassifier

from nltk.classify.rte_classify import rte_classifier, rte_features, RTEFeatureExtractor

from nltk.classify.util import accuracy, apply_features, log_likelihood

from nltk.classify.scikitlearn import SklearnClassifier

from nltk.classify.maxent import (MaxentClassifier, BinaryMaxentFeatureEncoding, TypedMaxentFeatureEncoding, ConditionalExponentialClassifier)

### Application 1: Predicting gender from a name

import random

import nltk

from nltk.corpus import names

# The only feature is the last letter of the name

def gender_features(word):

    return {'last_letter': word[-1]}

# Prepare the data

name = [(n, 'male') for n in names.words('male.txt')] + [(n, 'female') for n in names.words('female.txt')]

random.shuffle(name)  # the list is ordered male then female, so shuffle before splitting

print(len(name))

# Extract features and train the model

features = [(gender_features(n), g) for (n, g) in name]

classifier = nltk.NaiveBayesClassifier.train(features[:6000])

# Test

print(classifier.classify(gender_features('Frank')))

from nltk import classify

print(classify.accuracy(classifier, features[6000:]))
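A feature extractor is just a function from a name to a dict, so richer features are easy to try. Because the labeled list is built as all male names followed by all female names, a fixed slice only gives a fair test set if the data is shuffled first. The sketch below illustrates both points under stated assumptions: `gender_features2` and `shuffled_split` are hypothetical helpers, and the four-name `sample` is made up so the block runs without the `names` corpus.

```python
import random

def gender_features2(word):
    """Hypothetical richer extractor: last letter plus last two letters."""
    word = word.lower()
    return {"last_letter": word[-1], "suffix2": word[-2:]}

def shuffled_split(items, n_train, seed=0):
    """Shuffle a copy of items reproducibly, then split into train/test."""
    items = list(items)
    random.Random(seed).shuffle(items)
    return items[:n_train], items[n_train:]

# Tiny made-up sample in the same (name, label) shape as the corpus data
sample = [("Frank", "male"), ("John", "male"),
          ("Anna", "female"), ("Maria", "female")]

train, test = shuffled_split(sample, n_train=3)
train_set = [(gender_features2(n), g) for n, g in train]

print(gender_features2("Frank"))
print(len(train), len(test))
```

A list shaped like `train_set` can be passed to `NaiveBayesClassifier.train` in the same way as `features`.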

### Application 2: Sentiment analysis

from nltk.classify import NaiveBayesClassifier

def word_feats(words):

    return dict([(word, True) for word in words])

# Prepare the data

positive_vocab = ['awesome', 'outstanding', 'fantastic', 'terrific', 'good', 'nice', 'great', '😃']

negative_vocab = ['bad', 'terrible', 'useless', 'hate', '😦']

neutral_vocab = ['movie', 'the', 'sound', 'was', 'is', 'actors', 'did', 'know', 'words', 'not']

# Extract features (each word is wrapped in a list so that word_feats sees a word, not its characters)

positive_features = [(word_feats([pos]), 'pos') for pos in positive_vocab]

negative_features = [(word_feats([neg]), 'neg') for neg in negative_vocab]

neutral_features = [(word_feats([neu]), 'neu') for neu in neutral_vocab]

train_set = negative_features + positive_features + neutral_features

# Train

classifier = NaiveBayesClassifier.train(train_set)

# Test
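One common way to test a word-level classifier like this is to classify each word of a sentence and report the share of each label. The sketch below assumes that approach; it is not taken from the original text. `label_shares` is a hypothetical helper, and `lookup_classify` is a stand-in for the trained model so the block runs without NLTK.

```python
from collections import Counter

def label_shares(sentence, classify_word):
    """Classify each word and return the fraction of words per label."""
    words = sentence.lower().split()
    if not words:
        return {}
    labels = Counter(classify_word(w) for w in words)
    return {label: n / len(words) for label, n in labels.items()}

# Stand-in for the trained model: a lookup over a few of the vocabulary words
KNOWN = {"awesome": "pos", "good": "pos", "bad": "neg", "terrible": "neg"}

def lookup_classify(word):
    """Hypothetical classifier: known words get their label, others 'neu'."""
    return KNOWN.get(word, "neu")

shares = label_shares("Awesome movie the sound was bad", lookup_classify)
print(shares)
```

With the NLTK model, `classify_word` could be something like `lambda w: classifier.classify(word_feats([w]))`, which passes each word to `word_feats` as a one-element list.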

感谢每一个认真阅读我文章的人,看着粉丝一路的上涨和关注,礼尚往来总是要有的:

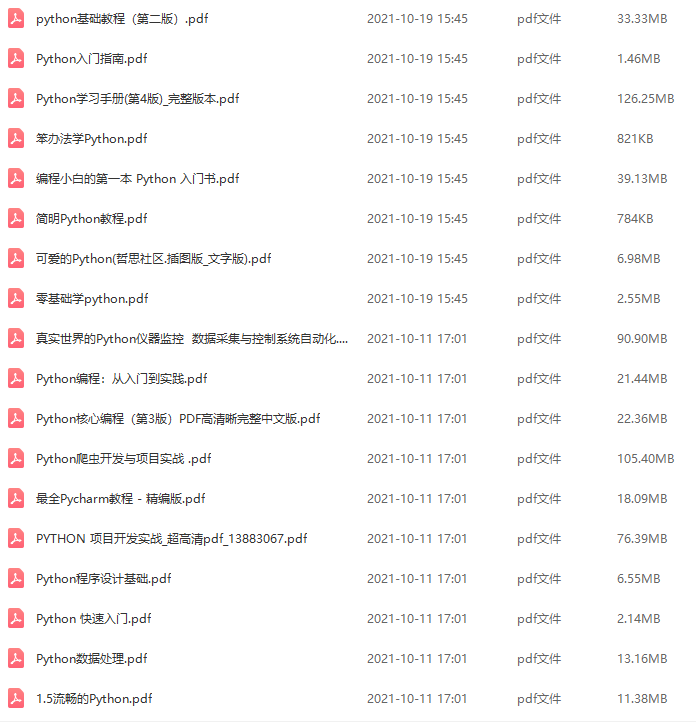

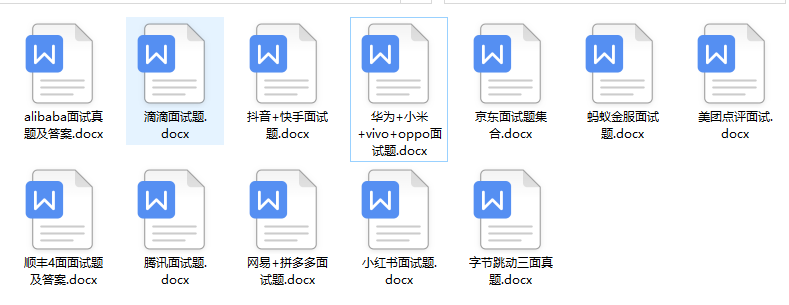

① 2000多本Python电子书(主流和经典的书籍应该都有了)

② Python标准库资料(最全中文版)

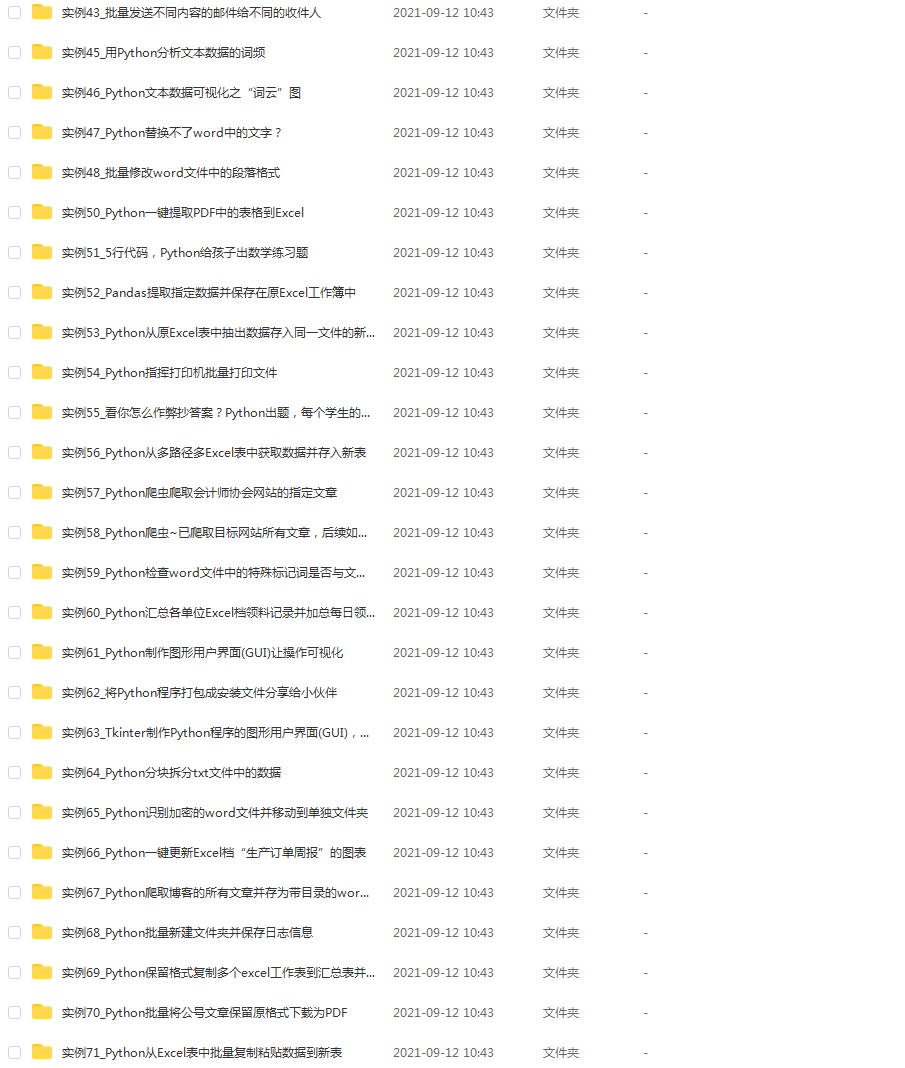

③ 项目源码(四五十个有趣且经典的练手项目及源码)

④ Python基础入门、爬虫、web开发、大数据分析方面的视频(适合小白学习)

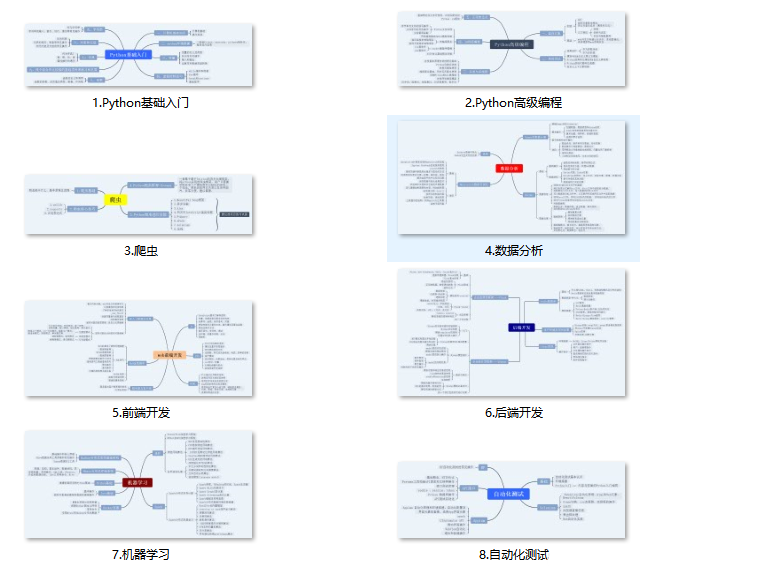

⑤ Python学习路线图(告别不入流的学习)

网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。

需要这份系统化的资料的朋友,可以添加V获取:vip1024c (备注python)

一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

白学习)

⑤ Python学习路线图(告别不入流的学习)

网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。

需要这份系统化的资料的朋友,可以添加V获取:vip1024c (备注python)

[外链图片转存中…(img-R5p0qv3a-1713417510990)]

一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

15万+

15万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?