Data Preparation

We use the IMDB movie review dataset, which contains two classes: Positive and Negative.

Data Download

```python
import os
import shutil
import requests
import tempfile
from tqdm import tqdm
from typing import IO
from pathlib import Path

# Save downloads under `home_path/.mindspore_examples`
cache_dir = Path.home() / '.mindspore_examples'

def http_get(url: str, temp_file: IO):
    """Download data with requests and show the progress with tqdm."""
    req = requests.get(url, stream=True)
    req.raise_for_status()  # fail early on HTTP errors instead of saving an error page
    content_length = req.headers.get('Content-Length')
    total = int(content_length) if content_length is not None else None
    progress = tqdm(unit='B', total=total)
    for chunk in req.iter_content(chunk_size=1024):
        if chunk:  # skip empty keep-alive chunks
            progress.update(len(chunk))
            temp_file.write(chunk)
    progress.close()

def download(file_name: str, url: str):
    """Download data and save it under the given file name (skipped if already cached)."""
    if not os.path.exists(cache_dir):
        os.makedirs(cache_dir)
    cache_path = os.path.join(cache_dir, file_name)
    cache_exist = os.path.exists(cache_path)
    if not cache_exist:
        # Download into a temporary file first, so a failed download leaves no partial cache file
        with tempfile.NamedTemporaryFile() as temp_file:
            http_get(url, temp_file)
            temp_file.flush()
            temp_file.seek(0)
            with open(cache_path, 'wb') as cache_file:
                shutil.copyfileobj(temp_file, cache_file)
    return cache_path

imdb_path = download('aclImdb_v1.tar.gz', 'https://mindspore-website.obs.myhuaweicloud.com/notebook/datasets/aclImdb_v1.tar.gz')
imdb_path
```

Loading the IMDB Dataset

```python
import re
import string
import tarfile

class IMDBData():
    """IMDB dataset loader.

    Loads the IMDB dataset and exposes it as an indexable Python iterable.
    """
    label_map = {
        "pos": 1,
        "neg": 0
    }

    def __init__(self, path, mode="train"):
        self.mode = mode
        self.path = path
        self.docs, self.labels = [], []
        self._load("pos")
        self._load("neg")

    def _load(self, label):
        pattern = re.compile(r"aclImdb/{}/{}/.*\.txt$".format(self.mode, label))
        # Load the matching reviews into memory
        with tarfile.open(self.path) as tarf:
            tf = tarf.next()
            while tf is not None:
                if bool(pattern.match(tf.name)):
                    # Strip trailing newlines, remove punctuation, lowercase, then split on whitespace.
                    # Decode the bytes (rather than calling str() on them) so no token carries a "b'" prefix.
                    text = tarf.extractfile(tf).read().rstrip(b"\n\r")
                    text = text.translate(None, string.punctuation.encode()).lower()
                    self.docs.append(text.decode("utf-8", errors="ignore").split())
                    self.labels.append([self.label_map[label]])
                tf = tarf.next()

    def __getitem__(self, idx):
        return self.docs[idx], self.labels[idx]

    def __len__(self):
        return len(self.docs)

imdb_train = IMDBData(imdb_path, 'train')
len(imdb_train)
```

Load the IMDB dataset into memory as an iterable object, then build the train and test datasets from it with `mindspore.dataset.GeneratorDataset`:

```python
import mindspore.dataset as ds

def load_imdb(imdb_path):
    # Training set: shuffled, limited to 10,000 samples
    imdb_train = ds.GeneratorDataset(IMDBData(imdb_path, "train"), column_names=["text", "label"], shuffle=True, num_samples=10000)
    imdb_test = ds.GeneratorDataset(IMDBData(imdb_path, "test"), column_names=["text", "label"], shuffle=False)
    return imdb_train, imdb_test

imdb_train, imdb_test = load_imdb(imdb_path)
imdb_train
```

Loading Pre-trained Word Vectors

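Pre-trained word vectors are numerical representations of words, used through table lookup. Each input word is mapped to its index in the vocabulary, and that index retrieves the corresponding vector. So before building the model, we must construct the vocabulary and the word-vector matrix that the Embedding layer needs. We use GloVe (Global Vectors for Word Representation), a classic set of pre-trained word vectors. Its data format is as follows: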

| Word | Vector |
| --- | --- |
| the | 0.418 0.24968 -0.41242 0.1217 0.34527 -0.044457 -0.49688 -0.17862 -0.00066023 ... |
| , | 0.013441 0.23682 -0.16899 0.40951 0.63812 0.47709 -0.42852 -0.55641 -0.364 ... |
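Each line of the GloVe text file holds a word followed by its vector components, separated by spaces. Below is a minimal sketch of parsing one such line. The helper `parse_glove_line` is hypothetical. For brevity it uses only the first four values of the `the` row from the table; the real `glove.6B.100d.txt` has 100 values per line.

```python
import numpy as np

def parse_glove_line(line: str):
    """Split one GloVe line into (word, float32 vector). Hypothetical helper for illustration."""
    # The first whitespace-separated field is the token; the rest are the vector components.
    word, rest = line.rstrip("\n").split(maxsplit=1)
    vector = np.array(rest.split(), dtype=np.float32)
    return word, vector

# Truncated example line: only 4 of the 100 dimensions
word, vec = parse_glove_line("the 0.418 0.24968 -0.41242 0.1217\n")
print(word, vec.shape)  # the (4,)
```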

Code implementation:

```python
import zipfile
import numpy as np

def load_glove(glove_path):
    glove_100d_path = os.path.join(cache_dir, 'glove.6B.100d.txt')
    # Extract the archive on first use
    if not os.path.exists(glove_100d_path):
        with zipfile.ZipFile(glove_path) as glove_zip:
            glove_zip.extractall(cache_dir)

    embeddings = []
    tokens = []
    with open(glove_100d_path, encoding='utf-8') as gf:
        for glove in gf:
            # Each line: a word followed by 100 space-separated floats
            word, embedding = glove.split(maxsplit=1)
            tokens.append(word)
            embeddings.append(np.array(embedding.split(), dtype=np.float32))
    # Add embeddings for the two special tokens <unk> (random) and <pad> (all zeros)
    embeddings.append(np.random.rand(100))
    embeddings.append(np.zeros((100,), np.float32))

    # special_first=False places <unk> and <pad> after the GloVe words, matching the row order above
    vocab = ds.text.Vocab.from_list(tokens, special_tokens=["<unk>", "<pad>"], special_first=False)
    embeddings = np.array(embeddings).astype(np.float32)
    return vocab, embeddings
```

Download the GloVe word vectors, then load them to build the vocabulary and the embedding weight matrix.

```python
glove_path = download('glove.6B.zip', 'https://mindspore-website.obs.myhuaweicloud.com/notebook/datasets/glove.6B.zip')
vocab, embeddings = load_glove(glove_path)
len(vocab.vocab())
```

Use the vocabulary to convert `the` into its index id, then look up the corresponding vector in the embedding matrix:

```python
idx = vocab.tokens_to_ids('the')
embedding = embeddings[idx]
idx, embedding
```

Dataset Preprocessing

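The preprocessing consists of two steps:

1. Use the Vocab to convert every token into its index id.
2. Bring every text sequence to the same length (500 tokens here). Shorter sequences are padded with `<pad>`, and longer ones are truncated.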
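You can mimic the effect of the two steps on a single review in plain Python. This is a sketch with a hypothetical toy vocabulary and a hypothetical `encode` helper, not MindSpore's implementation of `Lookup` and `PadEnd`:

```python
def encode(tokens, vocab, max_len, unk="<unk>", pad="<pad>"):
    """Map tokens to ids, then pad with <pad> or truncate to exactly max_len (hypothetical helper)."""
    unk_id, pad_id = vocab[unk], vocab[pad]
    ids = [vocab.get(t, unk_id) for t in tokens]  # step 1: token -> index id, unknown -> <unk>
    ids = ids[:max_len]                           # step 2a: truncate long sequences
    return ids + [pad_id] * (max_len - len(ids))  # step 2b: pad short sequences

# Toy vocabulary (hypothetical); special tokens come last, as with special_first=False
toy_vocab = {"this": 0, "film": 1, "is": 2, "great": 3, "<unk>": 4, "<pad>": 5}
print(encode(["this", "film", "is", "awesome"], toy_vocab, max_len=6))  # [0, 1, 2, 4, 5, 5]
print(encode(["this", "film", "is", "great"], toy_vocab, max_len=3))    # [0, 1, 2]
```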

Code implementation:

```python
import mindspore as ms

# Map tokens to ids; tokens missing from the vocabulary become <unk>
lookup_op = ds.text.Lookup(vocab, unknown_token='<unk>')
# Pad (or truncate) every sequence to length 500 using the <pad> id
pad_op = ds.transforms.PadEnd([500], pad_value=vocab.tokens_to_ids('<pad>'))
# BCEWithLogitsLoss expects float labels
type_cast_op = ds.transforms.TypeCast(ms.float32)

imdb_train = imdb_train.map(operations=[lookup_op, pad_op], input_columns=['text'])
imdb_train = imdb_train.map(operations=[type_cast_op], input_columns=['label'])

imdb_test = imdb_test.map(operations=[lookup_op, pad_op], input_columns=['text'])
imdb_test = imdb_test.map(operations=[type_cast_op], input_columns=['label'])

# Split the training data 70/30 into training and validation sets
imdb_train, imdb_valid = imdb_train.split([0.7, 0.3])

imdb_train = imdb_train.batch(64, drop_remainder=True)
imdb_valid = imdb_valid.batch(64, drop_remainder=True)
```

Model Construction

Embedding

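The Embedding layer, also called an EmbeddingLookup layer, uses each index id to look up the corresponding row (vector) of its weight matrix. When the input is a sequence of index ids, it returns a matrix with one vector per id, so the output has the same length as the input sequence.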
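In terms of what it computes, the lookup is plain row indexing into the weight matrix. The NumPy sketch below uses a small made-up table. `nn.Embedding` additionally keeps the table as a trainable parameter.

```python
import numpy as np

# Made-up embedding table: vocab_size=5, embedding_dim=3
table = np.arange(15, dtype=np.float32).reshape(5, 3)

# A sequence of index ids (batch of 1, length 4)
ids = np.array([[2, 0, 2, 4]])

# Embedding lookup: each id selects one row of the table
embedded = table[ids]
print(embedded.shape)  # (1, 4, 3)
print(embedded[0, 0])  # [6. 7. 8.]
```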

RNN

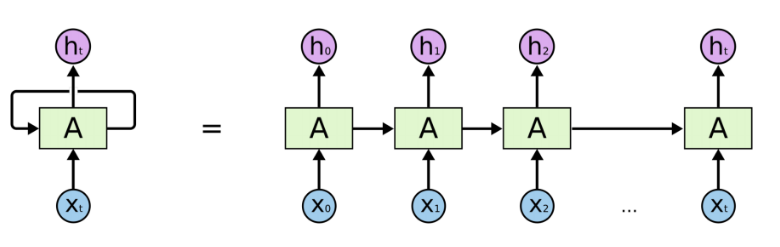

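A recurrent neural network (RNN) is a class of neural networks that takes sequence data as input. It recurses along the direction in which the sequence evolves, and all of its nodes (recurrent units) are connected in a chain.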

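The recurrent structure of the RNN matches the sequential nature of natural language text, since a sentence is a sequence of words. For this reason, RNNs have been widely used in natural language processing research.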
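The recursion takes only a few lines of NumPy to write out. This sketch assumes the classic Elman-style update with a tanh activation (the text does not fix a particular cell), random weights, and no batching. The point it illustrates is that the same weights are reused at every step along the sequence.

```python
import numpy as np

def rnn_forward(xs, W_xh, W_hh, b_h):
    """Vanilla RNN: h_t = tanh(W_xh x_t + W_hh h_{t-1} + b_h), starting from h_0 = 0."""
    h = np.zeros(W_hh.shape[0])
    hidden_states = []
    for x_t in xs:  # walk along the sequence direction
        h = np.tanh(W_xh @ x_t + W_hh @ h + b_h)  # same weights at every time step
        hidden_states.append(h)
    return np.stack(hidden_states)  # shape: (seq_len, hidden_dim)

rng = np.random.default_rng(0)
seq_len, input_dim, hidden_dim = 5, 3, 4
xs = rng.normal(size=(seq_len, input_dim))
W_xh = rng.normal(size=(hidden_dim, input_dim)) * 0.5
W_hh = rng.normal(size=(hidden_dim, hidden_dim)) * 0.5
b_h = np.zeros(hidden_dim)

hs = rnn_forward(xs, W_xh, W_hh, b_h)
print(hs.shape)  # (5, 4)
```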

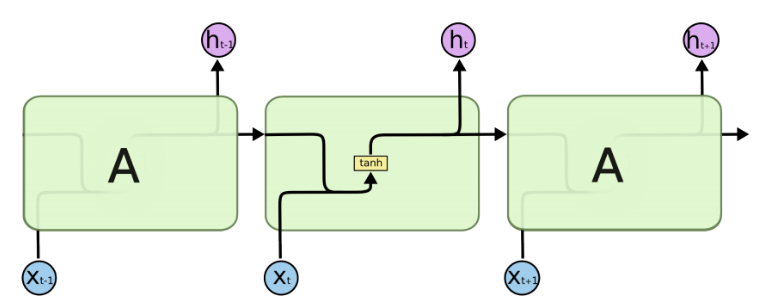

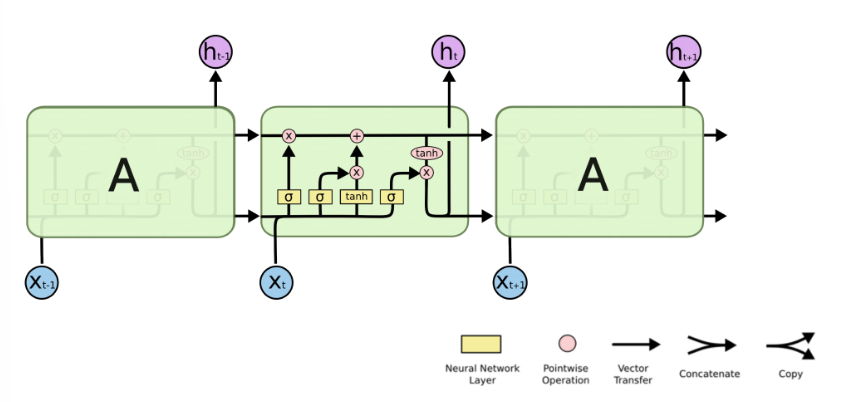

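The single RNN cell has a simple structure, which leads to the vanishing gradient problem. The same weights and squashing activation are applied at every time step, so gradients shrink as they propagate back through a long sequence. In practice, by the end of a long sequence the network has largely lost the information from its beginning. LSTM (Long Short-Term Memory) was proposed to overcome this problem. It uses a gating mechanism to control which information is kept and which is discarded at each recurrent step.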
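A toy calculation makes the effect concrete. Take a scalar RNN h_t = tanh(w·h_{t-1} + x_t). By the chain rule, ∂h_T/∂h_0 is a product of T factors of the form w·(1 − h_t²). With |w| < 1, every factor has magnitude below 1, so the product shrinks at least geometrically with T. The sketch below uses made-up inputs and an assumed w = 0.9.

```python
import numpy as np

def grad_last_wrt_first(w, xs):
    """For a scalar RNN h_t = tanh(w*h_{t-1} + x_t) with h_0 = 0, return dh_T/dh_0 via the chain rule."""
    h, grad = 0.0, 1.0
    for x_t in xs:
        h = np.tanh(w * h + x_t)
        grad *= w * (1.0 - h ** 2)  # dh_t/dh_{t-1} = w * tanh'(pre-activation) = w * (1 - h_t^2)
    return grad

rng = np.random.default_rng(0)
xs = rng.normal(size=100)
for T in (5, 20, 100):
    print(T, abs(grad_last_wrt_first(0.9, xs[:T])))
```

Each factor is bounded by 0.9 in magnitude, so the gradient after 100 steps is at most 0.9^100 (about 2.7e-5) of its initial scale.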

The corresponding LSTM formulas:
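The equations below are a standard formulation. Here i_t, f_t and o_t are the input, forget and output gates, \tilde{c}_t is the candidate cell state, c_t the cell state, h_t the hidden state, σ the sigmoid function and ⊙ element-wise multiplication. The notation follows the form commonly used in framework documentation, such as the `nn.LSTM` API reference.

```latex
\begin{aligned}
i_t &= \sigma(W_{ix} x_t + b_{ix} + W_{ih} h_{t-1} + b_{ih}) \\
f_t &= \sigma(W_{fx} x_t + b_{fx} + W_{fh} h_{t-1} + b_{fh}) \\
\tilde{c}_t &= \tanh(W_{cx} x_t + b_{cx} + W_{ch} h_{t-1} + b_{ch}) \\
o_t &= \sigma(W_{ox} x_t + b_{ox} + W_{oh} h_{t-1} + b_{oh}) \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
```

The cell state c_t is updated additively. When the forget gate f_t stays close to 1, information and gradients can pass through many steps without being repeatedly squashed.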

Dense

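After the LSTM encodes the sentence into a feature vector, the vector goes through a fully connected (Dense) layer. This layer maps the feature dimension to 1, the output size needed for binary classification. The Dense layer's output (a logit) is the model's prediction.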
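The NumPy sketch below walks through the shapes in this step, mirroring `construct` in the model code. It assumes a PyTorch-style layout for the final hidden states of a 2-layer bidirectional LSTM, with shape (num_layers * num_directions, batch, hidden_dim). Under that layout, the last two slices are the top layer's forward and backward states. All sizes and weights are made up.

```python
import numpy as np

num_layers, num_directions, batch, hidden_dim, output_dim = 2, 2, 3, 4, 1
rng = np.random.default_rng(0)

# Final hidden states, assumed shape: (num_layers * num_directions, batch, hidden_dim)
h_n = rng.normal(size=(num_layers * num_directions, batch, hidden_dim))

# Top layer: forward state h_n[-2] and backward state h_n[-1], concatenated per sentence
sentence_feature = np.concatenate([h_n[-2], h_n[-1]], axis=1)  # (batch, 2 * hidden_dim)

# Dense layer: 2 * hidden_dim features -> one logit per sentence
W = rng.normal(size=(2 * hidden_dim, output_dim))
b = np.zeros(output_dim)
logits = sentence_feature @ W + b
print(sentence_feature.shape, logits.shape)  # (3, 8) (3, 1)
```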

```python
import math
import mindspore as ms
import mindspore.nn as nn
import mindspore.ops as ops
from mindspore.common.initializer import Uniform, HeUniform

class RNN(nn.Cell):
    def __init__(self, embeddings, hidden_dim, output_dim, n_layers,
                 bidirectional, pad_idx):
        super().__init__()
        vocab_size, embedding_dim = embeddings.shape
        # Embedding layer initialized with the pre-trained GloVe matrix
        self.embedding = nn.Embedding(vocab_size, embedding_dim, embedding_table=ms.Tensor(embeddings), padding_idx=pad_idx)
        self.rnn = nn.LSTM(embedding_dim,
                           hidden_dim,
                           num_layers=n_layers,
                           bidirectional=bidirectional,
                           batch_first=True)
        weight_init = HeUniform(math.sqrt(5))
        bias_init = Uniform(1 / math.sqrt(hidden_dim * 2))
        # Input size hidden_dim * 2: forward and backward final states are concatenated (assumes bidirectional=True)
        self.fc = nn.Dense(hidden_dim * 2, output_dim, weight_init=weight_init, bias_init=bias_init)

    def construct(self, inputs):
        embedded = self.embedding(inputs)
        _, (hidden, _) = self.rnn(embedded)
        # hidden[-2] / hidden[-1]: final forward / backward states of the top LSTM layer
        hidden = ops.concat((hidden[-2, :, :], hidden[-1, :, :]), axis=1)
        output = self.fc(hidden)
        return output
```

Loss Function and Optimizer

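We use the binary cross-entropy loss, in its logits form (`nn.BCEWithLogitsLoss`), because the model outputs unnormalized scores.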
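`BCEWithLogitsLoss` takes the raw output of the Dense layer (a logit) rather than a probability, and applies the sigmoid internally. Below is a NumPy sketch of the batch mean it computes in the unweighted `reduction='mean'` case. The sketch uses the usual numerically stable rewrite, and `bce_with_logits` is a hypothetical helper name.

```python
import numpy as np

def bce_with_logits(logits, labels):
    """Mean binary cross-entropy computed directly from logits (numerically stable form)."""
    x = np.asarray(logits, dtype=np.float64)
    y = np.asarray(labels, dtype=np.float64)
    # Equals -[y*log(sigmoid(x)) + (1-y)*log(1-sigmoid(x))] without overflowing exp for large |x|
    per_example = np.maximum(x, 0) - x * y + np.log1p(np.exp(-np.abs(x)))
    return per_example.mean()

logits = np.array([2.0, -1.0, 0.0])
labels = np.array([1.0, 0.0, 1.0])
print(bce_with_logits(logits, labels))
```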

```python
hidden_size = 256
output_size = 1
num_layers = 2
bidirectional = True
lr = 0.001
pad_idx = vocab.tokens_to_ids('<pad>')

model = RNN(embeddings, hidden_size, output_size, num_layers, bidirectional, pad_idx)
# Binary cross-entropy on raw logits (the sigmoid is applied inside the loss)
loss_fn = nn.BCEWithLogitsLoss(reduction='mean')
optimizer = nn.Adam(model.trainable_params(), learning_rate=lr)
```

Training Logic

1. Read one batch of data.
2. Feed it into the network: run the forward pass and backpropagation, then update the weights.
3. Return the loss.
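Without the framework, the three steps amount to the loop below. This NumPy sketch uses a hypothetical logistic-regression model and plain SGD, only to show the forward pass, gradient and weight update. The tutorial itself uses `ms.value_and_grad` and Adam.

```python
import numpy as np

def train_step(w, b, x, y, lr=0.1):
    """One step on a batch: forward pass, loss, gradients, plain SGD update (toy model)."""
    logits = x @ w + b
    p = 1.0 / (1.0 + np.exp(-logits))
    loss = -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))
    # Gradient of the mean BCE with respect to the logits is (p - y) / batch_size
    g = (p - y) / len(y)
    w = w - lr * (x.T @ g)
    b = b - lr * g.sum()
    return w, b, loss

rng = np.random.default_rng(0)
x = rng.normal(size=(64, 2))                     # one batch of 64 made-up examples
y = (x[:, 0] + x[:, 1] > 0).astype(np.float64)   # linearly separable toy labels
w, b = np.zeros(2), 0.0
losses = []
for _ in range(100):
    w, b, loss = train_step(w, b, x, y)
    losses.append(loss)
print(losses[0], losses[-1])
```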

Code implementation:

```python
def forward_fn(data, label):
    logits = model(data)
    loss = loss_fn(logits, label)
    return loss

# Returns both the loss and the gradients with respect to the optimizer's parameters
grad_fn = ms.value_and_grad(forward_fn, None, optimizer.parameters)

def train_step(data, label):
    loss, grads = grad_fn(data, label)
    optimizer(grads)
    return loss

def train_one_epoch(model, train_dataset, epoch=0):
    model.set_train()
    total = train_dataset.get_dataset_size()
    loss_total = 0
    step_total = 0
    with tqdm(total=total) as t:
        t.set_description('Epoch %i' % epoch)
        for i in train_dataset.create_tuple_iterator():
            loss = train_step(*i)
            loss_total += loss.asnumpy()
            step_total += 1
            # Show the running average loss of the epoch
            t.set_postfix(loss=loss_total/step_total)
            t.update(1)
```

Evaluation Metric and Logic

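Once the training logic is in place, the model needs to be evaluated. We compare its predictions with the true labels of the test set and compute the accuracy. IMDB sentiment classification is a binary problem, so the model's output logit is passed through a sigmoid and rounded to get a class label (0 or 1). That label is then checked against the true label.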
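Rounding sigmoid(logit) gives 1 exactly when the logit is greater than 0, so you can also threshold the logit directly. Here is a NumPy sketch of that equivalent check with made-up values. `accuracy_from_logits` is a hypothetical helper name.

```python
import numpy as np

def accuracy_from_logits(logits, labels):
    """Fraction of correct predictions; logit > 0 <=> sigmoid(logit) > 0.5 <=> class 1."""
    preds = (np.asarray(logits).reshape(-1) > 0).astype(np.float32)
    return float((preds == np.asarray(labels).reshape(-1)).mean())

logits = np.array([[2.3], [-0.7], [0.4], [-3.1]])
labels = np.array([[1.0], [0.0], [0.0], [0.0]])
print(accuracy_from_logits(logits, labels))  # 0.75
```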

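```python
def binary_accuracy(preds, y):
    """
    Compute the accuracy of one batch.
    """
    # Convert logits to probabilities with sigmoid, then round to 0/1
    rounded_preds = np.around(ops.sigmoid(preds).asnumpy())
    correct = (rounded_preds == y).astype(np.float32)
    acc = correct.sum() / len(correct)
    return acc
```

With the accuracy function in place, we design the evaluation logic in the same way as the training logic. It has the following steps: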

1. Read one batch of data.
2. Feed it into the network and run only the forward pass to get the predictions.
3. Compute the accuracy.

```python
def evaluate(model, test_dataset, criterion, epoch=0):
    total = test_dataset.get_dataset_size()
    epoch_loss = 0
    epoch_acc = 0
    step_total = 0
    model.set_train(False)

    with tqdm(total=total) as t:
        t.set_description('Epoch %i' % epoch)
        for data, label in test_dataset.create_tuple_iterator():
            predictions = model(data)
            loss = criterion(predictions, label)
            epoch_loss += loss.asnumpy()
            # Pass NumPy labels so binary_accuracy compares two NumPy arrays
            acc = binary_accuracy(predictions, label.asnumpy())
            epoch_acc += acc
            step_total += 1
            t.set_postfix(loss=epoch_loss/step_total, acc=epoch_acc/step_total)
            t.update(1)

    return epoch_loss / total
```

Model Training and Saving

```python
num_epochs = 2
best_valid_loss = float('inf')
ckpt_file_name = os.path.join(cache_dir, 'sentiment-analysis.ckpt')

for epoch in range(num_epochs):
    train_one_epoch(model, imdb_train, epoch)
    valid_loss = evaluate(model, imdb_valid, loss_fn, epoch)
    # Keep only the checkpoint with the lowest validation loss
    if valid_loss < best_valid_loss:
        best_valid_loss = valid_loss
        ms.save_checkpoint(model, ckpt_file_name)
```

The loss decreases from epoch to epoch, and the accuracy on the validation set gradually improves.

Model Loading and Testing

```python
# Restore the best checkpoint saved during training
param_dict = ms.load_checkpoint(ckpt_file_name)
ms.load_param_into_net(model, param_dict)

imdb_test = imdb_test.batch(64)
evaluate(model, imdb_test, loss_fn)
```

Custom Input Test

```python
score_map = {
    1: "Positive",
    0: "Negative"
}

def predict_sentiment(model, vocab, sentence):
    model.set_train(False)
    tokenized = sentence.lower().split()
    indexed = vocab.tokens_to_ids(tokenized)
    tensor = ms.Tensor(indexed, ms.int32)
    # Add a batch dimension: (seq_len,) -> (1, seq_len)
    tensor = tensor.expand_dims(0)
    prediction = model(tensor)
    # sigmoid -> probability, round -> 0/1 label
    return score_map[int(np.round(ops.sigmoid(prediction).asnumpy()).item())]

predict_sentiment(model, vocab, "This film is terrible")  # 'Positive'

predict_sentiment(model, vocab, "This film is great")  # 'Positive'
```

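Because the model was trained for only a few epochs, its predictions still deviate from the true sentiment. Here, "This film is terrible" is classified as Positive.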

Summary

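As an improved variant of the RNN, the LSTM plays an important role in natural language processing tasks such as sentiment classification.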
