Code reference

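- Objectives

Become familiar with the architecture and working principles of recurrent neural networks (RNNs); learn basic concepts of language datasets, such as vocabularies and data alignment (truncation and padding); master basic preprocessing of language datasets; deepen your understanding of classification problems; and gain further practice with deep learning in PaddlePaddle.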

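- Content

This experiment uses the IMDB dataset, which contains 50,000 strongly polarized reviews: 25,000 for training and 25,000 for testing. Each review is labeled 0 (negative) or 1 (positive).

The task is to build a recurrent neural network with PaddlePaddle and train it on this dataset. After training, save the model by storing its parameters to a file. To evaluate the network, load the parameters from that file first and then evaluate the model.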

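- Environment

1. We recommend Baidu's AI Studio platform for the following two experiments. It provides free compute and already includes paddle and the other libraries we need.

https://aistudio.baidu.com/aistudio/index

On the site: click Projects -> Create Project -> Notebook -> select paddle 2.0.2 -> Create. A GPU environment is recommended for this experiment; otherwise training is very slow.

2. To run the experiment on your own computer, install PaddlePaddle by following the official documentation: https://www.paddlepaddle.org.cn/install/quick. Version 2.0.2 of paddlepaddle is recommended.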

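III. Procedure

1. Log in to the PaddlePaddle AI Studio platform: https://aistudio.baidu.com/index

2. After logging in, create a project.

3. Select Notebook -> + Add dataset -> choose the IMDB sentiment analysis dataset -> select AI Studio Classic -> select paddle 2.0.2 -> Create

4. Start the environment -> select GPU -> Confirm -> Enter

5. Put each of the code segments below in its own cell.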

I. Environment setup

import paddle
import paddle.nn as nn
import numpy as np
import matplotlib.pyplot as plt

print(paddle.__version__)  # show the installed version

# Choose CPU or GPU by passing the device name to paddle.set_device().
# device = paddle.set_device('gpu')

II. Data preparation

# Load the training data
train_dataset = paddle.text.datasets.Imdb(mode='train')
# Load the test data
test_dataset = paddle.text.datasets.Imdb(mode='test')

word_dict = train_dataset.word_idx  # the dataset's vocabulary (word -> id)

# add a pad token to the dict for later padding the sequence
word_dict['<pad>'] = len(word_dict)

# Print the first and last five entries; keys may be bytes or str
for k in list(word_dict)[:5]:
    print("{}:{}".format(k if isinstance(k, str) else k.decode('ASCII'), word_dict[k]))
print("...")
for k in list(word_dict)[-5:]:
    print("{}:{}".format(k if isinstance(k, str) else k.decode('ASCII'), word_dict[k]))
print("{} words in total".format(len(word_dict)))
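To make the idea of a vocabulary concrete, here is a minimal pure-Python sketch of how a word-to-id table with a trailing `<pad>` entry could be built from raw text. It is an illustration only, not how `paddle.text.datasets.Imdb` builds `word_idx`; the helper names `build_vocab` and `encode`, the toy corpus, and the whitespace tokenization are all assumptions made for this example.

```python
from collections import Counter

def build_vocab(texts, min_freq=1):
    """Map each token to an integer id, most frequent tokens first (hypothetical helper)."""
    counts = Counter(tok for text in texts for tok in text.lower().split())
    # Sort by descending frequency, then alphabetically so the order is stable.
    ordered = sorted((w for w, c in counts.items() if c >= min_freq),
                     key=lambda w: (-counts[w], w))
    vocab = {w: i for i, w in enumerate(ordered)}
    vocab["<unk>"] = len(vocab)  # id for words that are not in the vocabulary
    vocab["<pad>"] = len(vocab)  # padding id, appended last as in the section above
    return vocab

def encode(text, vocab):
    """Turn a sentence into a list of ids, falling back to <unk>."""
    return [vocab.get(tok, vocab["<unk>"]) for tok in text.lower().split()]

toy_corpus = ["a great movie", "a boring movie", "great acting"]
toy_vocab = build_vocab(toy_corpus)
toy_ids = encode("a great film", toy_vocab)  # "film" is unseen, so it maps to <unk>
print(toy_vocab)
print(toy_ids)
```

Appending `<pad>` after all real words means its id equals the vocabulary size before the append, which is the same rule the section uses with `word_dict['<pad>'] = len(word_dict)`.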

III. Parameter settings

Set the vocabulary size, the embedding size, batch_size, and so on.

vocab_size = len(word_dict) + 1
print(vocab_size)
emb_size = 256
seq_len = 200
batch_size = 32
epochs = 2
pad_id = word_dict['<pad>']

classes = ['negative', 'positive']

# Convert a list of word ids back into a sentence
def ids_to_str(ids):
    # print(ids)
    vocab_words = list(word_dict)  # position in this list == word id
    words = []
    for k in ids:
        w = vocab_words[k]
        words.append(w if isinstance(w, str) else w.decode('ASCII'))
    return " ".join(words)

# docs holds the samples as lists of word ids and labels holds their labels.
# Print the first sample to get a first impression of the data.
sent = train_dataset.docs[0]
label = train_dataset.labels[0]  # same index as the sentence above
print('sentence list id is:', sent)
print('sentence label id is:', label)
print('--------------------------')
print('sentence list is: ', ids_to_str(sent))
print('sentence label is: ', classes[label])

IV. Aligning the data

In text data, every sentence has a different length. To simplify the later neural network computation, a common approach is to bring all samples in the dataset to the same length: longer samples are truncated, and shorter ones are padded with a special token.

# Truncate or pad every sample to exactly seq_len ids
def create_padded_dataset(dataset):
    padded_sents = []
    labels = []
    for batch_id, data in enumerate(dataset):
        sent, label = data[0], data[1]
        # A negative repeat count yields an empty list, so long samples get no padding
        padded_sent = np.concatenate([sent[:seq_len], [pad_id] * (seq_len - len(sent))]).astype('int32')
        padded_sents.append(padded_sent)
        labels.append(label)
    return np.array(padded_sents), np.array(labels)

# Build the padded arrays for the train and test sets
train_sents, train_labels = create_padded_dataset(train_dataset)
test_sents, test_labels = create_padded_dataset(test_dataset)

# Check the array shapes and look at a few samples
print(train_sents.shape)
print(train_labels.shape)
print(test_sents.shape)
print(test_labels.shape)

for sent in train_sents[:3]:
    print(ids_to_str(sent))
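The truncate-and-pad rule described in this section can be checked on toy input without loading the dataset. The sketch below uses plain Python lists; the function name `pad_or_truncate` and the sample values are made up for illustration.

```python
def pad_or_truncate(ids, seq_len, pad_id):
    """Return exactly seq_len ids: cut long inputs, right-pad short ones."""
    clipped = list(ids[:seq_len])
    return clipped + [pad_id] * (seq_len - len(clipped))

short = pad_or_truncate([5, 8, 2], seq_len=5, pad_id=0)
long_ = pad_or_truncate([5, 8, 2, 9, 4, 7, 1], seq_len=5, pad_id=0)
print(short)  # padded on the right
print(long_)  # cut after the fifth id
```

Padding on the right and keeping the first `seq_len` ids matches what `create_padded_dataset` does; other conventions (left padding, keeping the tail of a long review) are equally possible.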

V. Loading with Dataset and DataLoader

Wrap the prepared training and test sets in a Dataset and a DataLoader to complete data loading.

class IMDBDataset(paddle.io.Dataset):
    '''
    Wraps the padded data by subclassing paddle.io.Dataset
    '''
    def __init__(self, sents, labels):
        self.sents = sents
        self.labels = labels

    def __getitem__(self, index):
        data = self.sents[index]
        label = self.labels[index]
        return data, label

    def __len__(self):
        return len(self.sents)

train_dataset = IMDBDataset(train_sents, train_labels)
test_dataset = IMDBDataset(test_sents, test_labels)

train_loader = paddle.io.DataLoader(train_dataset, return_list=True,
                                    shuffle=True, batch_size=batch_size, drop_last=True)
# Shuffling is not needed for evaluation; drop_last=True discards the final partial batch
test_loader = paddle.io.DataLoader(test_dataset, return_list=True,
                                   shuffle=False, batch_size=batch_size, drop_last=True)
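To show what the `batch_size`, `shuffle`, and `drop_last` arguments do, here is a small pure-Python sketch of a map-style dataset and a batch iterator. It only mimics the behavior of those three arguments and is not how `paddle.io.DataLoader` is implemented; `ToyDataset` and `iterate_batches` are hypothetical names.

```python
import random

class ToyDataset:
    """Map-style dataset: supports indexing and len(), like IMDBDataset."""
    def __init__(self, sents, labels):
        self.sents, self.labels = sents, labels

    def __getitem__(self, index):
        return self.sents[index], self.labels[index]

    def __len__(self):
        return len(self.sents)

def iterate_batches(dataset, batch_size, shuffle=False, drop_last=False, seed=0):
    """Yield lists of (sent, label) pairs, batch_size items at a time."""
    indices = list(range(len(dataset)))
    if shuffle:
        random.Random(seed).shuffle(indices)  # visit the samples in random order
    for start in range(0, len(indices), batch_size):
        chunk = indices[start:start + batch_size]
        if drop_last and len(chunk) < batch_size:
            break  # discard the final, incomplete batch
        yield [dataset[i] for i in chunk]

toy = ToyDataset([[i, i + 1] for i in range(10)], [i % 2 for i in range(10)])
kept = list(iterate_batches(toy, batch_size=4, drop_last=True))
full = list(iterate_batches(toy, batch_size=4, drop_last=False))
print(len(kept), len(full), len(full[-1]))
```

With the settings in this section, 25,000 samples and a batch size of 32 give 781 full batches per epoch, and `drop_last=True` leaves the remaining 8 samples out.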

VI. Model configuration

import paddle.nn as nn
import paddle

# Define the RNN network
class MyRNN(paddle.nn.Layer):
    def __init__(self):
        super(MyRNN, self).__init__()
        self.embedding = nn.Embedding(vocab_size, 256)
        # input size, hidden size, number of layers
        self.rnn = nn.SimpleRNN(256, 256, num_layers=2, direction='forward', dropout=0.5)
        # 256*2 because the final hidden states of both layers are concatenated below
        self.linear = nn.Linear(in_features=256*2, out_features=2)
        self.dropout = nn.Dropout(0.5)

    def forward(self, inputs):
        emb = self.dropout(self.embedding(inputs))
        # output has shape [batch_size, seq_len, num_directions * hidden_size]
        # hidden has shape [num_layers * num_directions, batch_size, hidden_size]
        output, hidden = self.rnn(emb)
        # The RNN is unidirectional with two layers, so hidden[-2] and hidden[-1]
        # are the final states of layer 1 and layer 2; concatenate them
        hidden = paddle.concat((hidden[-2,:,:], hidden[-1,:,:]), axis = 1)
        # hidden now has shape [batch_size, hidden_size * 2]
        hidden = self.dropout(hidden)
        return self.linear(hidden)
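As a reminder of what a simple (Elman) recurrent layer computes at each time step, the following pure-Python sketch runs a tiny single-layer RNN over a short sequence. It is a simplified illustration: it uses one bias vector, tanh activation, a zero initial state, and hand-picked toy weights, all of which are assumptions for this example rather than details taken from `nn.SimpleRNN`.

```python
import math

def rnn_step(x, h_prev, W_ih, W_hh, b):
    """One step: h_t = tanh(W_ih @ x_t + W_hh @ h_{t-1} + b)."""
    h = []
    for j in range(len(b)):
        total = b[j]
        total += sum(W_ih[j][i] * x[i] for i in range(len(x)))
        total += sum(W_hh[j][k] * h_prev[k] for k in range(len(h_prev)))
        h.append(math.tanh(total))
    return h

def rnn_forward(xs, W_ih, W_hh, b):
    """Run the whole sequence; return every hidden state and the last one."""
    h = [0.0] * len(b)  # zero initial hidden state
    outputs = []
    for x in xs:
        h = rnn_step(x, h, W_ih, W_hh, b)
        outputs.append(h)
    return outputs, h

# Toy sequence of 3 time steps, input size 2, hidden size 2
xs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
W_ih = [[0.5, -0.5], [0.1, 0.2]]
W_hh = [[0.1, 0.0], [0.0, 0.1]]
b = [0.0, 0.0]
outputs, h_last = rnn_forward(xs, W_ih, W_hh, b)
print(len(outputs), h_last)
```

`outputs` plays the role of the per-step `output` tensor in `MyRNN.forward`, and `h_last` plays the role of one layer's entry in `hidden`, which is what the classifier head consumes.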

VII. Model training

# Plotting helper
def draw_process(title, color, iters, data, label):
    plt.title(title, fontsize=24)
    plt.xlabel("iter", fontsize=20)
    plt.ylabel(label, fontsize=20)
    plt.plot(iters, data, color=color, label=label)
    plt.legend()
    plt.grid()
    plt.show()

# Training loop: train, evaluate after every epoch, save the parameters
def train(model):
    model.train()
    opt = paddle.optimizer.Adam(learning_rate=0.001, parameters=model.parameters())
    steps = 0
    Iters, total_loss, total_acc = [], [], []

    for epoch in range(epochs):
        for batch_id, data in enumerate(train_loader):
            steps += 1
            sent = data[0]
            label = data[1]

            logits = model(sent)
            loss = paddle.nn.functional.cross_entropy(logits, label)
            acc = paddle.metric.accuracy(logits, label)

            if batch_id % 500 == 0:  # record and print the result every 500 batches
                Iters.append(steps)
                total_loss.append(loss.numpy().item())
                total_acc.append(acc.numpy().item())
                print("epoch: {}, batch_id: {}, loss is: {}".format(epoch, batch_id, loss.numpy().item()))

            loss.backward()
            opt.step()
            opt.clear_grad()

        # evaluate model after one epoch
        model.eval()
        accuracies = []
        losses = []
        for batch_id, data in enumerate(test_loader):
            sent = data[0]
            label = data[1]

            logits = model(sent)
            loss = paddle.nn.functional.cross_entropy(logits, label)
            acc = paddle.metric.accuracy(logits, label)

            accuracies.append(acc.numpy())
            losses.append(loss.numpy())

        avg_acc, avg_loss = np.mean(accuracies), np.mean(losses)
        print("[validation] accuracy: {}, loss: {}".format(avg_acc, avg_loss))
        model.train()

        # Save the parameters after each epoch: 0_model_final.pdparams, 1_model_final.pdparams, ...
        paddle.save(model.state_dict(), str(epoch) + "_model_final.pdparams")

    # Plot the recorded training curves
    draw_process("training loss", "red", Iters, total_loss, "training loss")
    draw_process("training acc", "green", Iters, total_acc, "training acc")

model = MyRNN()
train(model)
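The training loop passes raw logits to `paddle.nn.functional.cross_entropy` and `paddle.metric.accuracy`. As a rough guide to what those two numbers mean, here is a pure-Python sketch of softmax cross-entropy averaged over a batch and of top-1 accuracy. It assumes the loss applies softmax to the logits internally and averages over the batch, which is my understanding of the default behavior; the function names and toy values are invented for this example.

```python
import math

def softmax(logits):
    """Numerically stable softmax over one sample's logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    s = sum(exps)
    return [e / s for e in exps]

def cross_entropy(batch_logits, labels):
    """Mean negative log-probability of the true class."""
    losses = [-math.log(softmax(l)[y]) for l, y in zip(batch_logits, labels)]
    return sum(losses) / len(losses)

def accuracy(batch_logits, labels):
    """Fraction of samples whose highest logit is the true class."""
    hits = sum(1 for l, y in zip(batch_logits, labels) if l.index(max(l)) == y)
    return hits / len(labels)

toy_logits = [[2.0, 0.0], [0.0, 0.0], [-1.0, 1.0]]
toy_labels = [0, 1, 0]
toy_loss = cross_entropy(toy_logits, toy_labels)
toy_acc = accuracy(toy_logits, toy_labels)
print(toy_loss, toy_acc)
```

A useful reference point for this two-class task: a model that outputs equal logits for both classes has a loss of ln 2 (about 0.693), so a training loss that stays near that value means the network has not learned anything yet.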

VIII. Model evaluation

'''
Model evaluation
'''
# Load the parameters saved after the last epoch (epoch index 1 when epochs = 2)
model_state_dict = paddle.load('1_model_final.pdparams')
model = MyRNN()
model.set_state_dict(model_state_dict)
model.eval()

accuracies = []
losses = []

for batch_id, data in enumerate(test_loader):
    sent = data[0]
    label = data[1]

    logits = model(sent)
    loss = paddle.nn.functional.cross_entropy(logits, label)
    acc = paddle.metric.accuracy(logits, label)

    accuracies.append(acc.numpy())
    losses.append(loss.numpy())

avg_acc, avg_loss = np.mean(accuracies), np.mean(losses)
print("[validation] accuracy: {}, loss: {}".format(avg_acc, avg_loss))
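The evaluation code averages the per-batch accuracies with `np.mean`. That equals the per-sample accuracy only when every batch has the same size, which holds here because the loader uses `drop_last=True`. If the final partial batch were kept, a size-weighted mean would be the safer aggregate. The sketch below illustrates the difference on made-up numbers; `weighted_mean` is a hypothetical helper.

```python
def weighted_mean(values, sizes):
    """Average per-batch values, weighting each batch by its sample count."""
    total = sum(sizes)
    return sum(v * n for v, n in zip(values, sizes)) / total

# Toy example: two full batches of 4 samples and a final batch of 2
batch_acc = [0.75, 0.5, 1.0]
batch_sizes = [4, 4, 2]
plain = sum(batch_acc) / len(batch_acc)        # treats every batch equally
weighted = weighted_mean(batch_acc, batch_sizes)  # treats every sample equally
print(plain, weighted)
```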

IX. Model prediction

def ids_to_str(ids):
    vocab_words = list(word_dict)  # position in this list == word id
    words = []
    for k in ids:
        w = vocab_words[int(k)]
        words.append(w if isinstance(w, str) else w.decode('UTF-8'))
    return " ".join(words)

label_map = {0: "negative", 1: "positive"}

# Load the model
model_state_dict = paddle.load('1_model_final.pdparams')
model = MyRNN()
model.set_state_dict(model_state_dict)
model.eval()

for batch_id, data in enumerate(test_loader):
    sent = data[0]
    results = model(sent)

    predictions = []
    for logits in results.numpy():
        # Map the index of the highest-scoring class to its label
        idx = int(np.argmax(logits))
        predictions.append(label_map[idx])

    for i, pre in enumerate(predictions):
        print(' Text: {} \n Sentiment: {}'.format(ids_to_str(sent[i].numpy()), pre))
        break  # show only the first sample
    break  # use only the first batch
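The model returns two raw scores (logits) per review, and the prediction step picks the larger one. If a confidence value is also wanted, the logits can be passed through softmax first. The following pure-Python sketch shows that mapping on a made-up pair of logits; `predict_label` and `toy_label_map` are names introduced only for this example.

```python
import math

toy_label_map = {0: "negative", 1: "positive"}

def predict_label(logits):
    """Return (label, probability) for one sample's logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]  # stable softmax
    total = sum(exps)
    probs = [e / total for e in exps]
    idx = probs.index(max(probs))  # same index as the argmax of the logits
    return toy_label_map[idx], probs[idx]

toy_label, toy_confidence = predict_label([-0.3, 1.2])
print(toy_label, round(toy_confidence, 4))
```

Softmax does not change which class wins, so taking the argmax of the logits directly, as the section does, gives the same label.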

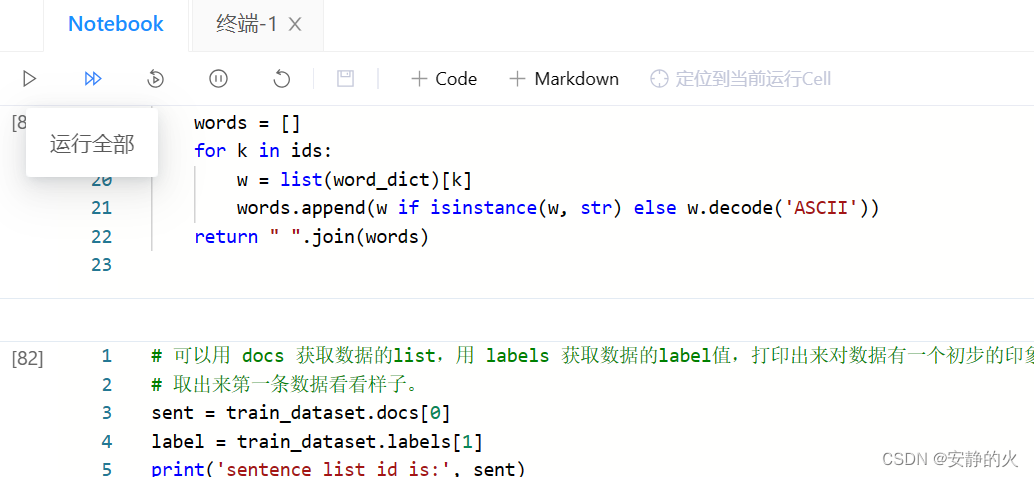

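As the screenshot shows, each code segment is placed in its own cell.

To run the code, click "Run All", or run the cells one at a time, starting the next cell only after the previous one has finished.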
