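High-resolution dense prediction enables many appealing real-world applications, such as computational photography and autonomous driving. However, its heavy computational cost makes it difficult to deploy state-of-the-art high-resolution dense prediction models on hardware devices. This article introduces EfficientViT, a family of high-resolution vision models built around a novel multi-scale linear attention. Earlier high-resolution dense prediction models rely on heavy softmax attention, hardware-inefficient large-kernel convolutions, or complicated topologies to reach good accuracy. Multi-scale linear attention instead achieves a global receptive field and multi-scale learning using only lightweight, hardware-efficient operations. As a result, EfficientViT delivers notable speedups and accuracy gains over previous state-of-the-art models on a range of hardware platforms, including mobile CPUs, edge GPUs, and cloud GPUs. After covering the main principle, the article walks step by step through adding and modifying the module code in YOLOv5. The complete modified code is provided at the end so that it can be run directly, which keeps the material approachable for beginners in YOLO-series object detection.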

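1. Principle

Paper: EfficientViT: Multi-Scale Linear Attention for High-Resolution Dense Prediction

Official code: official code repository

EfficientViT is a vision model for high-resolution dense prediction whose core component is the Multi-Scale Linear Attention module. It is designed to provide a global receptive field and multi-scale learning, two properties that matter most for accuracy in high-resolution dense prediction, while remaining efficient on hardware.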

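Main principles and innovations:

- Multi-Scale Linear Attention:
  - Global receptive field: EfficientViT replaces conventional softmax attention with ReLU linear attention. This lowers the computational complexity from quadratic in the number of tokens (and therefore in input resolution) to linear, which makes it a better fit for high-resolution images.
  - Multi-scale learning: ReLU linear attention on its own is weak at extracting local information. To compensate, EfficientViT aggregates neighboring tokens with small-kernel convolutions to produce multi-scale Q/K/V tokens, which strengthens multi-scale learning.
- Hardware efficiency:
  - A main design goal of EfficientViT is to avoid operations that run inefficiently on hardware. By replacing softmax attention with lightweight ReLU linear attention and using small-kernel convolutions for multi-scale learning, it improves computational efficiency substantially without sacrificing accuracy.
  - This design significantly reduces FLOPs (floating-point operations), and the savings translate into real latency reductions on several hardware platforms (mobile CPU, edge GPU, cloud GPU).
- Model architecture:
  - An EfficientViT model consists of multi-scale linear attention modules and FFNs (feed-forward networks) with depthwise convolution. The multi-scale linear attention captures context information, while FFN+DWConv extracts local information.
  - With this design, EfficientViT shows clear speed and accuracy gains on semantic segmentation, super-resolution, and newer tasks such as Segment Anything.
- Performance:
  - EfficientViT performs well on several high-resolution dense prediction tasks, such as semantic segmentation and super-resolution. Compared with existing state-of-the-art models, it is markedly faster on multiple hardware platforms while matching or improving accuracy. For example, on Cityscapes it reduces GPU latency by up to 13.9× relative to SegFormer, and on super-resolution it is up to 6.4× faster than Restormer.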

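Overall, EfficientViT optimizes high-resolution dense prediction by introducing a hardware-efficient multi-scale linear attention module, preserving accuracy while making the model considerably more efficient in practical deployments.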
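The linear-complexity claim above can be made concrete with a small sketch. The code below is not taken from the official repository; it is a minimal PyTorch illustration of ReLU linear attention under the usual formulation, and the function names `relu_linear_attention` and `relu_attention_quadratic` are invented for this example. Note that the code pasted in section 2.1 computes attention with softmax inside `CascadedGroupAttention`, so this sketch illustrates the mechanism described in the text rather than that code.

```python
import torch


def relu_linear_attention(q, k, v, eps=1e-6):
    """ReLU linear attention. q, k: (B, N, d); v: (B, N, d_v); returns (B, N, d_v)."""
    q, k = torch.relu(q), torch.relu(k)
    # Aggregate keys and values once; these terms do not depend on the query.
    kv = k.transpose(1, 2) @ v          # (B, d, d_v)
    k_sum = k.sum(dim=1)                # (B, d)
    # Every query reuses the aggregated terms, so the cost grows linearly with N.
    num = q @ kv                                            # (B, N, d_v)
    den = (q * k_sum.unsqueeze(1)).sum(-1, keepdim=True)    # (B, N, 1)
    return num / (den + eps)


def relu_attention_quadratic(q, k, v, eps=1e-6):
    """Reference version that builds the explicit N x N similarity matrix."""
    sim = torch.relu(q) @ torch.relu(k).transpose(1, 2)     # (B, N, N)
    return (sim @ v) / (sim.sum(-1, keepdim=True) + eps)


torch.manual_seed(0)
q, k, v = (torch.randn(2, 64, 16) for _ in range(3))
out_linear = relu_linear_attention(q, k, v)
out_quadratic = relu_attention_quadratic(q, k, v)
```

Both functions compute the same quantity; the first one only reorders the matrix products so that no N x N matrix is ever materialized, which is where the linear scaling in the number of tokens comes from.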

2. Adding EfficientViT to YOLOv5

2.1 EfficientViT code implementation

Key step one: paste the code below into \yolov5\models\common.py

# --------------------------------------------------------
# EfficientViT Model Architecture for Downstream Tasks
# Copyright (c) 2022 Microsoft
# Written by: Xinyu Liu
# --------------------------------------------------------
import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.utils.checkpoint as checkpoint
import itertools
from timm.models.layers import SqueezeExcite
import numpy as np

# Note: if this file is star-imported (`from models.common import *`), __all__ limits the
# exported names to the ones listed here; drop this line if other names must stay visible.
__all__ = ['EfficientViT_M0', 'EfficientViT_M1', 'EfficientViT_M2', 'EfficientViT_M3', 'EfficientViT_M4', 'EfficientViT_M5']

class Conv2d_BN(torch.nn.Sequential):
    def __init__(self, a, b, ks=1, stride=1, pad=0, dilation=1,
                 groups=1, bn_weight_init=1, resolution=-10000):
        super().__init__()
        self.add_module('c', torch.nn.Conv2d(
            a, b, ks, stride, pad, dilation, groups, bias=False))
        self.add_module('bn', torch.nn.BatchNorm2d(b))
        torch.nn.init.constant_(self.bn.weight, bn_weight_init)
        torch.nn.init.constant_(self.bn.bias, 0)

    @torch.no_grad()
    def switch_to_deploy(self):
        # Fold the BatchNorm statistics into a single Conv2d for inference.
        c, bn = self._modules.values()
        w = bn.weight / (bn.running_var + bn.eps)**0.5
        w = c.weight * w[:, None, None, None]
        b = bn.bias - bn.running_mean * bn.weight / \
            (bn.running_var + bn.eps)**0.5
        m = torch.nn.Conv2d(w.size(1) * self.c.groups, w.size(
            0), w.shape[2:], stride=self.c.stride, padding=self.c.padding, dilation=self.c.dilation, groups=self.c.groups)
        m.weight.data.copy_(w)
        m.bias.data.copy_(b)
        return m

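def replace_batchnorm(net):
    for child_name, child in net.named_children():
        # Conv2d_BN exposes its Conv+BN folding as `switch_to_deploy`, so check for that name;
        # otherwise its BatchNorm would be replaced by Identity without being folded.
        if hasattr(child, 'switch_to_deploy'):
            setattr(net, child_name, child.switch_to_deploy())
        elif isinstance(child, torch.nn.BatchNorm2d):
            setattr(net, child_name, torch.nn.Identity())
        else:
            replace_batchnorm(child)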

class PatchMerging(torch.nn.Module):
    def __init__(self, dim, out_dim, input_resolution):
        super().__init__()
        hid_dim = int(dim * 4)
        self.conv1 = Conv2d_BN(dim, hid_dim, 1, 1, 0, resolution=input_resolution)
        self.act = torch.nn.ReLU()
        self.conv2 = Conv2d_BN(hid_dim, hid_dim, 3, 2, 1, groups=hid_dim, resolution=input_resolution)
        self.se = SqueezeExcite(hid_dim, .25)
        self.conv3 = Conv2d_BN(hid_dim, out_dim, 1, 1, 0, resolution=input_resolution // 2)

    def forward(self, x):
        x = self.conv3(self.se(self.act(self.conv2(self.act(self.conv1(x))))))
        return x


class Residual(torch.nn.Module):
    def __init__(self, m, drop=0.):
        super().__init__()
        self.m = m
        self.drop = drop

    def forward(self, x):
        if self.training and self.drop > 0:
            return x + self.m(x) * torch.rand(x.size(0), 1, 1, 1,
                                              device=x.device).ge_(self.drop).div(1 - self.drop).detach()
        else:
            return x + self.m(x)


class FFN(torch.nn.Module):
    def __init__(self, ed, h, resolution):
        super().__init__()
        self.pw1 = Conv2d_BN(ed, h, resolution=resolution)
        self.act = torch.nn.ReLU()
        self.pw2 = Conv2d_BN(h, ed, bn_weight_init=0, resolution=resolution)

    def forward(self, x):
        x = self.pw2(self.act(self.pw1(x)))
        return x

class CascadedGroupAttention(torch.nn.Module):
    r""" Cascaded Group Attention.

    Args:
        dim (int): Number of input channels.
        key_dim (int): The dimension for query and key.
        num_heads (int): Number of attention heads.
        attn_ratio (int): Multiplier for the query dim for value dimension.
        resolution (int): Input resolution, correspond to the window size.
        kernels (List[int]): The kernel size of the dw conv on query.
    """
    def __init__(self, dim, key_dim, num_heads=8,
                 attn_ratio=4,
                 resolution=14,
                 kernels=[5, 5, 5, 5],):
        super().__init__()
        self.num_heads = num_heads
        self.scale = key_dim ** -0.5
        self.key_dim = key_dim
        self.d = int(attn_ratio * key_dim)
        self.attn_ratio = attn_ratio

        qkvs = []
        dws = []
        for i in range(num_heads):
            qkvs.append(Conv2d_BN(dim // (num_heads), self.key_dim * 2 + self.d, resolution=resolution))
            dws.append(Conv2d_BN(self.key_dim, self.key_dim, kernels[i], 1, kernels[i]//2, groups=self.key_dim, resolution=resolution))
        self.qkvs = torch.nn.ModuleList(qkvs)
        self.dws = torch.nn.ModuleList(dws)
        self.proj = torch.nn.Sequential(torch.nn.ReLU(), Conv2d_BN(
            self.d * num_heads, dim, bn_weight_init=0, resolution=resolution))

        points = list(itertools.product(range(resolution), range(resolution)))
        N = len(points)
        attention_offsets = {}
        idxs = []
        for p1 in points:
            for p2 in points:
                offset = (abs(p1[0] - p2[0]), abs(p1[1] - p2[1]))
                if offset not in attention_offsets:
                    attention_offsets[offset] = len(attention_offsets)
                idxs.append(attention_offsets[offset])
        self.attention_biases = torch.nn.Parameter(
            torch.zeros(num_heads, len(attention_offsets)))
        self.register_buffer('attention_bias_idxs',
                             torch.LongTensor(idxs).view(N, N))

    @torch.no_grad()
    def train(self, mode=True):
        super().train(mode)
        if mode and hasattr(self, 'ab'):
            del self.ab
        else:
            self.ab = self.attention_biases[:, self.attention_bias_idxs]

    def forward(self, x):  # x (B,C,H,W)
        B, C, H, W = x.shape
        trainingab = self.attention_biases[:, self.attention_bias_idxs]
        feats_in = x.chunk(len(self.qkvs), dim=1)
        feats_out = []
        feat = feats_in[0]
        for i, qkv in enumerate(self.qkvs):
            if i > 0:  # add the previous output to the input
                feat = feat + feats_in[i]
            feat = qkv(feat)
            q, k, v = feat.view(B, -1, H, W).split([self.key_dim, self.key_dim, self.d], dim=1)  # B, C/h, H, W
            q = self.dws[i](q)
            q, k, v = q.flatten(2), k.flatten(2), v.flatten(2)  # B, C/h, N
            attn = (
                (q.transpose(-2, -1) @ k) * self.scale
                +
                (trainingab[i] if self.training else self.ab[i])
            )
            attn = attn.softmax(dim=-1)  # BNN
            feat = (v @ attn.transpose(-2, -1)).view(B, self.d, H, W)  # BCHW
            feats_out.append(feat)
        x = self.proj(torch.cat(feats_out, 1))
        return x

class LocalWindowAttention(torch.nn.Module):
    r""" Local Window Attention.

    Args:
        dim (int): Number of input channels.
        key_dim (int): The dimension for query and key.
        num_heads (int): Number of attention heads.
        attn_ratio (int): Multiplier for the query dim for value dimension.
        resolution (int): Input resolution.
        window_resolution (int): Local window resolution.
        kernels (List[int]): The kernel size of the dw conv on query.
    """
    def __init__(self, dim, key_dim, num_heads=8,
                 attn_ratio=4,
                 resolution=14,
                 window_resolution=7,
                 kernels=[5, 5, 5, 5],):
        super().__init__()
        self.dim = dim
        self.num_heads = num_heads
        self.resolution = resolution
        assert window_resolution > 0, 'window_size must be greater than 0'
        self.window_resolution = window_resolution

        self.attn = CascadedGroupAttention(dim, key_dim, num_heads,
                                           attn_ratio=attn_ratio,
                                           resolution=window_resolution,
                                           kernels=kernels,)

    def forward(self, x):
        B, C, H, W = x.shape

        if H <= self.window_resolution and W <= self.window_resolution:
            x = self.attn(x)
        else:
            x = x.permute(0, 2, 3, 1)
            # Pad the bottom and right edges so H and W become multiples of the window size.
            pad_b = (self.window_resolution - H %
                     self.window_resolution) % self.window_resolution
            pad_r = (self.window_resolution - W %
                     self.window_resolution) % self.window_resolution
            padding = pad_b > 0 or pad_r > 0

            if padding:
                x = torch.nn.functional.pad(x, (0, 0, 0, pad_r, 0, pad_b))

            pH, pW = H + pad_b, W + pad_r
            nH = pH // self.window_resolution
            nW = pW // self.window_resolution
            # window partition, BHWC -> B(nHh)(nWw)C -> BnHnWhwC -> (BnHnW)hwC -> (BnHnW)Chw
            x = x.view(B, nH, self.window_resolution, nW, self.window_resolution, C).transpose(2, 3).reshape(
                B * nH * nW, self.window_resolution, self.window_resolution, C
            ).permute(0, 3, 1, 2)
            x = self.attn(x)
            # window reverse, (BnHnW)Chw -> (BnHnW)hwC -> BnHnWhwC -> B(nHh)(nWw)C -> BHWC
            x = x.permute(0, 2, 3, 1).view(B, nH, nW, self.window_resolution, self.window_resolution,
                                           C).transpose(2, 3).reshape(B, pH, pW, C)

            if padding:
                x = x[:, :H, :W].contiguous()

            x = x.permute(0, 3, 1, 2)

        return x

class EfficientViTBlock(torch.nn.Module):
    """ A basic EfficientViT building block.

    Args:
        type (str): Type for token mixer. Default: 's' for self-attention.
        ed (int): Number of input channels.
        kd (int): Dimension for query and key in the token mixer.
        nh (int): Number of attention heads.
        ar (int): Multiplier for the query dim for value dimension.
        resolution (int): Input resolution.
        window_resolution (int): Local window resolution.
        kernels (List[int]): The kernel size of the dw conv on query.
    """
    def __init__(self, type,
                 ed, kd, nh=8,
                 ar=4,
                 resolution=14,
                 window_resolution=7,
                 kernels=[5, 5, 5, 5],):
        super().__init__()

        self.dw0 = Residual(Conv2d_BN(ed, ed, 3, 1, 1, groups=ed, bn_weight_init=0., resolution=resolution))
        self.ffn0 = Residual(FFN(ed, int(ed * 2), resolution))

        if type == 's':
            self.mixer = Residual(LocalWindowAttention(ed, kd, nh, attn_ratio=ar, \
                    resolution=resolution, window_resolution=window_resolution, kernels=kernels))

        self.dw1 = Residual(Conv2d_BN(ed, ed, 3, 1, 1, groups=ed, bn_weight_init=0., resolution=resolution))
        self.ffn1 = Residual(FFN(ed, int(ed * 2), resolution))

    def forward(self, x):
        return self.ffn1(self.dw1(self.mixer(self.ffn0(self.dw0(x)))))

class EfficientViT(torch.nn.Module):
    def __init__(self, img_size=400,
                 patch_size=16,
                 frozen_stages=0,
                 in_chans=3,
                 stages=['s', 's', 's'],
                 embed_dim=[64, 128, 192],
                 key_dim=[16, 16, 16],
                 depth=[1, 2, 3],
                 num_heads=[4, 4, 4],
                 window_size=[7, 7, 7],
                 kernels=[5, 5, 5, 5],
                 down_ops=[['subsample', 2], ['subsample', 2], ['']],
                 pretrained=None,
                 distillation=False,):
        super().__init__()

        resolution = img_size
        # Patch embedding: three stride-2 convolutions followed by one stride-1 convolution (overall stride 8).
        self.patch_embed = torch.nn.Sequential(Conv2d_BN(in_chans, embed_dim[0] // 8, 3, 2, 1, resolution=resolution), torch.nn.ReLU(),
                           Conv2d_BN(embed_dim[0] // 8, embed_dim[0] // 4, 3, 2, 1, resolution=resolution // 2), torch.nn.ReLU(),
                           Conv2d_BN(embed_dim[0] // 4, embed_dim[0] // 2, 3, 2, 1, resolution=resolution // 4), torch.nn.ReLU(),
                           Conv2d_BN(embed_dim[0] // 2, embed_dim[0], 3, 1, 1, resolution=resolution // 8))

        resolution = img_size // patch_size
        attn_ratio = [embed_dim[i] / (key_dim[i] * num_heads[i]) for i in range(len(embed_dim))]
        self.blocks1 = []
        self.blocks2 = []
        self.blocks3 = []
        for i, (stg, ed, kd, dpth, nh, ar, wd, do) in enumerate(
                zip(stages, embed_dim, key_dim, depth, num_heads, attn_ratio, window_size, down_ops)):
            for d in range(dpth):
                eval('self.blocks' + str(i+1)).append(EfficientViTBlock(stg, ed, kd, nh, ar, resolution, wd, kernels))
            if do[0] == 'subsample':
                #('Subsample' stride)
                blk = eval('self.blocks' + str(i+2))
                resolution_ = (resolution - 1) // do[1] + 1
                blk.append(torch.nn.Sequential(Residual(Conv2d_BN(embed_dim[i], embed_dim[i], 3, 1, 1, groups=embed_dim[i], resolution=resolution)),
                                    Residual(FFN(embed_dim[i], int(embed_dim[i] * 2), resolution)),))
                blk.append(PatchMerging(*embed_dim[i:i + 2], resolution))
                resolution = resolution_
                blk.append(torch.nn.Sequential(Residual(Conv2d_BN(embed_dim[i + 1], embed_dim[i + 1], 3, 1, 1, groups=embed_dim[i + 1], resolution=resolution)),
                                    Residual(FFN(embed_dim[i + 1], int(embed_dim[i + 1] * 2), resolution)),))
        self.blocks1 = torch.nn.Sequential(*self.blocks1)
        self.blocks2 = torch.nn.Sequential(*self.blocks2)
        self.blocks3 = torch.nn.Sequential(*self.blocks3)

        # Run a dummy forward pass to record the channel count of each returned feature map.
        self.channel = [i.size(1) for i in self.forward(torch.randn(1, 3, 640, 640))]

    def forward(self, x):
        outs = []
        x = self.patch_embed(x)
        x = self.blocks1(x)
        outs.append(x)
        x = self.blocks2(x)
        outs.append(x)
        x = self.blocks3(x)
        outs.append(x)
        return outs

EfficientViT_m0 = {
    'img_size': 224,
    'patch_size': 16,
    'embed_dim': [64, 128, 192],
    'depth': [1, 2, 3],
    'num_heads': [4, 4, 4],
    'window_size': [7, 7, 7],
    'kernels': [7, 5, 3, 3],
}

EfficientViT_m1 = {
    'img_size': 224,
    'patch_size': 16,
    'embed_dim': [128, 144, 192],
    'depth': [1, 2, 3],
    'num_heads': [2, 3, 3],
    'window_size': [7, 7, 7],
    'kernels': [7, 5, 3, 3],
}

EfficientViT_m2 = {
    'img_size': 224,
    'patch_size': 16,
    'embed_dim': [128, 192, 224],
    'depth': [1, 2, 3],
    'num_heads': [4, 3, 2],
    'window_size': [7, 7, 7],
    'kernels': [7, 5, 3, 3],
}

EfficientViT_m3 = {
    'img_size': 224,
    'patch_size': 16,
    'embed_dim': [128, 240, 320],
    'depth': [1, 2, 3],
    'num_heads': [4, 3, 4],
    'window_size': [7, 7, 7],
    'kernels': [5, 5, 5, 5],
}

EfficientViT_m4 = {
    'img_size': 224,
    'patch_size': 16,
    'embed_dim': [128, 256, 384],
    'depth': [1, 2, 3],
    'num_heads': [4, 4, 4],
    'window_size': [7, 7, 7],
    'kernels': [7, 5, 3, 3],
}

EfficientViT_m5 = {
    'img_size': 224,
    'patch_size': 16,
    'embed_dim': [192, 288, 384],
    'depth': [1, 3, 4],
    'num_heads': [3, 3, 4],
    'window_size': [7, 7, 7],
    'kernels': [7, 5, 3, 3],
}

def EfficientViT_M0(pretrained='', frozen_stages=0, distillation=False, fuse=False, pretrained_cfg=None, model_cfg=EfficientViT_m0):
    model = EfficientViT(frozen_stages=frozen_stages, distillation=distillation, pretrained=pretrained, **model_cfg)
    if pretrained:
        model.load_state_dict(update_weight(model.state_dict(), torch.load(pretrained)['model']))
    if fuse:
        replace_batchnorm(model)
    return model


def EfficientViT_M1(pretrained='', frozen_stages=0, distillation=False, fuse=False, pretrained_cfg=None, model_cfg=EfficientViT_m1):
    model = EfficientViT(frozen_stages=frozen_stages, distillation=distillation, pretrained=pretrained, **model_cfg)
    if pretrained:
        model.load_state_dict(update_weight(model.state_dict(), torch.load(pretrained)['model']))
    if fuse:
        replace_batchnorm(model)
    return model


def EfficientViT_M2(pretrained='', frozen_stages=0, distillation=False, fuse=False, pretrained_cfg=None, model_cfg=EfficientViT_m2):
    model = EfficientViT(frozen_stages=frozen_stages, distillation=distillation, pretrained=pretrained, **model_cfg)
    if pretrained:
        model.load_state_dict(update_weight(model.state_dict(), torch.load(pretrained)['model']))
    if fuse:
        replace_batchnorm(model)
    return model


def EfficientViT_M3(pretrained='', frozen_stages=0, distillation=False, fuse=False, pretrained_cfg=None, model_cfg=EfficientViT_m3):
    model = EfficientViT(frozen_stages=frozen_stages, distillation=distillation, pretrained=pretrained, **model_cfg)
    if pretrained:
        model.load_state_dict(update_weight(model.state_dict(), torch.load(pretrained)['model']))
    if fuse:
        replace_batchnorm(model)
    return model


def EfficientViT_M4(pretrained='', frozen_stages=0, distillation=False, fuse=False, pretrained_cfg=None, model_cfg=EfficientViT_m4):
    model = EfficientViT(frozen_stages=frozen_stages, distillation=distillation, pretrained=pretrained, **model_cfg)
    if pretrained:
        model.load_state_dict(update_weight(model.state_dict(), torch.load(pretrained)['model']))
    if fuse:
        replace_batchnorm(model)
    return model


def EfficientViT_M5(pretrained='', frozen_stages=0, distillation=False, fuse=False, pretrained_cfg=None, model_cfg=EfficientViT_m5):
    model = EfficientViT(frozen_stages=frozen_stages, distillation=distillation, pretrained=pretrained, **model_cfg)
    if pretrained:
        model.load_state_dict(update_weight(model.state_dict(), torch.load(pretrained)['model']))
    if fuse:
        replace_batchnorm(model)
    return model

def update_weight(model_dict, weight_dict):
    # Copy only the checkpoint tensors whose name and shape match the model.
    idx, temp_dict = 0, {}
    for k, v in weight_dict.items():
        # k = k[9:]
        if k in model_dict.keys() and np.shape(model_dict[k]) == np.shape(v):
            temp_dict[k] = v
            idx += 1
    model_dict.update(temp_dict)
    print(f'loading weights... {idx}/{len(model_dict)} items')
    return model_dict

EfficientViT processes an image in the following main stages:

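1. Patch splitting and initial processing:

- Splitting the input image: the input image is first divided into fixed-size patches (tokens), each of which serves as the basic unit for later processing. This step is similar to how a conventional Vision Transformer (ViT) handles images.

2. Multi-Scale Linear Attention:

- Linear attention: EfficientViT uses ReLU linear attention in place of conventional softmax attention. Linear attention computes the similarity between each patch and all other patches independently of their relative position, and its cost is linear in the number of patches, which suits high-resolution images well.
- Multi-scale feature extraction: because linear attention is limited in extracting local information, EfficientViT uses small-kernel convolutions to aggregate and fuse neighboring tokens, producing multi-scale query (Q), key (K), and value (V) tokens. These multi-scale tokens help capture features at different scales, strengthening both global and local perception.

3. Depthwise convolution (DWConv):

- Convolution: after multi-scale linear attention, EfficientViT applies depthwise convolution (DWConv) to the processed tokens for further feature extraction. Depthwise convolution extracts fine-grained local features effectively, complementing the global information from the attention module.
- Feed-forward network (FFN): the convolved features then pass through a feed-forward network for a nonlinear transformation, which helps the model capture more complex feature relationships.

4. Fusing global and local features:

- Feature aggregation and fusion: by stacking multiple multi-scale linear attention and depthwise convolution modules, EfficientViT aggregates and fuses global and local information layer by layer. As the network deepens, the model captures progressively more semantic information and ends up with a high-quality feature representation.

5. Output prediction:

- Output feature maps: after several layers of feature processing, the model produces the final feature maps, which can be used for different dense prediction tasks such as semantic segmentation and image super-resolution.
- Task-specific head: depending on the task, the feature maps are passed to a task-specific prediction head that produces the final output (for example a semantic segmentation map or a high-resolution image).

In short, EfficientViT extracts and fuses global and local image features step by step through patch splitting, linear attention, multi-scale feature extraction, and depthwise convolution, and outputs high-quality feature maps for dense prediction. The whole process runs efficiently for computation and inference on multiple hardware platforms.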
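The multi-scale aggregation in stage 2 can be sketched as follows. This is a simplified illustration, not the official implementation: the class name `MultiScaleQKV` and the kernel sizes `(3, 5)` are assumptions chosen for the example. It projects a feature map to Q/K/V with a 1×1 convolution and then adds one depthwise-convolution branch per extra scale, so each branch mixes a different neighborhood of tokens.

```python
import torch
import torch.nn as nn


class MultiScaleQKV(nn.Module):
    """Hypothetical helper: builds Q/K/V at several scales with small depthwise convs."""

    def __init__(self, dim, kernel_sizes=(3, 5)):
        super().__init__()
        self.qkv = nn.Conv2d(dim, 3 * dim, kernel_size=1, bias=False)
        # One depthwise conv per extra scale; padding k // 2 keeps H and W unchanged.
        self.aggregators = nn.ModuleList([
            nn.Conv2d(3 * dim, 3 * dim, k, padding=k // 2, groups=3 * dim, bias=False)
            for k in kernel_sizes
        ])

    def forward(self, x):
        qkv = self.qkv(x)                                   # (B, 3*dim, H, W)
        branches = [qkv] + [agg(qkv) for agg in self.aggregators]
        # Concatenate the original tokens with the aggregated ones along channels.
        return torch.cat(branches, dim=1)                   # (B, 3*dim*(1+S), H, W)


x = torch.randn(1, 32, 20, 20)
multi_scale = MultiScaleQKV(dim=32)(x)
```

Each branch would then go through the linear attention separately (or jointly, depending on the design), which is how local context at several scales reaches an otherwise purely global attention.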

2.2 Adding the YAML file

Key step two: create a new file named yolov5_EfficientViT.yaml under /yolov5/models and copy the following into it

- Object detection YAML file

# YOLOv5 🚀 by Ultralytics, GPL-3.0 license

# Parameters
nc: 3  # number of classes
depth_multiple: 1.0  # model depth multiple
width_multiple: 1.0  # layer channel multiple
anchors:
  - [10,13, 16,30, 33,23]  # P3/8
  - [30,61, 62,45, 59,119]  # P4/16
  - [116,90, 156,198, 373,326]  # P5/32

# 0-P1/2
# 1-P2/4
# 2-P3/8
# 3-P4/16
# 4-P5/32

# YOLOv5 v6.0 backbone
backbone:
  # [from, number, module, args]
  [[-1, 1, EfficientViT_M0, []],  # 4 EfficientViT_M0, EfficientViT_M1, EfficientViT_M2, EfficientViT_M3, EfficientViT_M4, EfficientViT_M5
   [-1, 1, SPPF, [1024, 5]],  # 5
  ]

# YOLOv5 v6.0 head
head:
  [[-1, 1, Conv, [512, 1, 1]],  # 6
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],  # 7
   [[-1, 3], 1, Concat, [1]],  # cat backbone P4 8
   [-1, 3, C3, [512, False]],  # 9

   [-1, 1, Conv, [256, 1, 1]],  # 10
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],  # 11
   [[-1, 2], 1, Concat, [1]],  # cat backbone P3 12
   [-1, 3, C3, [256, False]],  # 13 (P3/8-small)

   [-1, 1, Conv, [256, 3, 2]],  # 14
   [[-1, 10], 1, Concat, [1]],  # cat head P4 15
   [-1, 3, C3, [512, False]],  # 16 (P4/16-medium)

   [-1, 1, Conv, [512, 3, 2]],  # 17
   [[-1, 5], 1, Concat, [1]],  # cat head P5 18
   [-1, 3, C3, [1024, False]],  # 19 (P5/32-large)

   [[13, 16, 19], 1, Detect, [nc, anchors]],  # Detect(P3, P4, P5)
  ]

- Segmentation YAML file (uses the Segment head)

# YOLOv5 🚀 by Ultralytics, GPL-3.0 license

# Parameters
nc: 3  # number of classes
depth_multiple: 1.0  # model depth multiple
width_multiple: 1.0  # layer channel multiple
anchors:
  - [10,13, 16,30, 33,23]  # P3/8
  - [30,61, 62,45, 59,119]  # P4/16
  - [116,90, 156,198, 373,326]  # P5/32

# 0-P1/2
# 1-P2/4
# 2-P3/8
# 3-P4/16
# 4-P5/32

# YOLOv5 v6.0 backbone
backbone:
  # [from, number, module, args]
  [[-1, 1, EfficientViT_M0, []],  # 4 EfficientViT_M0, EfficientViT_M1, EfficientViT_M2, EfficientViT_M3, EfficientViT_M4, EfficientViT_M5
   [-1, 1, SPPF, [1024, 5]],  # 5
  ]

# YOLOv5 v6.0 head
head:
  [[-1, 1, Conv, [512, 1, 1]],  # 6
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],  # 7
   [[-1, 3], 1, Concat, [1]],  # cat backbone P4 8
   [-1, 3, C3, [512, False]],  # 9

   [-1, 1, Conv, [256, 1, 1]],  # 10
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],  # 11
   [[-1, 2], 1, Concat, [1]],  # cat backbone P3 12
   [-1, 3, C3, [256, False]],  # 13 (P3/8-small)

   [-1, 1, Conv, [256, 3, 2]],  # 14
   [[-1, 10], 1, Concat, [1]],  # cat head P4 15
   [-1, 3, C3, [512, False]],  # 16 (P4/16-medium)

   [-1, 1, Conv, [512, 3, 2]],  # 17
   [[-1, 5], 1, Concat, [1]],  # cat head P5 18
   [-1, 3, C3, [1024, False]],  # 19 (P5/32-large)

   [[13, 16, 19], 1, Segment, [nc, anchors, 32, 256]],  # Segment(P3, P4, P5)
  ]

Note: the YAML files above only add the module on top of a base YOLOv5 configuration. To apply the same change to another scale (YOLOv5n/s/m/l/x), set the corresponding depth_multiple and width_multiple.
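As a rough sketch of what the two multipliers do, the snippet below mirrors the depth-gain and width-gain expressions that appear in the `parse_model` function of section 2.3. The helper name `scale_layer` is made up for this example, and `make_divisible` is re-implemented here on the assumption that it rounds up to a multiple of the divisor. The per-variant values are the ones listed right after this example.

```python
import math


def make_divisible(x, divisor):
    # Round x up to the nearest multiple of divisor (assumed behavior of the YOLOv5 helper).
    return math.ceil(x / divisor) * divisor


def scale_layer(repeats, channels, depth_multiple, width_multiple):
    # Depth gain: only layers with more than one repeat are scaled, never below 1.
    n = max(round(repeats * depth_multiple), 1) if repeats > 1 else repeats
    # Width gain: output channels are scaled, then rounded up to a multiple of 8.
    c = make_divisible(channels * width_multiple, 8)
    return n, c


# (depth_multiple, width_multiple) per variant.
variants = {
    "n": (0.33, 0.25),
    "s": (0.33, 0.50),
    "m": (0.67, 0.75),
    "l": (1.0, 1.0),
    "x": (1.33, 1.25),
}

# Effect on a head entry such as `[-1, 3, C3, [512, False]]`.
scaled = {name: scale_layer(3, 512, gd, gw) for name, (gd, gw) in variants.items()}
```

In the `parse_model` shown in section 2.3, the EfficientViT branch takes its channel counts from `m.channel`, so these multipliers appear to affect only the SPPF and head layers, not the backbone itself.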

# YOLOv5n
depth_multiple: 0.33  # model depth multiple
width_multiple: 0.25  # layer channel multiple

# YOLOv5s
depth_multiple: 0.33  # model depth multiple
width_multiple: 0.50  # layer channel multiple

# YOLOv5l
depth_multiple: 1.0  # model depth multiple
width_multiple: 1.0  # layer channel multiple

# YOLOv5m
depth_multiple: 0.67  # model depth multiple
width_multiple: 0.75  # layer channel multiple

# YOLOv5x
depth_multiple: 1.33  # model depth multiple
width_multiple: 1.25  # layer channel multiple

2.3 Registering the module

Key step three: replace the parse_model function in yolo.py with the following function

def parse_model(d, ch):  # model_dict, input_channels(3)
    # Parse a YOLOv5 model.yaml dictionary
    LOGGER.info(f"\n{'':>3}{'from':>18}{'n':>3}{'params':>10} {'module':<40}{'arguments':<30}")
    anchors, nc, gd, gw, act = d['anchors'], d['nc'], d['depth_multiple'], d['width_multiple'], d.get('activation')
    if act:
        Conv.default_act = eval(act)  # redefine default activation, i.e. Conv.default_act = nn.SiLU()
        LOGGER.info(f"{colorstr('activation:')} {act}")  # print
    na = (len(anchors[0]) // 2) if isinstance(anchors, list) else anchors  # number of anchors
    no = na * (nc + 5)  # number of outputs = anchors * (classes + 5)

    is_backbone = False
    layers, save, c2 = [], [], ch[-1]  # layers, savelist, ch out
    for i, (f, n, m, args) in enumerate(d['backbone'] + d['head']):  # from, number, module, args
        try:
            t = m
            m = eval(m) if isinstance(m, str) else m  # eval strings
        except:
            pass
        for j, a in enumerate(args):
            with contextlib.suppress(NameError):
                try:
                    args[j] = eval(a) if isinstance(a, str) else a  # eval strings
                except:
                    args[j] = a

        n = n_ = max(round(n * gd), 1) if n > 1 else n  # depth gain
        if m in {
                Conv, GhostConv, Bottleneck, GhostBottleneck, SPP, SPPF, DWConv, MixConv2d, Focus, CrossConv,
                BottleneckCSP, C3, C3TR, C3SPP, C3Ghost, nn.ConvTranspose2d, DWConvTranspose2d, C3x}:
            c1, c2 = ch[f], args[0]
            if c2 != no:  # if not output
                c2 = make_divisible(c2 * gw, 8)

            args = [c1, c2, *args[1:]]
            if m in {BottleneckCSP, C3, C3TR, C3Ghost, C3x}:
                args.insert(2, n)  # number of repeats
                n = 1
        elif m is nn.BatchNorm2d:
            args = [ch[f]]
        elif m is Concat:
            c2 = sum(ch[x] for x in f)
        # TODO: channel, gw, gd
        elif m in {Detect, Segment}:
            args.append([ch[x] for x in f])
            if isinstance(args[1], int):  # number of anchors
                args[1] = [list(range(args[1] * 2))] * len(f)
            if m is Segment:
                args[3] = make_divisible(args[3] * gw, 8)
        elif m is Contract:
            c2 = ch[f] * args[0] ** 2
        elif m is Expand:
            c2 = ch[f] // args[0] ** 2
        elif isinstance(m, str):
            t = m
            m = timm.create_model(m, pretrained=args[0], features_only=True)
            c2 = m.feature_info.channels()
        elif m in {
            EfficientViT_M0,
            EfficientViT_M1,
            EfficientViT_M2,
            EfficientViT_M3,
            EfficientViT_M4,
            EfficientViT_M5,
        }:
            m = m(*args)
            c2 = m.channel
        else:
            c2 = ch[f]
        if isinstance(c2, list):
            is_backbone = True
            m_ = m
            m_.backbone = True
        else:
            m_ = nn.Sequential(*(m(*args) for _ in range(n))) if n > 1 else m(*args)  # module
            t = str(m)[8:-2].replace('__main__.', '')  # module type
        np = sum(x.numel() for x in m_.parameters())  # number params
        m_.i, m_.f, m_.type, m_.np = i + 4 if is_backbone else i, f, t, np  # attach index, 'from' index, type, number params
        LOGGER.info(f'{i:>3}{str(f):>18}{n_:>3}{np:10.0f} {t:<40}{str(args):<30}')  # print
        save.extend(x % (i + 4 if is_backbone else i) for x in ([f] if isinstance(f, int) else f) if x != -1)  # append to savelist
        layers.append(m_)
        if i == 0:
            ch = []
        if isinstance(c2, list):
            ch.extend(c2)
            for _ in range(5 - len(ch)):
                ch.insert(0, 0)
        else:
            ch.append(c2)
    return nn.Sequential(*layers), sorted(save)
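The part of `parse_model` that differs most from stock YOLOv5 is how a backbone returning several feature maps is mapped onto layer indices. The sketch below isolates that bookkeeping under the assumption that the backbone returns three maps (P3, P4, P5). The helper name `pad_to_slots` is hypothetical; the function above pads the channel list with `0`, and `_forward_once` in section 2.4 pads the feature list with `None` in the same way.

```python
def pad_to_slots(items, fill, slots=5):
    """Left-pad a list so that its last entries land on the highest indices."""
    return [fill] * (slots - len(items)) + list(items)


# EfficientViT_M0 returns three feature maps with 64, 128 and 192 channels
# (the SPPF and C3 input widths in the training log are consistent with this).
ch = pad_to_slots([64, 128, 192], fill=0)                 # channel table used while parsing
features = pad_to_slots(["P3", "P4", "P5"], fill=None)    # string stand-ins for the tensors

# The backbone occupies indices 0-4, so the next YAML entry (i == 1) becomes layer 5.
next_layer_index = 1 + 4
```

This is why the head in the YAML file can refer to `2` and `3` in its Concat entries for the P3 and P4 backbone outputs, and why the comments there number SPPF as layer 5.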

2.4 Replacing the forward function

Key step four: replace the _forward_once function in yolo.py with the following function

def _forward_once(self, x, profile=False, visualize=False):
    y, dt = [], []  # outputs
    for m in self.model:
        if m.f != -1:  # if not from previous layer
            x = y[m.f] if isinstance(m.f, int) else [x if j == -1 else y[j] for j in m.f]  # from earlier layers
        if profile:
            self._profile_one_layer(m, x, dt)
        if hasattr(m, 'backbone'):
            x = m(x)
            # Left-pad the backbone outputs to five slots so they line up with layer indices 0-4.
            for _ in range(5 - len(x)):
                x.insert(0, None)
            for i_idx, i in enumerate(x):
                if i_idx in self.save:
                    y.append(i)
                else:
                    y.append(None)
            x = x[-1]
        else:
            x = m(x)  # run
            y.append(x if m.i in self.save else None)  # save output
        if visualize:
            feature_visualization(x, m.type, m.i, save_dir=visualize)
    return x

2.5 Running the program

在train.py中,将cfg的参数路径设置为yolov5_EfficientViT.yaml的路径

建议大家写绝对路径,确保一定能找到

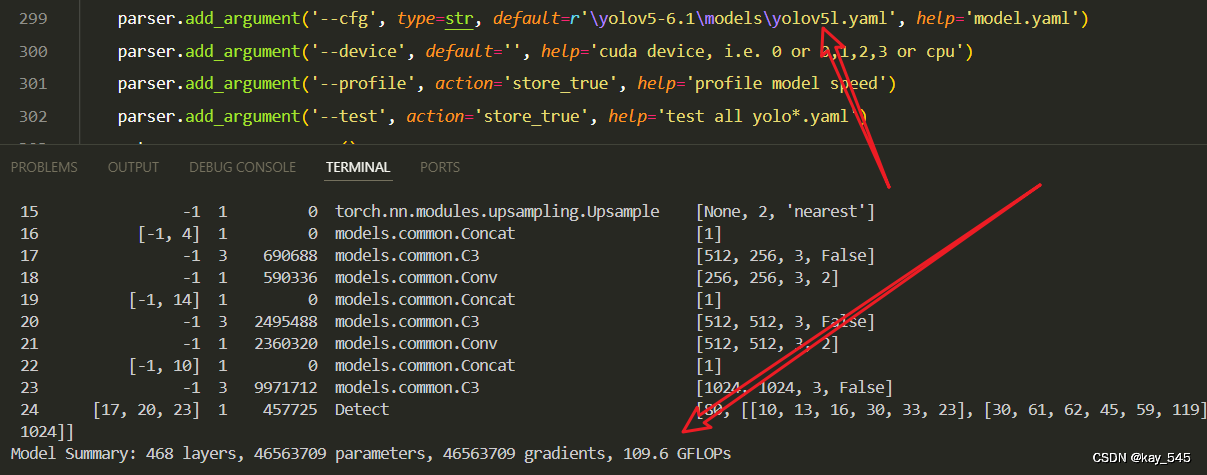

🚀运行程序,如果出现下面的内容则说明添加成功🚀

                 from  n    params  module                                  arguments
  0                -1  1   2155680  EfficientViT_M0                         []
  1                -1  1    413888  models.common.SPPF                      [192, 1024, 5]
  2                -1  1    525312  models.common.Conv                      [1024, 512, 1, 1]
  3                -1  1         0  torch.nn.modules.upsampling.Upsample    [None, 2, 'nearest']
  4           [-1, 3]  1         0  models.common.Concat                    [1]
  5                -1  3   2561024  models.common.C3                        [640, 512, 3, False]
  6                -1  1    131584  models.common.Conv                      [512, 256, 1, 1]
  7                -1  1         0  torch.nn.modules.upsampling.Upsample    [None, 2, 'nearest']
  8           [-1, 2]  1         0  models.common.Concat                    [1]
  9                -1  3    641536  models.common.C3                        [320, 256, 3, False]
 10                -1  1    590336  models.common.Conv                      [256, 256, 3, 2]
 11          [-1, 10]  1         0  models.common.Concat                    [1]
 12                -1  3   2495488  models.common.C3                        [512, 512, 3, False]
 13                -1  1   2360320  models.common.Conv                      [512, 512, 3, 2]
 14           [-1, 5]  1         0  models.common.Concat                    [1]
 15                -1  3  10496000  models.common.C3                        [1536, 1024, 3, False]
 16      [13, 16, 19]  1     43080  Detect                                  [3, [[10, 13, 16, 30, 33, 23], [30, 61, 62, 45, 59, 119], [116, 90, 156, 198, 373, 326]], [256, 512, 1024]]

YOLOv5_EfficientVIT summary: 638 layers, 22414248 parameters, 22414248 gradients, 42.4 GFLOPs

3. Complete code

https://pan.baidu.com/s/11lPA3M4UVnExHWgnjpPYLQ?pwd=9hb2 (extraction code: 9hb2)

4. GFLOPs

For how GFLOPs are calculated, see: 百面算法工程师 | 卷积基础知识——Convolution
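As a rough illustration of where a GFLOPs figure comes from, the sketch below counts the operations of a single convolution layer. The helper name `conv2d_flops` is made up for this example. It assumes one multiply-accumulate counts as two FLOPs and ignores bias, normalization, and activation. Profiling tools may count differently, so treat the results as estimates rather than the exact numbers YOLOv5 prints.

```python
def conv2d_flops(c_in, c_out, kernel, h_out, w_out, groups=1):
    # Multiply-accumulates: each output element needs (c_in / groups) * k * k of them.
    macs = (c_in // groups) * kernel * kernel * c_out * h_out * w_out
    return 2 * macs  # assumption: 1 MAC = 2 FLOPs


# A head layer such as `Conv [256, 256, 3, 2]`, assuming a 640x640 input so that
# the stride-2 convolution maps the 80x80 P3 grid to a 40x40 output.
dense = conv2d_flops(256, 256, 3, 40, 40)
# The same shape as a depthwise convolution (groups equal to the channel count).
depthwise = conv2d_flops(256, 256, 3, 40, 40, groups=256)
```

The comparison shows why depthwise convolutions, which EfficientViT uses heavily, are so much cheaper than dense ones at the same resolution: the cost drops by a factor equal to the number of groups.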

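GFLOPs before the modification

GFLOPs after the modification

5. Going further

This change can be combined with loss-function or convolution-module changes for multiple improvements.

YOLOv5 improvements | Loss functions | EIoU, SIoU, WIoU, DIoU, FocuSIoU and other loss functions

6. Summary

The main idea of EfficientViT is to achieve an efficient global receptive field and multi-scale learning in high-resolution dense prediction through the Multi-Scale Linear Attention module. Unlike conventional softmax attention, EfficientViT uses ReLU linear attention, which lowers the computational complexity from quadratic to linear and avoids hardware-inefficient operations. To make up for the weakness of linear attention in extracting local information, EfficientViT aggregates neighboring tokens with small-kernel convolutions to produce multi-scale Q/K/V tokens, which strengthens multi-scale learning. The architecture also adds FFNs with depthwise convolution (FFN+DWConv) to further improve local feature extraction. With hardware efficiency preserved, this design lets EfficientViT speed up inference considerably on several hardware platforms while matching or improving accuracy on multiple high-resolution vision tasks.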
