
💡💡💡 All code in this column has been tested and runs successfully 💡💡💡


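Infrared small object detection is a key task in computer vision, made difficult by tiny targets and complex backgrounds. This article introduces C3_PPA, a C3 block that integrates the PPA module, which extracts multi-scale features and is aimed at improving detection performance. After covering the main principles, the article walks step by step through adding and modifying the module code, and links the complete modified code at the end so that it can be run directly. Beginners should be able to follow along and get hands-on practice with YOLO-series object detection.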


1. Principle

Paper: HCF-Net: Hierarchical Context Fusion Network for Infrared Small Object Detection

Official code: official code repository

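PPA (Parallelized Patch-Aware Attention) is one of the key components of HCF-Net for infrared small object detection. It aims to improve detection accuracy through a multi-branch feature extraction strategy combined with attention mechanisms. Its main principles are:

- Multi-branch feature extraction: PPA uses a parallel local branch, a global branch, and a serial convolution branch to capture features at different scales and levels. This multi-branch strategy extracts multi-scale information, which suits infrared small targets and strengthens the localization of tiny objects.

- Feature fusion and attention: after the multi-branch features are extracted, PPA adaptively enhances them with channel attention and spatial attention. Channel attention selects the most discriminative feature channels, while spatial attention highlights target regions, so small targets become more salient against complex backgrounds.

The processing flow is:

- The input features are first adjusted by a point-wise convolution and then split into local and global feature branches, which aggregate features at different scales using non-overlapping patches.

- Convolution layers process these features further, and channel and spatial attention then fuse and enhance the result.

By preserving key local and global information, the module reduces the loss of important information about infrared small targets across repeated downsampling, which improves detection accuracy and robustness.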
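To make the non-overlapping patch idea concrete, here is a minimal pure-Python sketch. It is only an illustration under simplifying assumptions: `patch_means` is a hypothetical helper, it works on a single-channel 2D grid, and it simply averages each patch, whereas the actual module feeds patches through learned linear layers.

```python
def patch_means(grid, p):
    """Split a 2D grid into non-overlapping p x p patches and average each one.

    Hypothetical helper for illustration only; rows and columns that do not
    fill a whole patch are dropped.
    """
    rows, cols = len(grid) // p, len(grid[0]) // p
    out = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            # Collect the p*p values of the patch whose top-left corner is (r*p, c*p).
            values = [grid[r * p + i][c * p + j] for i in range(p) for j in range(p)]
            out_row.append(sum(values) / len(values))
        out.append(out_row)
    return out


# A 4x4 feature map with one bright pixel, mimicking a tiny target.
feature_map = [
    [0, 0, 0, 0],
    [0, 8, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
small = patch_means(feature_map, 2)  # one value per 2x2 patch
large = patch_means(feature_map, 4)  # one value for the single 4x4 patch
print(small)
print(large)
```

With patch size 2 the bright pixel still dominates its patch, while with patch size 4 it is diluted across the whole map. That is the intuition for looking at more than one patch size.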

2. Adding C3_PPA to the YOLOv5 network

2.1 C3_PPA code implementation

Key step 1: paste the code below into \yolov5\models\common.py

```python
import math

import torch.nn.functional as F


class SpatialAttentionModule(nn.Module):
    """Spatial attention built from the channel-wise mean and max maps."""

    def __init__(self):
        super(SpatialAttentionModule, self).__init__()
        self.conv2d = nn.Conv2d(in_channels=2, out_channels=1, kernel_size=7, stride=1, padding=3)
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        avgout = torch.mean(x, dim=1, keepdim=True)  # (B, 1, H, W)
        maxout, _ = torch.max(x, dim=1, keepdim=True)  # (B, 1, H, W)
        out = torch.cat([avgout, maxout], dim=1)  # (B, 2, H, W)
        out = self.sigmoid(self.conv2d(out))  # (B, 1, H, W) attention map
        return out * x
```

```python
class LocalGlobalAttention(nn.Module):
    """Patch-based attention over non-overlapping patch_size x patch_size patches."""

    def __init__(self, output_dim, patch_size):
        super().__init__()
        self.output_dim = output_dim
        self.patch_size = patch_size
        self.mlp1 = nn.Linear(patch_size * patch_size, output_dim // 2)
        self.norm = nn.LayerNorm(output_dim // 2)
        self.mlp2 = nn.Linear(output_dim // 2, output_dim)
        self.conv = nn.Conv2d(output_dim, output_dim, kernel_size=1)
        self.prompt = torch.nn.parameter.Parameter(torch.randn(output_dim, requires_grad=True))
        self.top_down_transform = torch.nn.parameter.Parameter(torch.eye(output_dim), requires_grad=True)

    def forward(self, x):
        x = x.permute(0, 2, 3, 1)  # (B, H, W, C)
        B, H, W, C = x.shape
        P = self.patch_size

        # Local branch
        # unfold appends each window dimension last, so this tensor is (B, H/P, W/P, C, P, P);
        # the reshape below reinterprets it as (B, N, P*P, C) with N = H/P * W/P.
        local_patches = x.unfold(1, P, P).unfold(2, P, P)
        local_patches = local_patches.reshape(B, -1, P * P, C)  # (B, N, P*P, C)
        local_patches = local_patches.mean(dim=-1)  # (B, N, P*P)

        local_patches = self.mlp1(local_patches)  # (B, N, output_dim // 2)
        local_patches = self.norm(local_patches)  # (B, N, output_dim // 2)
        local_patches = self.mlp2(local_patches)  # (B, N, output_dim)

        local_attention = F.softmax(local_patches, dim=-1)  # (B, N, output_dim)
        local_out = local_patches * local_attention  # (B, N, output_dim)

        # Cosine similarity between each patch feature and the learned prompt vector
        cos_sim = F.normalize(local_out, dim=-1) @ F.normalize(self.prompt[None, ..., None], dim=1)  # (B, N, 1)
        mask = cos_sim.clamp(0, 1)
        local_out = local_out * mask
        local_out = local_out @ self.top_down_transform

        # Restore shapes
        local_out = local_out.reshape(B, H // P, W // P, self.output_dim)  # (B, H/P, W/P, output_dim)
        local_out = local_out.permute(0, 3, 1, 2)  # (B, output_dim, H/P, W/P)
        local_out = F.interpolate(local_out, size=(H, W), mode='bilinear', align_corners=False)
        output = self.conv(local_out)

        return output
```

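```python
class ECA(nn.Module):
    """Efficient Channel Attention: a 1D convolution over the pooled channel descriptor."""

    def __init__(self, in_channel, gamma=2, b=1):
        super(ECA, self).__init__()
        # Kernel size grows with log2 of the channel count and is forced to be odd
        k = int(abs((math.log(in_channel, 2) + b) / gamma))
        kernel_size = k if k % 2 else k + 1
        padding = kernel_size // 2
        self.pool = nn.AdaptiveAvgPool2d(output_size=1)
        self.conv = nn.Sequential(
            nn.Conv1d(in_channels=1, out_channels=1, kernel_size=kernel_size, padding=padding, bias=False),
            nn.Sigmoid()
        )

    def forward(self, x):
        out = self.pool(x)  # (B, C, 1, 1)
        out = out.view(x.size(0), 1, x.size(1))  # (B, 1, C)
        out = self.conv(out)  # (B, 1, C) channel weights
        out = out.view(x.size(0), x.size(1), 1, 1)  # (B, C, 1, 1)
        return out * x
```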

```python
class PPA(nn.Module):
    def __init__(self, in_features, filters) -> None:
        super().__init__()
        self.skip = Conv(in_features, filters, act=False)
        # c1 receives the raw input, so it takes in_features channels
        self.c1 = Conv(in_features, filters, 3)
        self.c2 = Conv(filters, filters, 3)
        self.c3 = Conv(filters, filters, 3)
        self.sa = SpatialAttentionModule()
        self.cn = ECA(filters)
        self.lga2 = LocalGlobalAttention(filters, 2)
        self.lga4 = LocalGlobalAttention(filters, 4)

        self.drop = nn.Dropout2d(0.1)
        self.bn1 = nn.BatchNorm2d(filters)
        self.silu = nn.SiLU()

    def forward(self, x):
        x_skip = self.skip(x)
        # Patch-aware branches with patch sizes 2 and 4
        x_lga2 = self.lga2(x_skip)
        x_lga4 = self.lga4(x_skip)
        # Serial convolution branch
        x1 = self.c1(x)
        x2 = self.c2(x1)
        x3 = self.c3(x2)
        # Fuse all branches, then apply channel and spatial attention
        x = x1 + x2 + x3 + x_skip + x_lga2 + x_lga4
        x = self.cn(x)
        x = self.sa(x)
        x = self.drop(x)
        x = self.bn1(x)
        x = self.silu(x)
        return x
```

```python
class Bag(nn.Module):
    def __init__(self):
        super(Bag, self).__init__()

    def forward(self, p, i, d):
        # d gates the blend between p and i
        edge_att = torch.sigmoid(d)
        return edge_att * p + (1 - edge_att) * i
```

```python
class DASI(nn.Module):
    def __init__(self, in_features, out_features) -> None:
        super().__init__()
        self.bag = Bag()
        self.tail_conv = nn.Conv2d(out_features, out_features, 1)
        self.conv = nn.Conv2d(out_features // 2, out_features // 4, 1)
        self.bns = nn.BatchNorm2d(out_features)

        self.skips = nn.Conv2d(in_features[1], out_features, 1)
        self.skips_2 = nn.Conv2d(in_features[0], out_features, 1)
        self.skips_3 = nn.Conv2d(in_features[2], out_features, kernel_size=3, stride=2, dilation=2, padding=2)
        self.silu = nn.SiLU()

    def forward(self, x_list):
        # x_high, x, x_low = x_list
        x_low, x, x_high = x_list
        if x_high is not None:
            x_high = self.skips_3(x_high)
            x_high = torch.chunk(x_high, 4, dim=1)
        if x_low is not None:
            x_low = self.skips_2(x_low)
            x_low = F.interpolate(x_low, size=[x.size(2), x.size(3)], mode='bilinear', align_corners=True)
            x_low = torch.chunk(x_low, 4, dim=1)
        x = self.skips(x)
        x_skip = x
        x = torch.chunk(x, 4, dim=1)
        if x_high is None:
            x0 = self.conv(torch.cat((x[0], x_low[0]), dim=1))
            x1 = self.conv(torch.cat((x[1], x_low[1]), dim=1))
            x2 = self.conv(torch.cat((x[2], x_low[2]), dim=1))
            x3 = self.conv(torch.cat((x[3], x_low[3]), dim=1))
        elif x_low is None:
            # Pair each chunk of x with the matching chunk of x_high
            x0 = self.conv(torch.cat((x[0], x_high[0]), dim=1))
            x1 = self.conv(torch.cat((x[1], x_high[1]), dim=1))
            x2 = self.conv(torch.cat((x[2], x_high[2]), dim=1))
            x3 = self.conv(torch.cat((x[3], x_high[3]), dim=1))
        else:
            x0 = self.bag(x_low[0], x_high[0], x[0])
            x1 = self.bag(x_low[1], x_high[1], x[1])
            x2 = self.bag(x_low[2], x_high[2], x[2])
            x3 = self.bag(x_low[3], x_high[3], x[3])

        x = torch.cat((x0, x1, x2, x3), dim=1)
        x = self.tail_conv(x)
        x += x_skip
        x = self.bns(x)
        x = self.silu(x)

        return x
```

```python
class C3_PPA(C3):
    def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5):
        super().__init__(c1, c2, n, shortcut, g, e)
        c_ = int(c2 * e)  # hidden channels
        # Replace the bottleneck stack of C3 with n PPA blocks
        self.m = nn.Sequential(*(PPA(c_, c_) for _ in range(n)))
```

2.2 Analysis of the C3_PPA module code

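C3_PPA is a custom neural-network block that extends the C3 module, which is widely used in architectures such as YOLOv5. Let's break it down:

1. Parent module: C3

- C3 consists of a series of bottleneck layers and is used for efficient feature extraction. Its parameters are c1 and c2 (input and output channels), n (number of bottlenecks), shortcut (whether to use residual connections), g (groups in the convolutions), and e (expansion ratio).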

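2. Extended module: C3_PPA

- Initialization (__init__ method):
  - c1: number of input channels.
  - c2: number of output channels.
  - n: number of PPA modules to stack.
  - shortcut: whether to use residual connections (default False).
  - g: number of groups in the convolutions (default 1).
  - e: expansion ratio for the hidden channels (default 0.5).
  - c_ is computed as int(c2 * e) and is the number of hidden channels in the bottleneck.

- Main component (self.m):
  - self.m is a sequential container that stacks n PPA instances, each with c_ input and output channels. The PPA module combines several attention mechanisms with convolution layers.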

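3. PPA module

- Attention and convolution:
  - It contains SpatialAttentionModule, ECA (Efficient Channel Attention), and LocalGlobalAttention.
  - It also uses convolution layers (Conv), batch normalization, dropout, and the SiLU activation.
  - Together these operations enhance the feature representation by focusing on important features along both the spatial and channel dimensions.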
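As a small worked example of the channel-attention part, the sketch below reproduces the kernel-size rule from ECA.__init__ for the hidden channel counts that C3_PPA would pass to it (c_ = int(c2 * e) with e = 0.5). The helper name `eca_kernel_size` is hypothetical, and it uses `math.log2` rather than `math.log(x, 2)` purely to keep the arithmetic exact for powers of two.

```python
import math


def eca_kernel_size(channels, gamma=2, b=1):
    """Odd 1D kernel size derived from the channel count, mirroring ECA.__init__.

    Hypothetical helper; math.log2 is an assumption made here for exactness.
    """
    k = int(abs((math.log2(channels) + b) / gamma))
    return k if k % 2 else k + 1  # force an odd size so padding keeps the length


# Hidden channels for c2 = 128, 256, 512, 1024 with e = 0.5
sizes = {c: eca_kernel_size(c) for c in (64, 128, 256, 512)}
print(sizes)
```

The kernel size changes slowly with the channel count, so the channel attention stays cheap even in the widest layers.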

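4. Integration

- C3_PPA uses PPA modules in place of the standard bottleneck layers of a typical C3 module, which lets it apply richer attention mechanisms during feature extraction.
- The intent is to improve network performance on tasks such as object detection or image classification through stronger feature extraction and attention.

Compared with the standard C3 module, C3_PPA enriches feature extraction with the attention mechanisms of the PPA module.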

2.3 Adding the yaml files

Key step 2: create a new file yolov5_C3_PPA.yaml under /yolov5/models and copy the code below into it

- Object detection yaml file

```yaml
# Ultralytics YOLOv5 🚀, AGPL-3.0 license

# Parameters
nc: 80 # number of classes
depth_multiple: 1.0 # model depth multiple
width_multiple: 1.0 # layer channel multiple
anchors:
  - [10, 13, 16, 30, 33, 23] # P3/8
  - [30, 61, 62, 45, 59, 119] # P4/16
  - [116, 90, 156, 198, 373, 326] # P5/32

# YOLOv5 v6.0 backbone
backbone:
  # [from, number, module, args]
  [
    [-1, 1, Conv, [64, 6, 2, 2]], # 0-P1/2
    [-1, 1, Conv, [128, 3, 2]], # 1-P2/4
    [-1, 3, C3_PPA, [128]],
    [-1, 1, Conv, [256, 3, 2]], # 3-P3/8
    [-1, 6, C3_PPA, [256]],
    [-1, 1, Conv, [512, 3, 2]], # 5-P4/16
    [-1, 9, C3_PPA, [512]],
    [-1, 1, Conv, [1024, 3, 2]], # 7-P5/32
    [-1, 3, C3_PPA, [1024]],
    [-1, 1, SPPF, [1024, 5]], # 9
  ]

# YOLOv5 v6.0 head
head: [
    [-1, 1, Conv, [512, 1, 1]],
    [-1, 1, nn.Upsample, [None, 2, "nearest"]],
    [[-1, 6], 1, Concat, [1]], # cat backbone P4
    [-1, 3, C3, [512, False]], # 13

    [-1, 1, Conv, [256, 1, 1]],
    [-1, 1, nn.Upsample, [None, 2, "nearest"]],
    [[-1, 4], 1, Concat, [1]], # cat backbone P3
    [-1, 3, C3, [256, False]], # 17 (P3/8-small)

    [-1, 1, Conv, [256, 3, 2]],
    [[-1, 14], 1, Concat, [1]], # cat head P4
    [-1, 3, C3, [512, False]], # 20 (P4/16-medium)

    [-1, 1, Conv, [512, 3, 2]],
    [[-1, 10], 1, Concat, [1]], # cat head P5
    [-1, 3, C3, [1024, False]], # 23 (P5/32-large)

    [[17, 20, 23], 1, Detect, [nc, anchors]], # Detect(P3, P4, P5)
  ]
```
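The "from" column in the yaml decides which earlier layers feed each module, and the Concat rows are where the head picks up backbone features. The sketch below is a simplified, hypothetical re-creation of that bookkeeping (it is not the YOLOv5 parser): `propagate_channels` tracks only output channels at width_multiple 1.0, which is enough to check that the layer indices in the head line up.

```python
def propagate_channels(layers):
    """Return the output channel count of every layer in a simplified spec.

    Each entry is (from, kind, c2). Hypothetical helper: c2 is ignored for
    'Upsample' and 'Concat', whose channels are derived from their inputs.
    """
    ch = []
    for f, kind, c2 in layers:
        if kind == "Concat":
            c2 = sum(ch[x] for x in f)  # concatenation adds channels
        elif kind == "Upsample":
            c2 = ch[f]  # resolution changes, channels do not
        ch.append(c2)
    return ch


layers = [
    (-1, "Conv", 64), (-1, "Conv", 128), (-1, "C3_PPA", 128),
    (-1, "Conv", 256), (-1, "C3_PPA", 256), (-1, "Conv", 512),
    (-1, "C3_PPA", 512), (-1, "Conv", 1024), (-1, "C3_PPA", 1024),
    (-1, "SPPF", 1024),  # layers 0-9: backbone
    (-1, "Conv", 512), (-1, "Upsample", None), ([-1, 6], "Concat", None), (-1, "C3", 512),  # 10-13
    (-1, "Conv", 256), (-1, "Upsample", None), ([-1, 4], "Concat", None), (-1, "C3", 256),  # 14-17
    (-1, "Conv", 256), ([-1, 14], "Concat", None), (-1, "C3", 512),  # 18-20
    (-1, "Conv", 512), ([-1, 10], "Concat", None), (-1, "C3", 1024),  # 21-23
]

ch = propagate_channels(layers)
concat_channels = [ch[i] for i in (12, 16, 19, 22)]
detect_inputs = [ch[i] for i in (17, 20, 23)]
print(concat_channels)
print(detect_inputs)
```

Because layers 4 and 6 are now C3_PPA blocks, the head concatenates their outputs directly. If you insert or remove backbone layers, the indices in the Concat and Detect rows need to be shifted accordingly.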

- Instance segmentation yaml file

```yaml
# Ultralytics YOLOv5 🚀, AGPL-3.0 license

# Parameters
nc: 80 # number of classes
depth_multiple: 1.0 # model depth multiple
width_multiple: 1.0 # layer channel multiple
anchors:
  - [10, 13, 16, 30, 33, 23] # P3/8
  - [30, 61, 62, 45, 59, 119] # P4/16
  - [116, 90, 156, 198, 373, 326] # P5/32

# YOLOv5 v6.0 backbone
backbone:
  # [from, number, module, args]
  [
    [-1, 1, Conv, [64, 6, 2, 2]], # 0-P1/2
    [-1, 1, Conv, [128, 3, 2]], # 1-P2/4
    [-1, 3, C3_PPA, [128]],
    [-1, 1, Conv, [256, 3, 2]], # 3-P3/8
    [-1, 6, C3_PPA, [256]],
    [-1, 1, Conv, [512, 3, 2]], # 5-P4/16
    [-1, 9, C3_PPA, [512]],
    [-1, 1, Conv, [1024, 3, 2]], # 7-P5/32
    [-1, 3, C3_PPA, [1024]],
    [-1, 1, SPPF, [1024, 5]], # 9
  ]

# YOLOv5 v6.0 head
head: [
    [-1, 1, Conv, [512, 1, 1]],
    [-1, 1, nn.Upsample, [None, 2, "nearest"]],
    [[-1, 6], 1, Concat, [1]], # cat backbone P4
    [-1, 3, C3, [512, False]], # 13

    [-1, 1, Conv, [256, 1, 1]],
    [-1, 1, nn.Upsample, [None, 2, "nearest"]],
    [[-1, 4], 1, Concat, [1]], # cat backbone P3
    [-1, 3, C3, [256, False]], # 17 (P3/8-small)

    [-1, 1, Conv, [256, 3, 2]],
    [[-1, 14], 1, Concat, [1]], # cat head P4
    [-1, 3, C3, [512, False]], # 20 (P4/16-medium)

    [-1, 1, Conv, [512, 3, 2]],
    [[-1, 10], 1, Concat, [1]], # cat head P5
    [-1, 3, C3, [1024, False]], # 23 (P5/32-large)

    [[17, 20, 23], 1, Segment, [nc, anchors, 32, 256]], # Segment (P3, P4, P5)
  ]
```

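2.4 Registering the module

Key step 3: in the parse_model function of yolo.py, add C3_PPA to the module lists alongside C3 so that it is parsed the same way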
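Why registration matters can be seen in a simplified model of what the parser does with one yaml row. The sketch below is a hypothetical re-creation, not the code in yolo.py: `resolve_args` and its `repeat_modules` parameter are illustrative names, and the exact module sets in your YOLOv5 version may differ. It assumes C3_PPA is treated like C3, meaning the input channels are prepended and the repeat count n is passed into the block.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def resolve_args(module, n, args, c1, gd=1.0, gw=1.0, repeat_modules=("C3", "C3_PPA")):
    """Simplified, hypothetical version of the argument handling for one yaml row."""
    n = max(round(n * gd), 1) if n > 1 else n  # scale depth by depth_multiple
    c2 = make_divisible(args[0] * gw, 8)  # scale width by width_multiple
    resolved = [c1, c2, *args[1:]]
    if module in repeat_modules:
        resolved.insert(2, n)  # the block builds its own n inner modules
    return resolved


row_c3_ppa = resolve_args("C3_PPA", 3, [128], c1=128)
row_c3 = resolve_args("C3", 3, [512, False], c1=1024)
row_conv = resolve_args("Conv", 1, [64, 6, 2, 2], c1=3)
print(row_c3_ppa, row_c3, row_conv)
```

Under these assumptions the resolved arguments take the form [c1, c2, n], which is what the C3_PPA constructor expects as its first three parameters.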

2.5 Running the program

In train.py, set the cfg argument to the path of yolov5_C3_PPA.yaml.

An absolute path is recommended so that the file is always found.
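A minimal sketch of what that setting can look like, assuming train.py exposes the model config as a `--cfg` command-line argument. The path used here is a hypothetical placeholder; replace it with the real location of the yaml file on your machine.

```python
import argparse
from pathlib import Path

# Hypothetical location; point this at your own copy of the yaml file.
CFG_PATH = Path("yolov5/models/yolov5_C3_PPA.yaml").resolve()

parser = argparse.ArgumentParser()
parser.add_argument("--cfg", type=str, default=str(CFG_PATH), help="model.yaml path")

# An empty argument list means "use the defaults", as when no flags are passed.
opt = parser.parse_args([])
print(opt.cfg)
```

Resolving the path once up front means the script behaves the same no matter which directory it is launched from.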

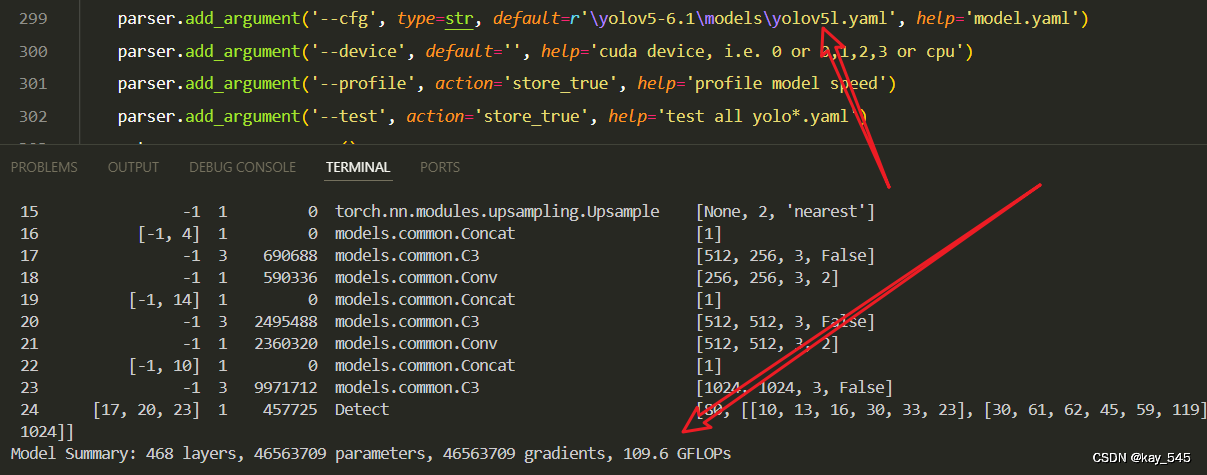

🚀 Run the program. If output like the following appears, the module was added successfully 🚀

```
                 from  n    params  module                                  arguments
  0                -1  1      7040  models.common.Conv                      [3, 64, 6, 2, 2]
  1                -1  1     73984  models.common.Conv                      [64, 128, 3, 2]
  2                -1  3    444658  models.common.C3_PPA                    [128, 128, 3]
  3                -1  1    295424  models.common.Conv                      [128, 256, 3, 2]
  4                -1  6   3399024  models.common.C3_PPA                    [256, 256, 6]
  5                -1  1   1180672  models.common.Conv                      [256, 512, 3, 2]
  6                -1  9  20058280  models.common.C3_PPA                    [512, 512, 9]
  7                -1  1   4720640  models.common.Conv                      [512, 1024, 3, 2]
  8                -1  3  28098360  models.common.C3_PPA                    [1024, 1024, 3]
  9                -1  1   2624512  models.common.SPPF                      [1024, 1024, 5]
 10                -1  1    525312  models.common.Conv                      [1024, 512, 1, 1]
 11                -1  1         0  torch.nn.modules.upsampling.Upsample    [None, 2, 'nearest']
 12           [-1, 6]  1         0  models.common.Concat                    [1]
 13                -1  3   2757632  models.common.C3                        [1024, 512, 3, False]
 14                -1  1    131584  models.common.Conv                      [512, 256, 1, 1]
 15                -1  1         0  torch.nn.modules.upsampling.Upsample    [None, 2, 'nearest']
 16           [-1, 4]  1         0  models.common.Concat                    [1]
 17                -1  3    690688  models.common.C3                        [512, 256, 3, False]
 18                -1  1    590336  models.common.Conv                      [256, 256, 3, 2]
 19          [-1, 14]  1         0  models.common.Concat                    [1]
 20                -1  3   2495488  models.common.C3                        [512, 512, 3, False]
 21                -1  1   2360320  models.common.Conv                      [512, 512, 3, 2]
 22          [-1, 10]  1         0  models.common.Concat                    [1]
 23                -1  3   9971712  models.common.C3                        [1024, 1024, 3, False]
 24      [17, 20, 23]  1    457725  Detect                                  [80, [[10, 13, 16, 30, 33, 23], [30, 61, 62, 45, 59, 119], [116, 90, 156, 198, 373, 326]], [256, 512, 1024]]

YOLOv5_C3_PPA summary: 956 layers, 80883391 parameters, 80883391 gradients
```

3. Complete code

https://pan.baidu.com/s/1W9R3m-neEw0KWxJVhVXQhQ?pwd=jjk9 (extraction code: jjk9)

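4. GFLOPs

For how GFLOPs are calculated, see the article 百面算法工程师 | 卷积基础知识 (Convolution basics).

The GFLOPs before and after the improvement are not reported here. I have no GPU available at the moment, so readers who need the numbers should measure them themselves.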
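For a rough sense of how such numbers arise, the sketch below estimates the parameters and FLOPs of a single Conv layer by hand. It is an approximation under stated assumptions, not a measurement of the model: it assumes a bias-free convolution followed by BatchNorm, a 640x640 input, and the convention that one multiply-add counts as 2 FLOPs (some tools report multiply-adds instead). The helper names are hypothetical.

```python
def conv_bn_params(c1, c2, k):
    """Parameters of a bias-free k x k convolution plus BatchNorm weight and bias."""
    return c1 * c2 * k * k + 2 * c2


def conv_flops(c1, c2, k, h_out, w_out):
    """Approximate FLOPs of the convolution alone (one multiply-add = 2 FLOPs)."""
    return 2 * c1 * c2 * k * k * h_out * w_out


# Layer 0 of the yaml: Conv [64, 6, 2, 2] on a 3-channel input.
# With kernel 6, stride 2, padding 2, an assumed 640x640 input becomes 320x320.
params_layer0 = conv_bn_params(3, 64, 6)
flops_layer0 = conv_flops(3, 64, 6, 320, 320)
print(params_layer0)
print(flops_layer0 / 1e9, "GFLOPs")
```

The parameter count can be checked against the per-layer figures that YOLOv5 prints when it builds the model. For the full network, a profiling tool run on your own hardware is the reliable way to get the GFLOPs.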

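5. Going further

This change can be combined with loss-function or convolution-module modifications for multiple improvements.

YOLOv5 improvements | Loss functions | EIoU, SIoU, WIoU, DIoU, FocuSIoU and other loss functions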

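6. Summary

The PPA (Parallelized Patch-Aware Attention) module captures multi-scale features of the target through a parallel multi-branch extraction strategy. Its local and global branches extract local and global features at different scales, and a serial convolution branch processes the features further to strengthen detail. The module then applies channel attention and spatial attention for adaptive feature fusion and enhancement, selecting the most discriminative channels and highlighting target regions, which improves detection accuracy for infrared small targets in complex backgrounds. By combining multi-branch feature extraction with attention, PPA reduces information loss and preserves key features, improving the accuracy and robustness of small object detection.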
