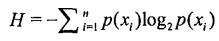

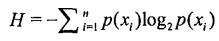

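The basic idea of a decision tree can be summarized as follows: compute the information gain of each attribute, choose the attribute with the largest gain as the current node, and repeat on each branch until the whole tree is built. Information gain is defined in terms of entropy, so the entropy of the data set has to be computed first. For a data set in which class $i$ makes up a fraction $p_i$ of the samples, the Shannon entropy is:

$$H = -\sum_i p_i \log_2 p_i$$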

Create the data set:

    def createDataSet():
        # Each row: two binary features followed by the class label.
        dataSet = [[1, 1, 'yes'],
                   [1, 1, 'yes'],
                   [1, 0, 'no'],
                   [0, 1, 'no'],
                   [0, 1, 'no']]
        labels = ['no surfacing', 'flippers']  # feature names
        return dataSet, labels

Code for computing the entropy:

    from math import log

    def calcShannonEnt(dataSet):
        numEntries = len(dataSet)
        print('total numEntries = %d' % numEntries)
        # Count how many times each class label (last column) occurs.
        labelCounts = {}
        for featVec in dataSet:
            currentLabel = featVec[-1]
            if currentLabel not in labelCounts:
                labelCounts[currentLabel] = 0
            labelCounts[currentLabel] += 1
        for key in labelCounts:
            print('%s : %d' % (key, labelCounts[key]))
        # Shannon entropy: -sum(p * log2(p)) over all labels.
        shannonEnt = 0.0
        for key in labelCounts:
            prob = float(labelCounts[key]) / numEntries
            shannonEnt -= prob * log(prob, 2)
        print('shannonEnt = %s' % shannonEnt)
        return shannonEnt

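`labelCounts` is a dictionary that stores how often each label occurs: the key is the label and the value is its count. The first `for` loop counts the labels, the second prints the dictionary, and the function returns the entropy.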

    myDat, labels = createDataSet()
    shannonEnt = calcShannonEnt(myDat)

The result is:

    numEntries = 5
    yes : 2
    no : 3
    shannonEnt = 0.970950594455

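The higher the entropy, the more mixed the data set is (adding more distinct labels generally makes it more mixed). Changing a label value shows how the entropy responds: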

    myDat[0][-1] = 'maybe'
    shannonEnt = calcShannonEnt(myDat)

Output:

    numEntries = 5
    maybe : 1
    yes : 1
    no : 3
    shannonEnt = 1.37095059445
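
The two entropy values above can be checked directly from the label counts. The following is a minimal sketch, assuming Python 3; the helper name `entropy` and the two label lists are introduced here for illustration and are not part of the original code.

```python
from collections import Counter
from math import log2

def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())

# Label columns of the data set before and after changing one label to 'maybe'.
two_classes = ['yes', 'yes', 'no', 'no', 'no']
three_classes = ['maybe', 'yes', 'no', 'no', 'no']

print(round(entropy(two_classes), 4))    # 0.971
print(round(entropy(three_classes), 4))  # 1.371
```

Splitting the two `yes` rows into one `yes` and one `maybe` adds a third class, which is why the entropy rises.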

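Once the entropy is available, the information gain of each attribute can be computed. The attribute with the largest information gain becomes the current split node, and the process repeats until classification is complete.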

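The parameters of `splitDataSet` are: `dataSet`, the input data set, including the label column; `axis`, the index of the element in each row, i.e. which feature to test; and `value`, the feature value to match.

What the function does: it finds every row whose element at index `axis` equals `value`, removes that element, and returns the resulting rows.

When the data is split on a feature value, all matching rows have to be extracted so that the information gain can be computed on them.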

    def splitDataSet(dataSet, axis, value):
        retDataSet = []
        for featVec in dataSet:
            if featVec[axis] == value:
                # Keep the row but drop the column that was matched.
                reducedFeatVec = featVec[:axis]
                reducedFeatVec.extend(featVec[axis+1:])
                retDataSet.append(reducedFeatVec)
        print('retDataSet = %s' % retDataSet)
        return retDataSet

For example:

    splitDataSet(myDat, 0, 1)

Result:

    dataSet = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
    retDataSet = [[1, 'yes'], [1, 'yes'], [0, 'no']]

And:

    splitDataSet(myDat, 1, 1)

Result:

    dataSet = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
    retDataSet = [[1, 'yes'], [1, 'yes'], [0, 'no'], [0, 'no']]
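
The same row selection can be written as a single list comprehension. This is only a sketch, assuming Python 3; `split_rows` is a hypothetical name, and unlike `splitDataSet` it prints nothing itself.

```python
def split_rows(rows, axis, value):
    """Rows whose column `axis` equals `value`, with that column removed."""
    return [row[:axis] + row[axis + 1:] for row in rows if row[axis] == value]

rows = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]

print(split_rows(rows, 0, 1))  # [[1, 'yes'], [1, 'yes'], [0, 'no']]
print(split_rows(rows, 0, 0))  # [[1, 'no'], [1, 'no']]
```

Slicing with `row[:axis] + row[axis + 1:]` builds a new list for every row, so the input rows are left unchanged.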

To make the calculation easier to follow, I created a new data set for computing the information gain:

    def createDataSet_me():
        # Columns: weather, how busy, gender, label (go on a trip or not).
        dataSet = [['sunny', 'busy', 'male', 'no'],
                   ['rainy', 'not busy', 'female', 'no'],
                   ['cloudy', 'relax', 'male', 'maybe'],
                   ['sunny', 'relax', 'male', 'yes'],
                   ['cloudy', 'not busy', 'male', 'maybe'],
                   ['sunny', 'not busy', 'female', 'yes']]
        return dataSet

The data describes whether someone goes out on a trip, based on the weather, how busy they are, and their gender. The code for computing the information gain is as follows:

    def chooseBestFeatureToSplit(dataSet):
        numFeatures = len(dataSet[0]) - 1  # the last column is the label
        print('numFeatures = %d' % numFeatures)
        baseEntropy = calcShannonEnt(dataSet)
        print('the baseEntropy is : %s' % baseEntropy)
        bestInfoGain = 0.0
        bestFeature = 0
        for i in range(numFeatures):
            print('in feature %d' % i)
            featList = [example[i] for example in dataSet]
            print('in feature %d,value List : %s' % (i, featList))
            uniqueVals = set(featList)
            print('uniqueVals: %s' % uniqueVals)
            newEntropy = 0.0
            for value in uniqueVals:
                subDataSet = splitDataSet(dataSet, i, value)
                prob = len(subDataSet) / float(len(dataSet))
                # Weight each subset's entropy by its share of the rows.
                newEntropy += prob * calcShannonEnt(subDataSet)
            print('\tnewEntropy of feature %d is : %s' % (i, newEntropy))
            infoGain = baseEntropy - newEntropy
            print('\tinfoGain : %s' % infoGain)
            if infoGain > bestInfoGain:
                bestInfoGain = infoGain
                bestFeature = i
        print('bestFeature: %d' % bestFeature)
        return bestFeature

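First the number of attributes is obtained; the last column of the data set is the label, hence the `- 1`.

The nested `for` loops compute the information gain.

The outer `for` loop iterates over all features. The statement `featList = [example[i] for example in dataSet]` collects every value of the current attribute, and `set` removes duplicates so that no value is processed twice.

The inner `for` loop iterates over the distinct values of the current attribute. It computes the entropy of the subset selected by each value and adds these up, weighting each by the fraction of rows in that subset. The difference between the original entropy and this weighted sum is the information gain.

The larger the information gain, the better the feature separates the classes, so the current node should split on that attribute.

The function returns the index of the attribute to split on.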
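
In symbols, the quantity computed by the two loops can be written as below. The notation is introduced here for illustration and does not appear in the code: $D$ is the data set, $A$ an attribute, and $D_v$ the subset of rows in which $A$ takes the value $v$.

$$\mathrm{Gain}(D, A) = H(D) - \sum_{v \in \mathrm{values}(A)} \frac{|D_v|}{|D|}\, H(D_v)$$

Here $H(D)$ corresponds to `baseEntropy`, the fraction $|D_v|/|D|$ to `prob`, and the whole sum to `newEntropy`.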

A simple experiment:

    DataSet_me = createDataSet_me()
    bestFeature = chooseBestFeatureToSplit(DataSet_me)

Output:

    in feature 0
    in feature 0,value List : ['sunny', 'rainy', 'cloudy', 'sunny', 'cloudy', 'sunny']
    uniqueVals: set(['rainy', 'sunny', 'cloudy'])
        newEntropy of feature 0 is : 0.459147917027
        infoGain : 1.12581458369
    in feature 1
    in feature 1,value List : ['busy', 'not busy', 'relax', 'relax', 'not busy', 'not busy']
    uniqueVals: set(['not busy', 'busy', 'relax'])
        newEntropy of feature 1 is : 1.12581458369
        infoGain : 0.459147917027
    in feature 2
    in feature 2,value List : ['male', 'female', 'male', 'male', 'male', 'female']
    uniqueVals: set(['male', 'female'])
        newEntropy of feature 2 is : 1.33333333333
        infoGain : 0.251629167388
    bestFeature: 0

Attribute 0 has the largest information gain, so it is the best attribute to split on.
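
The three gains reported above can be reproduced without the debugging output. This is a minimal sketch, assuming Python 3; `entropy` and `info_gain` are hypothetical helper names, and the rows are copied from `createDataSet_me`.

```python
from collections import Counter
from math import log2

def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())

def info_gain(rows, axis):
    """Entropy of all labels minus the weighted entropy after splitting on `axis`."""
    groups = {}
    for row in rows:
        groups.setdefault(row[axis], []).append(row[-1])  # labels per feature value
    remainder = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return entropy([row[-1] for row in rows]) - remainder

rows = [['sunny', 'busy', 'male', 'no'],
        ['rainy', 'not busy', 'female', 'no'],
        ['cloudy', 'relax', 'male', 'maybe'],
        ['sunny', 'relax', 'male', 'yes'],
        ['cloudy', 'not busy', 'male', 'maybe'],
        ['sunny', 'not busy', 'female', 'yes']]

gains = [info_gain(rows, i) for i in range(3)]
print([round(g, 4) for g in gains])  # [1.1258, 0.4591, 0.2516]
print(gains.index(max(gains)))       # 0
```

Weather wins because two of its three values (`rainy` and `cloudy`) select subsets with a single label, so only the `sunny` subset contributes any remaining entropy.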

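Once we know how to find the best attribute for a split, the tree can be built by applying that function recursively. The recursion stops when: 1) all attributes have been used for splitting; or 2) every instance in the branch has the same label.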

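The function `majorityCnt` handles the case where all attributes have been used but the labels in the branch are still not unique; a majority vote then decides the label.

For example, suppose the data set above also contained the following rows:

    ['sunny', 'busy', 'male', 'no']
    ['sunny', 'busy', 'male', 'no']
    ['sunny', 'busy', 'male', 'no']
    ['sunny', 'busy', 'male', 'yes']

In that case a majority vote is needed to decide the label.

The input parameter `classList` holds all the labels of the data set. `sorted` orders the dictionary entries by count in descending order, and the function returns the label that occurs most often.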

    import operator

    def majorityCnt(classList):
        # Tally how many times each label occurs.
        classCount = {}
        for vote in classList:
            if vote not in classCount:
                classCount[vote] = 0
            classCount[vote] += 1
        # Sort (label, count) pairs by count, largest first.
        sortedClassCount = sorted(classCount.items(), key=operator.itemgetter(1), reverse=True)
        return sortedClassCount[0][0]
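
The same majority vote can also be expressed with `collections.Counter` from the standard library. This is only an alternative sketch, assuming Python 3; `majority_label` is a hypothetical name and not part of the original code.

```python
from collections import Counter

def majority_label(labels):
    """Return the label that occurs most often in `labels`."""
    return Counter(labels).most_common(1)[0][0]

# Labels of the four identical 'sunny, busy, male' rows from the example above.
print(majority_label(['no', 'no', 'no', 'yes']))  # no
```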

To make testing easier, a new data set is created as follows:

    def createDataSet2():
        dataSet = [['sunny', 'busy', 'male', 'no'],
                   ['sunny', 'busy', 'male', 'no'],
                   ['sunny', 'busy', 'female', 'yes'],
                   ['rainy', 'not busy', 'female', 'no'],
                   ['cloudy', 'relax', 'male', 'maybe'],
                   ['sunny', 'relax', 'male', 'yes'],
                   ['cloudy', 'not busy', 'male', 'maybe'],
                   ['sunny', 'not busy', 'female', 'yes']]
        features = ['weather', 'busy or not', 'gender']
        return dataSet, features

`features` holds the names of the corresponding attributes.

The code below builds the decision tree from a data set and the list of attribute names:

    def createTree(dataSet, labels):
        classList = [example[-1] for example in dataSet]
        print('classList: %s' % classList)
        # Stop when every instance in this branch has the same label.
        if classList.count(classList[0]) == len(classList):
            return classList[0]
        # Stop when no attributes are left; fall back to a majority vote.
        if len(dataSet[0]) == 1:
            return majorityCnt(classList)
        bestFeat = chooseBestFeatureToSplit(dataSet)
        bestFeatLabel = labels[bestFeat]
        myTree = {bestFeatLabel: {}}
        # Build a new name list instead of deleting from the caller's list.
        subLabels = labels[:bestFeat] + labels[bestFeat+1:]
        featValues = [example[bestFeat] for example in dataSet]
        uniqueVals = set(featValues)
        for value in uniqueVals:
            myTree[bestFeatLabel][value] = createTree(splitDataSet(dataSet, bestFeat, value), subLabels)
        print('myTree = %s' % myTree)
        return myTree

The output is:

    myTree = {'weather': {'rainy': 'no', 'sunny': {'busy or not': {'not busy': 'yes', 'busy': {'gender': {'male': 'no', 'female': 'yes'}}, 'relax': 'yes'}}, 'cloudy': 'maybe'}}
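
For readers who want to reproduce this tree in one go, the whole procedure fits in a short script. The following is a compact sketch, assuming Python 3 and without the debugging prints; the names `entropy`, `split`, `best_feature` and `build_tree` are hypothetical stand-ins for the functions in this post, and ties in information gain are resolved in favor of the earlier attribute.

```python
from collections import Counter
from math import log2

def entropy(rows):
    """Shannon entropy (bits) of the label column (last element of each row)."""
    counts = Counter(row[-1] for row in rows)
    return -sum(n / len(rows) * log2(n / len(rows)) for n in counts.values())

def split(rows, axis, value):
    """Rows where column `axis` equals `value`, with that column removed."""
    return [row[:axis] + row[axis + 1:] for row in rows if row[axis] == value]

def best_feature(rows):
    """Index of the feature with the largest information gain (first wins ties)."""
    base, best_gain, best_axis = entropy(rows), 0.0, 0
    for axis in range(len(rows[0]) - 1):
        remainder = 0.0
        for v in dict.fromkeys(row[axis] for row in rows):  # unique values, first-seen order
            subset = split(rows, axis, v)
            remainder += len(subset) / len(rows) * entropy(subset)
        if base - remainder > best_gain:
            best_gain, best_axis = base - remainder, axis
    return best_axis

def build_tree(rows, names):
    labels = [row[-1] for row in rows]
    if len(set(labels)) == 1:      # every instance has the same label
        return labels[0]
    if len(rows[0]) == 1:          # no attributes left: majority vote
        return Counter(labels).most_common(1)[0][0]
    axis = best_feature(rows)
    rest = names[:axis] + names[axis + 1:]
    return {names[axis]: {v: build_tree(split(rows, axis, v), rest)
                          for v in dict.fromkeys(row[axis] for row in rows)}}

rows = [['sunny', 'busy', 'male', 'no'],
        ['sunny', 'busy', 'male', 'no'],
        ['sunny', 'busy', 'female', 'yes'],
        ['rainy', 'not busy', 'female', 'no'],
        ['cloudy', 'relax', 'male', 'maybe'],
        ['sunny', 'relax', 'male', 'yes'],
        ['cloudy', 'not busy', 'male', 'maybe'],
        ['sunny', 'not busy', 'female', 'yes']]

tree = build_tree(rows, ['weather', 'busy or not', 'gender'])
print(tree)
```

Within the `sunny` branch, `busy or not` and `gender` give the same information gain on this data, so the choice between them depends only on the tie-breaking rule; picking the earlier attribute yields the tree shown above.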

The `classify` function below classifies a given test vector:

    def classify(inputTree, featLabels, testVec):
        print('featLabels: %s' % featLabels)
        print('testVec: %s' % testVec)
        firstStr = next(iter(inputTree))  # the attribute tested at this node
        print('firstStr: %s' % firstStr)
        secondDict = inputTree[firstStr]
        print('secondDict: %s' % secondDict)
        featIndex = featLabels.index(firstStr)
        key = testVec[featIndex]
        valueOfFeat = secondDict[key]
        if isinstance(valueOfFeat, dict):
            # Still a subtree: keep descending.
            classLabel = classify(valueOfFeat, featLabels, testVec)
        else:
            classLabel = valueOfFeat
        print('classLabel: %s' % classLabel)
        return classLabel

`classify` is also recursive: it tests the input attribute values against the tree one node at a time until it reaches the final label.

For example:

    feature_label = ['weather', 'gender', 'busy or not']
    test_vector = ['rainy', 'female', 'busy']
    classify(MyTree, feature_label, test_vector)  # MyTree: the tree returned by createTree

Output:

    featLabels: ['weather', 'gender', 'busy or not']
    testVec: ['rainy', 'female', 'busy']
    firstStr: weather
    secondDict: {'rainy': 'no', 'sunny': {'busy or not': {'not busy': 'yes', 'busy': {'gender': {'male': 'no', 'female': 'yes'}}, 'relax': 'yes'}}, 'cloudy': 'maybe'}
    classLabel: no
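
The `rainy` example stops at the first node. To see the descent through several levels, the lookup can be tried on a `sunny` vector. This is a minimal sketch, assuming Python 3; it uses an iterative variant named `classify_iter` (a hypothetical name) and the tree literal printed earlier.

```python
def classify_iter(tree, feature_names, sample):
    """Walk the nested-dict tree until a leaf (a non-dict value) is reached."""
    node = tree
    while isinstance(node, dict):
        feature = next(iter(node))                    # the single key names the attribute tested here
        value = sample[feature_names.index(feature)]  # the sample's value for that attribute
        node = node[feature][value]                   # raises KeyError for a value absent from the tree
    return node

tree = {'weather': {'rainy': 'no',
                    'sunny': {'busy or not': {'not busy': 'yes',
                                              'busy': {'gender': {'male': 'no', 'female': 'yes'}},
                                              'relax': 'yes'}},
                    'cloudy': 'maybe'}}
names = ['weather', 'gender', 'busy or not']

print(classify_iter(tree, names, ['rainy', 'female', 'busy']))  # no
print(classify_iter(tree, names, ['sunny', 'female', 'busy']))  # yes
print(classify_iter(tree, names, ['sunny', 'male', 'busy']))    # no
```

Because each attribute is looked up by name, the order of `names` only has to match the order of the test vector, not the column order of the training data.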
