PyTorch Linear Regression Code Implementation (Self-Study Notes)

Basic framework

- Prepare the dataset: Dataset and DataLoader
- Design the model using a class that inherits from nn.Module
- Construct the loss and optimizer using the PyTorch API
- Training cycle: forward, backward, update

Code and detailed explanation

# Import modules
import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
from torchvision import datasets, transforms

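#================== Initialization ==================================
batch_size = 200      # mini-batch size: each batch holds 200 samples (drawn in random order when shuffle=True)
learning_rate = 0.01  # learning rate, a hyperparameter
epochs = 10           # number of epochs; one epoch is one full pass over the training data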
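To make the relationship between `batch_size` and `epochs` concrete, here is a small sketch of the arithmetic. It assumes the standard MNIST training split of 60,000 images, a figure the notes do not state; the variable names other than `batch_size` and `epochs` are illustrative.

```python
import math

batch_size = 200
epochs = 10
num_train_samples = 60_000  # assumption: size of the standard MNIST training split

# One iteration processes one mini-batch; one epoch visits every sample once.
iterations_per_epoch = math.ceil(num_train_samples / batch_size)
total_iterations = iterations_per_epoch * epochs

print(iterations_per_epoch, total_iterations)
```

Under that assumption, each epoch performs 300 parameter updates, and the full run performs 3,000.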

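#================= Load data ========================================
# Load the training data (prepare dataset: Dataset and DataLoader)
# 1. train=True selects the training split
# 2. transforms.ToTensor() converts a 0-255 image into a float tensor in [0, 1]
# 3. transforms.Normalize((0.1307,), (0.3081,)) standardizes that tensor with the MNIST mean 0.1307 and standard deviation 0.3081, i.e. (x - 0.1307) / 0.3081; the result has roughly zero mean and unit variance and is not confined to [-1, 1]
# 4. shuffle=True: the training data should be shuffled
#================= Training data ====================================
train_loader = torch.utils.data.DataLoader(
    datasets.MNIST('./data', train=True, download=True,
                   transform=transforms.Compose([
                       transforms.ToTensor(),
                       transforms.Normalize((0.1307,), (0.3081,))
                   ])),
    batch_size=batch_size, shuffle=True)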

#====================== Test data ===================================
test_loader = torch.utils.data.DataLoader(
    datasets.MNIST('./data', train=False, transform=transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.1307,), (0.3081,))
    ])),
    batch_size=batch_size, shuffle=True)  # shuffle=True shuffles the data; for evaluation the order does not affect the result
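To see what the two transforms do to pixel values, the sketch below applies the normalization formula by hand to the extremes of the [0, 1] range. It uses plain tensor arithmetic rather than torchvision so that it runs without downloading anything; `pixels` and `normalized` are illustrative names.

```python
import torch

mean, std = 0.1307, 0.3081  # the values passed to transforms.Normalize above

# Pixel values after ToTensor() lie in [0, 1]; take the two extremes and the mean.
pixels = torch.tensor([0.0, mean, 1.0])
normalized = (pixels - mean) / std  # the per-channel formula Normalize applies

print(normalized)
```

A black pixel maps to about -0.42 and a white pixel to about 2.82, so the output is centered near zero but not limited to a fixed interval.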

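#========================= Dataset and DataLoader explained ===================
'''
1. **from torch.utils.data import Dataset**
   Dataset is an abstract class. A subclass must implement:
       __init__(self)
       __getitem__(self, index)
       __len__(self)
   (torchvision.datasets, imported above, is a module of ready-made Dataset subclasses such as MNIST.)
   For example:

   class DiabetesDataset(Dataset):
       def __init__(self):
           pass
       def __getitem__(self, index):
           pass
       def __len__(self):
           pass

   dataset = DiabetesDataset()  # instantiate the class

2. **torch.utils.data.DataLoader**
   This is what loads data in batches in PyTorch.

   train_loader = DataLoader(
       dataset=dataset,
       batch_size=32,
       shuffle=True,
       num_workers=2  # number of worker subprocesses; usually set to 4 or 8
   )

   With num_workers > 0 this raises an error on Windows, because Windows starts subprocesses with spawn instead of fork.
   Wrapping the loop in **if __name__ == '__main__':** solves the multiprocessing problem:

   if __name__ == '__main__':
       for epoch in range(100):
           for i, data in enumerate(train_loader, 0):
               pass
'''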
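The three methods described above can be exercised on a tiny in-memory dataset. This is a minimal sketch: `ToyDataset` and its contents are hypothetical and made up for illustration, and `num_workers=0` is used so the data is loaded in the main process and the snippet behaves the same on every platform.

```python
import torch
from torch.utils.data import Dataset, DataLoader


class ToyDataset(Dataset):
    """Hypothetical in-memory dataset: 10 samples with 3 features each."""

    def __init__(self):
        self.x_data = torch.arange(30, dtype=torch.float32).view(10, 3)
        self.y_data = torch.arange(10, dtype=torch.float32).view(10, 1)

    def __getitem__(self, index):
        # Return one (features, label) pair.
        return self.x_data[index], self.y_data[index]

    def __len__(self):
        return self.x_data.shape[0]


dataset = ToyDataset()
# num_workers=0 loads data in the main process, so no __main__ guard is needed here.
loader = DataLoader(dataset=dataset, batch_size=4, shuffle=True, num_workers=0)

batch_shapes = [(inputs.shape, labels.shape) for inputs, labels in loader]
print(len(dataset), len(loader), batch_shapes)
```

Ten samples with a batch size of 4 give three batches, the last of which holds only the 2 remaining samples.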

##=============================== Design model using Class inherit from nn.Module=============================================

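class MLP(nn.Module):
    # The model subclasses nn.Module: the layers are declared in the __init__ constructor, and forward() wires them together, which is exactly the forward pass.
    def __init__(self):
        super(MLP, self).__init__()
        # Fully connected three-layer network. The input size of each layer must match the output size of the previous layer, and every layer is followed by an activation function.
        self.model = nn.Sequential(
            nn.Linear(784, 200),
            nn.ReLU(inplace=True),
            nn.Linear(200, 200),
            nn.ReLU(inplace=True),
            nn.Linear(200, 10),
            nn.ReLU(inplace=True),  # clamps the output logits to be non-negative
        )

    def forward(self, x):
        x = self.model(x)
        return x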
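A quick way to confirm that the layer sizes chain together is to push a dummy batch through the network and inspect the output shape. The sketch below rebuilds the same three layers as a bare `nn.Sequential` so it runs on its own; `dummy_batch` is random data standing in for MNIST images.

```python
import torch
import torch.nn as nn

# Same layer sizes as the MLP above, rebuilt here so the snippet runs on its own.
model = nn.Sequential(
    nn.Linear(784, 200), nn.ReLU(),
    nn.Linear(200, 200), nn.ReLU(),
    nn.Linear(200, 10), nn.ReLU(),
)

dummy_batch = torch.randn(5, 1, 28, 28)      # 5 fake MNIST-sized images
logits = model(dummy_batch.view(-1, 784))    # flatten, then run the forward pass

num_params = sum(p.numel() for p in model.parameters())
print(logits.shape, num_params)
```

Each image yields 10 scores, one per digit class, and the network holds 199,210 trainable parameters (weights plus biases of the three linear layers).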

#===================================== Instantiate the model ===================================

net = MLP()

#=========================================construct loss and optimizer using PyTorch API======================

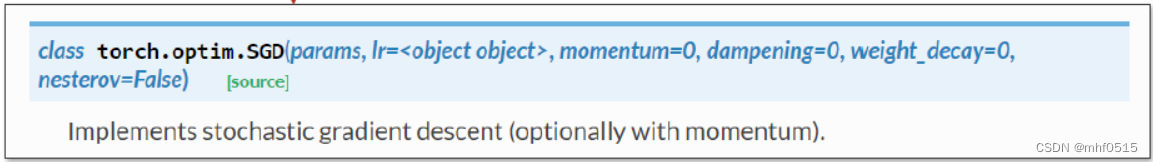

optimizer = optim.SGD(net.parameters(), lr=learning_rate)  # stochastic gradient descent
cri = nn.CrossEntropyLoss()  # cross-entropy loss
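`nn.CrossEntropyLoss` takes raw scores and integer class indices, and applies log-softmax internally before the negative log-likelihood. The sketch below checks that equivalence on random scores; the tensors are made up for illustration.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 10)           # raw scores for 4 samples and 10 classes
target = torch.tensor([3, 0, 9, 1])   # class indices, not one-hot vectors

loss = nn.CrossEntropyLoss()(logits, target)
# The same value, computed in two explicit steps.
manual = F.nll_loss(F.log_softmax(logits, dim=1), target)

print(loss.item(), manual.item())
```

Because the softmax is built into the loss, the model output should not be passed through a softmax layer first.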

#=======================================Training cycle forward ,backward,update==============================================

for epoch in range(epochs):
    for batch_idx, (data, target) in enumerate(train_loader):
        data = data.view(-1, 784)  # flatten each 1x28x28 image into a 784-element vector
        logits = net(data)
        loss = cri(logits, target)

        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        if batch_idx % 100 == 0:
            print(epoch, loss.item())
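The same forward, backward, update cycle applies to the linear regression named in the title. Here is a minimal sketch, assuming synthetic data drawn from the line y = 2x + 1 (made up for illustration) and full-batch updates rather than the mini-batches used above.

```python
import torch
import torch.nn as nn
import torch.optim as optim

torch.manual_seed(0)
# Synthetic data from the hypothetical line y = 2x + 1.
x = torch.linspace(-1, 1, 50).view(-1, 1)
y = 2 * x + 1

model = nn.Linear(1, 1)
criterion = nn.MSELoss()
optimizer = optim.SGD(model.parameters(), lr=0.1)

losses = []
for epoch in range(200):
    y_pred = model(x)              # forward
    loss = criterion(y_pred, y)
    optimizer.zero_grad()          # clear gradients from the previous step
    loss.backward()                # backward
    optimizer.step()               # update
    losses.append(loss.item())

print(losses[0], losses[-1], model.weight.item(), model.bias.item())
```

The learned weight and bias should approach 2 and 1, and the loss should fall well below its starting value.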

#=============================== Training explained =========================
```python
'''
for epoch in range(100):
    for i, data in enumerate(train_loader, 0):
        # 1. Prepare data
        inputs, labels = data  # data consists of the inputs (x) and the labels (y)
        # 2. Forward
        y_pred = model(inputs)  # feed x into the model to compute y_hat
        loss = criterion(y_pred, labels)  # compute the loss from y_hat and y with the loss function
        print(epoch, i, loss.item())
        # 3. Backward
        optimizer.zero_grad()  # reset the gradients to zero
        loss.backward()
        # 4. Update
        optimizer.step()
'''
```

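#==================== Test set ======================================
test_loss = 0
correct = 0
for data, target in test_loader:
    # 1. Load the test data
    data = data.view(-1, 784)
    # 2. Feed x into the model to compute y_hat
    logits = net(data)
    # 3. Compute the loss from y_hat and the labels y; it is a scalar
    test_loss = cri(logits, target).item() + test_loss
    # 4. Take the class with the largest score in y_hat
    pred = logits.data.max(1)[1]
    correct += pred.eq(target.data).sum().item()  # accumulate over batches

test_loss /= len(test_loader)  # cri returns the mean loss per batch, so average over the number of batches
print(test_loss, correct / len(test_loader.dataset))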
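Step 4 of the test loop picks the index of the largest score in each row and compares it with the labels. The sketch below walks through that on hand-written scores; the numbers are illustrative only and do not come from a trained model.

```python
import torch

# Hand-written scores for 3 samples and 4 classes (illustrative values only).
logits = torch.tensor([[0.1, 2.0, 0.3, 0.0],
                       [1.5, 0.2, 0.1, 0.4],
                       [0.0, 0.1, 0.2, 3.0]])
target = torch.tensor([1, 2, 3])

pred = logits.max(1)[1]                  # index of the largest score in each row
correct = pred.eq(target).sum().item()   # number of matching predictions
accuracy = correct / target.size(0)

print(pred.tolist(), correct, accuracy)
```

Here the second sample is misclassified (predicted class 0, label 2), so 2 of the 3 predictions are correct.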

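Supplement, unrelated to the example above:

import numpy as np
import torch
from torch.utils.data import Dataset

# The dataset is small, so all of it is loaded into memory
class DiabetesDataset(Dataset):
    def __init__(self, filepath):
        xy = np.loadtxt(filepath, delimiter=',', dtype=np.float32)
        self.len = xy.shape[0]  # xy.shape is (N, 9); shape[0] gives N
        self.x_data = torch.from_numpy(xy[:, :-1])  # all rows, every column except the last
        self.y_data = torch.from_numpy(xy[:, [-1]])  # all rows, last column only, kept 2-D with shape (N, 1)

    def __getitem__(self, index):
        return self.x_data[index], self.y_data[index]  # returns a tuple

    def __len__(self):
        return self.len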
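The two slices in the constructor behave differently in a way that is easy to miss: indexing with the list `[-1]` keeps the column axis, while a plain `-1` would drop it. The sketch below shows the resulting shapes on a stand-in array; the 4 x 9 array is made up and only mirrors the (N, 9) layout described above.

```python
import numpy as np

# Stand-in for the loaded file: 4 rows, 9 columns (8 features plus 1 label).
xy = np.arange(36, dtype=np.float32).reshape(4, 9)

features = xy[:, :-1]      # every column except the last
labels_2d = xy[:, [-1]]    # list index keeps the column axis
labels_1d = xy[:, -1]      # plain integer index drops it

print(features.shape, labels_2d.shape, labels_1d.shape)
```

Keeping the labels as an (N, 1) matrix means their shape lines up with the output of a model whose last layer has one unit.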
