Recommender System Project Basics (6): An LFM-Based Recommender System

From SVD to FunkSVD

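Traditional SVD factorizes a matrix into three matrices ($M = U\Sigma V^{T}$). It only works on a complete matrix, so the missing entries of a sparse rating matrix must be filled in first, and those filled-in values add noise to the original data.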
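To make the imputation problem concrete, here is a minimal NumPy sketch of the classic approach: fill each missing rating with its item's mean, run a full SVD, and keep the top k singular values. The function name svd_with_mean_imputation and the toy matrix R are illustrative assumptions, and column-mean filling is just one possible imputation choice.

```python
import numpy as np


def svd_with_mean_imputation(R, k):
    """Rank-k reconstruction of a rating matrix via classic SVD.

    R: 2-D array with np.nan marking missing ratings (illustrative input).
    Missing entries are filled with their column (item) mean first,
    because np.linalg.svd requires a complete matrix.
    """
    filled = R.copy()
    col_means = np.nanmean(R, axis=0)
    rows, cols = np.where(np.isnan(R))
    filled[rows, cols] = col_means[cols]  # imputed values: the injected "noise"
    U, s, Vt = np.linalg.svd(filled, full_matrices=False)
    # Keep only the k largest singular values: M ~= U_k * Sigma_k * V_k^T
    return U[:, :k] @ np.diag(s[:k]) @ Vt[:k, :]


# Toy 4 users x 4 items matrix (hypothetical data)
R = np.array([[5.0, 3.0, np.nan, 1.0],
              [4.0, np.nan, np.nan, 1.0],
              [1.0, 1.0, np.nan, 5.0],
              [np.nan, 1.0, 5.0, 4.0]])

approx = svd_with_mean_imputation(R, k=2)
print(np.round(approx, 2))
```

Note that the factorization is fitted to the imputed values as much as to the real ratings, which is exactly what FunkSVD avoids.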

FunkSVD

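FunkSVD factorizes the matrix into two matrices: a user-latent-factor matrix (User-LF) and a latent-factor-item matrix (LF-Item). FunkSVD is also called the original LFM (latent factor model). Its loss function is the sum of squared differences between the entries of the reconstructed matrix and the corresponding entries of the true rating matrix: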

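$$\sum_{i,j}\left(m_{ij}-q_j^{T}p_i\right)^2$$

where $m_{ij}$ is the rating user $i$ gave item $j$, $p_i$ is user $i$'s latent-factor vector, $q_j$ is item $j$'s latent-factor vector, and the sum runs only over the observed $(i, j)$ pairs, so no imputation is needed.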
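A minimal sketch of this loss, assuming a small dense NumPy matrix with np.nan marking missing ratings (the function name funk_svd_loss is hypothetical). The user vectors $p_i$ are stored as rows of P and the item vectors $q_j$ as rows of Q, so the full prediction matrix is $PQ^{T}$.

```python
import numpy as np


def funk_svd_loss(M, P, Q):
    """Sum of squared errors over the observed entries only.

    M: (n_users, n_items) rating matrix, np.nan for missing ratings.
    P: (n_users, k) user latent vectors p_i as rows.
    Q: (n_items, k) item latent vectors q_j as rows.
    """
    pred = P @ Q.T                # pred[i, j] = q_j^T p_i
    observed = ~np.isnan(M)       # missing entries are skipped, not imputed
    diff = M[observed] - pred[observed]
    return float(np.sum(diff ** 2))
```

Materializing the dense $PQ^{T}$ is only reasonable for toy sizes; the SGD code below instead visits one observed rating at a time.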

To prevent overfitting, an L2 regularization term can be added to this objective:

$$\sum_{i,j}\left[\left(m_{ij}-q_j^{T}p_i\right)^2+\lambda\left(\|q_j\|^2+\|p_i\|^2\right)\right]$$

The code below uses separate coefficients for the two norms: reg_p for $p_i$ and reg_q for $q_j$.
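The SGD update rules in the code follow from differentiating a single term $L_{ij}$ of the regularized loss. The constant factor 2 is assumed to be absorbed into the learning rate $\alpha$, a common convention; in the code, err is $e_{ij}$, alpha is $\alpha$, and reg_p / reg_q play the role of $\lambda$ for $p_i$ and $q_j$. Both updates use the values of $p_i$ and $q_j$ from before the step.

```latex
\begin{aligned}
L_{ij} &= \left(m_{ij}-q_j^{T}p_i\right)^2+\lambda\left(\|q_j\|^2+\|p_i\|^2\right) \\
e_{ij} &= m_{ij}-q_j^{T}p_i \\
\frac{\partial L_{ij}}{\partial p_i} &= -2e_{ij}\,q_j+2\lambda\,p_i, \qquad
\frac{\partial L_{ij}}{\partial q_j} = -2e_{ij}\,p_i+2\lambda\,q_j \\
p_i &\leftarrow p_i+\alpha\left(e_{ij}\,q_j-\lambda\,p_i\right) \\
q_j &\leftarrow q_j+\alpha\left(e_{ij}\,p_i-\lambda\,q_j\right)
\end{aligned}
```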

Code:

'''
LFM Model
'''
import pandas as pd
import numpy as np


# Rating prediction (ratings on a 1-5 scale)
class LFM(object):

    def __init__(self, alpha, reg_p, reg_q, number_LatentFactors=10, number_epochs=10,
                 columns=["uid", "iid", "rating"]):
        self.alpha = alpha  # learning rate
        self.reg_p = reg_p  # regularization coefficient for P
        self.reg_q = reg_q  # regularization coefficient for Q
        self.number_LatentFactors = number_LatentFactors  # number of latent factors
        self.number_epochs = number_epochs  # maximum number of epochs
        self.columns = columns

    def fit(self, dataset):
        '''
        fit dataset
        :param dataset: uid, iid, rating
        :return:
        '''
        self.dataset = pd.DataFrame(dataset)

        self.users_ratings = self.dataset.groupby(self.columns[0]).agg([list])[[self.columns[1], self.columns[2]]]
        print(self.users_ratings)
        self.items_ratings = self.dataset.groupby(self.columns[1]).agg([list])[[self.columns[0], self.columns[2]]]
        print(self.items_ratings)

        self.globalMean = self.dataset[self.columns[2]].mean()

        self.P, self.Q = self.sgd()

    def _init_matrix(self):
        '''
        Initialize P and Q, filling them with random values in [0, 1)
        :return:
        '''
        # User-LF
        P = dict(zip(
            self.users_ratings.index,
            np.random.rand(len(self.users_ratings), self.number_LatentFactors).astype(np.float32)
        ))
        # Item-LF
        Q = dict(zip(
            self.items_ratings.index,
            np.random.rand(len(self.items_ratings), self.number_LatentFactors).astype(np.float32)
        ))
        return P, Q

    def sgd(self):
        '''
        Optimize P and Q with stochastic gradient descent
        :return:
        '''
        P, Q = self._init_matrix()

        for i in range(self.number_epochs):
            print("iter%d" % i)
            error_list = []
            for uid, iid, r_ui in self.dataset.itertuples(index=False):
                # User-LF P
                # Item-LF Q
                v_pu = P[uid]  # user vector
                v_qi = Q[iid]  # item vector
                err = np.float32(r_ui - np.dot(v_pu, v_qi))

                # Compute both gradients from the old vectors before updating either;
                # v_pu is modified in place, so updating it first would leak into the Q step
                grad_p = err * v_qi - self.reg_p * v_pu
                grad_q = err * v_pu - self.reg_q * v_qi
                v_pu += self.alpha * grad_p
                v_qi += self.alpha * grad_q

                P[uid] = v_pu
                Q[iid] = v_qi

                # Element-wise form of the same update:
                # for k in range(self.number_LatentFactors):
                #     pu_k, qi_k = v_pu[k], v_qi[k]
                #     v_pu[k] += self.alpha*(err*qi_k - self.reg_p*pu_k)
                #     v_qi[k] += self.alpha*(err*pu_k - self.reg_q*qi_k)

                error_list.append(err ** 2)
            print(np.sqrt(np.mean(error_list)))  # training RMSE for this epoch

        return P, Q

    def predict(self, uid, iid):
        # If uid or iid was not seen in training, return the global mean rating
        if uid not in self.users_ratings.index or iid not in self.items_ratings.index:
            return self.globalMean

        p_u = self.P[uid]
        q_i = self.Q[iid]

        return np.dot(p_u, q_i)

    def test(self, testset):
        '''Predict ratings for the test set'''
        for uid, iid, real_rating in testset.itertuples(index=False):
            try:
                pred_rating = self.predict(uid, iid)
            except Exception as e:
                print(e)
            else:
                yield uid, iid, real_rating, pred_rating


if __name__ == '__main__':
    dtype = [("userId", np.int32), ("movieId", np.int32), ("rating", np.float32)]
    dataset = pd.read_csv("./ml-25m/ratings.csv", usecols=range(3), dtype=dict(dtype)).loc[0:2000]

    lfm = LFM(0.02, 0.01, 0.01, 10, 100, ["userId", "movieId", "rating"])
    lfm.fit(dataset)

    while True:
        uid = input("uid: ")
        iid = input("iid: ")
        print(lfm.predict(int(uid), int(iid)))

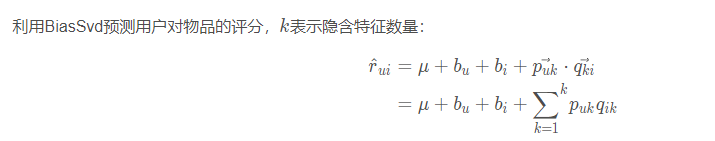
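The test method yields (uid, iid, real_rating, pred_rating) tuples but does not score them. A small sketch for computing RMSE and MAE from such tuples follows; the helper name accuracy is an assumption, not part of the code above.

```python
import math


def accuracy(predict_results):
    """Return (RMSE, MAE) for an iterable of
    (uid, iid, real_rating, pred_rating) tuples, e.g. the output of LFM.test().
    `accuracy` is a hypothetical helper name."""
    n = 0
    sq_sum = 0.0
    abs_sum = 0.0
    for _uid, _iid, real, pred in predict_results:
        err = real - pred
        sq_sum += err * err
        abs_sum += abs(err)
        n += 1
    if n == 0:
        raise ValueError("no predictions to evaluate")
    return math.sqrt(sq_sum / n), abs_sum / n
```

For example, one could hold out part of the ratings with trainset = dataset.sample(frac=0.8, random_state=0) and testset = dataset.drop(trainset.index), fit on trainset, and then call rmse, mae = accuracy(lfm.test(testset)). Because predict falls back to the global mean for unseen users or items, those pairs still count toward the error.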

BiasSVD

After FunkSVD was proposed, many variants appeared; one of the most successful is BiasSVD.

BiasSVD builds on FunkSVD by adding the idea of a baseline: the global mean rating plus a per-user bias and a per-item bias.
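In the standard BiasSVD formulation, which the code implements, the prediction is the baseline (global mean $\mu$ plus user bias $b_u$ plus item bias $b_i$) plus the latent-factor interaction. Here $u$ indexes users and $i$ items to match uid/iid in the code (in the FunkSVD formulas above they were $i$ and $j$). Each bias gets its own regularization coefficient (reg_bu, reg_bi), and the updates follow from the same gradient derivation as FunkSVD, again with the factor 2 absorbed into $\alpha$:

```latex
\begin{aligned}
\hat{r}_{ui} &= \mu+b_u+b_i+q_i^{T}p_u, \qquad e_{ui} = r_{ui}-\hat{r}_{ui} \\
\mathcal{L} &= \sum_{(u,i)}\left[e_{ui}^2+\lambda_p\|p_u\|^2+\lambda_q\|q_i\|^2+\lambda_{b_u}b_u^2+\lambda_{b_i}b_i^2\right] \\
b_u &\leftarrow b_u+\alpha\left(e_{ui}-\lambda_{b_u}b_u\right) \\
b_i &\leftarrow b_i+\alpha\left(e_{ui}-\lambda_{b_i}b_i\right) \\
p_u &\leftarrow p_u+\alpha\left(e_{ui}\,q_i-\lambda_p\,p_u\right) \\
q_i &\leftarrow q_i+\alpha\left(e_{ui}\,p_u-\lambda_q\,q_i\right)
\end{aligned}
```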

Code:

'''
BiasSvd Model
'''
import pandas as pd
import numpy as np


class BiasSvd(object):

    def __init__(self, alpha, reg_p, reg_q, reg_bu, reg_bi, number_LatentFactors=10, number_epochs=10, columns=["uid", "iid", "rating"]):
        self.alpha = alpha  # learning rate
        self.reg_p = reg_p  # regularization coefficient for P
        self.reg_q = reg_q  # regularization coefficient for Q
        self.reg_bu = reg_bu  # regularization coefficient for the user biases
        self.reg_bi = reg_bi  # regularization coefficient for the item biases
        self.number_LatentFactors = number_LatentFactors  # number of latent factors
        self.number_epochs = number_epochs  # maximum number of epochs
        self.columns = columns

    def fit(self, dataset):
        '''
        fit dataset
        :param dataset: uid, iid, rating
        :return:
        '''
        self.dataset = pd.DataFrame(dataset)

        self.users_ratings = self.dataset.groupby(self.columns[0]).agg([list])[[self.columns[1], self.columns[2]]]
        self.items_ratings = self.dataset.groupby(self.columns[1]).agg([list])[[self.columns[0], self.columns[2]]]

        self.globalMean = self.dataset[self.columns[2]].mean()

        self.P, self.Q, self.bu, self.bi = self.sgd()

    def _init_matrix(self):
        '''
        Initialize P and Q, filling them with random values in [0, 1)
        :return:
        '''
        # User-LF
        P = dict(zip(
            self.users_ratings.index,
            np.random.rand(len(self.users_ratings), self.number_LatentFactors).astype(np.float32)
        ))
        # Item-LF
        Q = dict(zip(
            self.items_ratings.index,
            np.random.rand(len(self.items_ratings), self.number_LatentFactors).astype(np.float32)
        ))
        return P, Q

    def sgd(self):
        '''
        Optimize P, Q, bu and bi with stochastic gradient descent
        :return:
        '''
        P, Q = self._init_matrix()

        # Initialize all user biases bu and item biases bi to 0
        bu = dict(zip(self.users_ratings.index, np.zeros(len(self.users_ratings))))
        bi = dict(zip(self.items_ratings.index, np.zeros(len(self.items_ratings))))

        for i in range(self.number_epochs):
            print("iter%d" % i)
            error_list = []
            for uid, iid, r_ui in self.dataset.itertuples(index=False):
                v_pu = P[uid]
                v_qi = Q[iid]
                # Residual after the baseline (global mean + user bias + item bias) and the latent term
                err = np.float32(r_ui - self.globalMean - bu[uid] - bi[iid] - np.dot(v_pu, v_qi))

                # Compute both gradients from the old vectors before updating either
                grad_p = err * v_qi - self.reg_p * v_pu
                grad_q = err * v_pu - self.reg_q * v_qi
                v_pu += self.alpha * grad_p
                v_qi += self.alpha * grad_q

                P[uid] = v_pu
                Q[iid] = v_qi

                bu[uid] += self.alpha * (err - self.reg_bu * bu[uid])
                bi[iid] += self.alpha * (err - self.reg_bi * bi[iid])

                error_list.append(err ** 2)
            print(np.sqrt(np.mean(error_list)))  # training RMSE for this epoch

        return P, Q, bu, bi

    def predict(self, uid, iid):
        # Unseen user or item: fall back to the global mean rating
        if uid not in self.users_ratings.index or iid not in self.items_ratings.index:
            return self.globalMean

        p_u = self.P[uid]
        q_i = self.Q[iid]

        return self.globalMean + self.bu[uid] + self.bi[iid] + np.dot(p_u, q_i)


if __name__ == '__main__':
    dtype = [("userId", np.int32), ("movieId", np.int32), ("rating", np.float32)]
    dataset = pd.read_csv("datasets/ml-latest-small/ratings.csv", usecols=range(3), dtype=dict(dtype))

    # columns must match the CSV headers; the default ["uid", "iid", "rating"] would raise a KeyError
    bsvd = BiasSvd(0.02, 0.01, 0.01, 0.01, 0.01, 10, 20, ["userId", "movieId", "rating"])
    bsvd.fit(dataset)

    while True:
        uid = input("uid: ")
        iid = input("iid: ")
        print(bsvd.predict(int(uid), int(iid)))
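The code above only predicts single ratings. A common next step is to score every item for one user and return the top N items the user has not rated yet. Below is a minimal sketch, assuming the item vectors and item biases have already been stacked into NumPy arrays in the same item order; recommend_top_n and its parameter names are hypothetical.

```python
import numpy as np


def recommend_top_n(user_vec, item_ids, item_matrix, global_mean, user_bias,
                    item_biases, rated_items, n=10):
    """Rank unrated items for one user with the BiasSVD prediction rule.

    user_vec:    (k,) latent vector p_u
    item_ids:    list of item ids aligned with the rows of item_matrix
    item_matrix: (n_items, k) stacked item vectors q_i
    item_biases: (n_items,) item biases b_i, same order as item_ids
    rated_items: set of item ids the user already rated (excluded)
    """
    # mu + b_u + b_i + q_i^T p_u for all items at once
    scores = global_mean + user_bias + item_biases + item_matrix @ user_vec
    order = np.argsort(-scores)  # highest predicted rating first
    result = []
    for idx in order:
        iid = item_ids[idx]
        if iid in rated_items:
            continue
        result.append((iid, float(scores[idx])))
        if len(result) == n:
            break
    return result
```

With a fitted model, the inputs could be assembled as item_ids = list(bsvd.Q.keys()), item_matrix = np.stack([bsvd.Q[i] for i in item_ids]) and item_biases = np.array([bsvd.bi[i] for i in item_ids]), together with user_vec = bsvd.P[uid] and user_bias = bsvd.bu[uid]. One matrix-vector product is much cheaper than calling predict once per item.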
