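I have been studying ORB-SLAM recently and found that feature points are frequently lost during tracking. I plan to try replacing the feature extraction in the visual odometry (VO) part of SLAM with a deep learning model, which first requires extracting reasonably accurate feature points. Without further ado, here is the code: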

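#include <opencv2/opencv.hpp>

// img_left and img_right are assumed to be cv::Mat images that have already been loaded.
cv::Ptr<cv::ORB> orb=cv::ORB::create();

std::vector<cv::KeyPoint>keypoints1,keypoints2;

cv::Mat descriptors1,descriptors2;

// Detect keypoints and compute their ORB descriptors in one call (no mask).
orb->detectAndCompute(img_left,cv::Mat(),keypoints1,descriptors1);

orb->detectAndCompute(img_right,cv::Mat(),keypoints2,descriptors2);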

cv::Mat showKeypoints1,showKeypoints2;

cv::drawKeypoints(img_left,keypoints1,showKeypoints1);

cv::drawKeypoints(img_right,keypoints2,showKeypoints2);

std::vector<cv::DMatch>matches;

cv::Ptr<cv::DescriptorMatcher>matcher=cv::DescriptorMatcher::create("BruteForce-Hamming");

matcher->match(descriptors1,descriptors2,matches);

// Start min_dist high so that the first match distance can lower it.
double min_dist=10000,max_dist=0;

for (size_t i = 0; i <matches.size() ; ++i) {

double dist=matches[i].distance;

if(dist<min_dist)min_dist=dist;

if(dist>max_dist)max_dist=dist;

}

std::vector<cv::DMatch>good_matches;

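for (size_t j = 0; j <matches.size() ; ++j) {

// Keep a match if its distance is at most max(2*min_dist, 25); the floor of 25 guards against a very small min_dist.
if(matches[j].distance <= std::max(2*min_dist,25.0)){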

good_matches.push_back(matches[j]);

}

}

cv::Mat showMatches;

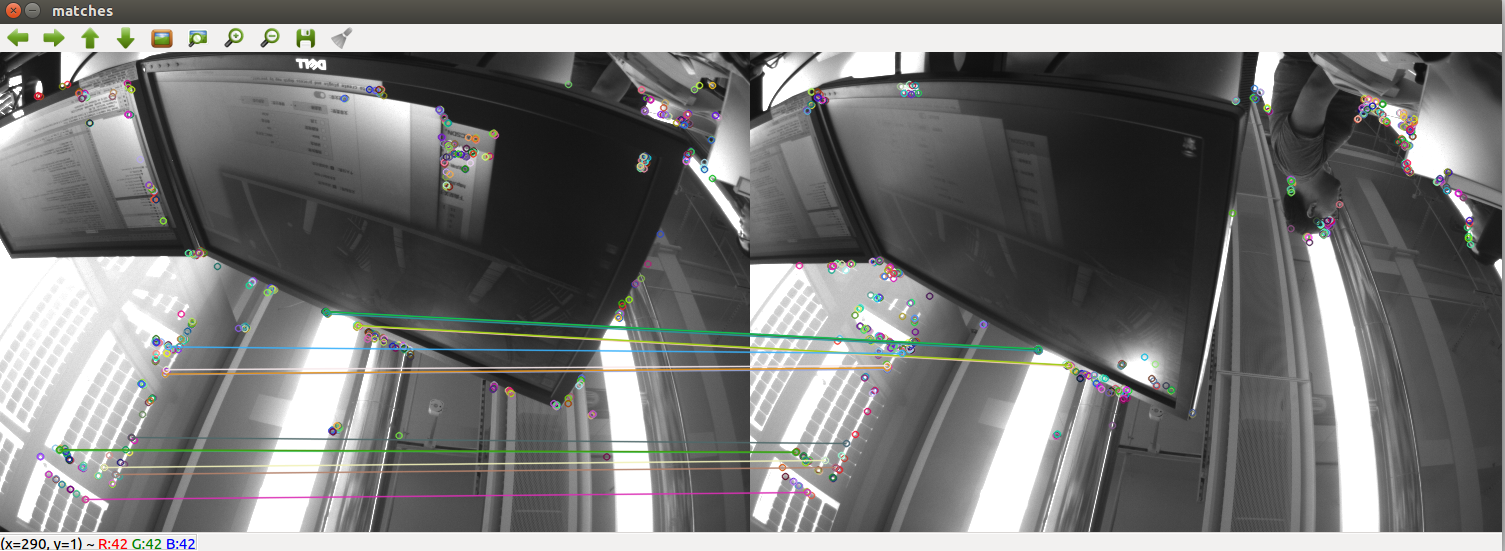

cv::drawMatches(img_left,keypoints1,img_right,keypoints2,good_matches,showMatches);

cv::imshow("matches",showMatches);

cv::waitKey();步骤:

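1: Detect the corner positions and compute their descriptors.

2: Match the descriptors.

3: Iterate over the matches array to find the minimum and maximum distance.

4: Filter the matches based on the minimum distance.

5: Draw the matching result.

6: Print the matched points and save the images for later training.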
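
Steps 3, 4 and 6 can be sketched without OpenCV as below. This is only an illustration under stated assumptions, not the code used above: `Match` and `Point` are hypothetical stand-ins for `cv::DMatch` and `cv::KeyPoint::pt`, and `filterMatches` and `formatMatches` are names made up for this example. The output format of one `x1,y1,x2,y2` line per match is also an assumption, since the post does not say how the points are stored for training.

```cpp
#include <algorithm>
#include <limits>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical stand-ins for cv::DMatch and cv::KeyPoint::pt,
// so that the sketch builds without OpenCV.
struct Match { int queryIdx; int trainIdx; float distance; };
struct Point { float x; float y; };

// Steps 3-4: find the smallest distance, then keep every match whose
// distance is at most max(2 * min_dist, floor_dist).
std::vector<Match> filterMatches(const std::vector<Match>& matches,
                                 double floor_dist = 25.0) {
    if (matches.empty()) return {};

    // Start from the largest double so that any real distance lowers it.
    double min_dist = std::numeric_limits<double>::max();
    for (const Match& m : matches) {
        min_dist = std::min(min_dist, static_cast<double>(m.distance));
    }

    const double threshold = std::max(2.0 * min_dist, floor_dist);
    std::vector<Match> good;
    for (const Match& m : matches) {
        if (m.distance <= threshold) good.push_back(m);
    }
    return good;
}

// Step 6: format each kept match as one "x1,y1,x2,y2" line.
// Assumes queryIdx and trainIdx are valid indices into pts1 and pts2.
std::string formatMatches(const std::vector<Match>& good,
                          const std::vector<Point>& pts1,
                          const std::vector<Point>& pts2) {
    std::ostringstream out;
    for (const Match& m : good) {
        const Point& a = pts1[m.queryIdx];
        const Point& b = pts2[m.trainIdx];
        out << a.x << ',' << a.y << ',' << b.x << ',' << b.y << '\n';
    }
    return out.str();
}
```

With OpenCV, the same idea would apply to `good_matches`, reading the coordinates from `keypoints1[m.queryIdx].pt` and `keypoints2[m.trainIdx].pt`, and the drawn result could be written to disk with `cv::imwrite`.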
