Ingesting metadata

On the Ingestion page, add a new data source -> select Superset.

Configure the YAML recipe:

source:
  type: superset
  config:
    connect_uri: 'http://xx.xx.xx.xx:8080'
    username: xxxx
    password: xxx
sink:
  type: datahub-rest
  config:
    server: 'http://localhost:8080'

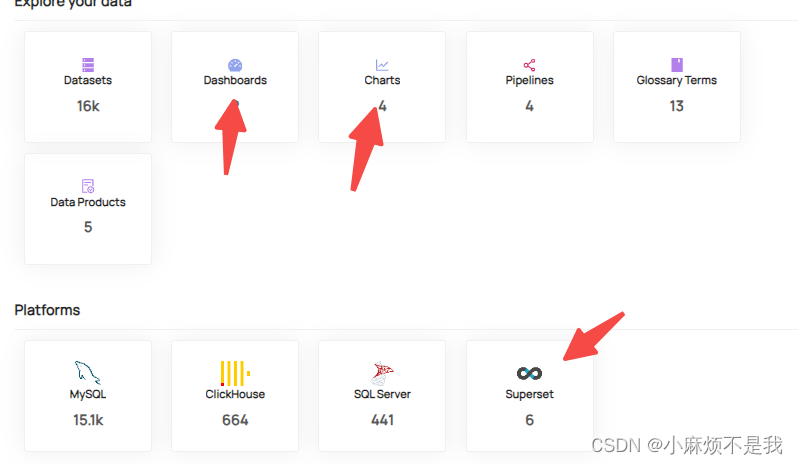
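The same recipe can also be run from Python instead of the UI. The following is a minimal sketch, assuming the `acryl-datahub` package with the Superset plugin is installed and that GMS is reachable at `http://localhost:8080`. `build_recipe` and `run_recipe` are hypothetical helper names, not part of DataHub; `Pipeline.create`, `run` and `raise_from_status` are the programmatic pipeline calls I am relying on.

```python
from typing import Any, Dict


def build_recipe(superset_url: str, username: str, password: str,
                 gms_url: str = "http://localhost:8080") -> Dict[str, Any]:
    """Return a recipe dict mirroring the YAML recipe (hypothetical helper)."""
    return {
        "source": {
            "type": "superset",
            "config": {
                "connect_uri": superset_url,
                "username": username,
                "password": password,
            },
        },
        "sink": {"type": "datahub-rest", "config": {"server": gms_url}},
    }


def run_recipe(recipe: Dict[str, Any]) -> None:
    """Run the recipe; needs DataHub installed and a reachable GMS."""
    # Imported lazily so the recipe can be built and inspected without DataHub.
    from datahub.ingestion.run.pipeline import Pipeline

    pipeline = Pipeline.create(recipe)
    pipeline.run()
    pipeline.raise_from_status()  # raise if the run reported failures


# Usage (placeholder values, replace before running):
# run_recipe(build_recipe("http://xx.xx.xx.xx:8080", "xxxx", "xxx"))
```

Keeping the recipe as a plain dict makes it easy to check the values before anything is sent to DataHub.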

After the run succeeds, the home page shows:

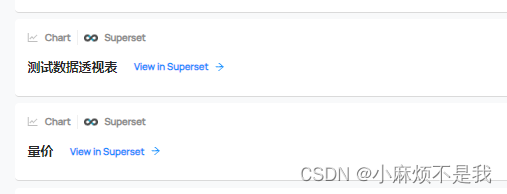

Open Charts to see the metadata ingested from the Superset platform:

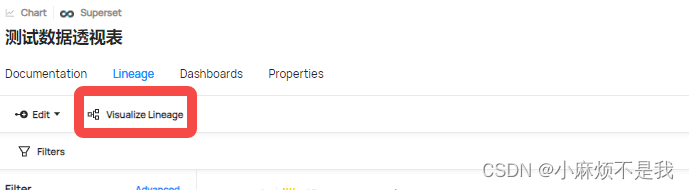

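Viewing data lineage

The lineage view only shows chart -> dashboard lineage; dataset -> chart lineage is missing.

Writing data lineage

Connect directly to Superset's backend database and write the lineage into DataHub: loop over all charts and, for each one, get its dataset and the chart itself. (Working out what to pass as the name in the chart URN nearly drove me crazy during testing. I dug through everything in the Superset database and everything DataHub had stored, and tried every possibility: the Chinese chart name, urn:li:charts..., and so on. Only after reading the source code and trying again did it finally work. What the official docs give really would not write.)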
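For reference, here is a minimal sketch of the URN shapes involved. To my understanding, `builder.make_chart_urn` and `builder.make_dataset_urn` produce strings of this form when no platform instance is set. The two functions below are hypothetical stand-ins written in plain Python so the shapes can be inspected without DataHub installed, and the sample values (`42`, `mysql.sales.orders`) are made up.

```python
def chart_urn(platform: str, name: object) -> str:
    """Stand-in for the string shape of a chart URN (no platform instance)."""
    return f"urn:li:chart:({platform},{name})"


def dataset_urn(platform: str, name: str, env: str = "PROD") -> str:
    """Stand-in for the string shape of a dataset URN."""
    return f"urn:li:dataset:(urn:li:dataPlatform:{platform},{name},{env})"


# The chart name is the numeric Superset slice id, not the chart title.
print(chart_urn("superset", 42))
# -> urn:li:chart:(superset,42)

print(dataset_urn("mysql", "mysql.sales.orders"))
# -> urn:li:dataset:(urn:li:dataPlatform:mysql,mysql.sales.orders,PROD)
```

If the chart URN written by the script does not match the one created by the Superset ingestion, DataHub treats it as a different chart, which is why the name part matters so much.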

from typing import List

import pandas as pd
import pymysql

import datahub.emitter.mce_builder as builder
from datahub.emitter.mcp import MetadataChangeProposalWrapper
from datahub.emitter.rest_emitter import DatahubRestEmitter
from datahub.metadata.com.linkedin.pegasus2avro.chart import ChartInfoClass
from datahub.metadata.schema_classes import ChangeAuditStampsClass

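# Connect to the MySQL database behind Superset
conns = pymysql.connect(
    host='10.xx.xx.xxx',  # host
    port=3306,  # port (the MySQL default is 3306)
    user='xxx',  # username
    password='xxx',  # password
    database='superset',  # database name
)

# Create a cursor
cursors = conns.cursor()

# Query all charts (Superset stores them in the slices table)
cursors.execute("select * from slices")

# Fetch the query result
resultsql = cursors.fetchall()

# Turn the result into a pandas DataFrame, taking column names from the cursor
df = pd.DataFrame(list(resultsql), columns=[i[0] for i in cursors.description])

# Quick look at the result
print(df.head())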

# Create an emitter to the GMS REST API (once, before the loop).
emitter = DatahubRestEmitter("http://localhost:8080")

for i in range(len(df)):
    slice_id = df.loc[i, 'id']
    slice_name = df.loc[i, 'slice_name']
    datasource_name = df.loc[i, 'datasource_name']
    schema_perm = df.loc[i, 'schema_perm']

    # Skip charts that have no usable "[database].[schema]" permission string
    if pd.isna(schema_perm) or '.' not in schema_perm:
        continue

    # schema_perm is expected to look like "[database].[schema]":
    # strip the brackets and lowercase both parts
    platforms = schema_perm.split('.')[0].replace('[', '').replace(']', '').lower()
    database = schema_perm.split('.')[1].replace('[', '').replace(']', '').lower()
    # If the first part contains "-", split it into platform and database
    if platforms.find('-') >= 0:
        database = platforms.split('-')[1].capitalize()
        platforms = platforms.split('-')[0].lower()
    ds = platforms + '.' + database
    datasource_name = ds + '.' + datasource_name
    print(platforms, datasource_name)

    # The dataset this chart reads from
    input_datasets: List[str] = [
        builder.make_dataset_urn(platform=platforms, name=datasource_name, env="PROD"),
    ]

    last_modified = ChangeAuditStampsClass()
    chart_info = ChartInfoClass(
        title=slice_name,
        description="",
        lastModified=last_modified,
        inputs=input_datasets,
        chartUrl="http://superset_url:8080/explore/?slice_id=" + str(slice_id),
    )

    # Construct a MetadataChangeProposalWrapper object with the ChartInfo aspect.
    # NOTE: This will overwrite all of the existing chartInfo aspect information associated with this chart.
    # The chart URN name that finally worked is the Superset slice id.
    chart_info_mcp = MetadataChangeProposalWrapper(
        entityUrn=builder.make_chart_urn(platform="superset", name=str(slice_id)),
        aspect=chart_info,
    )

    # Emit metadata!
    emitter.emit_mcp(chart_info_mcp)

Put the .py file above on the DataHub server and run the script to write the lineage.
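Before emitting anything, it can help to dry-run the name parsing on a few `schema_perm` values. The sketch below pulls the parsing steps from the script above into a pure function so they can be checked without a database or DataHub. `parse_schema_perm` and `dataset_name` are hypothetical helper names, and the sample strings are made up; the `"[database].[schema]"` layout is an assumption based on what the script strips.

```python
from typing import Tuple


def parse_schema_perm(schema_perm: str) -> Tuple[str, str]:
    """Split "[database].[schema]" into (platform, database) like the script."""
    parts = schema_perm.split(".")
    platform = parts[0].replace("[", "").replace("]", "").lower()
    database = parts[1].replace("[", "").replace("]", "").lower()
    if "-" in platform:
        # "prefix-suffix": the suffix becomes the database, the prefix the platform
        database = platform.split("-")[1].capitalize()
        platform = platform.split("-")[0]
    return platform, database


def dataset_name(schema_perm: str, datasource_name: str) -> Tuple[str, str]:
    """Return (platform, full dataset name) as passed to make_dataset_urn."""
    platform, database = parse_schema_perm(schema_perm)
    return platform, f"{platform}.{database}.{datasource_name}"


print(dataset_name("[MySQL].[Sales]", "orders"))
# -> ('mysql', 'mysql.sales.orders')

print(dataset_name("[hive-dw].[ods]", "orders"))
# -> ('hive', 'hive.Dw.orders')
```

If the printed names do not match the dataset names already in DataHub, the chart will point at a dataset that does not exist there, so the lineage edge will not connect to anything useful.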
