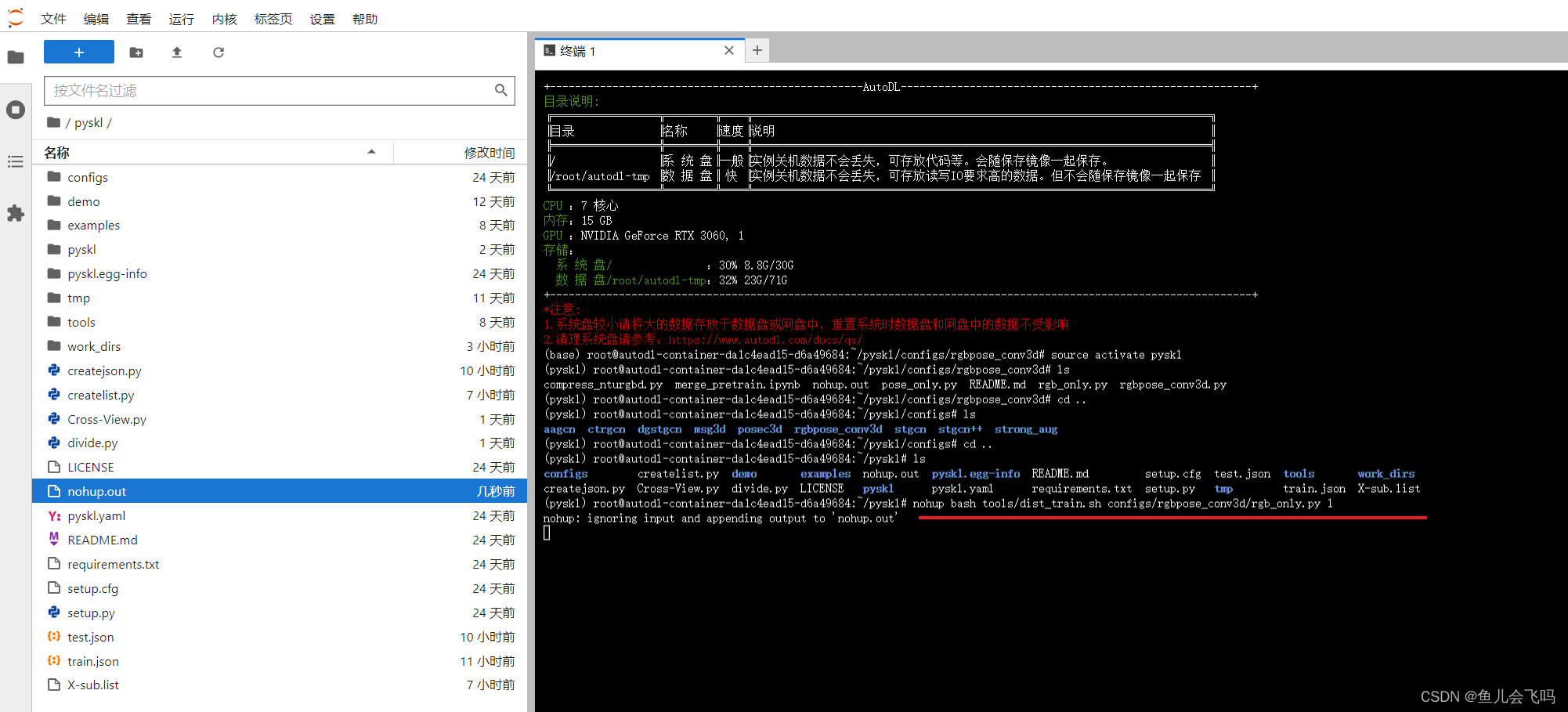

I was using the MobaXterm remote terminal tool and entered the training command:

nohup bash tools/dist_train.sh configs/rgbpose_conv3d/rgb_only.py 1

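After waiting a while, I simply closed the remote terminal tool.

When I came back to the lab after a meal and opened the log file, I found that training had failed with the following error: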

/root/miniconda3/envs/pyskl/lib/python3.7/site-packages/torch/distributed/launch.py:186: FutureWarning: The module torch.distributed.launch is deprecated

and will be removed in future. Use torchrun.

Note that --use_env is set by default in torchrun.

If your script expects `--local_rank` argument to be set, please

change it to read from `os.environ['LOCAL_RANK']` instead. See

https://pytorch.org/docs/stable/distributed.html#launch-utility for

further instructions

FutureWarning,

WARNING:torch.distributed.elastic.agent.server.api:Received 1 death signal, shutting down workers

WARNING:torch.distributed.elastic.multiprocessing.api:Sending process 17462 closing signal SIGHUP

Traceback (most recent call last):

File "/root/miniconda3/envs/pyskl/lib/python3.7/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/root/miniconda3/envs/pyskl/lib/python3.7/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/root/miniconda3/envs/pyskl/lib/python3.7/site-packages/torch/distributed/launch.py", line 193, in <module>

main()

File "/root/miniconda3/envs/pyskl/lib/python3.7/site-packages/torch/distributed/launch.py", line 189, in main

launch(args)

File "/root/miniconda3/envs/pyskl/lib/python3.7/site-packages/torch/distributed/launch.py", line 174, in launch

run(args)

File "/root/miniconda3/envs/pyskl/lib/python3.7/site-packages/torch/distributed/run.py", line 718, in run

)(*cmd_args)

File "/root/miniconda3/envs/pyskl/lib/python3.7/site-packages/torch/distributed/launcher/api.py", line 131, in __call__

return launch_agent(self._config, self._entrypoint, list(args))

File "/root/miniconda3/envs/pyskl/lib/python3.7/site-packages/torch/distributed/launcher/api.py", line 236, in launch_agent

result = agent.run()

File "/root/miniconda3/envs/pyskl/lib/python3.7/site-packages/torch/distributed/elastic/metrics/api.py", line 125, in wrapper

result = f(*args, **kwargs)

File "/root/miniconda3/envs/pyskl/lib/python3.7/site-packages/torch/distributed/elastic/agent/server/api.py", line 709, in run

result = self._invoke_run(role)

File "/root/miniconda3/envs/pyskl/lib/python3.7/site-packages/torch/distributed/elastic/agent/server/api.py", line 850, in _invoke_run

time.sleep(monitor_interval)

File "/root/miniconda3/envs/pyskl/lib/python3.7/site-packages/torch/distributed/elastic/multiprocessing/api.py", line 60, in _terminate_process_handler

raise SignalException(f"Process {os.getpid()} got signal: {sigval}", sigval=sigval)

torch.distributed.elastic.multiprocessing.api.SignalException: Process 17456 got signal: 1

Cause analysis:

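The SSH terminal and the server share only a temporary interactive session. If there is no interaction for a while, or the window is closed, the session ends and the processes running inside it receive SIGHUP (signal 1, which is the "got signal: 1" in the log above) and terminate. That is why the training job stopped.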
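The command was started with `nohup`, which normally makes a process ignore SIGHUP, yet the traceback still ends in `_terminate_process_handler`. One plausible reading, offered as an assumption and not something the log proves on its own, is that the launcher installs its own SIGHUP handler, which replaces the ignored disposition that nohup set up. The sketch below reproduces that effect with a small child process. `CHILD`, `run_child`, and the two mode strings are made-up names for illustration, and the handler is only a stand-in for the real one.

```python
import signal
import subprocess
import sys

# Source of a small child program. It first ignores SIGHUP (the state a
# process is in when started under nohup), then optionally installs its own
# handler, which replaces the "ignore" setting.
CHILD = r"""
import signal, sys, time

def handler(signum, frame):
    # Hypothetical stand-in for the launcher's _terminate_process_handler.
    raise RuntimeError(f"got signal: {signum}")

signal.signal(signal.SIGHUP, signal.SIG_IGN)   # what nohup arranges
if sys.argv[1] == "install-handler":
    signal.signal(signal.SIGHUP, handler)      # overrides the ignore

print("ready", flush=True)
time.sleep(2)
print("survived", flush=True)
"""


def run_child(mode):
    """Run the child, send it SIGHUP once it is ready, return (exit code, output)."""
    child = subprocess.Popen(
        [sys.executable, "-c", CHILD, mode],
        stdout=subprocess.PIPE,
        stderr=subprocess.DEVNULL,
        text=True,
    )
    child.stdout.readline()            # block until the child prints "ready"
    child.send_signal(signal.SIGHUP)   # simulate the terminal hanging up
    out, _ = child.communicate()
    return child.returncode, out
```

Calling `run_child("ignore-only")` lets the child finish normally, while `run_child("install-handler")` makes it exit with an error as soon as SIGHUP arrives, which mirrors the `SignalException` in the log.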

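Solution:

I went back to the old approach: enter the training command in the terminal that comes built into the cloud server, instead of going through a remote SSH client.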
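For readers who still need to launch from an SSH client, one possible alternative is to start the job in its own session, so that it is no longer attached to the terminal that gets closed. This is a minimal sketch only, not something tested in the original setup. `launch_detached` and the log file name are hypothetical, and whether this is sufficient for `torch.distributed.launch` on a given server is an assumption.

```python
import subprocess


def launch_detached(cmd, log_path):
    """Start cmd in a new session, with all output appended to log_path."""
    log_file = open(log_path, "ab")
    try:
        proc = subprocess.Popen(
            cmd,
            stdin=subprocess.DEVNULL,    # do not read from the terminal
            stdout=log_file,             # do not write to the terminal
            stderr=subprocess.STDOUT,
            start_new_session=True,      # child calls setsid() before exec
        )
    finally:
        # The child holds its own copy of the descriptor, so the parent
        # can close its copy right away.
        log_file.close()
    return proc
```

Under those assumptions, the training command from this post would be started as `launch_detached(["bash", "tools/dist_train.sh", "configs/rgbpose_conv3d/rgb_only.py", "1"], "train.log")`. Redirecting all three standard streams matters, because a process that still writes to a closed terminal can fail for that reason alone.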
