目录

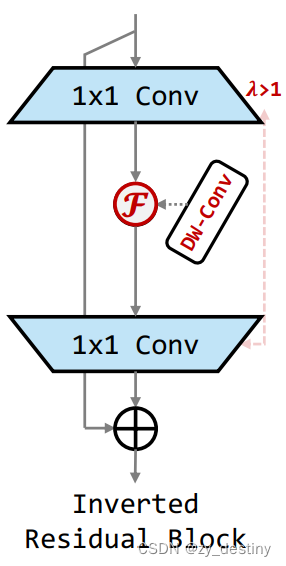

Inverted Residual Block:简称iRBlock,倒残差模块

Inverted Residual Mobile Block:简称iRMBlock,倒残差移动模块,是iRBlock的改进版本

1. iRBlock简介

Inverted Residual Block,也被称为MobileNet的构建模块,通常被用在轻量化网络中,方便在移动端和嵌入式端进行部署,是一种用于提取特征的深度学习模块。它结合了深度可分离卷积(depthwise separable convolution)和shortcut connections,以减少计算成本和参数数量。

以下是一个简单的Python代码示例,展示了如何实现一个Inverted Residual Block:

import torch

import torch.nn as nn

class InvertedResidual(nn.Module):

def __init__(self, in_channels, out_channels, stride, expand_ratio):

super(InvertedResidual, self).__init__()

self.stride = stride

hidden_channels = in_channels * expand_ratio

# Squeeze and Excitation

self.conv_se = nn.Sequential(

nn.Conv2d(hidden_channels, hidden_channels // ratio, 1),

nn.ReLU(inplace=True),

nn.Conv2d(hidden_channels // ratio, hidden_channels, 1),

nn.Sigmoid()

)

# Pointwise convolution

self.conv_pw = nn.Conv2d(hidden_channels, out_channels, 1)

# Depthwise convolution

self.conv_dw = nn.Conv2d(in_channels, hidden_channels, 3, stride, 1, groups=hidden_channels)

def forward(self, x):

if self.stride == 1:

shortcut = x

else:

shortcut = self.pool(x)

x = self.conv_dw(x)

x = self.conv_se(x)

x = self.conv_pw(x)

x = x * shortcut

return x 这个模块接收输入的通道数in_channels,输出的通道数out_channels,步长stride,以及扩展比率expand_ratio作为参数。它首先使用深度可分离卷积进行特征提取,然后通过Squeeze and Excitation模块进一步改善特征表示,最后使用点卷积输出。如果步长大于1,它会使用平均池化来进行shortcut connection。

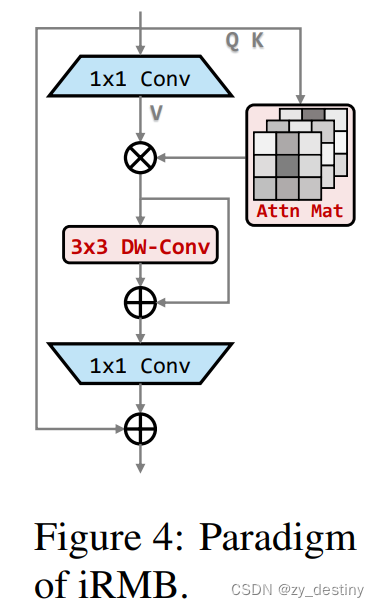

2. iRMBlock简介

iRMBlock模块是这篇论文基于iRBlock进行改进的。 iRBlock模块虽然被认为是标准的高效模块代表作之一。然而,受限于静态 CNN 的归纳偏差影响,纯 CNN 模型的准确性仍然保持较低水平,随着Transformer的出现,一时间涌现了许多性能性能超群的网络,如 Swin transformer、PVT、Eatformer、EAT等。得益于其动态建模和不受归纳偏置的影响,这些方法都取得了相对 CNN 的显着改进。然而,受多头自注意(MHSA)参数和计算量的二次方限制,基于 Transformer 的模型往往具有大量资源消耗,因此也难以在轻量化环境下部署。

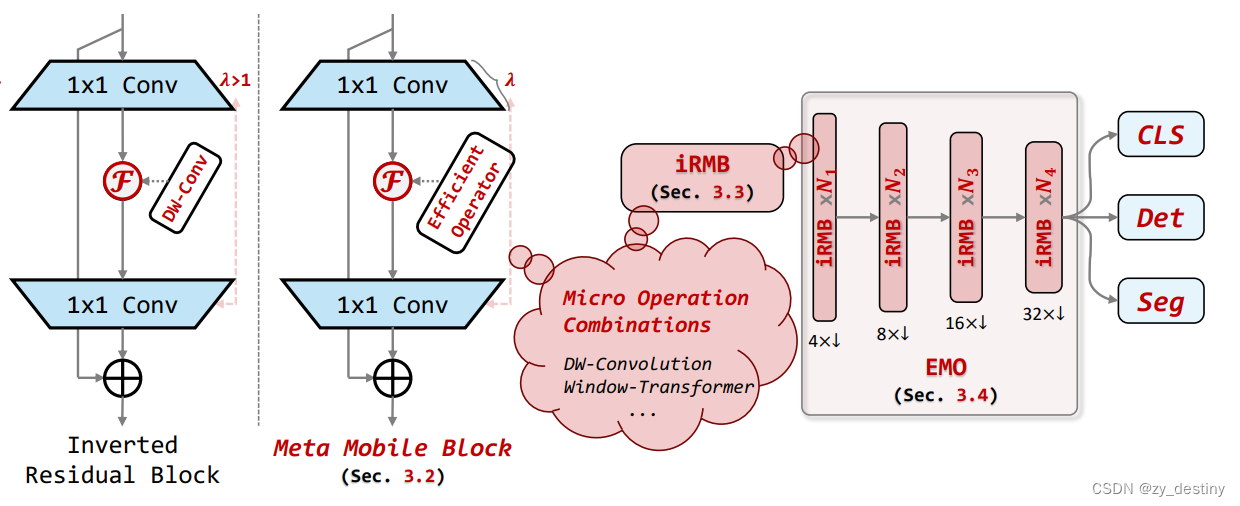

受此启发,作者重新考虑了 MobileNetv2 中的 Inverted Residual Block 和 Transformer 中的 MHSA/FFN 模块,归纳抽象出一个通用的 Meta Mobile Block,它采用参数扩展比 λ 和高效算子 F 来实例化不同的模块,即 IRB、MHSA 和前馈网络 (FFN)。

官网给出的iRMB模块的python类实现python代码如下:

class iRMB(nn.Module):

def __init__(self, dim_in, dim_out, norm_in=True, has_skip=True, exp_ratio=1.0, norm_layer='bn_2d',

act_layer='relu', v_proj=True, dw_ks=3, stride=1, dilation=1, se_ratio=0.0, dim_head=64, window_size=7,

attn_s=True, qkv_bias=False, attn_drop=0., drop=0., drop_path=0., v_group=False, attn_pre=False):

super().__init__()

self.norm = get_norm(norm_layer)(dim_in) if norm_in else nn.Identity()

dim_mid = int(dim_in * exp_ratio)

self.has_skip = (dim_in == dim_out and stride == 1) and has_skip

self.attn_s = attn_s

if self.attn_s:

assert dim_in % dim_head == 0, 'dim should be divisible by num_heads'

self.dim_head = dim_head

self.window_size = window_size

self.num_head = dim_in // dim_head

self.scale = self.dim_head ** -0.5

self.attn_pre = attn_pre

self.qk = ConvNormAct(dim_in, int(dim_in * 2), kernel_size=1, bias=qkv_bias, norm_layer='none', act_layer='none')

self.v = ConvNormAct(dim_in, dim_mid, kernel_size=1, groups=self.num_head if v_group else 1, bias=qkv_bias, norm_layer='none', act_layer=act_layer, inplace=inplace)

self.attn_drop = nn.Dropout(attn_drop)

else:

if v_proj:

self.v = ConvNormAct(dim_in, dim_mid, kernel_size=1, bias=qkv_bias, norm_layer='none', act_layer=act_layer, inplace=inplace)

else:

self.v = nn.Identity()

self.conv_local = ConvNormAct(dim_mid, dim_mid, kernel_size=dw_ks, stride=stride, dilation=dilation, groups=dim_mid, norm_layer='bn_2d', act_layer='silu', inplace=inplace)

self.se = SE(dim_mid, rd_ratio=se_ratio, act_layer=get_act(act_layer)) if se_ratio > 0.0 else nn.Identity()

self.proj_drop = nn.Dropout(drop)

self.proj = ConvNormAct(dim_mid, dim_out, kernel_size=1, norm_layer='none', act_layer='none', inplace=inplace)

self.drop_path = DropPath(drop_path) if drop_path else nn.Identity()

def forward(self, x):

shortcut = x

x = self.norm(x)

B, C, H, W = x.shape

if self.attn_s:

# padding

if self.window_size <= 0:

window_size_W, window_size_H = W, H

else:

window_size_W, window_size_H = self.window_size, self.window_size

pad_l, pad_t = 0, 0

pad_r = (window_size_W - W % window_size_W) % window_size_W

pad_b = (window_size_H - H % window_size_H) % window_size_H

x = F.pad(x, (pad_l, pad_r, pad_t, pad_b, 0, 0,))

n1, n2 = (H + pad_b) // window_size_H, (W + pad_r) // window_size_W

x = rearrange(x, 'b c (h1 n1) (w1 n2) -> (b n1 n2) c h1 w1', n1=n1, n2=n2).contiguous()

# attention

b, c, h, w = x.shape

qk = self.qk(x)

qk = rearrange(qk, 'b (qk heads dim_head) h w -> qk b heads (h w) dim_head', qk=2, heads=self.num_head, dim_head=self.dim_head).contiguous()

q, k = qk[0], qk[1]

attn_spa = (q @ k.transpose(-2, -1)) * self.scale

attn_spa = attn_spa.softmax(dim=-1)

attn_spa = self.attn_drop(attn_spa)

if self.attn_pre:

x = rearrange(x, 'b (heads dim_head) h w -> b heads (h w) dim_head', heads=self.num_head).contiguous()

x_spa = attn_spa @ x

x_spa = rearrange(x_spa, 'b heads (h w) dim_head -> b (heads dim_head) h w', heads=self.num_head, h=h, w=w).contiguous()

x_spa = self.v(x_spa)

else:

v = self.v(x)

v = rearrange(v, 'b (heads dim_head) h w -> b heads (h w) dim_head', heads=self.num_head).contiguous()

x_spa = attn_spa @ v

x_spa = rearrange(x_spa, 'b heads (h w) dim_head -> b (heads dim_head) h w', heads=self.num_head, h=h, w=w).contiguous()

# unpadding

x = rearrange(x_spa, '(b n1 n2) c h1 w1 -> b c (h1 n1) (w1 n2)', n1=n1, n2=n2).contiguous()

if pad_r > 0 or pad_b > 0:

x = x[:, :, :H, :W].contiguous()

else:

x = self.v(x)

x = x + self.se(self.conv_local(x)) if self.has_skip else self.se(self.conv_local(x))

x = self.proj_drop(x)

x = self.proj(x)

x = (shortcut + self.drop_path(x)) if self.has_skip else x

return x

3.如何使用iRMB模块

论文中将多个iRMB堆叠起来,形成一个stage,EMO网络将四个stage串联起来,便形成了基本的骨干特征提取网络,只需按需连接下游任务(分类、分割、目标检测)即可实现完整网络结构构建。

官网实现EMO网络代码:github

反向残差移动块 (iRMB),它吸收了 CNN 架构的效率来建模局部特征和 Transformer 架构动态建模的能力来学习长距离交互。

具体实现中,iRMB 中的 F 被建模为级联的 MHSA 和卷积运算,公式可以抽象为F(⋅)=Conv(MHSA(⋅))。这里需要考虑的问题主要有两个:

λ通常大于中间维度将是输入维度的倍数,导致参数和计算的二次增加。因此作者很自然的考虑结合 W-MHSA 和 DW-Conv 并结合残差机制设计了一种新的模块。此外,通过这种级联方式可以提高感受野的扩展率,同时可以有效降低模型计算复杂度。

总结:后续在轻量化网络设计,即可考虑在网络中设计iRBlock 或iRMBlock模块的实现。

整理不易,欢迎一键三连!!!

送你们一条美丽的--分割线--

🌷🌷🍀🍀🌾🌾🍓🍓🍂🍂🙋🙋🐸🐸🙋🙋💖💖🍌🍌🔔🔔🍉🍉🍭🍭🍋🍋🍇🍇🏆🏆📸📸⛵⛵⭐⭐🍎🍎👍👍🌷🌷

3486

3486

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?