
Paper: ShuffleNet V2: Practical Guidelines for Efficient CNN Architecture Design

Paper link: https://arxiv.org/abs/1807.11164

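ShuffleNet V2 is an upgraded version of ShuffleNet (for background on ShuffleNet, see the blog post "In-Depth Analysis of the ShuffleNet Network"). Through theoretical analysis and experiments, the paper derives four guidelines for network architecture design that make networks run faster.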

Design Principles

Network speed depends not only on FLOPs (floating-point operations): memory access also takes time. The paper therefore uses memory access cost (MAC) to guide network design.

1. Equal channel widths minimize memory access cost (MAC)

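Suppose a 1×1 convolution layer has $c_1$ input channels, $c_2$ output channels, and an output feature map of height $h$ and width $w$. Its FLOPs are

$$B = hwc_1c_2$$

Written out in full this is $B = 1 \times 1 \times c_1 \times c_2 \times h \times w$; the $1 \times 1$ kernel factor is omitted.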

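Next, consider memory. Because the convolution is 1×1 (with stride 1), the input and output feature maps have the same spatial size, again denoted $h$ and $w$. The input feature map needs $hwc_1$ units of storage, the output feature map $hwc_2$, and the convolution kernels $c_1c_2$. Assuming the cache is large enough to hold the feature maps and weights, the memory access cost is

$$\mathrm{MAC} = hw(c_1 + c_2) + c_1c_2$$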

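By the AM-GM inequality, $c_1 + c_2 \ge 2\sqrt{c_1c_2}$, which gives Equation (1):

$$\mathrm{MAC} \ge 2\sqrt{hwB} + \frac{B}{hw} \tag{1}$$

Here is the interesting part: substituting the expressions for MAC and $B$ into Equation (1) reduces it to $(c_1 - c_2)^2 \ge 0$, so equality holds exactly when $c_1 = c_2$. In other words, for a given FLOPs budget, MAC reaches its lower bound when the number of input channels equals the number of output channels.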
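To make guideline 1 concrete, the sketch below plugs numbers into the FLOPs and MAC formulas above. The feature-map size h = w = 56 and the three channel pairs are arbitrary illustrative choices, picked so that $c_1c_2$ (and therefore $B$) is identical in every case; the helper names are hypothetical, not from the paper.

```python
import math


def conv1x1_flops(h, w, c1, c2):
    """FLOPs of a 1x1 convolution: B = h * w * c1 * c2."""
    return h * w * c1 * c2


def conv1x1_mac(h, w, c1, c2):
    """MAC = input map (h*w*c1) + output map (h*w*c2) + weights (c1*c2)."""
    return h * w * (c1 + c2) + c1 * c2


def mac_lower_bound(h, w, flops):
    """Right-hand side of Equation (1): 2 * sqrt(h*w*B) + B / (h*w)."""
    return 2 * math.sqrt(h * w * flops) + flops / (h * w)


h = w = 56
# Three channel configurations with the same product c1 * c2, hence the same FLOPs.
for c1, c2 in [(128, 128), (64, 256), (32, 512)]:
    B = conv1x1_flops(h, w, c1, c2)
    print(c1, c2, B, conv1x1_mac(h, w, c1, c2), mac_lower_bound(h, w, B))
```

All three settings cost B = 51,380,224 FLOPs, but MAC rises from 819,200 when c1 = c2 (exactly the lower bound from Equation (1)) to 1,019,904 and then 1,722,368 as the channel counts become more unbalanced.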

2. Excessive use of group convolution increases MAC

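For a 1×1 convolution with $g$ groups, the FLOPs are

$$B = \frac{hwc_1c_2}{g}$$

where $g$ is the number of groups. The extra divisor $g$ appears because each kernel convolves with only $c_1/g$ input channels.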

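The MAC is

$$\mathrm{MAC} = hw(c_1 + c_2) + \frac{c_1c_2}{g}$$

Unlike before, the kernel storage term also gains the divisor $g$, for the same reason as $B$.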

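Using $hwc_2 = Bg/c_1$ and $c_1c_2/g = B/(hw)$, this can be rewritten as Equation (2):

$$\mathrm{MAC} = hwc_1 + \frac{Bg}{c_1} + \frac{B}{hw} \tag{2}$$

Equation (2) shows that for a fixed input shape $h \times w \times c_1$ and a fixed $B$, MAC grows as $g$ increases.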
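A small numeric check of Equation (2), as a sketch under assumed sizes: the input shape is fixed at h = w = 56, c1 = 128, the FLOPs budget B is the one of an ungrouped 1×1 convolution with c2 = 128, and for each g the output width c2 is enlarged so that B stays constant. The helper names are hypothetical.

```python
def group_conv1x1_flops(h, w, c1, c2, g):
    """FLOPs of a grouped 1x1 convolution: h*w*c1*c2 / g (g is assumed to divide c1*c2)."""
    return h * w * c1 * c2 // g


def group_conv1x1_mac(h, w, c1, c2, g):
    """MAC of a grouped 1x1 convolution: h*w*(c1 + c2) + c1*c2 / g."""
    return h * w * (c1 + c2) + c1 * c2 // g


h, w, c1 = 56, 56, 128
B = h * w * c1 * 128  # fixed FLOPs budget: the g = 1, c2 = 128 case

for g in (1, 2, 4, 8):
    c2 = B * g // (h * w * c1)  # widen the output so that the FLOPs stay equal to B
    assert group_conv1x1_flops(h, w, c1, c2, g) == B
    mac = group_conv1x1_mac(h, w, c1, c2, g)
    eq2 = h * w * c1 + B * g // c1 + B // (h * w)  # Equation (2)
    print(g, c2, mac, eq2)
```

The direct MAC formula and Equation (2) agree, and MAC grows from 819,200 at g = 1 to 1,220,608, 2,023,424 and 3,629,056 at g = 2, 4 and 8, even though the FLOPs never change.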

3. Network fragmentation reduces the degree of parallelism

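The number of branches (fragmented operators) in a model affects its speed: the fewer the branches, the faster the model. Fragmented structures are unfriendly to devices with strong parallel computing power such as GPUs, and they add overhead such as kernel launches and synchronization.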
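One rough way to see guideline 3 on your own machine is to compare a single 1×1 convolution with four parallel 1×1 convolutions that each produce a quarter of the output channels. Both blocks have exactly the same parameters and FLOPs; only the number of fragments differs. The block design, c = 128 and the 56×56 input are illustrative assumptions, and the class names are hypothetical. Timings depend heavily on hardware and backend; the paper reports the slowdown as much larger on GPU than on ARM, and this CPU sketch may show only a small gap.

```python
import time

import torch
import torch.nn as nn


class OneFragment(nn.Module):
    """A single 1x1 convolution: c -> c channels."""

    def __init__(self, c):
        super().__init__()
        self.conv = nn.Conv2d(c, c, 1, bias=False)

    def forward(self, x):
        return self.conv(x)


class FourParallelFragments(nn.Module):
    """Four parallel 1x1 convolutions (c -> c/4 each), concatenated back to c channels."""

    def __init__(self, c):
        super().__init__()
        self.branches = nn.ModuleList([nn.Conv2d(c, c // 4, 1, bias=False) for _ in range(4)])

    def forward(self, x):
        return torch.cat([branch(x) for branch in self.branches], dim=1)


def num_params(module):
    return sum(p.numel() for p in module.parameters())


@torch.no_grad()
def time_forward(module, x, iters=50):
    """Average wall-clock time of one forward pass on CPU, after a short warm-up."""
    module.eval()
    for _ in range(5):
        module(x)
    start = time.perf_counter()
    for _ in range(iters):
        module(x)
    return (time.perf_counter() - start) / iters


c = 128
x = torch.randn(1, c, 56, 56)
single, fragmented = OneFragment(c), FourParallelFragments(c)
print(num_params(single), num_params(fragmented))  # 16384 16384
print(f"1 fragment:  {time_forward(single, x) * 1e3:.3f} ms")
print(f"4 fragments: {time_forward(fragmented, x) * 1e3:.3f} ms")
```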

4. Element-wise operations affect model speed

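Element-wise operations (such as ReLU, tensor addition and bias addition) take far more time than their FLOPs suggest, because they have a high MAC relative to their FLOPs. The paper also counts depthwise convolution in this category for the same reason. Element-wise operations should therefore be used as sparingly as possible.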
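The MAC/FLOPs imbalance can be illustrated with the same counting conventions as guideline 1. The sketch below compares an element-wise add of two h×w×c tensors with a 1×1 convolution from c to c channels. It assumes one operation per output element for the add and counts memory in elements; the sizes (28×28, c = 116) are arbitrary and the helper names are hypothetical.

```python
def elementwise_add_cost(h, w, c):
    """Adding two h x w x c tensors: one op per element; read two inputs, write one output."""
    flops = h * w * c
    mac = 3 * h * w * c
    return flops, mac


def conv1x1_cost(h, w, c1, c2):
    """1x1 convolution, using the FLOPs and MAC formulas from guideline 1."""
    flops = h * w * c1 * c2
    mac = h * w * (c1 + c2) + c1 * c2
    return flops, mac


h = w = 28
c = 116
for name, (flops, mac) in [("add", elementwise_add_cost(h, w, c)), ("1x1 conv", conv1x1_cost(h, w, c, c))]:
    print(f"{name:8s} FLOPs={flops:>10d} MAC={mac:>8d} MAC/FLOPs={mac / flops:.4f}")
```

With these sizes the add performs 1/116 of the convolution's FLOPs (90,944 vs 10,549,504) yet touches more memory (272,832 vs 195,344 elements), which is why such operations cost more time than a FLOPs count suggests.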

Network Architecture

ShuffleNetV2 Unit

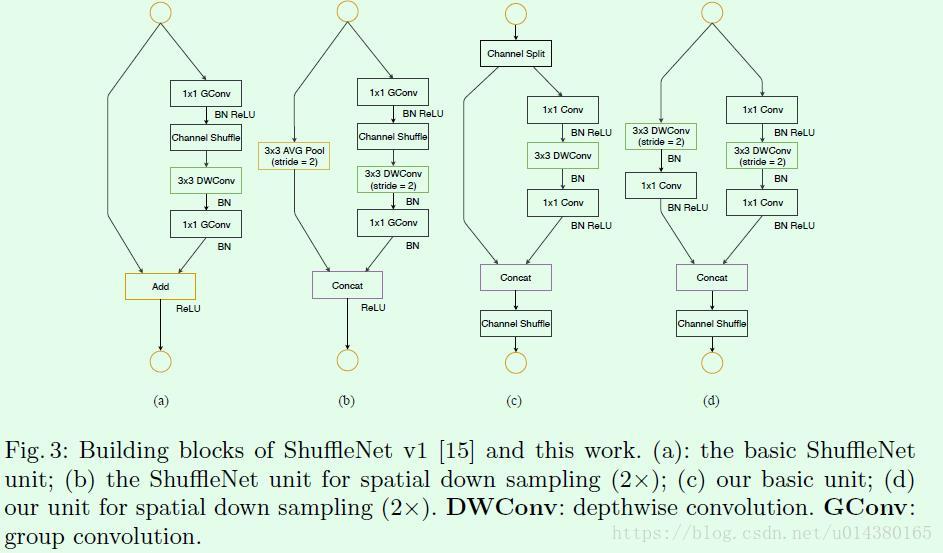

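Figure 3 compares the building blocks of ShuffleNet v1 and ShuffleNet v2: (a) and (b) are the ShuffleNet v1 basic and down-sampling units, and (c) and (d) are their ShuffleNet v2 counterparts.

Comparing (a) with (c): first, (c) adds a channel split at the beginning, which divides the c input channels into two branches with c − c' and c' channels; the paper simply sets c' = c/2. One branch is kept as an identity mapping, which keeps fragmentation low (guideline 3), and the other consists of three convolutions whose input and output channel counts are equal (guideline 1). Second, (c) removes the group operation from the 1×1 convolutions, in line with guideline 2; the channel split itself already acts as a form of grouping. Third, the channel shuffle is moved to after the concat, where it lets information flow between the two branches; because the first 1×1 convolution no longer uses groups, a channel shuffle right after it would serve little purpose. Finally, the element-wise add is replaced by a concat, in line with guideline 4, so ReLU and depthwise convolution appear in only one branch. When several (c) units are stacked, the concat and channel shuffle at the end of one unit and the channel split at the start of the next can be merged into a single element-wise operation.

The comparison between (b) and (d) follows the same logic, except that (d) has no channel split at the beginning: both branches take the full input and each applies a stride-2 3×3 depthwise convolution, so after the concat the spatial size is halved and the number of channels increases (the paper doubles it).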
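Why the shuffle after the concat matters can be seen from channel indices alone. The pure-Python sketch below computes the channel order produced by the view/permute/view shuffle used in the implementation (with groups = 2), then applies the next unit's channel split. The function name `channel_shuffle_order` and the 8-channel example are hypothetical illustrations.

```python
def channel_shuffle_order(num_channels, groups):
    """Channel order produced by the view -> permute -> view channel shuffle.

    Output position k * groups + i holds input channel i * (num_channels // groups) + k.
    """
    per_group = num_channels // groups
    return [i * per_group + k for k in range(per_group) for i in range(groups)]


c = 8
# After the concat, channels 0-3 come from the identity branch and 4-7 from the conv branch.
order = channel_shuffle_order(c, 2)
print(order)  # [0, 4, 1, 5, 2, 6, 3, 7]

# The next unit's channel split takes the first and second halves of the shuffled tensor.
next_left, next_right = order[: c // 2], order[c // 2 :]
print(next_left, next_right)  # [0, 4, 1, 5] [2, 6, 3, 7]
```

Each half of the next split contains two channels from each branch, so the identity path does not carry the same channels untouched through the whole stage.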

Overall Network Architecture
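The overall network stacks a 3×3 convolution, a max-pool, three stages of ShuffleNet v2 units, a 1×1 convolution, global average pooling and a fully connected layer. As a rough summary, the sketch below records the stage widths for the four width multipliers from the paper's overall architecture table (the same values torchvision uses for its ShuffleNet V2 models) and traces the feature-map size for a 224×224 input. The constant and function names are hypothetical.

```python
# Output channels of stage2, stage3, stage4 and conv5 for each width multiplier.
STAGE_OUT_CHANNELS = {
    0.5: (48, 96, 192, 1024),
    1.0: (116, 232, 464, 1024),
    1.5: (176, 352, 704, 1024),
    2.0: (244, 488, 976, 2048),
}
# Units per stage; the first unit of each stage uses stride 2, the rest stride 1.
STAGE_REPEATS = (4, 8, 4)


def conv_out_size(size, kernel, stride, padding):
    """Spatial output size of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


def feature_map_sizes(input_size=224):
    """Trace the spatial size through conv1, maxpool and the three stages."""
    sizes = {}
    size = conv_out_size(input_size, 3, 2, 1)  # conv1: 3x3, stride 2
    sizes["conv1"] = size
    size = conv_out_size(size, 3, 2, 1)  # maxpool: 3x3, stride 2
    sizes["maxpool"] = size
    for name in ("stage2", "stage3", "stage4"):
        size = conv_out_size(size, 3, 2, 1)  # first unit: stride-2 3x3 depthwise conv
        sizes[name] = size
    return sizes


print(feature_map_sizes())
# {'conv1': 112, 'maxpool': 56, 'stage2': 28, 'stage3': 14, 'stage4': 7}
print(STAGE_OUT_CHANNELS[1.0], STAGE_REPEATS)
```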

ShuffleNetV2 Implementation

Unit

```python
import torch
import torch.nn as nn


def split(x, groups):
    # Channel split: cut the channel dimension into `groups` equal chunks.
    out = x.chunk(groups, dim=1)
    return out


def shuffle(x, groups):
    # Channel shuffle: (N, C, H, W) -> (N, groups, C // groups, H, W), swap the two
    # channel axes, and flatten back so that channels from different groups interleave.
    N, C, H, W = x.size()
    out = x.view(N, groups, C // groups, H, W).permute(0, 2, 1, 3, 4).contiguous().view(N, C, H, W)
    return out


class ShuffleUnit(nn.Module):
    def __init__(self, in_channels, out_channels, stride):
        super().__init__()
        # Each branch produces half of the output channels; they are concatenated in forward().
        mid_channels = out_channels // 2
        if stride > 1:
            # Down-sampling unit, Figure 3(d): no channel split, both branches take the full input.
            self.branch1 = nn.Sequential(
                nn.Conv2d(in_channels, in_channels, 3, stride=stride, padding=1, groups=in_channels, bias=False),
                nn.BatchNorm2d(in_channels),
                nn.Conv2d(in_channels, mid_channels, 1, bias=False),
                nn.BatchNorm2d(mid_channels),
                nn.ReLU(inplace=True)
            )
            self.branch2 = nn.Sequential(
                nn.Conv2d(in_channels, mid_channels, 1, bias=False),
                nn.BatchNorm2d(mid_channels),
                nn.ReLU(inplace=True),
                nn.Conv2d(mid_channels, mid_channels, 3, stride=stride, padding=1, groups=mid_channels, bias=False),
                nn.BatchNorm2d(mid_channels),
                nn.Conv2d(mid_channels, mid_channels, 1, bias=False),
                nn.BatchNorm2d(mid_channels),
                nn.ReLU(inplace=True)
            )
        else:
            # Basic unit, Figure 3(c): in_channels must equal out_channels.
            # branch1 is the identity: an empty nn.Sequential returns its input unchanged.
            self.branch1 = nn.Sequential()
            self.branch2 = nn.Sequential(
                nn.Conv2d(mid_channels, mid_channels, 1, bias=False),
                nn.BatchNorm2d(mid_channels),
                nn.ReLU(inplace=True),
                nn.Conv2d(mid_channels, mid_channels, 3, stride=stride, padding=1, groups=mid_channels, bias=False),
                nn.BatchNorm2d(mid_channels),
                nn.Conv2d(mid_channels, mid_channels, 1, bias=False),
                nn.BatchNorm2d(mid_channels),
                nn.ReLU(inplace=True)
            )
        self.stride = stride

    def forward(self, x):
        if self.stride == 1:
            # Split the input in half: one half passes through unchanged, the other is convolved.
            x1, x2 = split(x, 2)
            out = torch.cat((self.branch1(x1), self.branch2(x2)), dim=1)
        else:
            out = torch.cat((self.branch1(x), self.branch2(x)), dim=1)
        # Mix the channels of the two branches before the next unit.
        out = shuffle(out, 2)
        return out
```

ShuffleNetV2

```python
class ShuffleNetV2(nn.Module):
    # channel_num: output channels of stage2, stage3 and stage4, e.g. (116, 232, 464) for the 1x model.
    # class_num: number of output classes (1000 for ImageNet).
    def __init__(self, channel_num, class_num=1000):
        super().__init__()
        self.conv1 = nn.Sequential(
            nn.Conv2d(3, 24, 3, stride=2, padding=1, bias=False),
            nn.BatchNorm2d(24),
            nn.ReLU(inplace=True)
        )
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        self.stage2 = self.make_layers(24, channel_num[0], 4, 2)
        self.stage3 = self.make_layers(channel_num[0], channel_num[1], 8, 2)
        self.stage4 = self.make_layers(channel_num[1], channel_num[2], 4, 2)
        self.conv5 = nn.Sequential(
            nn.Conv2d(channel_num[2], 1024, 1, bias=False),
            nn.BatchNorm2d(1024),
            nn.ReLU(inplace=True)
        )
        self.avgpool = nn.AdaptiveAvgPool2d(1)
        self.fc = nn.Linear(1024, class_num)

    def make_layers(self, in_channels, out_channels, layers_num, stride):
        layers = []
        # The first unit of each stage down-samples and changes the channel count.
        layers.append(ShuffleUnit(in_channels, out_channels, stride))
        in_channels = out_channels
        # The remaining units keep resolution and channel count (stride 1) and must be appended too.
        for _ in range(layers_num - 1):
            layers.append(ShuffleUnit(in_channels, out_channels, 1))
        return nn.Sequential(*layers)

    def forward(self, x):
        out = self.conv1(x)
        out = self.maxpool(out)
        out = self.stage2(out)
        out = self.stage3(out)
        out = self.stage4(out)
        out = self.conv5(out)
        out = self.avgpool(out)
        out = out.flatten(1)
        out = self.fc(out)
        return out
```
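The channel shuffle is the easiest part of the implementation to get subtly wrong, so it can be worth checking in isolation. The sketch below copies the `shuffle` function from the post, gives each channel a constant value equal to its index, and checks that shuffling with `groups = 2` and then again with `C // 2` groups restores the original tensor. The 2×8×3×3 test tensor is an arbitrary assumption.

```python
import torch


def shuffle(x, groups):
    # Channel shuffle copied from the implementation above.
    N, C, H, W = x.size()
    return x.view(N, groups, C // groups, H, W).permute(0, 2, 1, 3, 4).contiguous().view(N, C, H, W)


# Give each of the 8 channels a constant value equal to its index.
x = torch.arange(8, dtype=torch.float32).view(1, 8, 1, 1).repeat(2, 1, 3, 3)
y = shuffle(x, 2)

# Output channel 1 holds input channel 4, i.e. the first channel of the second branch.
print(y[0, 1, 0, 0].item())  # 4.0

# Shuffling again with C // groups = 4 groups restores the original channel order.
print(torch.equal(shuffle(y, 4), x))  # True
```

Because the shuffle is a pure permutation of channels, it adds no parameters or FLOPs; its cost is purely memory traffic, which is one reason the paper merges it with the neighbouring concat and channel split.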
