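The inputs and layers of a convolutional network differ somewhat from those of a traditional neural network and have to be redesigned, but the training module stays basically the same. The fully connected network in the previous section flattened each image's pixels into one long vector. This discards the spatial arrangement and treats every pixel as an independent input feature. In real images, neighbouring pixels are strongly related, and convolution can take these relationships between nearby pixels into account. For this reason, image models mostly use convolutional layers. Fully connected layers are usually kept only for the last few layers, which map the extracted features to class scores (classification) or to output values (regression).

```python
import torch
import torch.nn as nn
import torch.optim as optim
import torch.nn.functional as F
from torchvision import datasets, transforms
import matplotlib.pyplot as plt
import numpy as np
# Jupyter magic: remove this line when running as a plain Python script
%matplotlib inline

# Hyperparameters
input_size = 28    # MNIST images are 28x28 pixels
num_classes = 10   # digits 0-9
num_epochs = 3
batch_size = 64

# Download MNIST (if needed) and convert each image to a 1x28x28 float tensor in [0, 1]
train_dataset = datasets.MNIST(root='./data',
                               train=True,
                               transform=transforms.ToTensor(),
                               download=True)

test_dataset = datasets.MNIST(root='./data',
                              train=False,
                              transform=transforms.ToTensor())

# Split the data into batches; only the training set needs shuffling
train_loader = torch.utils.data.DataLoader(dataset=train_dataset,
                                           batch_size=batch_size,
                                           shuffle=True)

test_loader = torch.utils.data.DataLoader(dataset=test_dataset,
                                          batch_size=batch_size,
                                          shuffle=False)
```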
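To make the contrast between flattening and convolution concrete, here is a minimal sketch (not part of the original tutorial) that compares how many weights a fully connected layer and a 5×5 convolution need to turn one 1×28×28 image into 16 maps of 28×28. It also checks that changing a single pixel only affects convolution outputs in that pixel's 5×5 neighbourhood. The layer sizes and the `num_params` helper are illustrative assumptions.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Two hypothetical ways to map a 1x28x28 image to 16 feature maps of 28x28
fc = nn.Linear(28 * 28, 16 * 28 * 28)              # every output sees every pixel
conv = nn.Conv2d(1, 16, kernel_size=5, padding=2)  # every output sees a 5x5 neighbourhood

def num_params(module):
    """Total number of trainable values (weights + biases) in a module."""
    return sum(p.numel() for p in module.parameters())

fc_params = num_params(fc)
conv_params = num_params(conv)
print(f"fully connected: {fc_params:,} parameters")  # 9,847,040
print(f"5x5 convolution: {conv_params:,} parameters")  # 416

# Locality check: set one pixel at (10, 10) and see which conv outputs change
x = torch.zeros(1, 1, 28, 28)
x_changed = x.clone()
x_changed[0, 0, 10, 10] = 1.0
with torch.no_grad():
    diff = (conv(x_changed) - conv(x)).abs().sum(dim=1)[0]  # (28, 28) map of changes
rows, cols = torch.nonzero(diff > 1e-6, as_tuple=True)
print("affected rows:", rows.min().item(), "to", rows.max().item())  # 8 to 12
print("affected cols:", cols.min().item(), "to", cols.max().item())  # 8 to 12
```

The convolution reuses the same 5×5 kernels at every position, which is why it needs orders of magnitude fewer parameters while still looking at neighbouring pixels together.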

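A convolutional layer, a ReLU layer and a pooling layer are usually grouped together into one block. Note that the output of the convolutions is still a feature map. It has to be flattened into a vector before it can be passed to the final classification or regression layer.

- Images are usually stored channels-first, e.g. 1×28×28 for MNIST, though some frameworks use channels-last, 28×28×1. PyTorch's `nn.Conv2d` expects channels-first input.
- `in_channels`: the number of channels of the input image (or input feature map).
- `out_channels`: the number of feature maps the layer outputs.
- `padding`: adds a border (zeros by default) around the input so that the pixels at the edges are also taken into account and the convolution does not shrink the feature map.
- Output size after a convolution: `H_out = floor((H_in - kernel + 2 * padding) / stride) + 1`.
- Common padding settings with stride 1: kernel 5 → padding 2; kernel 3 → padding 1. Both keep the output the same size as the input.
- Max pooling compresses the feature map; with a 2×2 window (stride 2) it halves the height and width.
- `view` works like `reshape`: here it flattens each sample's 64×7×7 feature map into a vector of 64 × 7 × 7 = 3136 values.

```python
class CNN(nn.Module):
    def __init__(self):
        super(CNN, self).__init__()
        # Block 1: (1, 28, 28) -> conv (16, 28, 28) -> pool (16, 14, 14)
        self.conv1 = nn.Sequential(
            nn.Conv2d(
                in_channels=1,     # grayscale input
                out_channels=16,   # 16 output feature maps
                kernel_size=5,
                stride=1,
                padding=2,         # (28 - 5 + 2*2) / 1 + 1 = 28, size preserved
            ),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2),  # 28 -> 14
        )
        # Block 2: (16, 14, 14) -> (32, 14, 14) -> (32, 14, 14) -> pool (32, 7, 7)
        self.conv2 = nn.Sequential(
            nn.Conv2d(16, 32, 5, 1, 2),
            nn.ReLU(),
            nn.Conv2d(32, 32, 5, 1, 2),
            nn.ReLU(),
            nn.MaxPool2d(2),
        )
        # Block 3: (32, 7, 7) -> (64, 7, 7), no pooling
        self.conv3 = nn.Sequential(
            nn.Conv2d(32, 64, 5, 1, 2),
            nn.ReLU(),
        )
        # Fully connected output layer: 64*7*7 flattened features -> 10 class scores
        self.out = nn.Linear(64 * 7 * 7, num_classes)

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        x = x.view(x.size(0), -1)  # flatten to (batch_size, 64*7*7)
        output = self.out(x)
        return output
```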
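The size formula and the shape bookkeeping above can be checked with a short sketch. `conv_out_size` is a hypothetical helper implementing the formula, and the `features` stack simply re-creates the three convolution blocks of `CNN` in one `nn.Sequential` so the snippet runs on its own. A random tensor stands in for an MNIST batch.

```python
import torch
import torch.nn as nn

def conv_out_size(h, kernel, padding=0, stride=1):
    """floor((h - kernel + 2 * padding) / stride) + 1, for convolution or pooling."""
    return (h - kernel + 2 * padding) // stride + 1

print(conv_out_size(28, kernel=5, padding=2))  # 28: kernel 5 + padding 2 keeps the size
print(conv_out_size(28, kernel=3, padding=1))  # 28: kernel 3 + padding 1 keeps the size
print(conv_out_size(28, kernel=5))             # 24: without padding the map shrinks
print(conv_out_size(28, kernel=2, stride=2))   # 14: 2x2 max pooling halves the size

# The same layers as CNN.conv1, CNN.conv2 and CNN.conv3, in one stack
features = nn.Sequential(
    nn.Conv2d(1, 16, 5, 1, 2), nn.ReLU(), nn.MaxPool2d(2),
    nn.Conv2d(16, 32, 5, 1, 2), nn.ReLU(),
    nn.Conv2d(32, 32, 5, 1, 2), nn.ReLU(), nn.MaxPool2d(2),
    nn.Conv2d(32, 64, 5, 1, 2), nn.ReLU(),
)

x = torch.randn(64, 1, 28, 28)  # fake batch, channels-first: (N, C, H, W)
shapes = []
with torch.no_grad():
    for layer in features:
        x = layer(x)
        if not isinstance(layer, nn.ReLU):  # ReLU never changes the shape
            shapes.append(tuple(x.shape[1:]))
print(shapes)
# [(16, 28, 28), (16, 14, 14), (32, 14, 14), (32, 14, 14), (32, 7, 7), (64, 7, 7)]

flat = x.view(x.size(0), -1)  # the same flattening CNN.forward does before self.out
print(flat.shape)             # torch.Size([64, 3136])

# A channels-last image (H, W, C) can be converted to channels-first with permute
img_last = torch.randn(28, 28, 1)
img_first = img_last.permute(2, 0, 1)
print(img_first.shape)        # torch.Size([1, 28, 28])
```

The final 64×7×7 shape is exactly why `self.out` is declared as `nn.Linear(64 * 7 * 7, ...)`: if a padding or pooling setting changes, the input size of the linear layer has to change with it.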

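```python
def accuracy(predictions, labels):
    """Return (number of correct predictions, batch size) for a batch of class scores."""
    pred = torch.max(predictions, 1)[1]           # index of the highest score = predicted class
    rights = pred.eq(labels.view_as(pred)).sum()  # count matches with the true labels
    return rights, len(labels)
```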
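As a quick illustration of what `accuracy` returns and how the training loop adds the results up across batches, the sketch below repeats the helper (so it runs on its own) and applies it to a few hand-written logits. The numbers are made up for the example.

```python
import torch

def accuracy(predictions, labels):
    # Same logic as the helper above
    pred = torch.max(predictions, 1)[1]
    rights = pred.eq(labels.view_as(pred)).sum()
    return rights, len(labels)

logits = torch.tensor([[2.0, 0.1, 0.3],   # predicts class 0
                       [0.2, 1.5, 0.1],   # predicts class 1
                       [0.9, 0.2, 0.4]])  # predicts class 0
labels = torch.tensor([0, 2, 0])

rights, total = accuracy(logits, labels)
print(rights.item(), total)  # 2 3

# Aggregating over several batches, as the training loop does with its lists of tuples
batches = [accuracy(logits, labels), accuracy(logits[:2], labels[:2])]
correct = sum(r for r, _ in batches)  # a 0-dim tensor
seen = sum(n for _, n in batches)
print(f"{100.0 * float(correct) / seen:.2f}%")  # 60.00%
```

Note that `rights` is a 0-dim tensor rather than a Python int, so it has to be converted (with `.item()`, `float()` or `.numpy()`) before formatting.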

```python
net = CNN()
criterion = nn.CrossEntropyLoss()  # takes raw class scores (logits) and integer labels
optimizer = optim.Adam(net.parameters(), lr=0.001)
```

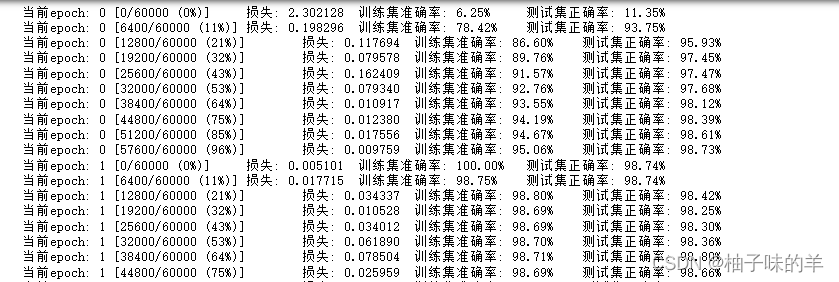

```python
for epoch in range(num_epochs):
    # (correct, total) pairs for every training batch of this epoch
    train_rights = []

    for batch_idx, (data, target) in enumerate(train_loader):
        net.train()  # training mode
        output = net(data)
        loss = criterion(output, target)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        right = accuracy(output, target)
        train_rights.append(right)

        # Every 100 batches, evaluate on the whole test set
        if batch_idx % 100 == 0:
            net.eval()  # evaluation mode
            val_rights = []
            with torch.no_grad():  # gradients are not needed for evaluation
                for (val_data, val_target) in test_loader:
                    val_output = net(val_data)
                    val_rights.append(accuracy(val_output, val_target))

            # Training accuracy is accumulated over all batches seen so far in this epoch
            train_r = (sum(tup[0] for tup in train_rights), sum(tup[1] for tup in train_rights))
            val_r = (sum(tup[0] for tup in val_rights), sum(tup[1] for tup in val_rights))

            print('Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}\tTrain accuracy: {:.2f}%\tTest accuracy: {:.2f}%'.format(
                epoch, batch_idx * batch_size, len(train_loader.dataset),
                100. * batch_idx / len(train_loader),
                loss.item(),
                100. * float(train_r[0]) / train_r[1],
                100. * float(val_r[0]) / val_r[1]))
```
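The same train/evaluate pattern can be tried without downloading MNIST. The sketch below is a minimal version under stated assumptions: random tensors stand in for the images and labels, a deliberately small model replaces `CNN`, and the evaluation pass is factored into a hypothetical `evaluate` helper that switches to `eval()` mode, disables gradient tracking and switches back to `train()` mode afterwards.

```python
import torch
import torch.nn as nn
import torch.optim as optim
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)

# Hypothetical stand-in data: 256 random 1x28x28 "images" with labels 0-9
images = torch.randn(256, 1, 28, 28)
labels = torch.randint(0, 10, (256,))
loader = DataLoader(TensorDataset(images, labels), batch_size=64, shuffle=True)

# Small hypothetical model; the tutorial's CNN would be used the same way
model = nn.Sequential(
    nn.Conv2d(1, 8, 3, 1, 1), nn.ReLU(), nn.MaxPool2d(2),  # -> (8, 14, 14)
    nn.Flatten(), nn.Linear(8 * 14 * 14, 10),
)
criterion = nn.CrossEntropyLoss()
optimizer = optim.Adam(model.parameters(), lr=0.001)

def evaluate(model, loader):
    """Return (correct, total) over a loader without tracking gradients."""
    model.eval()
    correct, total = 0, 0
    with torch.no_grad():
        for data, target in loader:
            pred = model(data).argmax(dim=1)
            correct += (pred == target).sum().item()
            total += target.size(0)
    model.train()  # restore training mode for the next batches
    return correct, total

losses = []
for epoch in range(2):
    for data, target in loader:
        output = model(data)
        loss = criterion(output, target)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        losses.append(loss.item())
    correct, total = evaluate(model, loader)
    print(f"epoch {epoch}: last loss {losses[-1]:.4f}, accuracy {100.0 * correct / total:.2f}%")
```

Since the labels are random, the printed accuracy means nothing here; the point is the structure of the loop and the `torch.no_grad()` evaluation.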
